fastapi-advanced-rate-limiter

High-performance, multi-algorithm rate limiting for FastAPI with Redis and in-memory backends

High-performance, production-ready rate limiting for FastAPI applications.

FastAPI Advanced Rate Limiter is a battle-tested library providing 6 different rate limiting algorithms with support for both in-memory and Redis backends. Perfect for APIs, microservices, and any FastAPI application that needs protection from abuse, overload, or DDoS attacks.

Helpers: get_status(), get_wait_time(), get_retry_after()

Rate limiting is essential for protecting an API from abuse, overload, and DDoS attacks. Without it, a single user can consume capacity that every other user depends on.

30 seconds to your first rate limiter:

from fastapi import FastAPI, Request
from fastapi.responses import JSONResponse
from fastapi_advanced_rate_limiter import SlidingWindowRateLimiter
import redis

app = FastAPI()

# Initialize rate limiter
redis_client = redis.Redis.from_url("redis://localhost:6379", decode_responses=True)
limiter = SlidingWindowRateLimiter(
    capacity=100,      # 100 requests
    fill_rate=10,      # 10 requests/second, so the window is capacity / fill_rate = 10 seconds
    scope="user",      # per-user limits
    backend="redis",   # distributed
    redis_client=redis_client
)

@app.middleware("http")
async def rate_limit_middleware(request: Request, call_next):
    # Get user identifier (from auth, IP, etc.)
    user_id = request.headers.get("X-User-ID") or request.client.host

    # Check rate limit
    if not limiter.allow_request(user_id):
        wait_time = limiter.get_wait_time(user_id)
        # Return the response directly: an HTTPException raised inside an
        # HTTP middleware is not converted by FastAPI's exception handlers.
        return JSONResponse(
            status_code=429,
            content={"detail": f"Rate limit exceeded. Retry after {wait_time:.0f} seconds."},
            headers={"Retry-After": str(int(wait_time))}
        )

    return await call_next(request)

@app.get("/")
async def root():
    return {"message": "Hello World!"}

Run it:

uvicorn main:app --reload

Test it:

# Make requests

curl http://localhost:8000/

# After 100 requests in 10 seconds, you'll get:

# {"detail":"Rate limit exceeded. Retry after 5 seconds."}

Install from PyPI:

pip install fastapi-advanced-rate-limiter

With the Redis client, for the Redis backend:

pip install fastapi-advanced-rate-limiter redis

If you want to contribute or modify the package:

git clone https://github.com/awais7012/FastAPI-RateLimiter.git

cd FastAPI-RateLimiter

pip install -e .

For contributors who want to run tests and development tools:

pip install -e ".[dev]"

Choose the right algorithm for your use case:

How it works: Imagine a bucket that slowly fills with tokens. Each request takes a token. If the bucket is empty, requests are denied.

from fastapi_advanced_rate_limiter import TokenBucketLimiter

limiter = TokenBucketLimiter(
    capacity=10,     # Bucket holds 10 tokens (burst size)
    fill_rate=1.0,   # Refill 1 token per second
    scope="user",
    backend="memory"
)

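Behavior: bursts of up to capacity requests are allowed; after that, requests are admitted as tokens refill at fill_rate per second.

Pros: ✅ Flexible, allows bursts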

Cons: ⚠️ Can be "drained" by burst traffic

Use when: User-triggered actions (file uploads, form submissions)
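The bucket analogy above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the library's implementation: the class name `TokenBucketSketch` and the injectable `clock` parameter are hypothetical and exist only to make the behavior easy to follow and test.

```python
import time


class TokenBucketSketch:
    """Illustrative token bucket; not the library's implementation."""

    def __init__(self, capacity, fill_rate, clock=time.monotonic):
        self.capacity = float(capacity)
        self.fill_rate = float(fill_rate)  # tokens added per second
        self.clock = clock
        self.tokens = float(capacity)      # start with a full bucket
        self.updated = clock()

    def allow(self):
        now = self.clock()
        # Refill for the elapsed time, never exceeding capacity.
        elapsed = now - self.updated
        self.tokens = min(self.capacity, self.tokens + elapsed * self.fill_rate)
        self.updated = now
        # Each allowed request consumes one token.
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False
```

A full bucket absorbs a burst of `capacity` requests at once; afterwards the sustained rate settles at `fill_rate` requests per second.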

How it works: Requests fill a bucket with a hole at the bottom. The bucket leaks at a constant rate. Overflow = rejected.

from fastapi_advanced_rate_limiter import LeakyBucketLimiter

limiter = LeakyBucketLimiter(
    capacity=5,      # Bucket capacity
    fill_rate=1.0,   # Leak rate (1 req/sec)
    scope="user",
    backend="memory"
)

Behavior:

Pros: ✅ Smooth, predictable rate

Cons: ❌ Strict (can frustrate users with spiky traffic)

Use when: Calling rate-limited external APIs, message queues
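As a rough illustration of the leaking bucket described above, here is a sketch of the "leaky bucket as a meter" variant. It is an assumption-laden example rather than the library's code: `LeakyBucketSketch`, `leak_rate`, and the injectable `clock` are hypothetical names chosen for the example.

```python
import time


class LeakyBucketSketch:
    """Illustrative leaky bucket (meter variant); not the library's code."""

    def __init__(self, capacity, leak_rate, clock=time.monotonic):
        self.capacity = float(capacity)
        self.leak_rate = float(leak_rate)  # requests drained per second
        self.clock = clock
        self.level = 0.0                   # current fill level of the bucket
        self.updated = clock()

    def allow(self):
        now = self.clock()
        # Drain the bucket for the elapsed time, never below empty.
        drained = (now - self.updated) * self.leak_rate
        self.level = max(0.0, self.level - drained)
        self.updated = now
        # A request adds one unit; reject it if the bucket would overflow.
        if self.level + 1.0 <= self.capacity:
            self.level += 1.0
            return True
        return False
```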

How it works: Count requests in fixed time windows (e.g., per minute). Reset count at window boundaries.

from fastapi_advanced_rate_limiter import FixedWindowRateLimiter

limiter = FixedWindowRateLimiter(
    capacity=1000,   # 1000 requests
    fill_rate=100,   # 100 req/sec, so the window is capacity / fill_rate = 10 seconds
    scope="global",
    backend="redis"
)

Behavior:

Pros: ✅ Fastest, O(1), minimal memory

Cons: ⚠️ Boundary burst vulnerability

Use when: High-throughput internal APIs, coarse-grained limits
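The boundary burst mentioned above is easiest to see with a small standalone counter. The sketch below is illustrative only: `FixedWindowSketch` and its `clock` parameter are hypothetical and are not part of the library.

```python
import time


class FixedWindowSketch:
    """Illustrative fixed-window counter; not the library's code."""

    def __init__(self, limit, window_seconds, clock=time.time):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        self.window_id = None  # index of the window currently being counted
        self.count = 0

    def allow(self):
        current = int(self.clock() // self.window)
        # Reset the counter whenever a new window starts.
        if current != self.window_id:
            self.window_id = current
            self.count = 0
        if self.count < self.limit:
            self.count += 1
            return True
        return False
```

With `limit=2` and a 10-second window, two requests at t=9.9 and two more at t=10.0 are all accepted, so four requests pass within 0.1 seconds even though the nominal limit is two per window.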

How it works: Combines current and previous window counts with a weighted average to smooth transitions.

from fastapi_advanced_rate_limiter import SlidingWindowRateLimiter

limiter = SlidingWindowRateLimiter(
    capacity=100,
    fill_rate=10,
    scope="user",
    backend="redis"  # Redis version performs best!
)

Behavior:

Pros: ✅ Best balance of accuracy and performance

Cons: ⚠️ Slight approximation (but negligible in practice)

Use when: Production APIs, user-facing services (RECOMMENDED for most cases)
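The weighted average described above can be sketched as follows. This is one common way to write a sliding window counter and is offered as an assumption about the general technique, not as the library's implementation; `SlidingWindowCounterSketch` and `clock` are hypothetical names.

```python
import time


class SlidingWindowCounterSketch:
    """Illustrative weighted two-window counter; not the library's code."""

    def __init__(self, limit, window_seconds, clock=time.time):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        self.window_id = int(clock() // window_seconds)
        self.count = 0     # requests in the current window
        self.previous = 0  # requests in the window just before it

    def allow(self):
        now = self.clock()
        current = int(now // self.window)
        if current != self.window_id:
            # Carry the old count over only if it belongs to the adjacent window.
            self.previous = self.count if current == self.window_id + 1 else 0
            self.count = 0
            self.window_id = current
        # Weight the previous window by how much of it still overlaps
        # the sliding window that ends now.
        overlap = 1.0 - (now % self.window) / self.window
        estimate = self.previous * overlap + self.count
        if estimate + 1 <= self.limit:
            self.count += 1
            return True
        return False
```

Unlike the fixed window, a full previous window still counts in full right after the boundary, so the boundary burst is blocked; the weight then decays linearly as the window advances.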

How it works: Logs exact timestamp of each request. Prunes old timestamps outside the window.

from fastapi_advanced_rate_limiter import SlidingWindowLogRateLimiter

limiter = SlidingWindowLogRateLimiter(
    capacity=10,
    fill_rate=10/300,  # 10 per 5 minutes
    scope="ip",
    backend="redis"
)

Behavior:

Pros: ✅ Perfect accuracy, no boundary issues

Cons: ❌ Higher memory usage, not suitable for huge scale

Use when: Security-critical endpoints (login, payment), billing APIs
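A minimal sketch of the timestamp log described above, assuming a single process and an in-memory deque. `SlidingWindowLogSketch` and `clock` are hypothetical names used for illustration; the library's own storage and pruning may differ.

```python
import time
from collections import deque


class SlidingWindowLogSketch:
    """Illustrative sliding window log; not the library's code."""

    def __init__(self, limit, window_seconds, clock=time.time):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        self.timestamps = deque()  # one entry per accepted request, oldest first

    def allow(self):
        now = self.clock()
        cutoff = now - self.window
        # Prune timestamps that have fallen out of the window.
        while self.timestamps and self.timestamps[0] <= cutoff:
            self.timestamps.popleft()
        if len(self.timestamps) < self.limit:
            self.timestamps.append(now)
            return True
        return False
```

Memory grows with the number of accepted requests per identifier inside the window, which is the cost noted in the cons above.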

How it works: Maintains a queue of recent request timestamps. Similar to Sliding Window Log.

from fastapi_advanced_rate_limiter import QueueLimiter

limiter = QueueLimiter(
    capacity=50,
    fill_rate=5.0,
    scope="global",
    backend="memory"
)

Behavior:

Use when: Background job processing, task queues

Test Setup: 5 concurrent users, 10-second tests, mixed traffic patterns

| Algorithm | Memory (req/s) | Redis (req/s) | Success Rate | Best For |
|---|---|---|---|---|
| Fixed Window | 15.99 🥇 | 15.33 | 53-59% | High throughput |
| Sliding Window | 15.03 | 14.32 | 51-66% | Production (best balance) ⭐ |
| Sliding Window Log | 15.41 | 14.58 | 52-67% | Accuracy-critical |
| Token Bucket | 4.76 | 3.69 | 14-16% | Burst-friendly APIs |
| Leaky Bucket | 4.76 | 2.93 | 10-16% | Traffic shaping |
| Queue Limiter | 2.76 | 3.03 | 9-12% | Fair queuing |

Key Findings:

Winner: 🏆 Sliding Window (Redis) — Best for production APIs

from fastapi import FastAPI, Depends, HTTPException
from fastapi.security import HTTPAuthorizationCredentials, HTTPBearer
from fastapi_advanced_rate_limiter import SlidingWindowRateLimiter

app = FastAPI()
security = HTTPBearer()
limiter = SlidingWindowRateLimiter(capacity=100, fill_rate=10, scope="user", backend="redis")

def get_current_user(credentials: HTTPAuthorizationCredentials = Depends(security)):
    # Your auth logic here; the bearer token is available as credentials.credentials
    return {"user_id": "user_123"}

@app.get("/api/data")
async def get_data(user = Depends(get_current_user)):
    if not limiter.allow_request(user["user_id"]):
        raise HTTPException(status_code=429, detail="Rate limit exceeded")
    return {"data": "Your protected data"}

from fastapi import FastAPI, Request, HTTPException
from fastapi_advanced_rate_limiter import FixedWindowRateLimiter

app = FastAPI()
limiter = FixedWindowRateLimiter(capacity=1000, fill_rate=100, scope="ip", backend="redis")

@app.get("/public/api")
async def public_endpoint(request: Request):
    client_ip = request.client.host
    if not limiter.allow_request(client_ip):
        raise HTTPException(
            status_code=429,
            detail="Too many requests from your IP",
            headers={"Retry-After": "60"}
        )
    return {"message": "Public data"}

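from fastapi import FastAPI, Request
from fastapi.responses import JSONResponse
from fastapi_advanced_rate_limiter import LeakyBucketLimiter

app = FastAPI()

# Limit total API traffic
global_limiter = LeakyBucketLimiter(
    capacity=10000,
    fill_rate=1000,  # 1000 req/sec globally
    scope="global",
    backend="redis"
)

@app.middleware("http")
async def global_rate_limit(request: Request, call_next):
    if not global_limiter.allow_request(None):  # None for global scope
        # Return the response directly; an HTTPException raised in an HTTP
        # middleware is not converted by FastAPI's exception handlers.
        return JSONResponse(status_code=503, content={"detail": "Service temporarily unavailable"})
    return await call_next(request)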

from fastapi import FastAPI, Request
from fastapi.responses import JSONResponse
from fastapi_advanced_rate_limiter import (
    FixedWindowRateLimiter,
    SlidingWindowRateLimiter,
    TokenBucketLimiter,
)

app = FastAPI()

# Layer 1: Global limit (protect infrastructure)
global_limiter = FixedWindowRateLimiter(capacity=100000, fill_rate=10000, scope="global", backend="redis")

# Layer 2: Per-IP limit (prevent DDoS)
ip_limiter = SlidingWindowRateLimiter(capacity=1000, fill_rate=100, scope="ip", backend="redis")

# Layer 3: Per-user limit (fair usage)
user_limiter = TokenBucketLimiter(capacity=100, fill_rate=10, scope="user", backend="redis")

@app.middleware("http")
async def layered_rate_limit(request: Request, call_next):
    # Responses are returned directly because an HTTPException raised in an
    # HTTP middleware is not converted by FastAPI's exception handlers.

    # Check global limit first
    if not global_limiter.allow_request(None):
        return JSONResponse(status_code=503, content={"detail": "Service overloaded"})

    # Check IP limit
    if not ip_limiter.allow_request(request.client.host):
        return JSONResponse(status_code=429, content={"detail": "IP rate limit exceeded"})

    # Check user limit (if authenticated)
    user_id = request.headers.get("X-User-ID")
    if user_id and not user_limiter.allow_request(user_id):
        return JSONResponse(status_code=429, content={"detail": "User rate limit exceeded"})

    return await call_next(request)

from fastapi import HTTPException, Request
from fastapi.exception_handlers import http_exception_handler
from fastapi.responses import JSONResponse

@app.exception_handler(HTTPException)
async def rate_limit_handler(request: Request, exc: HTTPException):
    if exc.status_code == 429:
        # Get wait time from limiter
        user_id = request.headers.get("X-User-ID") or request.client.host
        wait_time = limiter.get_wait_time(user_id)
        return JSONResponse(
            status_code=429,
            content={
                "error": "Rate limit exceeded",
                "retry_after_seconds": int(wait_time),
                "message": f"Please wait {int(wait_time)} seconds before retrying"
            },
            headers={"Retry-After": str(int(wait_time))}
        )
    # A handler must return a response, not the exception object;
    # delegate everything else to FastAPI's default handler.
    return await http_exception_handler(request, exc)

allow_request(identifier=None) -> bool

Check if a request should be allowed.

Parameters:

identifier (str, optional): User ID, IP address, or None for global scope

Returns:

bool: True if allowed, False if rate limited

Example:

if limiter.allow_request("user_123"):
    # Process request
    pass
else:
    # Return 429
    pass

get_status(identifier=None) -> dict

Get current limiter status for monitoring.

Returns:

{
    "tokens_remaining": 7.5,  # Token Bucket
    "capacity": 10,
    "fill_rate": 1.0,
    "utilization_pct": 25.0
}

Example:

status = limiter.get_status("user_123")
print(f"User has {status['tokens_remaining']} requests remaining")
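The sample values above are consistent with utilization being the used share of the bucket. The helper below is a hypothetical sketch of that arithmetic; whether the library computes `utilization_pct` exactly this way is an assumption.

```python
def utilization_pct(tokens_remaining, capacity):
    """Share of the bucket already used, as a percentage (assumed formula)."""
    return 100.0 * (capacity - tokens_remaining) / capacity


# Values taken from the sample status above: 7.5 of 10 tokens remain.
print(utilization_pct(7.5, 10))  # 25.0
```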

get_wait_time(identifier=None) -> float

Calculate seconds until next request would be allowed.

Returns:

float: Seconds to wait (0.0 if request would be allowed immediately)

Example:

wait = limiter.get_wait_time("user_123")
if wait > 0:
    print(f"Please wait {wait:.1f} seconds")
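For intuition, a wait time for a token bucket can be derived from the missing fraction of a token and the refill rate. This is a hedged sketch of that arithmetic only; `token_bucket_wait_time` is a hypothetical helper, and the library may compute its value differently for each algorithm.

```python
def token_bucket_wait_time(tokens_remaining, fill_rate):
    """Seconds until one whole token is available (0.0 if one already is)."""
    if tokens_remaining >= 1.0:
        return 0.0
    # The missing fraction of a token refills at fill_rate tokens per second.
    return (1.0 - tokens_remaining) / fill_rate
```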

reset(identifier=None) -> None

Reset rate limit for an identifier (useful for testing or admin actions).

Example:

# Admin endpoint to reset user's rate limit
@app.post("/admin/reset-limit/{user_id}")
async def reset_user_limit(user_id: str):
    limiter.reset(user_id)
    return {"message": f"Rate limit reset for {user_id}"}

Pros:

Cons:

Use when:

Pros:

Cons:

Use when:

Setup Redis:

# Docker

docker run -d --name redis -p 6379:6379 redis

# Or use Redis Cloud (free tier)

# https://redis.com/try-free/

# For most APIs → Sliding Window

limiter = SlidingWindowRateLimiter(...)

# For burst-heavy traffic → Token Bucket

limiter = TokenBucketLimiter(...)

# For critical operations → Sliding Window Log

limiter = SlidingWindowLogRateLimiter(...)

# Authenticated users → per-user

scope="user"

# Public APIs → per-IP

scope="ip"

# Infrastructure protection → global

scope="global"

# Don't be too strict!
# Bad: 10 req/hour (users will be frustrated)
# Good: 1000 req/hour (generous but protective)
limiter = SlidingWindowRateLimiter(
    capacity=1000,
    fill_rate=1000/3600,  # 1000 per hour
    scope="user",
    backend="redis"
)
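The examples in this README suggest that `fill_rate` is expressed in requests per second and that the effective window is `capacity / fill_rate`. Under that assumption, two small hypothetical helpers make the conversion explicit; they are not part of the library.

```python
def fill_rate_per_second(requests, period_seconds):
    """Convert 'N requests per period' into a per-second fill rate."""
    return requests / period_seconds


def window_seconds(capacity, fill_rate):
    """Window length implied by a capacity and a per-second fill rate."""
    return capacity / fill_rate


hourly = fill_rate_per_second(1000, 3600)  # same value as 1000/3600 above
print(window_seconds(100, 10))             # 10.0, the 10-second window used earlier
```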

if not limiter.allow_request(user_id):
    wait_time = limiter.get_wait_time(user_id)
    raise HTTPException(
        status_code=429,
        headers={"Retry-After": str(int(wait_time))}
    )
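On the client side, the Retry-After header lets callers back off for exactly as long as the server asks. The loop below is a minimal sketch using only the standard library; `call_with_retry` and the `send` callable (which returns a status code and a headers dict) are hypothetical stand-ins for whatever HTTP client is in use.

```python
import time


def call_with_retry(send, max_attempts=3, sleep=time.sleep):
    """Call send() until it stops returning 429 or attempts run out.

    send() is assumed to return a (status_code, headers) tuple.
    """
    status = None
    for attempt in range(max_attempts):
        status, headers = send()
        if status != 429:
            return status
        # Wait for the advertised delay before the next attempt;
        # fall back to 1 second if the header is missing.
        if attempt < max_attempts - 1:
            sleep(float(headers.get("Retry-After", 1)))
    return status
```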

@app.get("/metrics/rate-limits")
async def get_rate_limit_metrics():
    return {
        "global": global_limiter.get_status(None),
        "sample_user": user_limiter.get_status("user_123")
    }

# Run comprehensive test suite

python tests/all_limiter_test.py

# Or with pytest

pytest tests/

==========================================================================================
FINAL COMPARISON SUMMARY
==========================================================================================
Limiter              Backend   Allowed   Blocked   Rate/s   Success%
------------------------------------------------------------------------------------------
Fixed Window         memory    168       145       15.99    53.7%
Sliding Window       redis     153       80        14.32    65.7% ⭐
Sliding Window Log   redis     155       95        14.58    62.0%
Token Bucket         memory    50        262       4.76     16.0%

🏆 Best Performance: Sliding Window (redis): 14.32 req/s with 65.7% success rate
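The Success% column in the summary is the share of allowed requests among all attempts. The short check below recomputes it from the Allowed and Blocked counts shown; the function name `success_pct` is a hypothetical helper for this illustration.

```python
def success_pct(allowed, blocked):
    """Allowed requests as a percentage of all attempts, to one decimal."""
    return round(100.0 * allowed / (allowed + blocked), 1)


# (allowed, blocked) pairs copied from the summary above.
summary = {
    "Fixed Window (memory)": (168, 145),
    "Sliding Window (redis)": (153, 80),
    "Sliding Window Log (redis)": (155, 95),
    "Token Bucket (memory)": (50, 262),
}

for name, (allowed, blocked) in summary.items():
    print(f"{name}: {success_pct(allowed, blocked)}%")
```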

We welcome contributions! Here's how:

Create a feature branch: git checkout -b feature/amazing-feature
Commit your changes: git commit -m 'Add amazing feature'
Push the branch: git push origin feature/amazing-feature

Development setup:

git clone https://github.com/awais7012/FastAPI-RateLimiter.git
cd FastAPI-RateLimiter
pip install -e ".[dev]"

# Problem: redis.exceptions.ConnectionError

# Solution: Ensure Redis is running

# Check Redis

docker ps | grep redis

# Or start Redis

docker run -d --name redis -p 6379:6379 redis

# Problem: Rate limits don't work across multiple app instances

# Solution: Use Redis backend, not memory

# ❌ Wrong (memory backend)

limiter = SlidingWindowRateLimiter(..., backend="memory")

# ✅ Correct (Redis backend)

limiter = SlidingWindowRateLimiter(..., backend="redis", redis_client=redis_client)
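To see why a shared store fixes this, consider a fixed-window counter that lives in Redis rather than in each process. The sketch below is not the library's internals: `allow_request_shared` and the key format are hypothetical, and it assumes only a client object exposing redis-py style `incr(name)` and `expire(name, seconds)` methods.

```python
def allow_request_shared(client, key, limit, window_seconds):
    """Fixed-window check against a counter shared by all app instances.

    client is assumed to offer redis-py style incr() and expire().
    """
    # INCR creates the key at 1 if it does not exist and returns the new count.
    count = client.incr(key)
    if count == 1:
        # First request of the window: let the counter expire with the window.
        client.expire(key, window_seconds)
    return count <= limit
```

Every instance increments the same key, so the limit applies to the combined traffic. Because INCR and EXPIRE are two separate commands here, a production version would typically make the pair atomic, for example with a Lua script or a transaction.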

# Problem: ModuleNotFoundError: No module named 'fastapi_advanced_rate_limiter'

# Solution: Install the package from PyPI

pip install fastapi-advanced-rate-limiter

# Or if you cloned the repo

pip install -e .

MIT License - see LICENSE file for details.

Built for developers who love clean, scalable FastAPI tooling.

⭐ Star us on GitHub if you find this useful!

We found that fastapi-advanced-rate-limiter demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Product

Reachability analysis for PHP is now available in experimental, helping teams identify which vulnerabilities are actually exploitable.

Product

Export Socket alert data to your own cloud storage in JSON, CSV, or Parquet, with flexible snapshot or incremental delivery.

Research

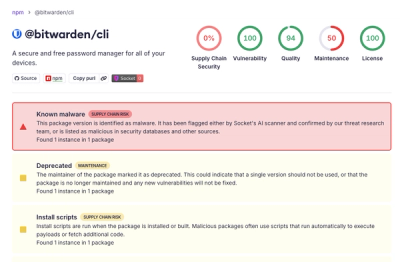

/Security News

Bitwarden CLI 2026.4.0 was compromised in the Checkmarx supply chain campaign after attackers abused a GitHub Action in Bitwarden’s CI/CD pipeline.