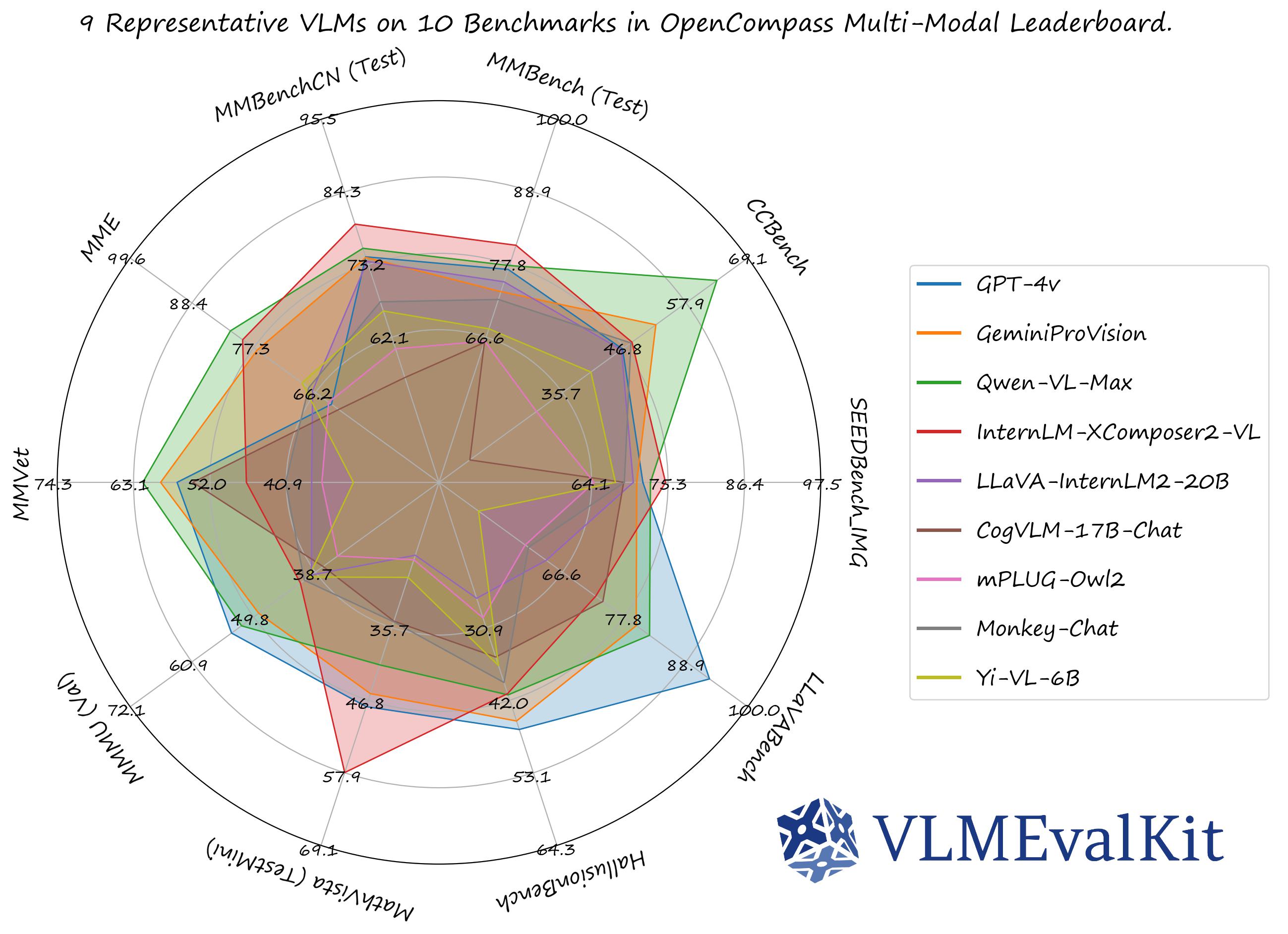

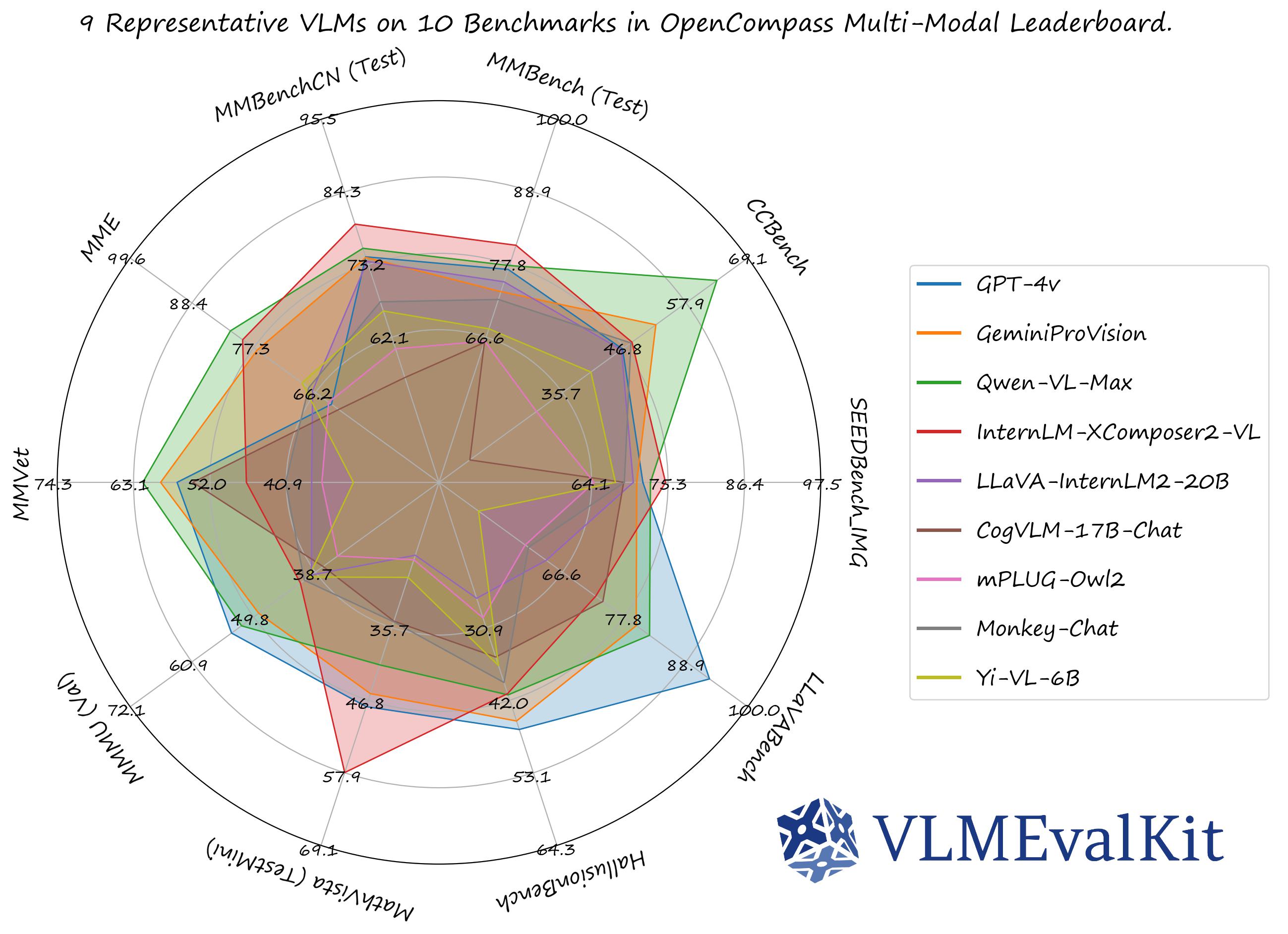

A Toolkit for Evaluating Large Vision-Language Models.


English | Simplified Chinese | Japanese

🏆 OC Leaderboard • 🏗️ Quickstart • 📊 Datasets & Models • 🛠️ Development

🤗 HF Leaderboard • 🤗 Evaluation Records • 🤗 HF Video Leaderboard • 🔊 Discord • 📝 Report • 🎯 Goal • 🖊️ Citation

VLMEvalKit (the Python package name is vlmeval) is an open-source toolkit for evaluating large vision-language models (LVLMs). It enables one-command evaluation of LVLMs on various benchmarks, without the heavy workload of preparing data across multiple repositories. In VLMEvalKit, we adopt generation-based evaluation for all LVLMs and provide evaluation results obtained with both exact matching and LLM-based answer extraction.

🆕 News

We have presented a comprehensive survey on the evaluation of large multi-modality models, jointly with the MME Team and LMMs-Lab 🔥🔥🔥

- [2025-06-16] Supported PhyX, a benchmark designed to assess physics-grounded reasoning in visual scenarios. 🔥🔥🔥

- [2025-05-24] To speed up evaluation of large-scale or thinking models, VLMEvalKit supports multi-node distributed inference using LMDeploy (supporting the InternVL series, QwenVL series, and LLaMA4) or vLLM (supporting the QwenVL series and LLaMA4). You can activate this feature by adding the use_lmdeploy or use_vllm flag to your custom model configuration in config.py. Leverage these tools to significantly speed up your evaluation workflows 🔥🔥🔥

- [2025-05-24] Supported Models: InternVL3 Series, Gemini-2.5-Pro, Kimi-VL, LLaMA4, NVILA, Qwen2.5-Omni, Phi4, SmolVLM2, Grok, SAIL-VL-1.5, WeThink-Qwen2.5VL-7B, Bailingmm, VLM-R1, Taichu-VLR. Supported Benchmarks: HLE-Bench, MMVP, MM-AlignBench, Creation-MMBench, MM-IFEval, OmniDocBench, OCR-Reasoning, EMMA, CharXiv, MedXpertQA, Physics, MSEarthMCQ, MicroBench, MMSci, VGRP-Bench, wildDoc, TDBench, VisuLogic, CVBench, LEGO-Puzzles, Video-MMLU, QBench-Video, MME-CoT, VLM2Bench, VMCBench, MOAT, Spatial457 Benchmark. Please refer to VLMEvalKit Features for more details. Thanks to all contributors 🔥🔥🔥

- [2025-02-20] Supported Models: InternVL2.5 Series, Qwen2.5VL Series, QVQ-72B, Doubao-VL, Janus-Pro-7B, MiniCPM-o-2.6, InternVL2-MPO, LLaVA-CoT, Hunyuan-Standard-Vision, Ovis2, Valley, SAIL-VL, Ross, Long-VITA, EMU3, SmolVLM. Supported Benchmarks: MMMU-Pro, WeMath, 3DSRBench, LogicVista, VL-RewardBench, CC-OCR, CG-Bench, CMMMU, WorldSense. Thanks to all contributors 🔥🔥🔥

- [2024-12-11] Supported NaturalBench, a vision-centric VQA benchmark (NeurIPS'24) that challenges vision-language models with simple questions about natural imagery.

- [2024-12-02] Supported VisOnlyQA, a benchmark for evaluating the visual perception capabilities of VLMs 🔥🔥🔥

- [2024-11-26] Supported Ovis1.6-Gemma2-27B, thanks to runninglsy 🔥🔥🔥

- [2024-11-25] Added a new environment variable, VLMEVALKIT_USE_MODELSCOPE. By setting it, you can download the supported video benchmarks from ModelScope 🔥🔥🔥

- [2024-11-25] Supported VizWiz benchmark 🔥🔥🔥

- [2024-11-22] Supported the inference of MMGenBench, thanks to lerogo 🔥🔥🔥

- [2024-11-22] Supported DynaMath, a multimodal math benchmark comprising 501 seed problems, each with 10 variants generated from random seeds. The benchmark can be used to measure the robustness of MLLMs in multimodal math problem solving 🔥🔥🔥

- [2024-11-21] Integrated a new config system to enable more flexible evaluation settings. Check the documentation or run python run.py --help for more details 🔥🔥🔥

- [2024-11-21] Supported QSpatial, a multimodal benchmark for quantitative spatial reasoning (e.g., determining size or distance); thanks to andrewliao11 for providing the official support 🔥🔥🔥

- [2024-11-21] Supported MM-Math, a new multimodal math benchmark comprising ~6K middle-school multimodal math reasoning problems. GPT-4o-20240806 achieves 22.5% accuracy on this benchmark 🔥🔥🔥
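The config system from the 2024-11-21 entry reads a JSON file that lists the models and datasets to evaluate. The sketch below writes such a file from Python. Its layout is an assumption modeled on the project's Quickstart: top-level "model" and "data" keys, each mapping a user-chosen name to a spec whose "class" field names a VLMEvalKit class (GPT4V and ImageMCQDataset here), with the remaining fields passed as constructor arguments. The --config flag is likewise assumed; confirm all of this with python run.py --help.

```python
import json
from pathlib import Path

# Assumed layout: "model" / "data" map a user-chosen name to a spec whose
# "class" field names a VLMEvalKit class; other fields are constructor kwargs.
config = {
    "model": {
        "GPT4o_20240806_T00_HIGH": {
            "class": "GPT4V",               # assumed API wrapper class name
            "model": "gpt-4o-2024-08-06",
            "temperature": 0,
            "img_detail": "high",
        },
    },
    "data": {
        "MMBench_DEV_EN_V11": {
            "class": "ImageMCQDataset",     # assumed dataset class name
            "dataset": "MMBench_DEV_EN_V11",
        },
    },
}

path = Path("config.json")
path.write_text(json.dumps(config, indent=4))
print(f"Wrote {path}; launch with: python run.py --config {path}")
```

Keeping the model name separate from its spec lets the same underlying model appear several times with different settings (for example, different temperatures) in a single run.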

🏗️ QuickStart

See [QuickStart | Quick Start (Chinese)] for a quick start guide.

📊 Datasets, Models, and Evaluation Results

Evaluation Results

The performance numbers on our official multi-modal leaderboards can be downloaded from here!

OpenVLM Leaderboard: Download All DETAILED Results.

Check the Supported Benchmarks tab in VLMEvalKit Features to view all supported image and video benchmarks (70+).

Check the Supported LMMs tab in VLMEvalKit Features to view all supported LMMs, including commercial APIs, open-source models, and more (200+).

Transformers Version Recommendation:

Some VLMs may not run under certain transformers versions; we recommend the following settings for each VLM:

- Please use transformers==4.33.0 for: Qwen series, Monkey series, InternLM-XComposer series, mPLUG-Owl2, OpenFlamingo v2, IDEFICS series, VisualGLM, MMAlaya, ShareCaptioner, MiniGPT-4 series, InstructBLIP series, PandaGPT, VXVERSE.
- Please use transformers==4.36.2 for: Moondream1.
- Please use transformers==4.37.0 for: LLaVA series, ShareGPT4V series, TransCore-M, LLaVA (XTuner), CogVLM series, EMU2 series, Yi-VL series, MiniCPM-[V1/V2], OmniLMM-12B, DeepSeek-VL series, InternVL series, Cambrian series, VILA series, Llama-3-MixSenseV1_1, Parrot-7B, PLLaVA series.
- Please use transformers==4.40.0 for: IDEFICS2, Bunny-Llama3, MiniCPM-Llama3-V2.5, 360VL-70B, Phi-3-Vision, WeMM.
- Please use transformers==4.42.0 for: AKI.
- Please use transformers==4.44.0 for: Moondream2, H2OVL series.
- Please use transformers==4.45.0 for: Aria.
- Please use the latest transformers release for: LLaVA-Next series, PaliGemma-3B, Chameleon series, Video-LLaVA-7B-HF, Ovis series, Mantis series, MiniCPM-V2.6, OmChat-v2.0-13B-sinlge-beta, Idefics-3, GLM-4v-9B, VideoChat2-HD, RBDash_72b, Llama-3.2 series, Kosmos series.
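With this many different pins, it can help to keep the mapping in code. The sketch below is a hypothetical helper, not part of VLMEvalKit, that covers a few rows from the list above and turns a model family into a pip requirement string; the table and the function name are illustrative assumptions.

```python
# Hypothetical helper: a few rows from the recommendation list above.
RECOMMENDED_TRANSFORMERS = {
    "Qwen series": "4.33.0",
    "Moondream1": "4.36.2",
    "LLaVA series": "4.37.0",
    "InternVL series": "4.37.0",
    "IDEFICS2": "4.40.0",
    "AKI": "4.42.0",
    "Moondream2": "4.44.0",
    "Aria": "4.45.0",
    "Llama-3.2 series": "latest",
}


def pip_requirement(model_family: str) -> str:
    """Return a pip requirement string for the given model family."""
    version = RECOMMENDED_TRANSFORMERS[model_family]
    if version == "latest":
        # "latest" is not a valid pin: leave it unconstrained and
        # install with `pip install -U` to get the newest release.
        return "transformers"
    return f"transformers=={version}"


print(pip_requirement("LLaVA series"))  # transformers==4.37.0
```

Because several families need conflicting pins, one virtual environment per pin is usually the simplest way to evaluate them side by side.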

Torchvision Version Recommendation:

Some VLMs may not run under certain torchvision versions; we recommend the following settings for each VLM:

- Please use torchvision>=0.16 for: Moondream series and Aria
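Before evaluating Moondream or Aria, you can confirm that the installed torchvision satisfies the >=0.16 recommendation. The sketch below uses only the standard library (importlib.metadata). The helper names are hypothetical, and it compares only the numeric release segment, so local tags such as +cu121 and pre-release tags such as rc1 are ignored. For full PEP 440 semantics, the Version class from the packaging library is the usual tool.

```python
import re
from importlib.metadata import PackageNotFoundError, version
from typing import Tuple


def meets_minimum(installed: str, minimum: Tuple[int, ...]) -> bool:
    """True if the numeric release part of `installed` is >= `minimum`."""
    # Take the leading "X.Y.Z" run; local tags (+cu121) and pre-release
    # suffixes (rc1) are ignored, so this is looser than full PEP 440.
    m = re.match(r"\d+(?:\.\d+)*", installed)
    if m is None:
        return False
    release = tuple(int(p) for p in m.group(0).split("."))
    return release >= minimum


def torchvision_ok(minimum: Tuple[int, ...] = (0, 16)) -> bool:
    """Check the installed torchvision against the recommended minimum."""
    try:
        return meets_minimum(version("torchvision"), minimum)
    except PackageNotFoundError:
        return False  # torchvision is not installed at all


print("torchvision >= 0.16:", torchvision_ok())
```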

Flash-attn Version Recommendation:

Some VLMs may not run under certain flash-attention versions; we recommend the following settings for each VLM:

- For Aria, please install flash-attn with: pip install flash-attn --no-build-isolation

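After installing the dependencies, you can check that a model runs with the following snippet:

```python
from vlmeval.config import supported_VLM

# Instantiate a model by its registered name in supported_VLM
model = supported_VLM['idefics_9b_instruct']()

# Single image: pass the image path followed by the question
ret = model.generate(['assets/apple.jpg', 'What is in this image?'])
print(ret)

# Multiple images: pass several image paths, then the question
ret = model.generate(['assets/apple.jpg', 'assets/apple.jpg', 'How many apples are there in the provided images?'])
print(ret)
```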

🛠️ Development Guide

To develop custom benchmarks or VLMs, or simply to contribute other code to VLMEvalKit, please refer to [Development_Guide | Development Guide (Chinese)].

Call for contributions

To encourage community contributions and share the corresponding credit (in the next report update):

- All contributions will be acknowledged in the report.

- Contributors with 3 or more major contributions (implementing an MLLM, a benchmark, or a major feature) can join the author list of the VLMEvalKit Technical Report on arXiv. Eligible contributors can create an issue or DM kennyutc in the VLMEvalKit Discord channel.

Here is a contributor list we curated based on the records.

🎯 The Goal of VLMEvalKit

The codebase is designed to:

- Provide an easy-to-use, open-source evaluation toolkit that makes it convenient for researchers and developers to evaluate existing LVLMs and makes evaluation results easy to reproduce.

- Make it easy for VLM developers to evaluate their own models. To evaluate a VLM on multiple supported benchmarks, one only needs to implement a single generate_inner() function; all other work (data downloading, data preprocessing, prediction inference, metric calculation) is handled by the codebase.
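To make the division of labor concrete, here is a toy model whose only model-specific code is generate_inner(). Based on the project's development guide, it assumes the toolkit passes generate_inner a list of dicts with "type" and "value" keys plus an optional dataset name. BaseModelStub is a local stand-in rather than vlmeval's real base class, so check the Development Guide for the exact base class and signature.

```python
from typing import Dict, List, Optional


class BaseModelStub:
    """Stand-in for vlmeval's base model class (assumption, not the real one)."""

    def generate(self, message: List[Dict[str, str]], dataset: Optional[str] = None) -> str:
        # Assumed behavior: the toolkit handles everything else, then calls generate_inner.
        return self.generate_inner(message, dataset=dataset)


class EchoVLM(BaseModelStub):
    """Toy model: reports how many images it received and echoes the prompt."""

    def generate_inner(self, message: List[Dict[str, str]], dataset: Optional[str] = None) -> str:
        # message items look like {"type": "image" | "text", "value": str}
        images = [m["value"] for m in message if m["type"] == "image"]
        prompt = "\n".join(m["value"] for m in message if m["type"] == "text")
        # A real model would load the images, run inference, and return its answer.
        return f"{len(images)} image(s); prompt: {prompt}"


model = EchoVLM()
msg = [
    {"type": "image", "value": "assets/apple.jpg"},
    {"type": "text", "value": "What is in this image?"},
]
print(model.generate(msg))  # 1 image(s); prompt: What is in this image?
```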

The codebase is not designed to:

- Reproduce the exact accuracy numbers reported in the original papers of all third-party benchmarks, for two reasons:

  - VLMEvalKit uses generation-based evaluation for all VLMs (optionally with LLM-based answer extraction), while some benchmarks use different approaches (e.g., SEEDBench uses PPL-based evaluation). For those benchmarks, we compare both scores in the corresponding results. We encourage developers to support other evaluation paradigms in the codebase.

  - By default, we use the same prompt template for all VLMs on a given benchmark, while some VLMs have their own specific prompt templates (some of which may not yet be covered by the codebase). We encourage VLM developers to implement their own prompt templates in VLMEvalKit if they are not currently covered. This helps improve reproducibility.
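The difference between exact matching and LLM-based answer extraction can be illustrated on a multiple-choice question. The sketch below is a simplified stand-in, not VLMEvalKit's actual matching code: it first tries exact matching (a bare option letter, then a unique option text) and only calls an LLM extractor, passed in as a hypothetical llm_judge callable, when exact matching fails.

```python
import re
from typing import Callable, Dict, Optional

Judge = Callable[[str, Dict[str, str]], Optional[str]]


def extract_choice(prediction: str, options: Dict[str, str],
                   llm_judge: Optional[Judge] = None) -> Optional[str]:
    """Map a free-form prediction to an option letter, or return None."""
    text = prediction.strip()
    # Exact match 1: the whole answer is a letter, e.g. "B", "(B)", "B." or "B:".
    m = re.fullmatch(r"\(?([A-Z])[).:]?", text)
    if m and m.group(1) in options:
        return m.group(1)
    # Exact match 2: exactly one option's text appears in the answer.
    hits = [k for k, v in options.items() if v.lower() in text.lower()]
    if len(hits) == 1:
        return hits[0]
    # Fallback: ambiguous or unmatched answers go to an LLM-based extractor, if any.
    return llm_judge(prediction, options) if llm_judge else None


opts = {"A": "banana", "B": "red apple", "C": "pear"}
print(extract_choice("(B)", opts))                         # B
print(extract_choice("It looks like a red apple.", opts))  # B
print(extract_choice("Maybe a banana or a pear?", opts))   # None
```

Running the cheap exact-matching pass first keeps the number of judge calls (and their cost) down; only answers that exact matching cannot resolve reach the LLM.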

🖊️ Citation

If you find this work helpful, please consider starring 🌟 this repo. Thanks for your support!

If you use VLMEvalKit in your research or wish to refer to published open-source evaluation results, please use the following BibTeX entry, along with the BibTeX entry for the specific VLM or benchmark you used.

```bibtex
@inproceedings{duan2024vlmevalkit,
  title={Vlmevalkit: An open-source toolkit for evaluating large multi-modality models},
  author={Duan, Haodong and Yang, Junming and Qiao, Yuxuan and Fang, Xinyu and Chen, Lin and Liu, Yuan and Dong, Xiaoyi and Zang, Yuhang and Zhang, Pan and Wang, Jiaqi and others},
  booktitle={Proceedings of the 32nd ACM International Conference on Multimedia},
  pages={11198--11201},
  year={2024}
}
```
