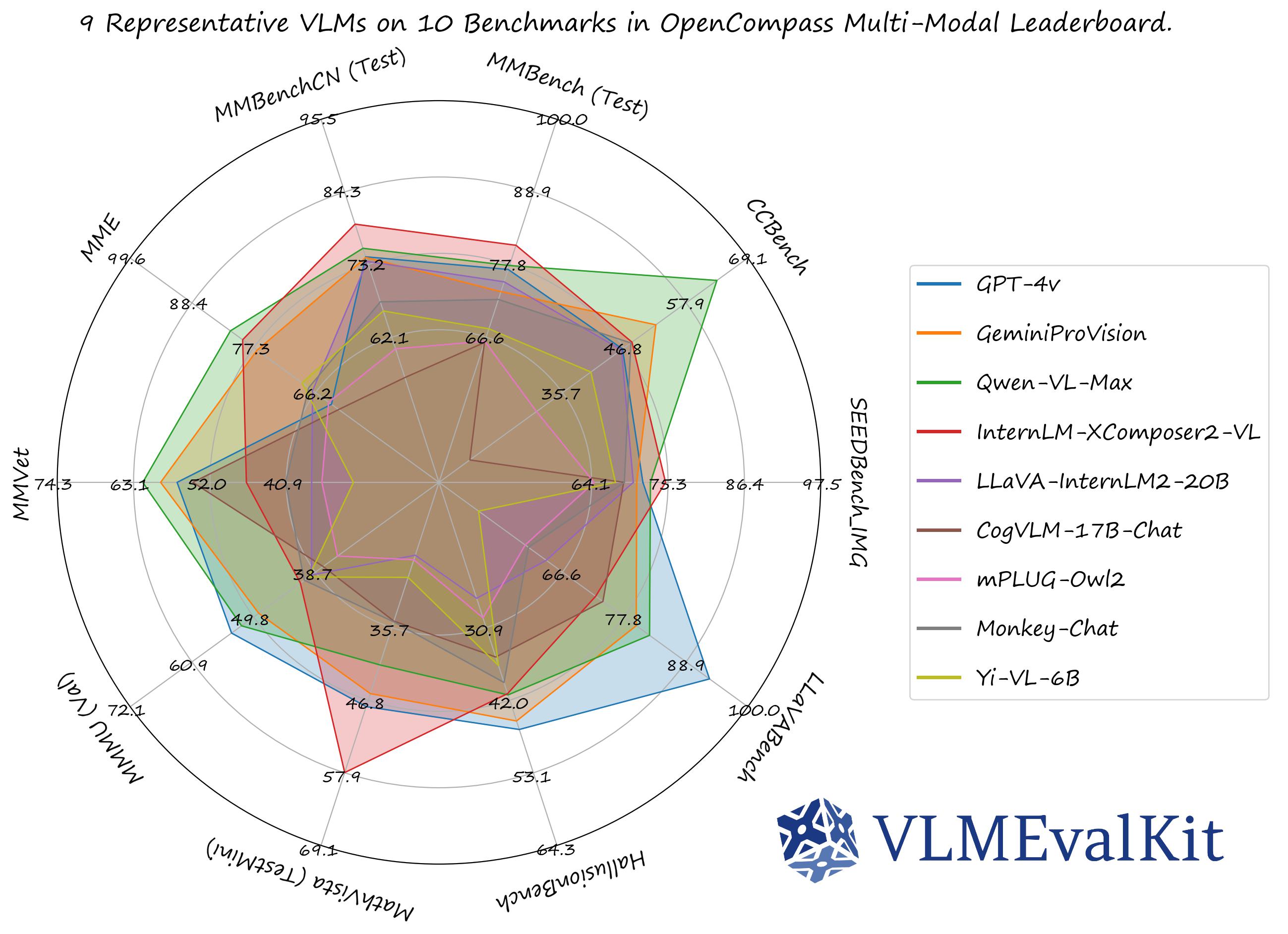

A Toolkit for Evaluating Large Vision-Language Models.

🏆 OC Leaderboard • 🏗️Quickstart • 📊Datasets & Models • 🛠️Development

🤗 HF Leaderboard • 🤗 Evaluation Records • 🤗 HF Video Leaderboard

🔊 Discord • 📝 Report • 🎯Goal • 🖊️Citation

VLMEvalKit (the Python package name is vlmeval) is an open-source evaluation toolkit for large vision-language models (LVLMs). It enables one-command evaluation of LVLMs on various benchmarks, without the heavy workload of preparing data across multiple repositories. In VLMEvalKit, we adopt generation-based evaluation for all LVLMs and provide evaluation results obtained with both exact matching and LLM-based answer extraction.

We have presented a comprehensive survey on the evaluation of large multi-modality models, jointly with MME Team and LMMs-Lab 🔥🔥🔥

Add the use_lmdeploy or use_vllm flag to your custom model configuration in config.py. Leverage these tools to significantly speed up your evaluation workflows 🔥🔥🔥

VLMEVALKIT_USE_MODELSCOPE: by setting this environment variable, you can download the supported video benchmarks from modelscope 🔥🔥🔥

Run python run.py --help for more details 🔥🔥🔥

See [QuickStart | Quick Start (Chinese)] for a quick start guide.
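As a rough illustration of the acceleration flag mentioned above, the sketch below registers a model in a config.py-style dictionary with functools.partial and passes use_vllm=True. The class HypotheticalVLM, the key my_model_vllm, and the checkpoint path are placeholders invented for this example and are not VLMEvalKit's actual entries; consult config.py for the real class names and the arguments each model accepts.

```python
from functools import partial


class HypotheticalVLM:
    """Stand-in for a model wrapper class. NOT part of VLMEvalKit."""

    def __init__(self, model_path, use_vllm=False, use_lmdeploy=False):
        self.model_path = model_path
        self.use_vllm = use_vllm
        self.use_lmdeploy = use_lmdeploy

    def backend(self):
        # Report which acceleration flag was set (illustrative only).
        if self.use_vllm:
            return "vllm"
        if self.use_lmdeploy:
            return "lmdeploy"
        return "default"


# Hypothetical registry in the style of a config.py model table:
# each entry is a constructor with its arguments already bound.
custom_models = {
    "my_model_vllm": partial(
        HypotheticalVLM,
        model_path="path/to/checkpoint",  # placeholder path
        use_vllm=True,                    # the flag described above
    ),
}

model = custom_models["my_model_vllm"]()
print(model.backend())
```

Binding the flag with partial keeps the registry entry callable with no arguments, which matches the way the demo later in this README instantiates a model by key.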

The performance numbers on our official multi-modal leaderboards can be downloaded from here!

OpenVLM Leaderboard: Download All DETAILED Results.

Check Supported Benchmarks Tab in VLMEvalKit Features to view all supported image & video benchmarks (70+).

Check Supported LMMs Tab in VLMEvalKit Features to view all supported LMMs, including commercial APIs, open-source models, and more (200+).

Transformers Version Recommendation:

Note that some VLMs may not be able to run under certain transformers versions. We recommend the following settings to evaluate each VLM:

transformers==4.33.0 for: Qwen series, Monkey series, InternLM-XComposer Series, mPLUG-Owl2, OpenFlamingo v2, IDEFICS series, VisualGLM, MMAlaya, ShareCaptioner, MiniGPT-4 series, InstructBLIP series, PandaGPT, VXVERSE.

transformers==4.36.2 for: Moondream1.

transformers==4.37.0 for: LLaVA series, ShareGPT4V series, TransCore-M, LLaVA (XTuner), CogVLM Series, EMU2 Series, Yi-VL Series, MiniCPM-[V1/V2], OmniLMM-12B, DeepSeek-VL series, InternVL series, Cambrian Series, VILA Series, Llama-3-MixSenseV1_1, Parrot-7B, PLLaVA Series.

transformers==4.40.0 for: IDEFICS2, Bunny-Llama3, MiniCPM-Llama3-V2.5, 360VL-70B, Phi-3-Vision, WeMM.

transformers==4.42.0 for: AKI.

transformers==4.44.0 for: Moondream2, H2OVL series.

transformers==4.45.0 for: Aria.

transformers==latest for: LLaVA-Next series, PaliGemma-3B, Chameleon series, Video-LLaVA-7B-HF, Ovis series, Mantis series, MiniCPM-V2.6, OmChat-v2.0-13B-sinlge-beta, Idefics-3, GLM-4v-9B, VideoChat2-HD, RBDash_72b, Llama-3.2 series, Kosmos series.

Torchvision Version Recommendation:

Note that some VLMs may not be able to run under certain torchvision versions. We recommend the following settings to evaluate each VLM:

torchvision>=0.16 for: Moondream series and Aria

Flash-attn Version Recommendation:

Note that some VLMs may not be able to run under certain flash-attention versions. We recommend the following settings to evaluate each VLM:

pip install flash-attn --no-build-isolation for: Aria

# Demo

from vlmeval.config import supported_VLM

model = supported_VLM['idefics_9b_instruct']()

# Forward Single Image

ret = model.generate(['assets/apple.jpg', 'What is in this image?'])

print(ret) # The image features a red apple with a leaf on it.

# Forward Multiple Images

ret = model.generate(['assets/apple.jpg', 'assets/apple.jpg', 'How many apples are there in the provided images? '])

print(ret) # There are two apples in the provided images.
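Because the demo above loads idefics_9b_instruct, and the IDEFICS series is listed under transformers==4.33.0 in the version recommendations earlier in this README, a pre-flight check along the following lines may help catch a mismatched environment before the model is loaded. This is only a sketch: the RECOMMENDED_TRANSFORMERS table is a hand-copied subset of the recommendations, its keys other than idefics_9b_instruct are illustrative names rather than confirmed registry keys, and check_pin and installed_version are hypothetical helpers, not part of vlmeval.

```python
from importlib.metadata import PackageNotFoundError, version

# Hand-copied subset of the transformers recommendations in this README.
# Keys are illustrative; only "idefics_9b_instruct" appears in the demo.
RECOMMENDED_TRANSFORMERS = {
    "idefics_9b_instruct": "4.33.0",  # IDEFICS series
    "Moondream1": "4.36.2",
    "AKI": "4.42.0",
    "Aria": "4.45.0",
}


def installed_version(package):
    """Return the installed version string of `package`, or None if absent."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


def check_pin(model_key, installed):
    """Compare an installed transformers version with the recommended pin."""
    wanted = RECOMMENDED_TRANSFORMERS.get(model_key)
    if wanted is None:
        return "no recommendation recorded for this model"
    if installed is None:
        return f"transformers is not installed; recommended: {wanted}"
    if installed == wanted:
        return "ok"
    return f"installed {installed}, recommended {wanted}"


# The result depends on the local environment, so no output is shown here.
print(check_pin("idefics_9b_instruct", installed_version("transformers")))
```

The check uses plain string equality because the recommendations are exact pins; models listed under transformers==latest would need a different rule and are left out of the table.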

To develop custom benchmarks or VLMs, or to contribute other code to VLMEvalKit, please refer to [Development_Guide | Development Guide (Chinese)].

Call for contributions

To promote the contribution from the community and share the corresponding credit (in the next report update):

Here is a contributor list we curated based on the records.

The codebase is designed to:

To evaluate a VLM, you only need to implement a single generate_inner() function; all other workloads (data downloading, data preprocessing, prediction inference, metric calculation) are handled by the codebase.

The codebase is not designed to:

If you find this work helpful, please consider starring 🌟 this repo. Thanks for your support!

If you use VLMEvalKit in your research or wish to refer to published open-source evaluation results, please use the following BibTeX entry and the BibTeX entry corresponding to the specific VLM / benchmark you used.

@inproceedings{duan2024vlmevalkit,

title={Vlmevalkit: An open-source toolkit for evaluating large multi-modality models},

author={Duan, Haodong and Yang, Junming and Qiao, Yuxuan and Fang, Xinyu and Chen, Lin and Liu, Yuan and Dong, Xiaoyi and Zang, Yuhang and Zhang, Pan and Wang, Jiaqi and others},

booktitle={Proceedings of the 32nd ACM International Conference on Multimedia},

pages={11198--11201},

year={2024}

}

FAQs

OpenCompass VLM Evaluation Kit for Eval-Scope

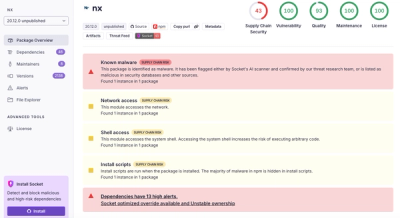

We found that ms-vlmeval demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.
