Installation | Usage | Leaderboard | Documentation | Citing

pip install mteb

import mteb

from sentence_transformers import SentenceTransformer

# Define the sentence-transformers model name

model_name = "average_word_embeddings_komninos"

model = mteb.get_model(model_name)  # if the model is not implemented in MTEB, this is equivalent to SentenceTransformer(model_name)

tasks = mteb.get_tasks(tasks=["Banking77Classification"])

evaluation = mteb.MTEB(tasks=tasks)

results = evaluation.run(model, output_folder=f"results/{model_name}")

mteb available_tasks # list _all_ available tasks

mteb run -m sentence-transformers/all-MiniLM-L6-v2 \
    -t Banking77Classification \
    --verbosity 3

# if no output folder is specified, the results are saved in the results/{model_name} folder

To use multiple GPUs in parallel, give the model a custom encode function that distributes the inputs across the GPUs. See custom models for more information.
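As a rough illustration of the idea, the sketch below splits the inputs into contiguous shards, sends each shard to its own per-device encoder, and concatenates the results in the original order. Everything in it is an assumption made for illustration: `ShardedEncoder`, `split_into_shards`, and the per-device callables are hypothetical names, not part of mteb, and the embeddings are plain Python lists where a real model would typically return an array. Check the custom models documentation for the interface mteb actually expects.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence

Vector = List[float]
# Hypothetical type: one callable per GPU that maps a batch of texts to vectors.
DeviceEncoder = Callable[[Sequence[str]], List[Vector]]


def split_into_shards(items: Sequence[str], n_shards: int) -> List[Sequence[str]]:
    """Split items into at most n_shards contiguous, near-equal slices."""
    n_shards = max(1, min(n_shards, len(items)))
    base, extra = divmod(len(items), n_shards)
    shards, start = [], 0
    for i in range(n_shards):
        # The first `extra` shards take one additional item each.
        end = start + base + (1 if i < extra else 0)
        shards.append(items[start:end])
        start = end
    return shards


class ShardedEncoder:
    """Hypothetical wrapper whose encode() fans out to several devices."""

    def __init__(self, device_encoders: Sequence[DeviceEncoder]):
        if not device_encoders:
            raise ValueError("at least one device encoder is required")
        self.device_encoders = list(device_encoders)

    def encode(self, sentences: Sequence[str], **kwargs) -> List[Vector]:
        # Extra keyword arguments are accepted but ignored in this sketch.
        if not sentences:
            return []
        shards = split_into_shards(sentences, len(self.device_encoders))
        with ThreadPoolExecutor(max_workers=len(shards)) as pool:
            # map() yields results in submission order, so outputs stay
            # aligned with the inputs.
            parts = list(
                pool.map(
                    lambda pair: pair[0](pair[1]),
                    zip(self.device_encoders, shards),
                )
            )
        return [vec for part in parts for vec in part]
```

In practice each per-device callable would wrap a model copy placed on one GPU. Threads are used here only to keep the sketch short; a process pool is the more common choice when the encoders are not thread-safe.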

The following table links to the main sections of the usage documentation.

| Section | Description |
|---|---|
| General | |
| Evaluating a Model | How to evaluate a model |
| Evaluating on different Modalities | How to evaluate image and image-text tasks |
| MIEB | How to run the Massive Image Embedding Benchmark |
| Selecting Tasks | |
| Selecting a benchmark | How to select benchmarks |
| Task selection | How to select and filter tasks |
| Selecting Split and Subsets | How to select evaluation splits or subsets |
| Using a Custom Task | How to evaluate on a custom task |
| Selecting a Model | |
| Using a Pre-defined Model | How to run a pre-defined model |
| Using a SentenceTransformer Model | How to run a model loaded using sentence-transformers |
| Using a Custom Model | How to run and implement a custom model |
| Running Evaluation | |
| Passing Arguments to the model | How to pass encode arguments to the model |
| Running Cross Encoders | How to run cross encoders for reranking |
| Running Late Interaction (ColBERT) | How to run late interaction models |
| Saving Retrieval Predictions | How to save predictions for later analysis |
| Caching Embeddings | How to cache and re-use embeddings |
| Leaderboard | |
| Running the Leaderboard Locally | How to run the leaderboard locally |
| Report Data Contamination | How to report data contamination for a model |
| Loading and working with Results | How to load and work with the raw results from the leaderboard, including making result dataframes |

| Overview | |
|---|---|
| 📈 Leaderboard | The interactive leaderboard of the benchmark |
| 📋 Tasks | Overview of available tasks |
| 📐 Benchmarks | Overview of available benchmarks |
| Contributing | |
| 🤖 Adding a model | How to submit a model to MTEB and to the leaderboard |
| 👩‍🔬 Reproducible workflows | How to create reproducible workflows with MTEB |
| 👩‍💻 Adding a dataset | How to add a new task/dataset to MTEB |
| 👩‍💻 Adding a benchmark | How to add a new benchmark to MTEB and to the leaderboard |
| 🤝 Contributing | How to contribute to MTEB and set it up for development |

MTEB was introduced in "MTEB: Massive Text Embedding Benchmark", and heavily expanded in "MMTEB: Massive Multilingual Text Embedding Benchmark". When using mteb, we recommend that you cite both articles.

@article{muennighoff2022mteb,

author = {Muennighoff, Niklas and Tazi, Nouamane and Magne, Lo{\"\i}c and Reimers, Nils},

title = {MTEB: Massive Text Embedding Benchmark},

publisher = {arXiv},

journal={arXiv preprint arXiv:2210.07316},

year = {2022},

url = {https://arxiv.org/abs/2210.07316},

doi = {10.48550/ARXIV.2210.07316},

}

@article{enevoldsen2025mmtebmassivemultilingualtext,

title={MMTEB: Massive Multilingual Text Embedding Benchmark},

author={Kenneth Enevoldsen and Isaac Chung and Imene Kerboua and Márton Kardos and Ashwin Mathur and David Stap and Jay Gala and Wissam Siblini and Dominik Krzemiński and Genta Indra Winata and Saba Sturua and Saiteja Utpala and Mathieu Ciancone and Marion Schaeffer and Gabriel Sequeira and Diganta Misra and Shreeya Dhakal and Jonathan Rystrøm and Roman Solomatin and Ömer Çağatan and Akash Kundu and Martin Bernstorff and Shitao Xiao and Akshita Sukhlecha and Bhavish Pahwa and Rafał Poświata and Kranthi Kiran GV and Shawon Ashraf and Daniel Auras and Björn Plüster and Jan Philipp Harries and Loïc Magne and Isabelle Mohr and Mariya Hendriksen and Dawei Zhu and Hippolyte Gisserot-Boukhlef and Tom Aarsen and Jan Kostkan and Konrad Wojtasik and Taemin Lee and Marek Šuppa and Crystina Zhang and Roberta Rocca and Mohammed Hamdy and Andrianos Michail and John Yang and Manuel Faysse and Aleksei Vatolin and Nandan Thakur and Manan Dey and Dipam Vasani and Pranjal Chitale and Simone Tedeschi and Nguyen Tai and Artem Snegirev and Michael Günther and Mengzhou Xia and Weijia Shi and Xing Han Lù and Jordan Clive and Gayatri Krishnakumar and Anna Maksimova and Silvan Wehrli and Maria Tikhonova and Henil Panchal and Aleksandr Abramov and Malte Ostendorff and Zheng Liu and Simon Clematide and Lester James Miranda and Alena Fenogenova and Guangyu Song and Ruqiya Bin Safi and Wen-Ding Li and Alessia Borghini and Federico Cassano and Hongjin Su and Jimmy Lin and Howard Yen and Lasse Hansen and Sara Hooker and Chenghao Xiao and Vaibhav Adlakha and Orion Weller and Siva Reddy and Niklas Muennighoff},

publisher = {arXiv},

journal={arXiv preprint arXiv:2502.13595},

year={2025},

url={https://arxiv.org/abs/2502.13595},

doi = {10.48550/arXiv.2502.13595},

}

If you use any of the specific benchmarks, we also recommend that you cite the authors.

benchmark = mteb.get_benchmark("MTEB(eng, v2)")

benchmark.citation # get citation for a specific benchmark

# you can also create a table of the tasks for the appendix using:

benchmark.tasks.to_latex()

Some of these amazing publications include (ordered chronologically):

FAQs

Massive Text Embedding Benchmark

We found that mteb demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 4 open source maintainers collaborating on the project.

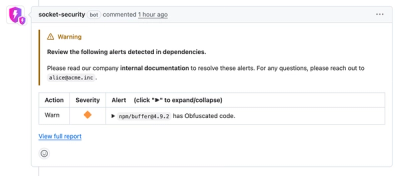

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

The Rust Security Response WG is warning of phishing emails from rustfoundation.dev targeting crates.io users.

Product

Socket now lets you customize pull request alert headers, helping security teams share clear guidance right in PRs to speed reviews and reduce back-and-forth.

Product

Socket's Rust support is moving to Beta: all users can scan Cargo projects and generate SBOMs, including Cargo.toml-only crates, with Rust-aware supply chain checks.