Research

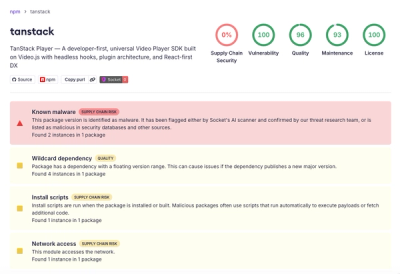

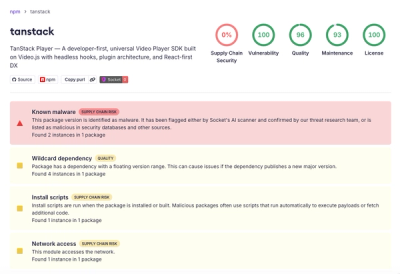

Malicious npm Package Brand-Squats TanStack to Exfiltrate Environment Variables

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.

tldextract


Accurately separates a URL's subdomain, domain, and public suffix, using the Public Suffix List (PSL). By default, this includes the public ICANN TLDs and their exceptions. You can optionally support the Public Suffix List's private domains as well.

tldextract accurately separates a URL's subdomain, domain, and public suffix, using the Public Suffix List (PSL).

Why? Naive URL parsing like splitting on dots fails for domains like forums.bbc.co.uk (gives "co" instead of "bbc"). tldextract handles the edge cases, so you don't have to.
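To make that failure concrete, here is a minimal sketch of the naive approach using only the standard library. The helper name `naive_domain` is hypothetical and not part of tldextract; it assumes the domain is always the second-to-last dot-separated label of the hostname.

```python
from urllib.parse import urlsplit


def naive_domain(url: str) -> str:
    """Guess the domain by taking the second-to-last label of the hostname."""
    host = urlsplit(url).hostname or ""
    labels = host.split(".")
    return labels[-2] if len(labels) >= 2 else host


print(naive_domain("http://forums.news.cnn.com/"))  # cnn
print(naive_domain("http://forums.bbc.co.uk/"))     # co (wrong: the domain is bbc)
```

The guess works for single-label suffixes such as .com and breaks as soon as the suffix has more than one label.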

    >>> import tldextract
    >>> tldextract.extract('http://forums.news.cnn.com/')
    ExtractResult(subdomain='forums.news', domain='cnn', suffix='com', is_private=False)
    >>> tldextract.extract('http://forums.bbc.co.uk/')
    ExtractResult(subdomain='forums', domain='bbc', suffix='co.uk', is_private=False)

    >>> # Access the parts you need
    >>> ext = tldextract.extract('http://forums.bbc.co.uk')
    >>> ext.domain
    'bbc'
    >>> ext.top_domain_under_public_suffix
    'bbc.co.uk'
    >>> ext.fqdn
    'forums.bbc.co.uk'

Install with pip:

    pip install tldextract

To disable HTTP fetching of the suffix list, pass an empty suffix_list_urls:

    no_fetch_extract = tldextract.TLDExtract(suffix_list_urls=())
    no_fetch_extract('http://www.google.com')

To set a custom cache location, via environment variable:

    export TLDEXTRACT_CACHE="/path/to/cache"

Or in code:

    custom_cache_extract = tldextract.TLDExtract(cache_dir='/path/to/cache/')

To update the cached suffix list, from the command line:

    tldextract --update

Or delete the cache folder:

    rm -rf $HOME/.cache/python-tldextract

To treat private domains as suffixes:

    extract = tldextract.TLDExtract(include_psl_private_domains=True)
    extract('waiterrant.blogspot.com')
    # ExtractResult(subdomain='', domain='waiterrant', suffix='blogspot.com', is_private=True)

To use a local suffix list:

    extract = tldextract.TLDExtract(
        suffix_list_urls=["file:///path/to/your/list.dat"],
        cache_dir='/path/to/cache/',
        fallback_to_snapshot=False)

To use a remote suffix list:

    extract = tldextract.TLDExtract(
        suffix_list_urls=["https://myserver.com/suffix-list.dat"])

To add extra suffixes:

    extract = tldextract.TLDExtract(
        extra_suffixes=["foo", "bar.baz"])

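To validate URLs before extraction, parse them with urllib.parse first and pass the result to extract_urllib:

    from urllib.parse import urlsplit

    split_url = urlsplit("https://example.com:8080/path")
    result = tldextract.extract_urllib(split_url)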

Command line usage:

    $ tldextract http://forums.bbc.co.uk
    forums bbc co.uk

    $ tldextract --update  # Update cached suffix list
    $ tldextract --help    # See all options

tldextract uses the Public Suffix List, a community-maintained list of domain suffixes. The PSL contains both:

- Public suffixes: where anyone can register a domain (.com, .co.uk, .org.kg)
- Private suffixes: operated by companies for customer subdomains (blogspot.com, github.io)

Web browsers use this same list for security decisions like cookie scoping.

While .com is a top-level domain (TLD), many suffixes like .co.uk are technically second-level. The PSL uses "public suffix" to cover both.

By default, tldextract treats private suffixes as regular domains:

    >>> tldextract.extract('waiterrant.blogspot.com')
    ExtractResult(subdomain='waiterrant', domain='blogspot', suffix='com', is_private=False)

To treat them as suffixes instead, see How to treat private domains as suffixes.
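The matching idea behind this behavior can be sketched as a longest-suffix lookup. This is a simplified illustration, not tldextract's implementation: the suffix sets are a tiny hand-picked subset, the function name `split_host` and its `include_private` flag are hypothetical, and the PSL's wildcard and exception rules are ignored.

```python
# A tiny, hand-picked subset of suffixes for illustration only.
# The real PSL is far larger and also has wildcard and exception rules,
# which this sketch ignores.
PUBLIC_SUFFIXES = {"com", "uk", "co.uk", "kg", "org.kg"}
PRIVATE_SUFFIXES = {"blogspot.com", "github.io"}


def split_host(host: str, include_private: bool = False) -> tuple[str, str, str]:
    """Return (subdomain, domain, suffix) using the longest matching suffix."""
    suffixes = PUBLIC_SUFFIXES | (PRIVATE_SUFFIXES if include_private else set())
    labels = host.lower().split(".")
    # Try the longest candidate suffix first, so "co.uk" wins over "uk".
    for i in range(len(labels)):
        candidate = ".".join(labels[i:])
        if candidate in suffixes:
            domain = labels[i - 1] if i > 0 else ""
            subdomain = ".".join(labels[: i - 1]) if i > 1 else ""
            return subdomain, domain, candidate
    # No known suffix: treat the last label as the domain.
    return ".".join(labels[:-1]), labels[-1], ""


print(split_host("forums.bbc.co.uk"))
print(split_host("waiterrant.blogspot.com"))
print(split_host("waiterrant.blogspot.com", include_private=True))
```

With private suffixes excluded, blogspot.com is split at .com, so blogspot becomes the domain. Including them lets the longer blogspot.com match first, which mirrors the two results shown for waiterrant.blogspot.com.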

By default, tldextract fetches the latest Public Suffix List on first use and caches it indefinitely in $HOME/.cache/python-tldextract.

tldextract accepts any string and is very lenient. It prioritizes ease of use over strict validation, extracting domains from any string, even partial URLs or non-URLs.
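Because of that leniency, callers who need strict input can check the URL themselves before extraction. The following is a minimal sketch using only the standard library. The helper name `parse_strict` and its rules (http or https scheme, non-empty hostname) are assumptions chosen for illustration, not checks that tldextract performs.

```python
from urllib.parse import SplitResult, urlsplit


def parse_strict(url: str) -> SplitResult:
    """Parse a URL and reject inputs without an http(s) scheme or a hostname."""
    split_url = urlsplit(url)
    if split_url.scheme not in ("http", "https"):
        raise ValueError(f"unsupported or missing scheme: {url!r}")
    if not split_url.hostname:
        raise ValueError(f"missing hostname: {url!r}")
    return split_url


split_url = parse_strict("https://example.com:8080/path")
print(split_url.hostname)  # example.com
# The validated result could then be handed to tldextract:
# result = tldextract.extract_urllib(split_url)
```

Raising ValueError keeps the policy decision with the caller, who can tighten or relax the accepted schemes as needed.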

tldextract doesn't maintain the suffix list. Submit changes to the Public Suffix List.

Meanwhile, use the extra_suffixes parameter, or fork the PSL and pass it to this library with the suffix_list_urls parameter.

If a suffix is in the PSL but tldextract doesn't extract it, check if it's in the "PRIVATE" section. See How to treat private domains as suffixes.

See URL validation and How to validate URLs before extraction.

To contribute:

1. git clone this repository.
2. pip install --upgrade --editable '.[testing]'

Then run the checks:

    tox --parallel  # Test all Python versions
    tox -e py311    # Test specific Python version
    ruff format .   # Format code

This package started from a StackOverflow answer about regex-based domain extraction. The regex approach fails for many domains, so this library switched to the Public Suffix List for accuracy.


Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

A brand-squatted TanStack npm package used postinstall scripts to steal .env files and exfiltrate developer secrets to an attacker-controlled endpoint.

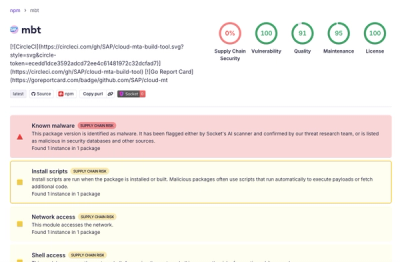

Research

Compromised SAP CAP npm packages download and execute unverified binaries, creating urgent supply chain risk for affected developers and CI/CD environments.

Company News

Socket has acquired Secure Annex to expand extension security across browsers, IDEs, and AI tools.