By Joe Norton

RubyRetriever is a web crawler, scraper, and file harvester, available both as a command-line executable and as a crawling framework.

RubyRetriever (RR) crawls webpages quickly by issuing asynchronous HTTP requests via EventMachine and Synchrony. RR also uses a Ruby implementation of a Bloom filter to keep track of pages it has already crawled in a memory-efficient manner.
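To illustrate why a Bloom filter suits this job, here is a minimal pure-Ruby sketch. It is not RR's code and does not use the bloomfilter-rb API; the class name `VisitedUrls`, the bit-array size, and the MD5-based hashing are all assumptions chosen for readability.

```ruby
require 'digest'

# Minimal Bloom filter for tracking visited URLs.
# Illustrative only: the class name, sizes, and hashing scheme are assumptions,
# not RubyRetriever's or bloomfilter-rb's actual implementation.
class VisitedUrls
  def initialize(size_bits = 8192, hash_count = 4)
    @size_bits = size_bits
    @hash_count = hash_count
    @bits = 0 # a single Integer used as a compact bit field
  end

  # Mark a URL as seen by setting every bit position derived from it.
  def add(url)
    positions(url).each { |i| @bits |= (1 << i) }
  end

  # false means "definitely not seen"; true means "probably seen"
  # (false positives are possible, false negatives are not).
  def include?(url)
    positions(url).all? { |i| @bits[i] == 1 }
  end

  private

  # Derive hash_count bit positions by salting an MD5 digest with an index.
  def positions(url)
    (0...@hash_count).map do |seed|
      Digest::MD5.hexdigest("#{seed}:#{url}").to_i(16) % @size_bits
    end
  end
end

seen = VisitedUrls.new
seen.add('http://www.cnet.com/')
puts seen.include?('http://www.cnet.com/') # prints "true"
```

The filter stores only a fixed number of bits regardless of URL length, which is where the memory saving comes from. The trade-off is a small chance of wrongly reporting an unseen page as already crawled.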

v1.4.3 Update (3/24/2016) - Fixes a problem with file downloads whose URLs had query strings: the filename was being saved with the query string still attached. No more.

v1.4.2 Update (3/24/2016) - Fixes a problem with named anchors (divs) being counted as links.

v1.4.1 Update (3/24/2016) - Update gemfile & external dependency versioning

v1.4.0 Update (3/24/2016) - Several bug fixes.
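The query-string issue fixed in v1.4.3 can be illustrated with a short sketch. This is not the gem's actual fix; `filename_from_url` is a hypothetical helper, and it uses the standard library's `URI` rather than the addressable gem that RR depends on.

```ruby
require 'uri'

# Hypothetical helper: derive a local filename from a download URL,
# ignoring any query string or fragment.
def filename_from_url(url)
  path = URI.parse(url).path # the path excludes "?query" and "#fragment"
  File.basename(path)
end

puts filename_from_url('http://example.com/files/notes.txt?download=1&v=2')
# prints "notes.txt"
```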

RubyRetriever aims to be the best command-line crawling and scraping package written in Ruby and a replacement for paid software such as Screaming Frog SEO Spider.

Roadmap?

Not sure. Feel free to offer your thoughts.

Some Potential Ideas:

As an Executable

With a single command at the terminal, RR can:

Used in Custom scripts

As of version 1.3.0, the PageIterator class lets you pass a custom block that is run against each page during a crawl, with the results collected in an array. This means you can define for yourself what to collect from each page.

Help & Forks Welcome!

Install the gem

$ gem install rubyretriever

Example: Sitemap mode

$ rr --sitemap CSV --progress --limit 10 http://www.cnet.com

OR -- SAME COMMAND

$ rr -s csv -p -l 10 http://www.cnet.com

This would map http://www.cnet.com until it had crawled a maximum of 10 pages, then write the results to a CSV file named cnet. Alternatively, with the XML format, RR would write the same URL list as a valid XML sitemap that could be submitted to Google.
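For readers unfamiliar with the sitemap format, the following sketch shows roughly what turning a URL list into a sitemap involves. It is an assumption-laden illustration, not RR's output code: `sitemap_xml` and `XML_ESCAPES` are hypothetical names, and only the required `<loc>` element of the sitemaps.org schema is emitted.

```ruby
# Characters that must be escaped inside XML text nodes.
XML_ESCAPES = {
  '&' => '&amp;', '<' => '&lt;', '>' => '&gt;',
  '"' => '&quot;', "'" => '&apos;'
}.freeze

# Hypothetical helper: build a minimal XML sitemap from a list of URLs.
def sitemap_xml(urls)
  entries = urls.map do |url|
    "  <url><loc>#{url.gsub(/[&<>"']/, XML_ESCAPES)}</loc></url>"
  end
  [
    '<?xml version="1.0" encoding="UTF-8"?>',
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">',
    *entries,
    '</urlset>'
  ].join("\n")
end

puts sitemap_xml(['http://www.cnet.com/', 'http://www.cnet.com/news/?a=1&b=2'])
```

Escaping matters here because crawled URLs often contain `&` in query strings, which would otherwise make the XML invalid.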

Example: File Harvesting mode

$ rr --files txt --verbose --limit 1 http://textfiles.com/programming/

OR -- SAME COMMAND

$ rr -f txt -v -l 1 http://textfiles.com/programming/

This would crawl a single page of http://textfiles.com/programming/ looking for txt files, then print the list of txt file paths it found to the terminal. Optionally, you could have the script download all the files automatically by adding the -a/--auto flag.
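The core of file harvesting is filtering discovered links by file type. A minimal sketch of that step, under the assumption that links are already collected as strings, might look like this; `links_with_extension` is a hypothetical helper, the example.com URLs are made up, and RR's real matching logic may differ.

```ruby
require 'uri'

# Hypothetical helper: keep only links whose path ends in the given extension.
# Query strings are ignored, and unparseable links are skipped.
def links_with_extension(links, ext)
  links.select do |link|
    begin
      path = URI.parse(link).path.to_s
      File.extname(path).casecmp?(".#{ext}")
    rescue URI::InvalidURIError
      false
    end
  end
end

links = [
  'http://example.com/docs/readme.txt',
  'http://example.com/docs/index.html',
  'http://example.com/docs/NOTES.TXT?raw=1'
]
puts links_with_extension(links, 'txt')
```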

Example: SEO mode

$ rr --seo --progress --limit 10 --out cnet-seo http://www.cnet.com

OR -- SAME COMMAND

$ rr -e -p -l 10 -o cnet-seo http://www.cnet.com

This would crawl a maximum of 10 pages starting at http://www.cnet.com, collecting the SEO fields on each page. Currently these are: url, page title, meta description, h1 text, and h2 text. It would then write the fields to a CSV file named cnet-seo.
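To make the five SEO fields concrete, here is a rough sketch of extracting them from one page's HTML. It is deliberately simplistic and is not how RR does it: `seo_fields` is a hypothetical helper, it relies on regular expressions rather than an HTML parser, and it only captures the first h1 and h2 on the page.

```ruby
# Hypothetical helper: pull basic SEO fields out of an HTML string.
# Regular expressions keep the sketch dependency-free; a real crawler would
# more likely use an HTML parser.
def seo_fields(url, html)
  title = html[%r{<title[^>]*>(.*?)</title>}mi, 1]
  desc  = html[/<meta\s+name=["']description["']\s+content=["'](.*?)["']/mi, 1]
  h1    = html[%r{<h1[^>]*>(.*?)</h1>}mi, 1]
  h2    = html[%r{<h2[^>]*>(.*?)</h2>}mi, 1]
  # Missing fields become empty strings so every row has five columns.
  [url, title, desc, h1, h2].map { |field| field.to_s.strip }
end

sample_html = <<~HTML
  <html><head><title> Example Page </title>
  <meta name="description" content="A sample page.">
  </head><body><h1>Welcome</h1><h2>Details</h2></body></html>
HTML

p seo_fields('http://example.com/', sample_html)
# prints ["http://example.com/", "Example Page", "A sample page.", "Welcome", "Details"]
```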

Usage: rr [MODE FLAG] [OPTIONS] Target_URL

Where MODE FLAG is required, and is one of:

    -s, --sitemap FORMAT        (only accepts CSV or XML atm)
    -f, --files FILETYPE
    -e, --seo

and OPTIONS are any of the following that apply:

    -o, --out FILENAME          Dump fetch data as CSV
    -p, --progress              Outputs a progressbar
    -v, --verbose               Output more information
    -l, --limit PAGE_LIMIT_#    set a max on the total number of crawled pages
    -h, --help                  Display this screen
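As a sketch of how a flag set like this can be handled in Ruby, the following uses the standard library's `OptionParser`. It is not RR's actual argument handling: `parse_rr_args` is a hypothetical helper, the option keys are made up, and upcasing the sitemap format is an assumption based on the examples accepting both `CSV` and `csv`.

```ruby
require 'optparse'

# Hypothetical helper: parse the documented flags into a Hash.
# Not RubyRetriever's actual code.
def parse_rr_args(argv)
  options = {}
  parser = OptionParser.new do |o|
    o.banner = 'Usage: rr [MODE FLAG] [OPTIONS] Target_URL'
    o.on('-s', '--sitemap FORMAT') { |v| options[:sitemap] = v.upcase }
    o.on('-f', '--files FILETYPE') { |v| options[:files] = v }
    o.on('-e', '--seo')            { options[:seo] = true }
    o.on('-o', '--out FILENAME')   { |v| options[:out] = v }
    o.on('-p', '--progress')       { options[:progress] = true }
    o.on('-v', '--verbose')        { options[:verbose] = true }
    o.on('-l', '--limit PAGE_LIMIT', Integer) { |v| options[:limit] = v }
  end
  rest = parser.parse(argv) # non-destructive; returns the leftover arguments
  options[:target] = rest.first
  options
end

p parse_rr_args(%w[-s csv -p -l 10 http://www.cnet.com])
```

Passing `Integer` to `on` makes the parser convert and validate the page limit, so a non-numeric value raises an error instead of silently becoming a string.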

If you want to collect something on a per-page basis that the executable does not support, use the PageIterator class. It lets you run whatever block you want against the source of each page found during the crawl.

Sample Script using PageIterator

require 'retriever'

# Crawl at most one page.
opts = {
  'maxpages' => 1
}

# The block runs against each crawled page; its return values are collected.
t = Retriever::PageIterator.new('http://www.basecamp.com', opts) do |page|
  [page.url, page.title]
end

puts t.result.to_s

>> [["http://www.basecamp.com", "Basecamp is everyone’s favorite project management app."]]

Available methods on the page iterator:

Dependencies:

em-synchrony

ruby-progressbar

bloomfilter-rb

addressable

htmlentities

See included 'LICENSE' file. It's the MIT license.


Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Product

Socket Fix 2.0 brings targeted CVE remediation, smarter upgrade planning, and broader ecosystem support to help developers get to zero alerts.

Security News

Socket CEO Feross Aboukhadijeh joins Risky Business Weekly to unpack recent npm phishing attacks, their limited impact, and the risks if attackers get smarter.

Product

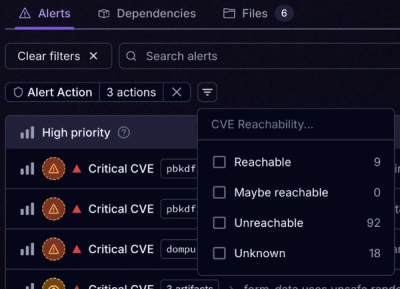

Socket’s new Tier 1 Reachability filters out up to 80% of irrelevant CVEs, so security teams can focus on the vulnerabilities that matter.