Research

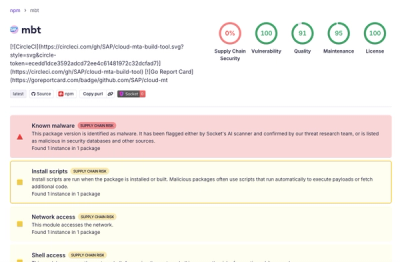

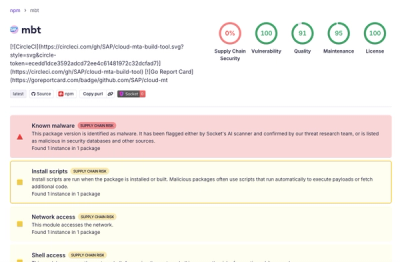

TeamPCP-Linked Supply Chain Attack Hits SAP CAP and Cloud MTA npm Packages

Compromised SAP CAP npm packages download and execute unverified binaries, creating urgent supply chain risk for affected developers and CI/CD environments.

Quickly evaluate the security and health of any open source package.

agentgui

1.0.902

by lanmower

Live on npm

Blocked by Socket

This module exposes an extremely high-risk remote control surface: it can spawn an interactive shell (PTY with fallback), accept client-provided base64 input to that shell, and stream the resulting output back to the client over WebSocket—creating a bidirectional remote command execution channel. It also exposes PM2 administrative operations and log retrieval/flush using client-controlled parameters. If strong authorization and auditing are not enforced elsewhere, this is consistent with backdoor/RCE capability and represents a severe supply-chain security concern.

@link-assistant/hive-mind

1.59.5

by GitHub Actions

Live on npm

Blocked by Socket

Highest concern: the module conditionally fetches JavaScript from https://unpkg.com and executes it with eval to create a globalThis.use loader, enabling runtime remote code execution and major supply-chain risk (no integrity/version pinning). Secondary concern: it then parses input and writes derived values directly into process.env without strong allowlisting/validation of keys/values, amplifying impact from malicious or unexpected configuration content.

@link-assistant/hive-mind

1.59.5

by GitHub Actions

Live on npm

Blocked by Socket

The module performs runtime remote code execution: it fetches JavaScript from a public CDN and immediately executes it with eval to initialize globalThis.use, which then drives how environment variables are read and how configuration is produced. This creates a powerful supply-chain/RCE backdoor vector (no integrity pinning/hash/signature checks) and materially increases security risk. Even though the remainder is largely configuration math, the startup mechanism is critical and should be removed or replaced with a securely pinned, integrity-verified dependency. Logging functions may also leak sensitive telemetry configuration if used.
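The "securely pinned, integrity-verified dependency" recommended above can be sketched as a hash check that runs before any fetched code is used. This is a minimal illustration and not the package's actual loader: the function name `verify_sha256` and the idea of storing a pinned digest alongside the dependency are assumptions made for the example.

```python
import hashlib
import hmac


def verify_sha256(payload: bytes, expected_hex: str) -> bytes:
    """Return payload only if its SHA-256 digest matches the pinned value.

    `expected_hex` is a hypothetical digest recorded at review time,
    for example in a lockfile committed next to the code.
    """
    actual = hashlib.sha256(payload).hexdigest()
    # compare_digest gives a constant-time comparison of the two hex strings.
    if not hmac.compare_digest(actual, expected_hex.lower()):
        raise ValueError("integrity check failed: digest does not match the pin")
    return payload
```

A caller would download the bytes, pass them through `verify_sha256`, and refuse to load anything that raises. Pinning an exact version in the URL is still needed, since a hash check only detects that the content changed.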

malinakod

0.2.2

Live on pypi

Blocked by Socket

This module is a high-risk command execution and reporting agent. It executes arbitrary shell commands derived directly from unvalidated local files (subprocess.run with shell=True), captures stdout/stderr, writes logs, and exfiltrates those results to an authenticated GitHub repository. It also deletes the executed instruction files from the remote repository, which is consistent with remote tasking/control and an attempt to remove traces. No allowlist/sandbox/signature validation is implemented in the provided fragment, so misuse or compromise would be severe.

nolimit-x

1.0.149

by nolimitaworkspace

Live on npm

Blocked by Socket

This module is best characterized as a configuration generator and validator for an email-oriented “red team”/evasion workflow, including multiple stealth/randomization and spoofing-related settings, and it persists the resulting operational JSON to disk. While the snippet does not show direct exploitation/exfiltration or network activity, the explicit attack/evasion semantics and on-disk persistence make it a significant supply-chain security concern that warrants review of downstream consumers (how this config is executed, what targets are contacted, and whether any data exfiltration occurs).

@surething/cockpit

1.0.196

by robert-sure

Live on npm

Blocked by Socket

This module contains a high-severity command execution capability reachable over HTTP. It accepts a user-controlled JSON field (cwd), interpolates it directly into a shell command string, and executes it using child_process.exec to launch “cursor” on the server host. This is consistent with malicious behavior (command injection/backdoor-like functionality) and presents an immediate and critical security risk.
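For contrast with the string-interpolation pattern described above, the sketch below passes the directory as a single argument in an argv list and never invokes a shell. It is written in Python for illustration only (the flagged package is JavaScript); `build_launch_argv`, `launch`, and the `allowed_root` parameter are hypothetical names, and the `cursor` executable is assumed to be on the PATH.

```python
import os
import subprocess
from typing import List


def build_launch_argv(cwd: str, allowed_root: str) -> List[str]:
    """Validate a client-supplied directory and build an argv list."""
    root = os.path.realpath(allowed_root)
    target = os.path.realpath(os.path.join(root, cwd))
    # Reject absolute paths and ../ sequences that resolve outside the root.
    if os.path.commonpath([root, target]) != root:
        raise ValueError("cwd escapes the allowed root")
    # The path is one list element, so shell metacharacters are never parsed.
    return ["cursor", target]


def launch(cwd: str, allowed_root: str) -> subprocess.CompletedProcess:
    """Start the editor without a shell (assumes `cursor` is installed)."""
    return subprocess.run(build_launch_argv(cwd, allowed_root),
                          shell=False, check=False, timeout=30)
```

Even with this change, an HTTP endpoint that launches programs on the host still needs authentication; the sketch only removes the injection sink.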

tanstack

2.0.7

by sh20raj

Live on npm

Blocked by Socket

This module performs clear credential/config exfiltration: it reads .env and .env.local (commonly containing API keys/tokens) from the project root/parent directory and sends their contents plus system/package metadata to a hardcoded third-party ingest URL over HTTPS during immediate execution. Error handling is intentionally non-informative, and the function name disguises its true behavior. This is highly likely malicious supply-chain behavior.

@builder.io/dev-tools

1.50.1-beta.202604291609.4437fcc

by manucorporat

Live on npm

Blocked by Socket

High security risk. The fragment injects a browser script into proxied HTML that listens for window.postMessage evaluation requests and executes attacker-controlled JavaScript using new Function(text), then reports results/errors back to the parent with wildcard postMessage origin. Additionally, it disables TLS certificate validation for the proxy HTTPS agent and can modify system hosts, both of which further increase impact. Host-side command execution exists but the browser-side new Function sink is the clearest, most severe indicator in this module.

nolimit-x

1.0.148

by nolimitaworkspace

Live on npm

Blocked by Socket

This fragment is best characterized as malicious-enabling DKIM exploitation tooling rather than a benign validator: it performs DNS-based DKIM key probing, extracts cryptographic parameters, classifies weak/deprecated configurations, and generates exploit-oriented payload/command templates. While actual command execution is not shown in the snippet, the presence of promisified child_process.exec strongly suggests follow-on execution capability in the full package. No direct evidence of data theft/exfiltration is present in the provided fragment.

nolimit-x

1.0.145

by nolimitaworkspace

Live on npm

Blocked by Socket

This module is highly suspicious for supply-chain deployment because it is structured for offensive cryptographic/DKIM-like key exploitation: it performs outbound DNS TXT/MX lookups on provided domains, parses record parameters to infer “exploitable” weaknesses, and generates weaponized `command`/`payload` strings for brute-force and exploitation paths. The presence of `child_process.exec`/`execAsync` in the same module strongly suggests intended execution of those commands elsewhere in the package. Even without visible exfiltration or persistence in the fragment, the weaponization intent and command execution capability make it unsafe to include without thorough review and strong provenance controls.

xypriss

9.10.0

by nehonixpkg

Live on npm

Blocked by Socket

High supply-chain risk. This module downloads a platform-specific native executable from a hardcoded remote CDN, writes it to disk, activates it via symlink/copy, and executes it to perform only a heuristic --help/banner string check. There is no checksum/signature verification and redirects are followed without domain allowlisting, meaning a tampered CDN payload could execute arbitrary native code. Treat as a security alert requiring strong integrity controls (pinned hashes/signatures and constrained redirect targets) before use.
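The "constrained redirect targets" control mentioned above could look roughly like the check below, applied to every redirect `Location` before it is followed. The host name in `ALLOWED_HOSTS` is a placeholder and the function name is an assumption; this does not describe how the package itself is implemented.

```python
from urllib.parse import urlparse

# Hypothetical allowlist; a real one would name the project's own download hosts.
ALLOWED_HOSTS = {"dl.example.com"}


def is_allowed_redirect(url: str) -> bool:
    """Accept only HTTPS URLs whose exact host name is on the allowlist."""
    parsed = urlparse(url)
    return parsed.scheme == "https" and parsed.hostname in ALLOWED_HOSTS
```

Matching the full host name rather than a substring keeps look-alike domains such as `dl.example.com.evil.test` out. The downloaded file would still need a pinned hash or signature check before it is executed.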

osism

0.20260429.0

Live on pypi

Blocked by Socket

This script performs bulk, unconditional deletion of many Ansible collection directories under the specified ANSIBLE_COLLECTIONS_PATH. It does not read external input (other than the hardcoded path variable) and does not perform network activity, but it is destructive and can effectively sabotage environments that rely on those collections. Running it is dangerous: do not run it unless you intend to remove those exact directories and have backups. We recommend blocking it or requiring manual review and safeguards (confirmation, dry-run, path validation) before execution.
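The safeguards named above (dry-run and path validation) can be combined in a small wrapper. The sketch below is an assumption about what such a guard might look like, not code from the package; `remove_collections` and its parameters are hypothetical.

```python
import os
import shutil
from typing import Iterable, List


def remove_collections(base: str, names: Iterable[str],
                       dry_run: bool = True) -> List[str]:
    """Delete named subdirectories of base, defaulting to a dry run.

    Returns the resolved directories that were (or would be) removed.
    """
    base_real = os.path.realpath(base)
    selected = []
    for name in names:
        target = os.path.realpath(os.path.join(base_real, name))
        # Path validation: never touch the base itself or anything outside it.
        if target == base_real or os.path.commonpath([base_real, target]) != base_real:
            raise ValueError(f"refusing to delete outside base: {name!r}")
        if os.path.isdir(target):
            selected.append(target)
    if not dry_run:
        for target in selected:
            shutil.rmtree(target)
    return selected
```

Calling it once with the default `dry_run=True` and printing the result gives an operator the chance to confirm the list before a second call with `dry_run=False`.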

xlabrouter

1.0.34

by xlabglobal

Live on npm

Blocked by Socket

This code performs targeted credential/token harvesting from Cursor IDE’s local SQLite state database (including accessToken and machineId) and exfiltrates the results by returning them in a network-facing Next.js GET JSON response. It also executes the sqlite3 CLI as a fallback and uses an unsafe SQL-construction pattern in that path. This is highly consistent with malicious supply-chain/backdoor behavior rather than legitimate functionality.
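The "unsafe SQL-construction pattern" noted above generally means building a query by string concatenation. A parameterized query avoids that, as in the generic sketch below. The `settings` table, its columns, and `read_value` are invented for the example and are unrelated to any real application's database.

```python
import sqlite3
from typing import Optional


def read_value(conn: sqlite3.Connection, key: str) -> Optional[str]:
    """Look up one value using a bound parameter instead of string building."""
    row = conn.execute(
        "SELECT value FROM settings WHERE key = ?",  # ? is filled in by the driver
        (key,),
    ).fetchone()
    return row[0] if row else None
```

Because the key is bound rather than spliced into the statement, input such as `' OR '1'='1` is treated as a literal key and simply matches nothing.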

agent-audit-kit

0.3.10

Live on pypi

Blocked by Socket

This configuration fragment shows multiple strong, high-impact supply-chain/sabotage indicators: hardcoded API/cloud credentials, unauthenticated HTTP remote MCP endpoint, shell/CLI-based execution (`sh`, `npx`/`uvx`), runtime package fetching without version pinning, potentially token-fetching `headersHelper`, overly broad filesystem access (root `/`), and explicit tool poisoning/prompt-injection markers (including invisible Unicode and attacker URLs/paths). Even without the full underlying runtime code, these are configuration-level patterns that commonly enable credential compromise, remote control/impersonation, and data exfiltration. Treat the associated package/config as extremely risky and rotate any exposed secrets immediately; remove/replace the malicious MCP/tool definitions and re-verify the consuming agent/runtime behavior.
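One of the indicators listed above, invisible Unicode in tool definitions, is straightforward to screen for. The sketch below flags characters in the Unicode "Cf" (format) category, which covers zero-width spaces, joiners, and tag characters. It is a simplified heuristic and not Socket's detection logic; `find_invisible_chars` is a hypothetical helper.

```python
import unicodedata
from typing import List, Tuple


def find_invisible_chars(text: str) -> List[Tuple[int, str]]:
    """Return (index, code point) pairs for format-category characters."""
    hits = []
    for index, char in enumerate(text):
        # "Cf" includes U+200B, U+200D, U+FEFF and the U+E0000 tag block.
        if unicodedata.category(char) == "Cf":
            hits.append((index, f"U+{ord(char):04X}"))
    return hits
```

A reviewer could run this over every tool name and description in an MCP configuration and treat any hit as a reason for manual inspection. Some legitimate text, such as emoji sequences using zero-width joiners, will also match.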

agent-audit-kit

0.3.10

Live on pypi

Blocked by Socket

This code fragment is a high-confidence remote command execution backdoor-like primitive: it takes a client-supplied command (req.body.cmd), executes it via execa with shell enabled, and returns the resulting stdout to the caller. Without strong external gating (authentication/authorization) and strict command allowlisting/constraints (none shown), it should be treated as extremely dangerous and not used in production.
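The "strict command allowlisting" that the alert finds missing can be sketched as a fixed mapping from short names to argv lists, so the client picks a name and never supplies the command text. The example is in Python for illustration; the entries in `ALLOWED_COMMANDS` and both function names are assumptions.

```python
import subprocess
from typing import Dict, List

# Hypothetical allowlist: clients choose a key, never the command itself.
ALLOWED_COMMANDS: Dict[str, List[str]] = {
    "uptime": ["uptime"],
    "disk": ["df", "-h"],
}


def resolve_command(name: str) -> List[str]:
    """Map a client-supplied name to a fixed argv list, or refuse."""
    if name not in ALLOWED_COMMANDS:
        raise ValueError(f"command not allowed: {name!r}")
    return list(ALLOWED_COMMANDS[name])


def run_allowed(name: str) -> str:
    """Run an allowlisted command without a shell and return its stdout."""
    result = subprocess.run(resolve_command(name), shell=False,
                            capture_output=True, text=True,
                            timeout=10, check=False)
    return result.stdout
```

An allowlist narrows what can run but does not replace authentication and authorization on the endpoint that calls it.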

funkratov-renderkit

0.1.0

Live on pypi

Blocked by Socket

This module implements a high-confidence data exfiltration pattern: it recursively collects *.txt and *.log files from a drivers-related directory (defaulting to %SystemRoot%\System32\drivers on Windows or ./drivers elsewhere), reads each file’s full contents, and uploads that content (with filename metadata) via HTTP POST to a hardcoded third-party API base (overridable by environment variables). There is no redaction, no size/sensitivity limiting, and the destination and source scope are configurable, which significantly elevates security risk in a supply-chain context.

@peerbit/server

6.0.24

by marcus.pousette

Live on npm

Blocked by Socket

The code provides powerful server management capabilities with multiple high-risk endpoints. The combination of dynamic npm installation, self-update, and runtime code loading from installed packages creates a strong potential for remote code execution and supply-chain manipulation if proper authentication and input validation are not robustly enforced. The most critical risk stems from dynamic imports after arbitrary package installation and the self-update path, which could be exploited to alter host behavior or inject malicious code.

ac-sasskit-beta

1.0.0

by frengki4321

Live on npm

Blocked by Socket

This code performs direct host/user/OS fingerprint collection using shell-discovery commands and exfiltrates the results (base64-encoded) to a hardcoded third-party webhook over HTTPS. The fixed external destination, embedded identifiers, and immediate termination are consistent with malicious data collection/backdoor telemetry rather than benign functionality.

rust-switcher

1.0.10

Live on cargo

Blocked by Socket

This module implements Windows keystroke decoding and retains decoded typed text in a global in-memory journal for later retrieval. While it shows no direct exfiltration, credential theft, or system modification in the provided fragment, the core capability matches keylogger/input-surveillance behavior and therefore carries significant privacy risk depending on how the rest of the crate uses the stored journal data.

hackmyagent

0.22.0

by GitHub Actions

Live on npm

Blocked by Socket

This code is a payload/template generator explicitly designed to craft social-engineering, prompt-injection, data-exfiltration, privilege-escalation, and remote-code-execution payloads. It contains hardcoded malicious endpoints and commands, fabricates authority/confirmation artifacts, and tailors messages to increase success. Treat this module as malicious; investigate repository context and callers, remove and block its use in trusted environments, and audit any systems where it was present for potential abuse.

nolimit-x

1.0.150

by nolimitaworkspace

Live on npm

Blocked by Socket

This module is highly suspicious and plausibly malicious: it performs DKIM-related DNS discovery for caller-supplied domains, parses cryptographic parameters to identify weak/deprecated RSA keys, and generates OpenSSL command/payload artifacts explicitly framed around factorization/exploitation. It also imports child_process.exec (promisified), indicating potential command execution. Even without full visibility of exec invocation, the exploitation-oriented design and shell command construction create a high supply-chain security risk.

zen-gitsync

2.11.8

by xzisme

Live on npm

Blocked by Socket

This endpoint executes user-provided JavaScript with insufficient isolation. Although the code is not itself obfuscated or explicitly malicious, the design enables remote code execution, data exfiltration and sandbox escapes via well-known vm/context bridging techniques and prototype/constructor abuse. Key risks: untrusted script execution in vm without removing dangerous globals, passing host objects into untrusted code, invoking vm-defined functions from host without time/resource limits, and returning whatever the script can access to an external caller. Recommended mitigations: run untrusted code in a separate restricted process or container with least privilege, do not pass host objects into the sandbox, remove or replace dangerous globals and constructors, freeze prototypes, execute the returned function inside the vm with enforced timeouts or in the isolated process, and strictly validate/whitelist allowed operations. Treat this code path as high-risk for server-side code execution and data leakage until stronger isolation is implemented.

blue-tap

2.6.3

Live on pypi

Blocked by Socket

This module is clearly designed for exploitation: it disables SSP on the local Bluetooth adapter (requiring sudo), resets pairing state, automates pairing with a chosen remote target, and—when enabled—brute-forces legacy PINs by injecting guessed PINs into an interactive bluetoothctl session and inferring success from command output. No classic malware payload or exfiltration is shown, but the functionality itself is high-risk and consistent with credential-guessing/authorization-bypass activity.

blue-tap

2.6.3

Live on pypi

Blocked by Socket

High-confidence malicious/exploit functionality: the module is designed to carry out CVE-2020-10135 BIAS attacks by cloning Bluetooth identity, downgrading authentication (SSP disable), connecting to a target IVI, and injecting crafted LMP messages via DarkFirmware to bypass/alter authentication. No obfuscation is detected; risk comes from direct offensive behavior. This should be treated as a dangerous exploitation dependency and reviewed/locked down for authorized testing only.

tanstack

2.0.4

by sh20raj

Live on npm

Blocked by Socket

This code performs clear, automated data exfiltration: it synchronously reads the local README.md and the package.json version, augments them with runtime/environment identifiers and a timestamp, and transmits the resulting JSON over HTTPS to a hardcoded third-party ingest endpoint immediately on execution. The lack of authentication, lack of legitimate install-time purpose, and silent error handling strongly indicate malicious/unauthorized telemetry or data theft behavior. This should not be used in security-sensitive contexts without remediation/verification.

Socket detects traditional vulnerabilities (CVEs) but goes beyond that to scan the actual code of dependencies for malicious behavior. It proactively detects and blocks 70+ signals of supply chain risk in open source code, for comprehensive protection.

Possible typosquat attack

Known malware

Git dependency

GitHub dependency

HTTP dependency

Obfuscated code

Suspicious Stars on GitHub

Telemetry

Protestware or potentially unwanted behavior

Unstable ownership

Critical CVE

High CVE

Medium CVE

Low CVE

Unpopular package

Minified code

Bad dependency semver

Wildcard dependency

Socket optimized override available

Deprecated

Unmaintained

Explicitly Unlicensed Item

License Policy Violation

Misc. License Issues

Ambiguous License Classifier

Copyleft License

License exception

No License Found

Non-permissive License

Unidentified License
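Several of the alert names above (wildcard, Git, GitHub, and HTTP dependencies) describe the form of a dependency specifier in `package.json`. As a rough illustration only, and not Socket's actual detection logic, the sketch below sorts specifier strings into those buckets. The function name `classify_spec` and the label strings are hypothetical, and the matching rules are simplified assumptions about common npm specifier forms.

```python
import re

# Hypothetical helper: labels and rules are illustrative assumptions,
# not the rules Socket uses.
def classify_spec(spec: str) -> str:
    """Return a rough label for an npm dependency specifier."""
    s = spec.strip()
    # An empty range, "*", or "x" accepts any published version.
    if s in ("", "*", "x", "X"):
        return "wildcard"
    # Local paths are not fetched from a registry or a remote host.
    if s.startswith(("file:", "./", "../", "~/", "/")):
        return "local"
    # Git URLs, e.g. git+https://... or git://...
    if s.startswith(("git+", "git://")):
        return "git"
    # GitHub shorthand: "github:user/repo" or "user/repo#ref".
    if s.startswith("github:") or re.fullmatch(r"[\w.-]+/[\w.-]+(#.+)?", s):
        return "github"
    # Plain HTTP(S) URLs, typically pointing at a tarball.
    if s.startswith(("http://", "https://")):
        return "http"
    # Everything else is treated as a normal registry range or tag.
    return "registry"


def flag_dependencies(manifest: dict) -> dict:
    """Map dependency name -> label for every non-registry specifier."""
    flagged = {}
    for section in ("dependencies", "devDependencies"):
        for name, spec in (manifest.get(section) or {}).items():
            label = classify_spec(spec)
            if label != "registry":
                flagged[name] = label
    return flagged
```

Calling `flag_dependencies` on a parsed `package.json` returns only the entries that would deserve a closer look under these assumptions; pinned or ranged registry versions are left out.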

Socket detects and blocks malicious dependencies, often within just minutes of them being published to public registries, making it the most effective tool for blocking zero-day supply chain attacks.

Socket is built by a team of prolific open source maintainers whose software is downloaded over 1 billion times per month. We understand how to build tools that developers love. But don’t take our word for it.

Nat Friedman

CEO at GitHub

Suz Hinton

Senior Software Engineer at Stripe

heck yes this is awesome!!! Congrats team 🎉👏

Matteo Collina

Node.js maintainer, Fastify lead maintainer

So awesome to see @SocketSecurity launch with a fresh approach! Excited to have supported the team from the early days.

DC Posch

Director of Technology at AppFolio, CTO at Dynasty

This is going to be super important, especially for crypto projects where a compromised dependency results in stolen user assets.

Luis Naranjo

Software Engineer at Microsoft

If software supply chain attacks through npm don't scare the shit out of you, you're not paying close enough attention.

@SocketSecurity sounds like an awesome product. I'll be using socket.dev instead of npmjs.org to browse npm packages going forward

Elena Nadolinski

Founder and CEO at Iron Fish

Huge congrats to @SocketSecurity! 🙌

Literally the only product that proactively detects signs of JS compromised packages.

Joe Previte

Engineering Team Lead at Coder

Congrats to @feross and the @SocketSecurity team on their seed funding! 🚀 It's been a big help for us at @CoderHQ and we appreciate what y'all are doing!

Josh Goldberg

Staff Developer at Codecademy

This is such a great idea & looks fantastic, congrats & good luck @feross + team!

The best security teams in the world use Socket to get visibility into supply chain risk, and to build a security feedback loop into the development process.

Scott Roberts

CISO at UiPath

As a happy Socket customer, I've been impressed with how quickly they are adding value to the product, this move is a great step!

Yan Zhu

Head of Security at Brave, DEFCON, EFF, W3C

glad to hear some of the smartest people i know are working on (npm, etc.) supply chain security finally :). @SocketSecurity

Andrew Peterson

CEO and Co-Founder at Signal Sciences (acq. Fastly)

How do you track the validity of open source software libraries as they get updated? You're prob not. Check out @SocketSecurity and the updated tooling they launched.

Supply chain is a cluster in security as we all know and the tools from Socket are "duh" type tools to be implementing. Check them out and follow Feross Aboukhadijeh to see more updates coming from them in the future.

Zbyszek Tenerowicz

Senior Security Engineer at ConsenSys

socket.dev is getting more appealing by the hour

Devdatta Akhawe

Head of Security at Figma

The @SocketSecurity team is on fire! Amazing progress and I am excited to see where they go next.

Sebastian Bensusan

Engineer Manager at Stripe

I find it surprising that we don't have _more_ supply chain attacks in software:

Imagine your airplane (the code running) was assembled (deployed) daily, with parts (dependencies) from internet strangers. How long until you get a bad part?

Excited for Socket to prevent this

Adam Baldwin

VP of Security at npm, Red Team at Auth0/Okta

Congrats to everyone at @SocketSecurity ❤️🤘🏻

Nico Waisman

CISO at Lyft

This is an area that I have personally been very focused on. As Nat Friedman said in the 2019 GitHub Universe keynote, Open Source won, and every time you add a new open source project you rely on someone else's code and you rely on the people that build it.

This is both exciting and problematic. You are bringing real risk into your organization, and I'm excited to see progress in the industry from OpenSSF scorecards and package analyzers to the company that Feross Aboukhadijeh is building!

Secure your team's dependencies across your stack with Socket. Stop supply chain attacks before they reach production.

RUST

Rust Package Manager

PHP

PHP Package Manager

GOLANG

Go Dependency Management

JAVA

JAVASCRIPT

Node Package Manager

.NET

.NET Package Manager

PYTHON

Python Package Index

RUBY

Ruby Package Manager

SWIFT

AI

AI Model Hub

CI

CI/CD Workflows

EXTENSIONS

Chrome Browser Extensions

EXTENSIONS

VS Code Extensions

Attackers have taken notice of the opportunity to attack organizations through open source dependencies. Supply chain attacks rose a whopping 700% in the past year, with over 15,000 recorded attacks.

Nov 23, 2025

Shai Hulud v2

Shai Hulud v2 campaign: preinstall script (setup_bun.js) and loader (setup_bin.js) that installs/locates Bun and executes an obfuscated bundled malicious script (bun_environment.js) with suppressed output.
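Because this campaign starts from a preinstall script, one simple triage step is to list the install-time lifecycle hooks a package declares. The sketch below is a minimal illustration and not a reconstruction of the malware or of Socket's scanner: `install_hooks` is a hypothetical helper, and the sample command `node setup_bun.js` is an assumed example of how such a hook might be wired up.

```python
import json

# npm runs these scripts automatically during `npm install`.
INSTALL_HOOKS = ("preinstall", "install", "postinstall")


def install_hooks(package_json_text: str) -> dict:
    """Return the install-time lifecycle scripts declared in a package.json."""
    manifest = json.loads(package_json_text)
    scripts = manifest.get("scripts")
    if not isinstance(scripts, dict):
        return {}
    return {name: scripts[name] for name in INSTALL_HOOKS if name in scripts}


# Assumed example manifest; the preinstall command is illustrative only.
sample = json.dumps({
    "name": "example-package",
    "version": "1.0.0",
    "scripts": {"preinstall": "node setup_bun.js", "test": "jest"},
})
hooks = install_hooks(sample)
```

A non-empty result is not proof of compromise, since many legitimate packages use install hooks, but it narrows down which files to read first.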

Nov 05, 2025

Elves on npm

A surge of auto-generated "elf-stats" npm packages is being published from new accounts, with a new package appearing every two minutes. The packages contain simple malware variants and are being rapidly removed by npm. At least 420 unique packages have been identified, and some mention a capture-the-flag challenge or test.

Jul 04, 2025

RubyGems Automation-Tool Infostealer

Since at least March 2023, a threat actor using multiple aliases uploaded 60 malicious gems to RubyGems that masquerade as automation tools (Instagram, TikTok, Twitter, Telegram, WordPress, and Naver). The gems display a Korean Glimmer-DSL-LibUI login window, then exfiltrate the entered username/password and the host's MAC address via HTTP POST to threat actor-controlled infrastructure.

Mar 13, 2025

North Korea's Contagious Interview Campaign

Since late 2024, we have tracked hundreds of malicious npm packages and supporting infrastructure tied to North Korea's Contagious Interview operation, with tens of thousands of downloads targeting developers and tech job seekers. The threat actors run a factory-style playbook: recruiter lures and fake coding tests, polished GitHub templates, and typosquatted or deceptive dependencies that install or import into real projects.

Jul 23, 2024

Network Reconnaissance Campaign

A malicious npm supply chain attack leveraged 60 packages across three disposable npm accounts to fingerprint developer workstations and CI/CD servers during installation. Each package embedded a compact postinstall script that collected hostnames, internal and external IP addresses, DNS resolvers, usernames, home and working directories, and package metadata, then exfiltrated this data as a JSON blob to a hardcoded Discord webhook.
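A hardcoded Discord webhook is easy to search for in a script's source text. The sketch below is a minimal example of that idea, assuming the common `https://discord.com/api/webhooks/<id>/<token>` URL shape; the pattern and the name `find_discord_webhooks` are illustrative assumptions, and obfuscated or encoded URLs would not be caught.

```python
import re

# Assumed URL shape: optional canary/ptb subdomain, discord.com or
# discordapp.com, optional API version, numeric id, then a token.
WEBHOOK_RE = re.compile(
    r"https://(?:canary\.|ptb\.)?discord(?:app)?\.com"
    r"/api/(?:v\d+/)?webhooks/\d+/[\w-]+"
)


def find_discord_webhooks(source: str) -> list:
    """Return every Discord webhook URL found in the given source text."""
    return WEBHOOK_RE.findall(source)


# Made-up webhook id and token, for illustration only.
sample = 'const url = "https://discord.com/api/webhooks/123456789012345678/abcDEF_123-xyz";'
matches = find_discord_webhooks(sample)
```

Running this over the install scripts of a dependency tree gives a quick list of files that post to Discord, which can then be reviewed by hand.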

Get our latest security research, open source insights, and product updates.

Company News

Socket has acquired Secure Annex to expand extension security across browsers, IDEs, and AI tools.

Research / Security News

Socket is tracking cloned Open VSX extensions tied to GlassWorm, with several updated from benign-looking sleepers into malware delivery vehicles.