Product

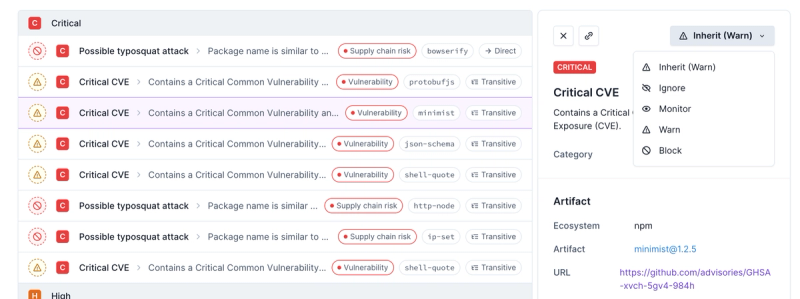

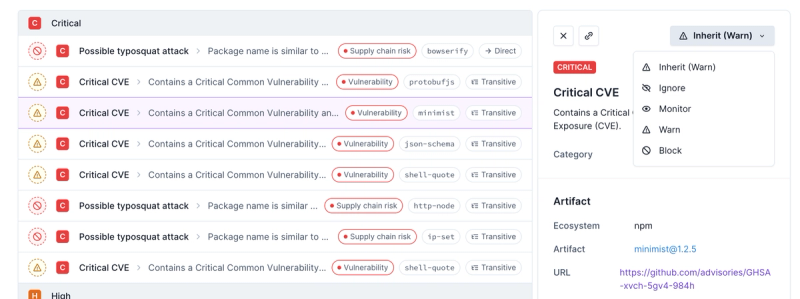

Introducing Enhanced Alert Actions and Triage Functionality

Socket now supports four distinct alert actions instead of the previous two, and alert triaging allows users to override the actions taken for all individual alerts.

@aws-cdk/aws-applicationautoscaling


Changelog

1.72.0 (2020-11-06)

- enableHttpEndpoint renamed to enableDataApi
- outputLocation in the experimental Athena StartQueryExecution has been changed to s3.Location from string
- Environment from attributes (#10932) (d395b5e), closes #10931
- --no-previous-parameters incorrectly skips updates (#11288) (1bfc649)

Readme

Application AutoScaling is used to configure autoscaling for all services other than EC2 instances. For example, you will use this to scale ECS tasks, DynamoDB capacity, Spot Fleet sizes, Comprehend document classification endpoints, Lambda function provisioned concurrency, and more.

As a CDK user, you will probably not have to interact with this library directly; instead, it will be used by other construct libraries to offer AutoScaling features for their own constructs.

This document will describe the general autoscaling features and concepts; your particular service may offer only a subset of these.

Resources can offer one or more attributes to autoscale, typically representing some capacity dimension of the underlying service. For example, a DynamoDB Table offers autoscaling of the read and write capacity of the table proper and its Global Secondary Indexes, an ECS Service offers autoscaling of its task count, an RDS Aurora cluster offers scaling of its replica count, and so on.

When you enable autoscaling for an attribute, you specify a minimum and a maximum value for the capacity. AutoScaling policies that respond to metrics will never go higher or lower than the indicated capacity (but scheduled scaling actions might, see below).
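As a rough illustration of the capacity bounds described above, the following sketch clamps a metric-driven change to the configured range. The names `CapacityRange` and `applyMetricScaling` are hypothetical and exist only for this example; they are not part of the construct library.

```typescript
// Illustrative model only: these names are hypothetical, not CDK APIs.
interface CapacityRange {
  minCapacity: number;
  maxCapacity: number;
}

// Apply a metric-driven capacity change, then clamp the result to the range.
function applyMetricScaling(current: number, change: number, range: CapacityRange): number {
  const desired = current + change;
  return Math.min(range.maxCapacity, Math.max(range.minCapacity, desired));
}

const exampleRange: CapacityRange = { minCapacity: 5, maxCapacity: 100 };
console.log(applyMetricScaling(99, 3, exampleRange)); // 100 (capped at maxCapacity)
console.log(applyMetricScaling(5, -1, exampleRange)); // 5 (held at minCapacity)
console.log(applyMetricScaling(50, 3, exampleRange)); // 53
```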

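There are three ways to scale your capacity:

- In response to a metric (step scaling): capacity changes in fixed steps as the metric crosses thresholds you configure.
- By trying to keep a metric around a target value (target tracking scaling).
- On a schedule (scheduled scaling).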

The general pattern of autoscaling will look like this:

    const capacity = resource.autoScaleCapacity({
      minCapacity: 5,
      maxCapacity: 100
    });

    // Enable a type of metric scaling and/or schedule scaling
    capacity.scaleOnMetric(...);
    capacity.scaleToTrackMetric(...);
    capacity.scaleOnSchedule(...);

Step scaling scales in and out in deterministic steps that you configure, in response to metric values. For example, your scaling strategy to scale in response to CPU usage might look like this:

     Scaling        -1          (no change)          +1       +3
                │        │                       │        │        │
                ├────────┼───────────────────────┼────────┼────────┤
                │        │                       │        │        │
    CPU usage   0%      10%                     50%       70%     100%

(Note that this is not necessarily a recommended scaling strategy, but it's a possible one. You will have to determine what thresholds are right for you.)

You would configure it like this:

    capacity.scaleOnMetric('ScaleToCPU', {
      metric: service.metricCpuUtilization(),
      scalingSteps: [
        { upper: 10, change: -1 },
        { lower: 50, change: +1 },
        { lower: 70, change: +3 },
      ],

      // Change this to AdjustmentType.PERCENT_CHANGE_IN_CAPACITY to interpret the
      // 'change' numbers above as percentages instead of capacity counts.
      adjustmentType: autoscaling.AdjustmentType.CHANGE_IN_CAPACITY,
    });

The AutoScaling construct library will create the required CloudWatch alarms and AutoScaling policies for you.
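To make the step table concrete, here is a minimal sketch of how a metric value could map to a capacity change. The `ScalingStep` shape mirrors the `scalingSteps` entries above, but `fillBounds` and `changeFor` are hypothetical helpers. Treating each range as half-open (lower bound inclusive, upper bound exclusive) is an assumption made for this example, not documented behavior of the construct library.

```typescript
// Illustrative model only: not the construct library's implementation.
interface ScalingStep {
  lower?: number; // lower bound of the metric range (assumed inclusive)
  upper?: number; // upper bound of the metric range (assumed exclusive)
  change: number; // capacity units to add (positive) or remove (negative)
}

// Fill in missing bounds from neighbouring steps.
// Steps are expected to be listed in ascending metric order.
function fillBounds(steps: ScalingStep[]): Required<ScalingStep>[] {
  return steps.map((step, i) => ({
    lower: step.lower ?? steps[i - 1]?.upper ?? -Infinity,
    upper: step.upper ?? steps[i + 1]?.lower ?? Infinity,
    change: step.change,
  }));
}

// Return the capacity change for a metric value, or 0 if no step covers it.
function changeFor(steps: ScalingStep[], metricValue: number): number {
  const match = fillBounds(steps).find(
    (s) => metricValue >= s.lower && metricValue < s.upper,
  );
  return match ? match.change : 0;
}

const cpuSteps: ScalingStep[] = [
  { upper: 10, change: -1 },
  { lower: 50, change: +1 },
  { lower: 70, change: +3 },
];

console.log(changeFor(cpuSteps, 5));  // -1
console.log(changeFor(cpuSteps, 30)); // 0 (falls in the gap between 10% and 50%)
console.log(changeFor(cpuSteps, 60)); // 1
console.log(changeFor(cpuSteps, 85)); // 3
```

The gap between 10% and 50% is deliberately left without a step, which is what produces the "no change" band in the diagram.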

Target tracking scaling scales in and out in order to keep a metric (typically representing utilization) around a value you prefer. Which metrics you can use is heavily service-dependent, so different services will have different methods to set up target tracking scaling.

The following example configures the read capacity of a DynamoDB table to stay at around 60% utilization:

    const readCapacity = table.autoScaleReadCapacity({
      minCapacity: 10,
      maxCapacity: 1000
    });

    readCapacity.scaleOnUtilization({
      targetUtilizationPercent: 60
    });
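The idea behind target tracking can be approximated with a simple proportional model: if utilization is above the target, capacity grows by the same ratio, and the result stays within the configured bounds. This is only a simplified sketch for intuition. The function `estimateTargetCapacity` is hypothetical, and the service's actual algorithm is not described in this document and may differ.

```typescript
// Simplified proportional model of target tracking (an assumption for
// illustration; not the algorithm the service actually uses).
function estimateTargetCapacity(
  currentCapacity: number,
  currentUtilizationPercent: number,
  targetUtilizationPercent: number,
  minCapacity: number,
  maxCapacity: number,
): number {
  // Capacity needed so the same load would sit at the target utilization.
  const needed = Math.ceil(
    (currentCapacity * currentUtilizationPercent) / targetUtilizationPercent,
  );
  return Math.min(maxCapacity, Math.max(minCapacity, needed));
}

// Bounds and target taken from the DynamoDB example above.
console.log(estimateTargetCapacity(100, 90, 60, 10, 1000));  // 150
console.log(estimateTargetCapacity(100, 30, 60, 10, 1000));  // 50
console.log(estimateTargetCapacity(1000, 90, 60, 10, 1000)); // 1000 (capped at maxCapacity)
```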

Scheduled scaling is used to change capacities based on time. It works by changing the minCapacity and maxCapacity of the attribute, and so can be used for two purposes:

- Scale in and out on a schedule by setting the minCapacity high or the maxCapacity low.
- Still allow the regular scaling actions to do their job, but restrict the range they can scale over (by setting both minCapacity and maxCapacity but changing their range over time).

The following schedule expressions can be used:

- at(yyyy-mm-ddThh:mm:ss) -- scale at a particular moment in time
- rate(value unit) -- scale every minute/hour/day
- cron(mm hh dd mm dow) -- scale on arbitrary schedules

Of these, the cron expression is the most useful but also the most complicated. The Schedule class has a cron method to help build cron expressions.
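For the two simpler expression forms, a small sketch of how the strings could be assembled may help. The helpers `atExpression` and `rateExpression` are hypothetical and written only for this example; they are not library APIs. The sketch assumes UTC timestamps and the AWS convention that the unit is singular when the value is 1.

```typescript
// Hypothetical helpers for illustration; not part of the construct library.
type RateUnit = 'minute' | 'hour' | 'day';

// Build an at(yyyy-mm-ddThh:mm:ss) expression from a Date, using UTC.
function atExpression(when: Date): string {
  // toISOString() yields e.g. 2020-11-06T08:00:00.000Z; keep the first 19 characters.
  return `at(${when.toISOString().slice(0, 19)})`;
}

// Build a rate(value unit) expression; the unit is pluralized unless value is 1.
function rateExpression(value: number, unit: RateUnit): string {
  if (!Number.isInteger(value) || value < 1) {
    throw new Error('rate value must be a positive integer');
  }
  return `rate(${value} ${value === 1 ? unit : unit + 's'})`;
}

console.log(atExpression(new Date(Date.UTC(2020, 10, 6, 8, 0, 0)))); // at(2020-11-06T08:00:00)
console.log(rateExpression(1, 'hour'));   // rate(1 hour)
console.log(rateExpression(5, 'minute')); // rate(5 minutes)
```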

The following example scales the fleet out in the morning, and lets natural scaling take over at night:

    const capacity = resource.autoScaleCapacity({
      minCapacity: 1,
      maxCapacity: 50,
    });

    capacity.scaleOnSchedule('PrescaleInTheMorning', {
      schedule: autoscaling.Schedule.cron({ hour: '8', minute: '0' }),
      minCapacity: 20,
    });

    capacity.scaleOnSchedule('AllowDownscalingAtNight', {
      schedule: autoscaling.Schedule.cron({ hour: '20', minute: '0' }),
      minCapacity: 1
    });
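To see how these two scheduled actions reshape the allowed range over a day, the sketch below replays daily actions in the order they would have fired and reports the bounds in effect at a given hour. `Bounds`, `DailyAction`, and `boundsAtHour` are hypothetical names for this illustration, and the model assumes every action repeats daily on the hour.

```typescript
// Illustrative model only: not the construct library's implementation.
interface Bounds {
  minCapacity: number;
  maxCapacity: number;
}

interface DailyAction {
  hour: number; // hour of day (0-23) at which the action fires
  minCapacity?: number;
  maxCapacity?: number;
}

// Bounds in effect at `hour`, assuming the schedule has been running for
// at least a day: actions later than `hour` last fired yesterday, so they
// are replayed first, followed by the actions that already fired today.
function boundsAtHour(initial: Bounds, actions: DailyAction[], hour: number): Bounds {
  const sorted = [...actions].sort((a, b) => a.hour - b.hour);
  const replay = [
    ...sorted.filter((a) => a.hour > hour),
    ...sorted.filter((a) => a.hour <= hour),
  ];
  return replay.reduce(
    (bounds, action) => ({
      minCapacity: action.minCapacity ?? bounds.minCapacity,
      maxCapacity: action.maxCapacity ?? bounds.maxCapacity,
    }),
    initial,
  );
}

const initialBounds: Bounds = { minCapacity: 1, maxCapacity: 50 };
const dailyActions: DailyAction[] = [
  { hour: 8, minCapacity: 20 }, // PrescaleInTheMorning
  { hour: 20, minCapacity: 1 }, // AllowDownscalingAtNight
];

console.log(boundsAtHour(initialBounds, dailyActions, 12)); // { minCapacity: 20, maxCapacity: 50 }
console.log(boundsAtHour(initialBounds, dailyActions, 22)); // { minCapacity: 1, maxCapacity: 50 }
```

Between 08:00 and 20:00 the floor is 20, so metric-based policies can only move capacity between 20 and 50. Outside that window they can scale all the way down to 1.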

The following example autoscales the provisioned concurrency of a Lambda function version, tracking a provisioned concurrency utilization of 0.9:

    // Runs inside a Stack or Construct (it uses `this`) and assumes the modules
    // are imported as `lambda`, `apigateway`, and `applicationautoscaling`.
    const handler = new lambda.Function(this, 'MyFunction', {
      runtime: lambda.Runtime.PYTHON_3_7,
      handler: 'index.handler',
      code: new lambda.InlineCode(`
    import json, time
    def handler(event, context):
        time.sleep(1)
        return {
            'statusCode': 200,
            'body': json.dumps('Hello CDK from Lambda!')
        }`),
      reservedConcurrentExecutions: 2,
    });

    const fnVer = handler.addVersion('CDKLambdaVersion', undefined, 'demo alias', 10);

    new apigateway.LambdaRestApi(this, 'API', { handler: fnVer });

    // Register the function version's provisioned concurrency as a scalable target.
    const target = new applicationautoscaling.ScalableTarget(this, 'ScalableTarget', {
      serviceNamespace: applicationautoscaling.ServiceNamespace.LAMBDA,
      maxCapacity: 100,
      minCapacity: 10,
      resourceId: `function:${handler.functionName}:${fnVer.version}`,
      scalableDimension: 'lambda:function:ProvisionedConcurrency',
    });

    // Keep provisioned concurrency utilization around 0.9.
    target.scaleToTrackMetric('PceTracking', {
      targetValue: 0.9,
      predefinedMetric: applicationautoscaling.PredefinedMetric.LAMBDA_PROVISIONED_CONCURRENCY_UTILIZATION,
    });

FAQs

The CDK Construct Library for AWS::ApplicationAutoScaling

The npm package @aws-cdk/aws-applicationautoscaling receives a total of 109,724 weekly downloads. As such, it is classified as popular.

We found that @aws-cdk/aws-applicationautoscaling has an unhealthy version release cadence and level of project activity because the last version was released a year ago. It has 4 open source maintainers collaborating on the project.


