Product

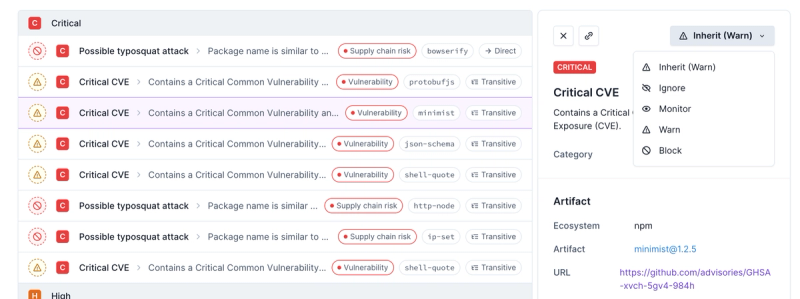

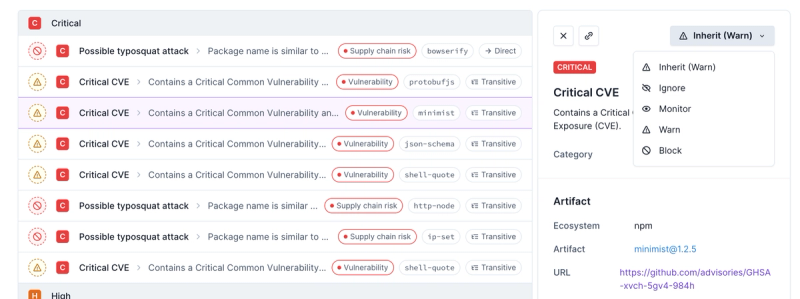

@aws-cdk/aws-s3

Changelog

1.72.0 (2020-11-06)

enableHttpEndpoint renamed to enableDataApi

outputLocation in the experimental Athena StartQueryExecution has been changed to s3.Location from string

Environment from attributes (#10932) (d395b5e), closes #10931

--no-previous-parameters incorrectly skips updates (#11288) (1bfc649)

Readme

Define an unencrypted S3 bucket.

new Bucket(this, 'MyFirstBucket');

Bucket constructs expose the following deploy-time attributes:

bucketArn - the ARN of the bucket (e.g. arn:aws:s3:::bucket_name)

bucketName - the name of the bucket (e.g. bucket_name)

bucketWebsiteUrl - the website URL of the bucket (e.g. http://bucket_name.s3-website-us-west-1.amazonaws.com)

bucketDomainName - the URL of the bucket (e.g. bucket_name.s3.amazonaws.com)

bucketDualStackDomainName - the dual-stack URL of the bucket (e.g. bucket_name.s3.dualstack.eu-west-1.amazonaws.com)

bucketRegionalDomainName - the regional URL of the bucket (e.g. bucket_name.s3.eu-west-1.amazonaws.com)

arnForObjects(pattern) - the ARN of an object or objects within the bucket (e.g. arn:aws:s3:::bucket_name/exampleobject.png or arn:aws:s3:::bucket_name/Development/*)

urlForObject(key) - the HTTP URL of an object within the bucket (e.g. https://s3.cn-north-1.amazonaws.com.cn/china-bucket/mykey)

virtualHostedUrlForObject(key) - the virtual-hosted style HTTP URL of an object within the bucket (e.g. https://china-bucket.s3.cn-north-1.amazonaws.com.cn/mykey)

s3UrlForObject(key) - the S3 URL of an object within the bucket (e.g. s3://bucket/mykey)

Define a KMS-encrypted bucket:

const bucket = new Bucket(this, 'MyKmsEncryptedBucket', {
  encryption: BucketEncryption.KMS
});

// you can access the encryption key:
assert(bucket.encryptionKey instanceof kms.Key);

You can also supply your own key:

const myKmsKey = new kms.Key(this, 'MyKey');

const bucket = new Bucket(this, 'MyEncryptedBucket', {
  encryption: BucketEncryption.KMS,
  encryptionKey: myKmsKey
});

assert(bucket.encryptionKey === myKmsKey);

Use BucketEncryption.KMS_MANAGED to use the S3 master KMS key. In this case no key is exposed on the bucket construct:

const bucket = new Bucket(this, 'Buck', {
  encryption: BucketEncryption.KMS_MANAGED
});

assert(bucket.encryptionKey == null);

A bucket policy will be automatically created for the bucket upon the first call to addToResourcePolicy(statement):

const bucket = new Bucket(this, 'MyBucket');

bucket.addToResourcePolicy(new iam.PolicyStatement({
  actions: ['s3:GetObject'],
  resources: [bucket.arnForObjects('file.txt')],
  principals: [new iam.AccountRootPrincipal()],
}));

The bucket policy can be directly accessed after creation to add statements or adjust the removal policy.

bucket.policy?.applyRemovalPolicy(RemovalPolicy.RETAIN);
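To make the statement above more concrete, the following sketch shows the shape of the object ARN and of the JSON statement that such a call describes. It is a standalone illustration in plain TypeScript, not the CDK's implementation: `objectArn` and `readStatement` are hypothetical helper names, the `aws` partition is assumed, and the account ID is a placeholder. In a real CDK app the bucket name and account are resolved at deploy time.

```typescript
// Hypothetical helpers; they only illustrate the ARN and statement shapes.
interface PolicyStatementJson {
  Effect: 'Allow' | 'Deny';
  Action: string[];
  Resource: string[];
  Principal: { AWS: string };
}

// Follows the documented format arn:aws:s3:::bucket_name/<pattern>
// (assumption: the standard "aws" partition).
function objectArn(bucketName: string, keyPattern: string): string {
  return `arn:aws:s3:::${bucketName}/${keyPattern}`;
}

// Builds a read-only statement for the account root principal.
function readStatement(
  bucketName: string,
  keyPattern: string,
  accountId: string,
): PolicyStatementJson {
  return {
    Effect: 'Allow',
    Action: ['s3:GetObject'],
    Resource: [objectArn(bucketName, keyPattern)],
    Principal: { AWS: `arn:aws:iam::${accountId}:root` },
  };
}

// Placeholder values for illustration only.
const statement = readStatement('bucket_name', 'file.txt', '123456789012');
```

A pattern such as `Development/*` works the same way and yields an ARN covering every object under that prefix.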

Most of the time, you won't have to manipulate the bucket policy directly. Instead, buckets have "grant" methods that give prepackaged sets of permissions to other resources. For example:

const fn = new lambda.Function(this, 'Lambda', { /* ... */ });
const bucket = new Bucket(this, 'MyBucket');
bucket.grantReadWrite(fn);

This gives the Lambda function's execution role permission to read from and write to the bucket.

To use a bucket in a different stack in the same CDK application, pass the bucket object to the other stack, for example through the consuming stack's props.

To import an existing bucket into your CDK application, use the Bucket.fromBucketAttributes factory method. This method accepts BucketAttributes, which describes the properties of an already existing bucket:

const bucket = Bucket.fromBucketAttributes(this, 'ImportedBucket', {
  bucketArn: 'arn:aws:s3:::my-bucket'
});

// now you can just call methods on the bucket

bucket.grantReadWrite(user);

Alternatively, short-hand factories are available as Bucket.fromBucketName and Bucket.fromBucketArn, which will derive all bucket attributes from the bucket name or ARN respectively:

const byName = Bucket.fromBucketName(this, 'BucketByName', 'my-bucket');

const byArn = Bucket.fromBucketArn(this, 'BucketByArn', 'arn:aws:s3:::my-bucket');

The bucket's region defaults to the current stack's region, but can also be explicitly set in cases where one of the bucket's regional properties needs to contain the correct values.

const myCrossRegionBucket = Bucket.fromBucketAttributes(this, 'CrossRegionImport', {
  bucketArn: 'arn:aws:s3:::my-bucket',
  region: 'us-east-1',
});

// myCrossRegionBucket.bucketRegionalDomainName === 'my-bucket.s3.us-east-1.amazonaws.com'
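The effect of the region on regional properties can be sketched in plain TypeScript. This is an illustration of the naming scheme shown in the comment above, not the CDK's code: `bucketNameFromArn` and `regionalDomainName` are hypothetical helpers, and the standard `aws` partition with the `amazonaws.com` suffix is assumed.

```typescript
// Hypothetical helpers, assuming the "aws" partition and the amazonaws.com suffix.
const S3_ARN_PREFIX = 'arn:aws:s3:::';

// Extracts the bucket name from a bucket ARN such as arn:aws:s3:::my-bucket.
function bucketNameFromArn(bucketArn: string): string {
  if (bucketArn.indexOf(S3_ARN_PREFIX) !== 0) {
    throw new Error(`Not an S3 bucket ARN: ${bucketArn}`);
  }
  return bucketArn.slice(S3_ARN_PREFIX.length);
}

// The regional domain name embeds the region, so a wrong region gives a wrong host.
function regionalDomainName(bucketArn: string, region: string): string {
  return `${bucketNameFromArn(bucketArn)}.s3.${region}.amazonaws.com`;
}

const imported = regionalDomainName('arn:aws:s3:::my-bucket', 'us-east-1');
```

Because the region is part of the host name, importing a bucket without its actual region would produce a domain name that points at the stack's region instead.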

The Amazon S3 notification feature enables you to receive notifications when certain events happen in your bucket as described under S3 Bucket Notifications of the S3 Developer Guide.

To subscribe to bucket notifications, use the bucket.addEventNotification method. The bucket.addObjectCreatedNotification and bucket.addObjectRemovedNotification methods can also be used for these common use cases.

The following example will subscribe an SNS topic to be notified of all s3:ObjectCreated:* events:

import * as sns from '@aws-cdk/aws-sns';
import * as s3n from '@aws-cdk/aws-s3-notifications';

const myTopic = new sns.Topic(this, 'MyTopic');
bucket.addEventNotification(s3.EventType.OBJECT_CREATED, new s3n.SnsDestination(myTopic));

This call will also ensure that the topic policy can accept notifications for this specific bucket.

Supported S3 notification targets are exposed by the @aws-cdk/aws-s3-notifications package.

It is also possible to specify S3 object key filters when subscribing. The following example will notify myQueue when objects that have the foo/ prefix and the .jpg suffix are removed from the bucket.

bucket.addEventNotification(s3.EventType.OBJECT_REMOVED,
  new s3n.SqsDestination(myQueue),
  { prefix: 'foo/', suffix: '.jpg' });
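The filter semantics can be illustrated with a small standalone function. This is only a sketch of how a prefix/suffix filter selects object keys; the matching itself is done by S3, and `KeyFilter` and `matchesFilter` are hypothetical names introduced here.

```typescript
// Hypothetical model of a notification key filter; both parts are optional.
interface KeyFilter {
  prefix?: string;
  suffix?: string;
}

// Returns true when the key starts with the prefix and ends with the suffix.
// An omitted prefix or suffix matches every key.
function matchesFilter(key: string, filter: KeyFilter): boolean {
  const { prefix = '', suffix = '' } = filter;
  const hasPrefix = key.slice(0, prefix.length) === prefix;
  const hasSuffix =
    key.length >= suffix.length &&
    key.slice(key.length - suffix.length) === suffix;
  return hasPrefix && hasSuffix;
}

const filter: KeyFilter = { prefix: 'foo/', suffix: '.jpg' };
const notified = ['foo/cat.jpg', 'foo/cat.png', 'bar/cat.jpg'].filter((key) =>
  matchesFilter(key, filter),
);
```

With the filter from the example above, only `foo/cat.jpg` would trigger a notification; the other two keys fail the suffix or the prefix check.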

Use blockPublicAccess to specify block public access settings on the bucket.

Enable all block public access settings:

const bucket = new Bucket(this, 'MyBlockedBucket', {
  blockPublicAccess: BlockPublicAccess.BLOCK_ALL
});

Block and ignore public ACLs:

const bucket = new Bucket(this, 'MyBlockedBucket', {
  blockPublicAccess: BlockPublicAccess.BLOCK_ACLS
});

Alternatively, specify the settings manually:

const bucket = new Bucket(this, 'MyBlockedBucket', {
  blockPublicAccess: new BlockPublicAccess({ blockPublicPolicy: true })
});

When blockPublicPolicy is set to true, grantPublicRead() throws an error.
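As a rough mental model, the presets above correspond to combinations of the four S3 block public access flags. The sketch below is plain TypeScript and not the CDK's own classes: `BlockSettings`, the two constants, and `assertPublicReadAllowed` are hypothetical names, and the error text is made up for the illustration.

```typescript
// Hypothetical model of the four S3 block public access flags.
interface BlockSettings {
  blockPublicAcls?: boolean;
  ignorePublicAcls?: boolean;
  blockPublicPolicy?: boolean;
  restrictPublicBuckets?: boolean;
}

// "Enable all block public access settings".
const BLOCK_ALL_SETTINGS: BlockSettings = {
  blockPublicAcls: true,
  ignorePublicAcls: true,
  blockPublicPolicy: true,
  restrictPublicBuckets: true,
};

// "Block and ignore public ACLs".
const BLOCK_ACLS_SETTINGS: BlockSettings = {
  blockPublicAcls: true,
  ignorePublicAcls: true,
};

// Models the rule that a public-read grant is rejected when public policies are blocked.
function assertPublicReadAllowed(settings: BlockSettings): void {
  if (settings.blockPublicPolicy) {
    throw new Error('public read grant rejected: blockPublicPolicy is enabled');
  }
}
```

Under this model a public-read grant passes the check with the ACL-only preset, while the block-all preset and a manual `{ blockPublicPolicy: true }` both cause the grant to be rejected.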

Use serverAccessLogsBucket to describe where server access logs are to be stored.

const accessLogsBucket = new Bucket(this, 'AccessLogsBucket');

const bucket = new Bucket(this, 'MyBucket', {
  serverAccessLogsBucket: accessLogsBucket,
});

It's also possible to specify a prefix for Amazon S3 to assign to all log object keys.

const bucket = new Bucket(this, 'MyBucket', {
  serverAccessLogsBucket: accessLogsBucket,
  serverAccessLogsPrefix: 'logs'
});

An inventory contains a list of the objects in the source bucket and metadata for each object. The inventory lists are stored in the destination bucket as a CSV file compressed with GZIP, as an Apache optimized row columnar (ORC) file compressed with ZLIB, or as an Apache Parquet (Parquet) file compressed with Snappy.

You can configure multiple inventory lists for a bucket. You can configure what object metadata to include in the inventory, whether to list all object versions or only current versions, where to store the inventory list file output, and whether to generate the inventory on a daily or weekly basis.

const inventoryBucket = new s3.Bucket(this, 'InventoryBucket');

const dataBucket = new s3.Bucket(this, 'DataBucket', {
  inventories: [
    {
      frequency: s3.InventoryFrequency.DAILY,
      includeObjectVersions: s3.InventoryObjectVersion.CURRENT,
      destination: {
        bucket: inventoryBucket,
      },
    },
    {
      frequency: s3.InventoryFrequency.WEEKLY,
      includeObjectVersions: s3.InventoryObjectVersion.ALL,
      destination: {
        bucket: inventoryBucket,
        prefix: 'with-all-versions',
      },
    }
  ]
});

If the destination bucket is created as part of the same CDK application, the necessary permissions will be automatically added to the bucket policy.

However, if you use an imported bucket (i.e. one obtained through Bucket.fromXXX()), you'll have to make sure its bucket policy contains the following policy document:

{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Sid": "InventoryAndAnalyticsExamplePolicy",
      "Effect": "Allow",
      "Principal": { "Service": "s3.amazonaws.com" },
      "Action": "s3:PutObject",
      "Resource": ["arn:aws:s3:::destinationBucket/*"]
    }
  ]
}
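If you manage the imported bucket's policy outside the CDK, a small helper can generate this document for a given destination bucket. The sketch below simply parameterizes the JSON shown above; `inventoryDestinationPolicy` is a hypothetical function name, and the `aws` partition is assumed.

```typescript
// Hypothetical helper that fills the destination bucket name into the
// policy document shown above (assumes the "aws" partition).
function inventoryDestinationPolicy(destinationBucketName: string) {
  return {
    Version: '2012-10-17',
    Statement: [
      {
        Sid: 'InventoryAndAnalyticsExamplePolicy',
        Effect: 'Allow',
        Principal: { Service: 's3.amazonaws.com' },
        Action: 's3:PutObject',
        Resource: [`arn:aws:s3:::${destinationBucketName}/*`],
      },
    ],
  };
}

// Serialize for use with whatever tool applies the bucket policy.
const policyJson = JSON.stringify(inventoryDestinationPolicy('destinationBucket'), null, 2);
```

The resulting string can be reviewed or merged into the bucket's existing policy by hand; how it is applied depends on your tooling.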

You can use the following two properties to specify the bucket redirection policy. Please note that they cannot both be applied to the same bucket.

You can statically redirect all requests to a given bucket URL or any other host name with websiteRedirect:

const bucket = new Bucket(this, 'MyRedirectedBucket', {
  websiteRedirect: { hostName: 'www.example.com' }
});

Alternatively, you can also define multiple websiteRoutingRules, to define complex, conditional redirections:

const bucket = new Bucket(this, 'MyRedirectedBucket', {
  websiteRoutingRules: [{
    hostName: 'www.example.com',
    httpRedirectCode: '302',
    protocol: RedirectProtocol.HTTPS,
    replaceKey: ReplaceKey.prefixWith('test/'),
    condition: {
      httpErrorCodeReturnedEquals: '200',
      keyPrefixEquals: 'prefix',
    }
  }]
});

To put files into a bucket as part of a deployment (for example, to host a website), see the @aws-cdk/aws-s3-deployment package, which provides a resource that can do just that.

S3 provides two types of URLs for accessing objects via HTTP(S): path-style and virtual hosted-style. Path-style is the older form and will be deprecated, so we recommend using virtual hosted-style URLs for newly created buckets.

You can generate both of them:

bucket.urlForObject('objectname'); // Path-Style URL

bucket.virtualHostedUrlForObject('objectname'); // Virtual Hosted-Style URL

bucket.virtualHostedUrlForObject('objectname', { regional: false }); // Virtual Hosted-Style URL but non-regional
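The difference between the styles is only where the bucket name appears in the URL. The following standalone sketch builds each form from its parts; it illustrates the URL layouts and is not the CDK's implementation. `pathStyleUrl` and `virtualHostedUrl` are hypothetical helpers, the `urlSuffix` parameter is an assumption used to cover partitions such as China, and object keys are not URL-encoded here.

```typescript
// Hypothetical helpers that only illustrate the URL layouts.

// Path-style: the bucket name is the first path segment.
function pathStyleUrl(
  bucket: string,
  key: string,
  region: string,
  urlSuffix: string = 'amazonaws.com',
): string {
  return `https://s3.${region}.${urlSuffix}/${bucket}/${key}`;
}

// Virtual hosted-style: the bucket name is part of the host name.
// Omitting the region gives the non-regional form.
function virtualHostedUrl(
  bucket: string,
  key: string,
  region?: string,
  urlSuffix: string = 'amazonaws.com',
): string {
  const host = region
    ? `${bucket}.s3.${region}.${urlSuffix}`
    : `${bucket}.s3.${urlSuffix}`;
  return `https://${host}/${key}`;
}

const pathStyle = pathStyleUrl('china-bucket', 'mykey', 'cn-north-1', 'amazonaws.com.cn');
const regional = virtualHostedUrl('my-bucket', 'objectname', 'eu-west-1');
const nonRegional = virtualHostedUrl('my-bucket', 'objectname');
```

Here `pathStyle` reproduces the `urlForObject` example from the attribute list, while `regional` and `nonRegional` differ only in whether the region appears in the host name.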

FAQs

The CDK Construct Library for AWS::S3

The npm package @aws-cdk/aws-s3 receives a total of 128,319 weekly downloads. As such, @aws-cdk/aws-s3 popularity was classified as popular.

We found that @aws-cdk/aws-s3 demonstrated an unhealthy version release cadence and project activity because the last version was released a year ago. It has 4 open source maintainers collaborating on the project.
