Research

/Security News

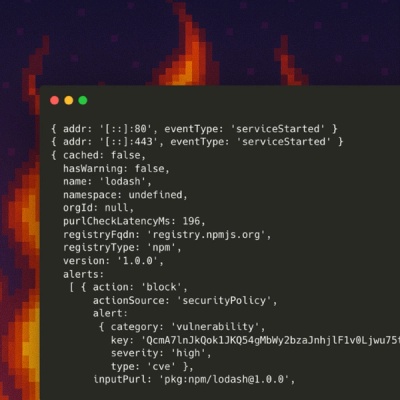

10 npm Typosquatted Packages Deploy Multi-Stage Credential Harvester

Socket researchers found 10 typosquatted npm packages that auto-run on install, show fake CAPTCHAs, fingerprint by IP, and deploy a credential stealer.

@json2csv/plainjs


Fast and highly configurable JSON to CSV converter. It fully supports conversion following the RFC 4180 specification as well as other similar text-delimited formats such as TSV.

@json2csv/plainjs exposes plain JavaScript modules of json2csv which can be used in Node.js, the browser or Deno.
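As a rough illustration of what RFC 4180 conformance involves, the sketch below applies the specification's quoting rule: a field containing the delimiter, a double quote, or a line break is wrapped in double quotes, and embedded double quotes are doubled. `quoteField` is a hypothetical helper written for this example, not json2csv's implementation, and the library's own quoting behavior may differ.

```javascript
// Hypothetical helper illustrating RFC 4180 quoting; not part of json2csv.
function quoteField(value, delimiter = ',') {
  const text = String(value);
  const needsQuotes =
    text.includes(delimiter) || text.includes('"') || /[\r\n]/.test(text);
  // Embedded double quotes are escaped by doubling them.
  return needsQuotes ? `"${text.replace(/"/g, '""')}"` : text;
}

// Use an arrow function so map's index argument is not taken as the delimiter.
const row = ['id', 'say "hi"', 'a,b'].map((v) => quoteField(v)).join(',');
console.log(row); // id,"say ""hi""","a,b"
```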

You can install json2csv as a dependency using NPM.

$ npm install --save @json2csv/plainjs

You can install json2csv as a dependency using Yarn.

$ yarn add @json2csv/plainjs

The json2csv plainjs modules are packaged as ES6 modules. If your browser supports modules, you can load them directly in the browser from the CDN.

You can import the latest version:

<script type="module">
  import { Parser } from 'https://cdn.jsdelivr.net/npm/@json2csv/plainjs/src/Parser.js';
  import { StreamParser } from 'https://cdn.jsdelivr.net/npm/@json2csv/plainjs/src/StreamParser.js';
</script>

You can also select a specific version:

<script type="module">
  import { Parser } from 'https://cdn.jsdelivr.net/npm/@json2csv/plainjs@6.0.0/src/Parser.js';
  import { StreamParser } from 'https://cdn.jsdelivr.net/npm/@json2csv/plainjs@6.0.0/src/StreamParser.js';
</script>

json2csv can be used programmatically as a synchronous converter.

This loads the entire JSON in memory and does all the processing in memory while blocking the JavaScript event loop. For that reason, there is rarely a good reason to use it unless your data is very small or your application doesn't do anything else.

import { Parser } from '@json2csv/plainjs';

try {
  const opts = {};
  const parser = new Parser(opts);
  // myData holds the JSON data to convert.
  const csv = parser.parse(myData);
  console.log(csv);
} catch (err) {
  console.error(err);
}

- fields <DataSelector[]> Defaults to top-level JSON attributes.
- transforms <Transform[]> Array of transforms to apply to the data. A transform is a function that receives a data record and returns a transformed record. Transforms are executed in order.
- formatters <Formatters> Object where each key is a JavaScript data type and its associated value is a formatter for that type.
- defaultValue <Any> Value to use when data is missing. Defaults to <empty> if not specified. (Overridden by fields[].default)
- delimiter <String> Column delimiter. Defaults to , if not specified.
- eol <String> Overrides the default OS line ending (i.e. \n on Unix and \r\n on Windows).
- header <Boolean> Determines whether or not the CSV file will contain a header row with the column titles. Defaults to true if not specified.
- includeEmptyRows <Boolean> Includes empty rows. Defaults to false.
- withBOM <Boolean> Adds a BOM character to the output. Defaults to false.

See https://juanjodiaz.github.io/json2csv/#/parsers/parser.
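To make the "executed in order" behavior of transforms concrete, here is a minimal sketch in plain JavaScript. It is not json2csv's internal code: `applyTransforms`, `addFullName`, and `dropLastName` are hypothetical names used only for illustration, and the sketch assumes each transform returns a single record.

```javascript
// Hypothetical helper: apply each transform in array order,
// feeding the output of one into the next.
function applyTransforms(record, transforms) {
  return transforms.reduce((current, transform) => transform(current), record);
}

// Hypothetical transforms: each receives a record and returns a new one.
const addFullName = (record) => ({
  ...record,
  fullName: `${record.firstName} ${record.lastName}`,
});
const dropLastName = ({ lastName, ...rest }) => rest;

const result = applyTransforms(
  { firstName: 'Ada', lastName: 'Lovelace' },
  [addFullName, dropLastName]
);
console.log(result); // { firstName: 'Ada', fullName: 'Ada Lovelace' }
```

Because order matters, swapping the two transforms would try to build fullName after lastName has already been removed.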

The synchronous API has the downside of loading the entire JSON array in memory and blocking JavaScript's event loop while processing the data. This means that your server won't be able to process more requests, or your UI will become unresponsive, while data is being processed. For those reasons, there is rarely a good reason to use it unless your data is very small or your application doesn't do anything else.

The async parser processes the data as it comes in, so you don't need the entire input data set loaded in memory and you can avoid blocking the event loop for too long. Thus, it's better suited for large datasets or systems with high concurrency.

The streaming API takes a second options argument to configure objectMode and ndjson mode. These options also support fine-tuning the underlying JSON parser.

The streaming API supports multiple callbacks to get the resulting CSV, errors, etc.
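For readers unfamiliar with NDJSON, it is a text format with one JSON document per line. The sketch below shows, in plain JavaScript and independently of json2csv, how such input can be consumed chunk by chunk without holding the whole data set in memory. `createNdjsonReader` is a hypothetical helper written for this example; it is not the parser that json2csv uses internally.

```javascript
// Hypothetical helper, not part of json2csv: collects complete NDJSON lines
// from arbitrary text chunks and hands each parsed record to onRecord.
function createNdjsonReader(onRecord) {
  let pending = ''; // text received after the last newline seen so far
  return {
    write(chunk) {
      const lines = (pending + chunk).split('\n');
      pending = lines.pop(); // the last piece may be an incomplete line
      for (const line of lines) {
        if (line.trim() !== '') onRecord(JSON.parse(line));
      }
    },
    end() {
      // Flush a final record that was not followed by a newline.
      if (pending.trim() !== '') onRecord(JSON.parse(pending));
      pending = '';
    },
  };
}

const records = [];
const reader = createNdjsonReader((record) => records.push(record));
// The second record is split across two chunks on purpose.
reader.write('{"id":1}\n{"id"');
reader.write(':2}\n{"id":3}');
reader.end();
console.log(records.map((r) => r.id)); // [ 1, 2, 3 ]
```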

import { StreamParser } from '@json2csv/plainjs';

const opts = {};
const asyncOpts = {};
const parser = new StreamParser(opts, asyncOpts);

let csv = '';
parser.onData = (chunk) => (csv += chunk.toString());
parser.onEnd = () => console.log(csv);
parser.onError = (err) => console.error(err);

// You can also listen for events on the conversion and see how the header or the lines are coming out.
parser.onHeader = (header) => console.log(header);
parser.onLine = (line) => console.log(line);

- ndjson <Boolean> Indicates that the data is in NDJSON format. Only effective when using the streaming API and not in object mode.
- fields <DataSelector[]> Defaults to top-level JSON attributes.
- transforms <Transform[]> Array of transforms to apply to the data. A transform is a function that receives a data record and returns a transformed record. Transforms are executed in order.
- formatters <Formatters> Object where each key is a JavaScript data type and its associated value is a formatter for that type.
- defaultValue <Any> Value to use when data is missing. Defaults to <empty> if not specified. (Overridden by fields[].default)
- delimiter <String> Column delimiter. Defaults to , if not specified.
- eol <String> Overrides the default OS line ending (i.e. \n on Unix and \r\n on Windows).
- header <Boolean> Determines whether or not the CSV file will contain a header row with the column titles. Defaults to true if not specified.
- includeEmptyRows <Boolean> Includes empty rows. Defaults to false.
- withBOM <Boolean> Adds a BOM character to the output. Defaults to false.

Options used by the underlying parsing library to process the binary or text stream.

Not relevant when running in objectMode.

Buffering is only relevant if you expect very large strings/numbers in your JSON.

See @streamparser/json for more details about buffering.

- stringBufferSize <number> Size of the buffer used to parse strings. Defaults to 0, which means no buffering. Minimum valid value is 4.
- numberBufferSize <number> Size of the buffer used to parse numbers. Defaults to 0, which means no buffering.

See https://juanjodiaz.github.io/json2csv/#/parsers/stream-parser.
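As a sketch of how these settings might be combined, the fragment below builds the two objects that the StreamParser constructor receives. The option names come from the lists above; the values are illustrative only, and placing ndjson in the first object is an assumption based on where it appears in those lists.

```javascript
// Illustrative values only; option names are taken from the lists above.
const opts = {
  ndjson: true,    // assumption: ndjson belongs with the first options object
  delimiter: '\t', // emit tab-separated output instead of commas
  header: true,
};

const asyncOpts = {
  stringBufferSize: 64, // buffer string parsing (the documented minimum is 4)
  numberBufferSize: 0,  // 0 leaves number buffering disabled
};

// These would then be passed as: new StreamParser(opts, asyncOpts)
```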

See LICENSE.md.

