Product

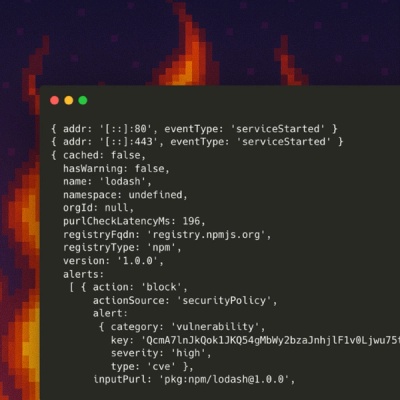

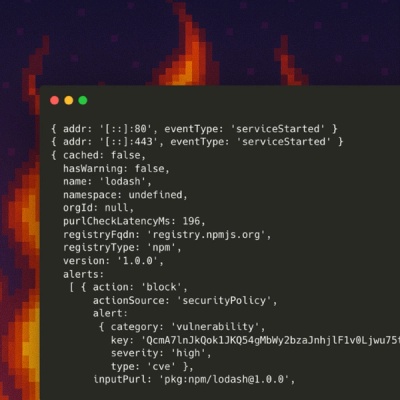

Introducing Socket Firewall Enterprise: Flexible, Configurable Protection for Modern Package Ecosystems

Socket Firewall Enterprise is now available with flexible deployment, configurable policies, and expanded language support.

@llumiverse/core

Provides a universal API to LLMs. Support for additional LLMs can be added by writing a driver.

LLumiverse is a universal interface for interacting with Large Language Models in the TypeScript/JavaScript ecosystem. It provides a lightweight, modular library for working with various LLMs and execution platforms.

It focuses solely on abstracting LLMs and their execution platforms. It does not provide prompt templating, RAG, or chains, letting you pick the best tool for each of those jobs.

The following LLM platforms are supported in the current version:

| Provider | Completion | Chat | Model Listing | Multimodal | Fine-Tuning |
|---|---|---|---|---|---|
| AWS Bedrock | ✅ | ✅ | ✅ | ✅ | ✅ |
| Azure OpenAI | ✅ | ✅ | ✅ | ✅ | ✅ |
| Google Vertex AI | ✅ | ✅ | ✅ | ✅ | By Request |
| Groq | ✅ | ✅ | ✅ | N/A | N/A |
| HuggingFace Inference Endpoints | ✅ | ✅ | N/A | N/A | N/A |
| IBM WatsonX | ✅ | ✅ | ✅ | N/A | By Request |
| Mistral AI | ✅ | ✅ | ✅ | N/A | By Request |
| OpenAI | ✅ | ✅ | ✅ | ✅ | ✅ |
| Replicate | ✅ | ✅ | ✅ | N/A | ✅ |
| Together AI | ✅ | ✅ | ✅ | N/A | By Request |

New capabilities and platforms can be added by creating a new driver for the platform.
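To give a rough idea of what writing a driver involves, here is a minimal sketch. The `MiniDriver` interface and the `EchoDriver` class are hypothetical stand-ins invented for illustration. They are not the base classes exported by @llumiverse/core, so check the package source for the real contract before writing a driver.

```typescript
// Hypothetical, simplified driver contract (NOT the real @llumiverse/core API).
interface MiniModel {
    id: string;
    name: string;
}

interface MiniCompletion {
    result: string;
    execution_time: number; // milliseconds
}

interface MiniDriver {
    listModels(): Promise<MiniModel[]>;
    execute(prompt: string, options: { model: string }): Promise<MiniCompletion>;
}

// A toy driver that "executes" a prompt by echoing it back.
class EchoDriver implements MiniDriver {
    async listModels(): Promise<MiniModel[]> {
        return [{ id: "echo-1", name: "Echo" }];
    }

    async execute(prompt: string, options: { model: string }): Promise<MiniCompletion> {
        const start = Date.now();
        const result = `[${options.model}] ${prompt}`;
        return { result, execution_time: Date.now() - start };
    }
}
```

The point of the sketch is the shape: application code depends only on the shared contract, so swapping platforms means swapping the driver instance.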

To install @llumiverse/core and @llumiverse/drivers:

npm install @llumiverse/core @llumiverse/drivers

To install only @llumiverse/core:

npm install @llumiverse/core

First, instantiate a driver for the target LLM platform you want to interact with. Each driver accepts its own set of parameters when instantiated.

import { OpenAIDriver } from "@llumiverse/drivers";

// create an instance of the OpenAI driver

const driver = new OpenAIDriver({

apiKey: "YOUR_OPENAI_API_KEY"

});

In this example, we instantiate the Bedrock driver using credentials from the Shared Credentials File (i.e. ~/.aws/credentials). Learn more on how to set up AWS credentials in Node.js.

import { BedrockDriver } from "@llumiverse/drivers";

const driver = new BedrockDriver({

region: 'us-west-2'

});

For the following example to work you need to define a GOOGLE_APPLICATION_CREDENTIALS environment variable.

import { VertexAIDriver } from "@llumiverse/drivers";

const driver = new VertexAIDriver({

project: 'YOUR_GCLOUD_PROJECT',

region: 'us-central1'

});

import { ReplicateDriver } from "@llumiverse/drivers";

const driver = new ReplicateDriver({

apiKey: "YOUR_REPLICATE_API_KEY"

});

import { TogetherAIDriver } from "@llumiverse/drivers";

const driver = new TogetherAIDriver({

apiKey: "YOUR_TOGETHER_AI_API_KEY"

});

import { HuggingFaceIEDriver } from "@llumiverse/drivers";

const driver = new HuggingFaceIEDriver({

apiKey: "YOUR_HUGGINGFACE_API_KEY",

endpoint_url: "YOUR_HUGGINGFACE_ENDPOINT",

});

Once you have instantiated a driver, you can list the available models. Some drivers accept an argument to the listModels method to search for matching models. Some drivers, such as replicate, list only a preselected set of models. To list other models, perform a search by passing a text query as an argument.

In the following example, we are assuming that we have already instantiated a driver, which is available as the driver variable.

import { AIModel } from "@llumiverse/core";

// instantiate the desired driver

const driver = createDriverInstance();

// list available models on the target LLM. (some drivers may require a search parameter to discover more models)

const models: AIModel[] = await driver.listModels();

console.log('# Available Models:');

for (const model of models) {

console.log(`${model.name} [${model.id}]`);

}
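When a driver returns a long list, it can help to narrow it down on the client side. The sketch below is a plain helper that filters an already fetched list by name or ID. The `ModelInfo` type and `filterModels` function are hypothetical names introduced here, and the sample names are made up. This does not replace the driver-side search described above.

```typescript
// Hypothetical minimal model shape; the example above only uses `id` and `name`.
interface ModelInfo {
    id: string;
    name: string;
}

// Case-insensitive match of the query against the model name or ID.
function filterModels(models: ModelInfo[], query: string): ModelInfo[] {
    const q = query.trim().toLowerCase();
    if (q === "") return models;
    return models.filter(
        (m) => m.name.toLowerCase().includes(q) || m.id.toLowerCase().includes(q)
    );
}

// Illustrative data only; real entries come from driver.listModels().
const sampleModels: ModelInfo[] = [
    { id: "gpt-3.5-turbo", name: "GPT 3.5 Turbo" },
    { id: "text-bison", name: "Text Bison" },
];

console.log(filterModels(sampleModels, "bison").map((m) => m.id));
```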

To execute a prompt we need to create a prompt in the LLumiverse format and pass it to the driver execute method.

The prompt format is very similar to the OpenAI prompt format. It is an array of messages with a content and a role property. The roles can be any of "user" | "system" | "assistant" | "safety".

The safety role is similar to system but takes precedence over the other messages. It overrides any user or system message that contradicts the safety message.
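As an illustration of the message format, the sketch below builds a prompt that mixes the roles listed above. It uses local stand-in types (`Role`, `Segment`) rather than the library's `PromptRole` and `PromptSegment` so that it runs on its own. How each driver enforces the precedence of safety messages is not shown here.

```typescript
// Local stand-in types mirroring the roles named above
// (the real library exports PromptRole and PromptSegment).
type Role = "user" | "system" | "assistant" | "safety";

interface Segment {
    role: Role;
    content: string;
}

const examplePrompt: Segment[] = [
    { role: "system", content: "You are a helpful travel assistant." },
    { role: "safety", content: "Never reveal personal data about real people." },
    { role: "user", content: "Suggest a weekend itinerary for Paris." },
];

// Collect the content of all segments that carry a given role.
function contentByRole(segments: Segment[], role: Role): string[] {
    return segments.filter((s) => s.role === role).map((s) => s.content);
}

console.log(contentByRole(examplePrompt, "safety"));
```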

In order to execute a prompt we also need to specify a target model, given a model ID which is known by the target LLM. We may also specify execution options like temperature, max_tokens etc.

In the following example, we are again assuming that we have already instantiated a driver, which is available as the driver variable.

We also assume that the ID of the model we want to target is available as the model variable. To get the existing models and their IDs, list the models as shown in the previous example.

Here is an example of model IDs depending on the driver type:

OpenAI: gpt-3.5-turbo

Bedrock: arn:aws:bedrock:us-west-2::foundation-model/cohere.command-text-v14

VertexAI: text-bison

Replicate: meta/llama-2-70b-chat:02e509c789964a7ea8736978a43525956ef40397be9033abf9fd2badfe68c9e3

TogetherAI: mistralai/Mistral-7B-instruct-v0.1

HuggingFace: aws-mistral-7b-instruct-v0-1-015

import { PromptRole, PromptSegment } from "@llumiverse/core";

// instantiate the desired driver

const driver = createDriverInstance();

const model = "the-model-id"; // change with your desired model ID

// create the prompt.

const prompt: PromptSegment[] = [

{

role: PromptRole.user,

content: 'Please, write a short story about winter in Paris, in no more than 512 characters.'

}

]

// execute a model and wait for the response

console.log(`\n# Executing prompt on ${model} model: ${JSON.stringify(prompt)}`);

const response = await driver.execute(prompt, {

model,

temperature: 0.6,

max_tokens: 1024

});

console.log('\n# LLM response:', response.result)

console.log('# Response took', response.execution_time, 'ms')

console.log('# Token usage:', response.token_usage);

In this example, we will execute a prompt and will stream the result to display it on the console as it is returned by the target LLM platform.

Note that some models don't support streaming. In that case, the driver simulates streaming by returning a single chunk of text that contains the entire response.

import { PromptRole, PromptSegment } from "@llumiverse/core";

// instantiate the desired driver

const driver = createDriverInstance();

const model = "the-model-id"; // change with your desired model ID

// create the prompt.

const prompt: PromptSegment[] = [

{

role: PromptRole.user,

content: 'Please, write a short story about winter in Paris, in no more than 512 characters.'

}

]

// execute the prompt in streaming mode

console.log(`\n# Executing prompt on model ${model} in streaming mode: ${JSON.stringify(prompt)}`);

const stream = await driver.stream(prompt, {

model,

temperature: 0.6,

max_tokens: 1024

});

// print the streaming response as it comes

for await (const chunk of stream) {

process.stdout.write(chunk);

}

// when the response stream is consumed we can get the final response using stream.completion field.

const streamingResponse = stream.completion!;

console.log('\n# LLM response:', streamingResponse.result)

console.log('# Response took', streamingResponse.execution_time, 'ms')

console.log('# Token usage:', streamingResponse.token_usage);
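The note above about models that don't support streaming can be pictured with a small sketch. It is not the library's implementation. The functions `singleChunkStream` and `collect` are hypothetical names that show how a full response can be exposed as a one-chunk async iterable, so that consuming code stays the same either way.

```typescript
// Hypothetical fallback: wrap a complete response in an async iterable
// that yields it as one chunk, so callers can always use `for await`.
async function* singleChunkStream(fullText: string): AsyncGenerator<string> {
    yield fullText;
}

// Consume any stream of text chunks and return the concatenated text.
async function collect(stream: AsyncIterable<string>): Promise<string> {
    let text = "";
    for await (const chunk of stream) {
        text += chunk;
    }
    return text;
}

collect(singleChunkStream("Snow fell softly on the Seine.")).then((text) => {
    console.log(text);
});
```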

LLumiverse drivers expose a method to generate vector embeddings for a given text.

Drivers supporting embeddings as of v0.10.0 are bedrock, openai, vertexai.

If embeddings are not yet supported by a driver, its generateEmbeddings method throws an error.
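Because unsupported drivers throw, calling code may want to guard the call. The following is a minimal sketch under stated assumptions. `EmbeddingDriverLike`, `EmbeddingsLike`, and `tryEmbeddings` are hypothetical names, and only the `generateEmbeddings` method and the `values` property come from this document.

```typescript
// Hypothetical minimal shapes; only `values` and `generateEmbeddings`
// are taken from the surrounding text.
interface EmbeddingsLike {
    values: number[];
}

interface EmbeddingDriverLike {
    generateEmbeddings(options: { content: string }): Promise<EmbeddingsLike>;
}

// Returns the vector, or null when the driver rejects the request
// (for example because it does not support embeddings).
async function tryEmbeddings(
    driver: EmbeddingDriverLike,
    content: string
): Promise<number[] | null> {
    try {
        const result = await driver.generateEmbeddings({ content });
        return result.values;
    } catch (err) {
        console.warn("Embeddings unavailable:", err instanceof Error ? err.message : err);
        return null;
    }
}
```

Note that this helper treats every error as "unavailable", including network or credential failures. Stricter code would inspect the error before falling back.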

Here is an example of using the vertexai driver. For the example to work, you need to define a GOOGLE_APPLICATION_CREDENTIALS environment variable so that the driver can access your gcloud project.

import { VertexAIDriver } from "@llumiverse/drivers";

const driver = new VertexAIDriver({

project: 'your-project-id',

region: 'us-central1' // your zone

});

const r = await driver.generateEmbeddings({ content: "Hello world!" });

// print the vector

console.log('Embeddings: ', r.values);

The result object contains the vector in the values property, the model used to generate the embeddings, and an optional token_count. When defined, token_count is the token count of the input text.

Depending on the driver, the result may contain additional properties.

You can also specify the model to use, or pass other parameters supported by the driver.

Example:

import { VertexAIDriver } from "@llumiverse/drivers";

const driver = new VertexAIDriver({

project: 'your-project-id',

region: 'us-central1' // your zone

});

const r = await driver.generateEmbeddings({

content: "Hello world!",

model: "textembedding-gecko@002",

task_type: "SEMANTIC_SIMILARITY"

});

// print the vector

console.log('Embeddings: ', r.values);

The task_type parameter is specific to the textembedding-gecko model.
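A common use of embedding vectors is comparing two texts by cosine similarity. The helper below is a generic sketch that works on plain number arrays, such as the `values` property of two embedding results. `cosineSimilarity` is a name introduced here and is not part of LLumiverse.

```typescript
// Cosine similarity between two equal-length, non-zero vectors.
function cosineSimilarity(a: number[], b: number[]): number {
    if (a.length !== b.length || a.length === 0) {
        throw new Error("Vectors must be non-empty and of equal length");
    }
    let dot = 0;
    let normA = 0;
    let normB = 0;
    for (let i = 0; i < a.length; i++) {
        dot += a[i] * b[i];
        normA += a[i] * a[i];
        normB += b[i] * b[i];
    }
    if (normA === 0 || normB === 0) {
        throw new Error("Cosine similarity is undefined for zero vectors");
    }
    return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Identical directions score 1, orthogonal directions score 0.
console.log(cosineSimilarity([1, 0], [1, 0]), cosineSimilarity([1, 0], [0, 1]));
```

In practice both vectors should come from the same embedding model, since vectors from different models are not comparable.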

Contributions are welcome! Please see CONTRIBUTING.md for more details.

LLumiverse is licensed under the Apache License 2.0. Feel free to use it accordingly.

FAQs

The npm package @llumiverse/core receives a total of 134 weekly downloads. As such, @llumiverse/core popularity was classified as not popular.

We found that @llumiverse/core demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 4 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Product

Socket Firewall Enterprise is now available with flexible deployment, configurable policies, and expanded language support.

Security News

Open source dashboard CNAPulse tracks CVE Numbering Authorities’ publishing activity, highlighting trends and transparency across the CVE ecosystem.

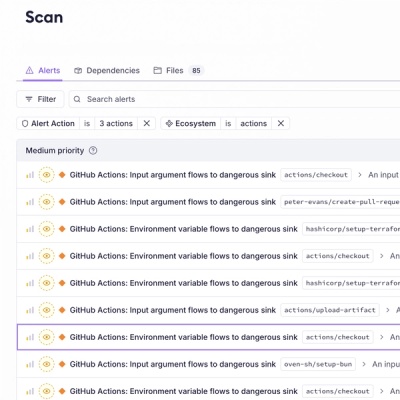

Product

Detect malware, unsafe data flows, and license issues in GitHub Actions with Socket’s new workflow scanning support.