Research

/Security News

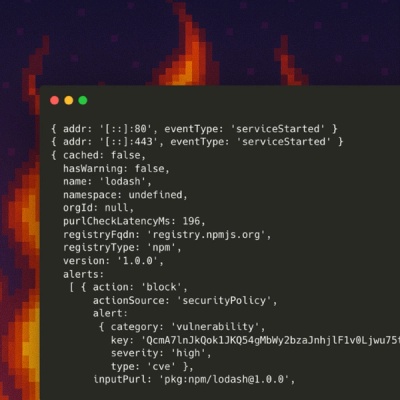

10 npm Typosquatted Packages Deploy Multi-Stage Credential Harvester

Socket researchers found 10 typosquatted npm packages that auto-run on install, show fake CAPTCHAs, fingerprint by IP, and deploy a credential stealer.

@meilisearch/scrapix

This project is an API that lets you scrape any website and send the data to Meilisearch.

This server has only one endpoint.

This endpoint crawls the website and sends the data to Meilisearch. It expects the following data:

{
  "start_urls": ["https://www.google.com"],
  "urls_to_exclude": ["https://www.google.com"],
  "urls_to_index": ["https://www.google.com"],
  "urls_to_not_index": ["https://www.google.com"],
  "meilisearch_url": "http://localhost:7700",
  "meilisearch_api_key": "masterKey",
  "meilisearch_index_uid": "google",
  "strategy": "default", // docssearch, schema*, custom or default
  "headless": true, // Open browser or not
  "batch_size": 1000, // null will send documents one by one
  "primary_key": null,
  "meilisearch_settings": {
    "searchableAttributes": [
      "h1",
      "h2",
      "h3",
      "h4",
      "h5",
      "h6",
      "p",
      "title",
      "meta.description"
    ],
    "filterableAttributes": ["urls_tags"],
    "distinctAttribute": "url"
  },
  "schema_settings": {
    "only_type": "Product", // Product, Article, etc...
    "convert_dates": true // default false
  }
}
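As a rough illustration of the request body, the sketch below types a subset of the fields and checks the three options this document marks as mandatory (start_urls, meilisearch_url, meilisearch_index_uid). The `CrawlConfig` interface and the `missingMandatoryFields` helper are hypothetical names introduced here, not part of the package.

```typescript
// Sketch only: a subset of the fields from the JSON above.
// CrawlConfig and missingMandatoryFields are hypothetical names.
interface CrawlConfig {
  start_urls: string[];
  meilisearch_url: string;
  meilisearch_index_uid: string;
  meilisearch_api_key?: string;
  urls_to_exclude?: string[];
  urls_to_index?: string[];
  urls_to_not_index?: string[];
  headless?: boolean;
  batch_size?: number | null;
  primary_key?: string | null;
}

// Returns the names of the mandatory fields that are missing or empty.
function missingMandatoryFields(config: Partial<CrawlConfig>): string[] {
  const missing: string[] = [];
  if (!Array.isArray(config.start_urls) || config.start_urls.length === 0) {
    missing.push("start_urls");
  }
  if (!config.meilisearch_url) missing.push("meilisearch_url");
  if (!config.meilisearch_index_uid) missing.push("meilisearch_index_uid");
  return missing;
}

const draftConfig: Partial<CrawlConfig> = {
  start_urls: ["https://www.google.com"],
  meilisearch_url: "http://localhost:7700",
};

// The index UID is not set in draftConfig, so it is reported as missing.
console.log(missingMandatoryFields(draftConfig));
```

Checking the body before sending it gives an earlier and clearer error than waiting for the crawl job to fail.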

When the server receives a crawling request, it adds it to the queue and then returns a response to the user. The queue is handled by Redis (Bull) and dispatches each job to the worker.

The worker crawls the website, keeping only the pages that have the same domain as the URLs given in the parameters. It does not try to scrape external links or files. It also does not scrape paginated pages (like /page/1).
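The filtering rule can be sketched as a small predicate. This is a minimal sketch, not the worker's actual code: it assumes that "same domain" means an identical hostname and that paginated pages are recognized by a trailing /page/<number> path segment. `shouldCrawl` and `PAGINATION_PATTERN` are hypothetical names, and the check for files is left out.

```typescript
// Assumption: paginated pages end with /page/<number>, as in the /page/1 example.
const PAGINATION_PATTERN = /\/page\/\d+\/?$/;

// Hypothetical helper: decides whether a discovered link should be crawled.
function shouldCrawl(candidate: string, startUrls: string[]): boolean {
  let url: URL;
  try {
    url = new URL(candidate);
  } catch {
    return false; // not an absolute URL
  }
  // Assumption: "same domain" is approximated by an exact hostname match.
  const sameDomain = startUrls.some((start) => new URL(start).hostname === url.hostname);
  if (!sameDomain) return false;
  return !PAGINATION_PATTERN.test(url.pathname);
}

console.log(shouldCrawl("https://example.com/docs/intro", ["https://example.com"]));
console.log(shouldCrawl("https://other.example.org/docs", ["https://example.com"]));
console.log(shouldCrawl("https://example.com/blog/page/1", ["https://example.com"]));
```

Under these assumptions the first call returns true and the other two return false.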

For each scrapable page, it scrapes the data by trying to create blocks of titles and text. Each block will contain:

While the worker is scraping the website, it sends the data to Meilisearch in batches.
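The batching behaviour controlled by batch_size could look roughly like this. The sketch assumes the documents are already collected in an array, whereas the worker sends them while it is still scraping; `toBatches` is a hypothetical helper. A null batch size is treated as "one document per batch", following the comment in the configuration example.

```typescript
// Hypothetical helper: splits documents into batches of at most batchSize items.
// A null batchSize sends documents one by one.
function toBatches<T>(documents: T[], batchSize: number | null): T[][] {
  const size = batchSize === null ? 1 : batchSize;
  if (!Number.isInteger(size) || size < 1) {
    throw new RangeError("batch size must be a positive integer or null");
  }
  const batches: T[][] = [];
  for (let i = 0; i < documents.length; i += size) {
    batches.push(documents.slice(i, i + size));
  }
  return batches;
}

console.log(toBatches(["a", "b", "c", "d", "e"], 2)); // three batches: 2, 2 and 1 documents
console.log(toBatches(["a", "b", "c"], null).length); // one batch per document
```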

Before sending the data to Meilisearch, it creates a new index called {index_uid}_crawler_tmp, applies the settings, and adds the data to it. It then uses the index swap method to replace the old index with the new one, and finishes by deleting the temporary index.
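The order of operations can be illustrated with an in-memory stand-in for a Meilisearch instance. This is only a sketch of the sequence described above: `InMemoryIndexes` and `reindex` are hypothetical, they do not use the Meilisearch client, and the settings step is omitted for brevity.

```typescript
type Doc = Record<string, unknown>;

// Hypothetical in-memory stand-in for a Meilisearch instance.
class InMemoryIndexes {
  private indexes = new Map<string, Doc[]>();

  create(uid: string): void {
    this.indexes.set(uid, []);
  }

  add(uid: string, docs: Doc[]): void {
    const existing = this.indexes.get(uid);
    if (!existing) throw new Error(`unknown index: ${uid}`);
    existing.push(...docs);
  }

  // Exchanges the contents of two indexes.
  swap(a: string, b: string): void {
    const docsA = this.indexes.get(a) ?? [];
    const docsB = this.indexes.get(b) ?? [];
    this.indexes.set(a, docsB);
    this.indexes.set(b, docsA);
  }

  delete(uid: string): void {
    this.indexes.delete(uid);
  }

  get(uid: string): Doc[] | undefined {
    return this.indexes.get(uid);
  }
}

// Fill a temporary index, swap it with the live one, then delete the temporary index.
function reindex(store: InMemoryIndexes, indexUid: string, batches: Doc[][]): void {
  const tmpUid = `${indexUid}_crawler_tmp`;
  store.create(tmpUid);
  for (const batch of batches) store.add(tmpUid, batch);
  store.swap(indexUid, tmpUid);
  store.delete(tmpUid);
}

const store = new InMemoryIndexes();
store.create("google");
store.add("google", [{ url: "https://www.google.com/old" }]);
reindex(store, "google", [[{ url: "https://www.google.com/a" }], [{ url: "https://www.google.com/b" }]]);
console.log(store.get("google")); // only the two freshly crawled documents remain
```

The point of the swap is that the live index keeps serving the old documents until the new crawl is complete.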

The settings applied:

{
  "searchableAttributes": [
    "h1",
    "h2",
    "h3",
    "h4",
    "h5",
    "h6",
    "p",
    "title",
    "meta.description"
  ],
  "filterableAttributes": ["urls_tags"],
  "distinctAttribute": "url"
}

start_urls mandatory

This array contains the list of URLs that will be used to start scraping your website. The scraper will recursively follow any links (<a> tags) from those pages. It will not follow links that are on another domain.

urls_to_exclude

List of the URLs to ignore

urls_to_index

List of the URLs to index

urls_to_not_index

List of the URLs that should not be indexed

meilisearch_url mandatory

The URL to your Meilisearch instance

meilisearch_api_key

The API key to your Meilisearch instance. It has to have at least write and read access on the specified index.

meilisearch_index_uid mandatory

Name of the index on which the content is indexed.

strategy

default: default

Scraping strategy:
- default: Scrapes the content of webpages. It suits most use cases. It indexes the content in this format (show example)
- docssearch: Scrapes the content of webpages. It suits most use cases. The difference from the default strategy is that it indexes the content in a format compatible with docs-search bar
- schema: Scrapes the schema information of your web app.

headless

default: true

Whether or not the JavaScript should be loaded before scraping starts.

primary_key

The key name in your documents containing their unique identifier.

meilisearch_settings

Your custom Meilisearch settings

schema_settings

If your strategy is schema:

only_type: Which types of schema should be parsed

convert_dates: Whether dates should be converted to timestamps. This is useful for ordering by date.
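To show why timestamps help with ordering, here is a minimal sketch of a date conversion pass over a schema object. It is an illustration under stated assumptions, not the scraper's implementation: it assumes dates are ISO 8601 strings and that the timestamp unit is milliseconds since the Unix epoch. `convertDates` and `ISO_DATE` are hypothetical names.

```typescript
// Assumption: dates appear as ISO 8601 strings such as 2022-01-01T12:34:56.000Z.
const ISO_DATE = /^\d{4}-\d{2}-\d{2}(T\d{2}:\d{2}(:\d{2}(\.\d+)?)?(Z|[+-]\d{2}:\d{2})?)?$/;

// Hypothetical helper: recursively replaces ISO date strings with numeric timestamps.
// Assumption: timestamps are expressed in milliseconds since the Unix epoch.
function convertDates(value: unknown): unknown {
  if (typeof value === "string" && ISO_DATE.test(value)) {
    const ms = Date.parse(value);
    return Number.isNaN(ms) ? value : ms;
  }
  if (Array.isArray(value)) {
    return value.map((item) => convertDates(item));
  }
  if (value !== null && typeof value === "object") {
    const out: Record<string, unknown> = {};
    for (const [key, inner] of Object.entries(value as Record<string, unknown>)) {
      out[key] = convertDates(inner);
    }
    return out;
  }
  return value;
}

const product = { name: "Lamp", releaseDate: "2022-01-01T12:34:56.000Z" };
console.log(convertDates(product)); // releaseDate becomes a number, name is unchanged
```

Numeric values sort chronologically, which is not guaranteed for date strings in mixed formats.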

user_agents

An array of user agents that are appended to the end of the current user agents.

In this case, if your user_agents value is ['My Thing (vx.x.x)'], the final user_agent becomes:

Meilisearch JS (vx.x.x); Meilisearch Crawler (vx.x.x); My Thing (vx.x.x)
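The composition shown above amounts to joining the existing user agents and the extra ones with a semicolon and a space. A minimal sketch, where `composeUserAgent` is a hypothetical helper and the version placeholders are kept as written in this document:

```typescript
// Hypothetical helper: appends the configured user agents to the current ones.
function composeUserAgent(current: string[], extra: string[] = []): string {
  return [...current, ...extra].join("; ");
}

const finalUserAgent = composeUserAgent(
  ["Meilisearch JS (vx.x.x)", "Meilisearch Crawler (vx.x.x)"],
  ["My Thing (vx.x.x)"],
);
console.log(finalUserAgent);
// → Meilisearch JS (vx.x.x); Meilisearch Crawler (vx.x.x); My Thing (vx.x.x)
```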

webhook_payload

When webhooks are enabled, the webhook_payload option lets you provide information that will be added to the webhook payload.

webhook_url

The URL on which the webhook calls are made.

To receive updates on the state of the crawler, you need to create a webhook. To do so, you need a public URL that the crawler can reach. The crawler will call this URL to send you updates.

To enable webhooks, you need to add the following env vars.

WEBHOOK_URL=https://mywebsite.com/webhook

WEBHOOK_TOKEN=mytoken

WEBHOOK_INTERVAL=1000

WEBHOOK_URL is the URL that will be called by the crawler. The calls will be made with the POST method.

WEBHOOK_TOKEN is a token string that will be used to sign the request. If present, it is sent in the Authorization header of the request in the format Authorization: Bearer ${token}.

WEBHOOK_INTERVAL changes how frequently you receive updates from the scraper. The value is in milliseconds. The default value is 5000ms.

Here is the webhook payload:

{
  "date": "2022-01-01T12:34:56.000Z",
  "meilisearch_url": "https://myproject.meilisearch.com",
  "meilisearch_index_uid": "myindex",
  "status": "active", // "added", "completed", "failed", "active", "wait", "delayed"
  "nb_page_crawled": 20,
  "nb_page_indexed": 15
}

It is possible to add additional information to the webhook payload through the webhook_payload configuration.
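Putting the webhook pieces together, a call could be assembled roughly as follows. This is a sketch under assumptions, not the crawler's code: `buildWebhookRequest` and both interfaces are hypothetical, the JSON content type is assumed, and nesting the extra information under a webhook_payload key is a guess at how it is merged.

```typescript
interface CrawlStatus {
  date: string;
  meilisearch_url: string;
  meilisearch_index_uid: string;
  status: "added" | "completed" | "failed" | "active" | "wait" | "delayed";
  nb_page_crawled: number;
  nb_page_indexed: number;
}

interface WebhookRequest {
  url: string;
  method: "POST";
  headers: Record<string, string>;
  body: string;
}

// Hypothetical helper: describes the POST call made to WEBHOOK_URL.
function buildWebhookRequest(
  url: string,
  token: string | undefined,
  status: CrawlStatus,
  extraPayload?: Record<string, unknown>,
): WebhookRequest {
  // Assumption: the payload is sent as JSON.
  const headers: Record<string, string> = { "Content-Type": "application/json" };
  // The token is only sent when it is present.
  if (token) headers["Authorization"] = `Bearer ${token}`;
  // Assumption: extra information is nested under a webhook_payload key.
  const body = extraPayload ? { ...status, webhook_payload: extraPayload } : status;
  return { url, method: "POST", headers, body: JSON.stringify(body) };
}

const webhookRequest = buildWebhookRequest(
  "https://mywebsite.com/webhook",
  "mytoken",
  {
    date: "2022-01-01T12:34:56.000Z",
    meilisearch_url: "https://myproject.meilisearch.com",
    meilisearch_index_uid: "myindex",
    status: "active",
    nb_page_crawled: 20,
    nb_page_indexed: 15,
  },
  { requested_by: "docs-team" }, // hypothetical extra information
);
console.log(webhookRequest.headers["Authorization"]); // → Bearer mytoken
```

On the receiving side, comparing the Authorization header with the expected token is a simple way to reject calls that do not come from the crawler.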
