Product

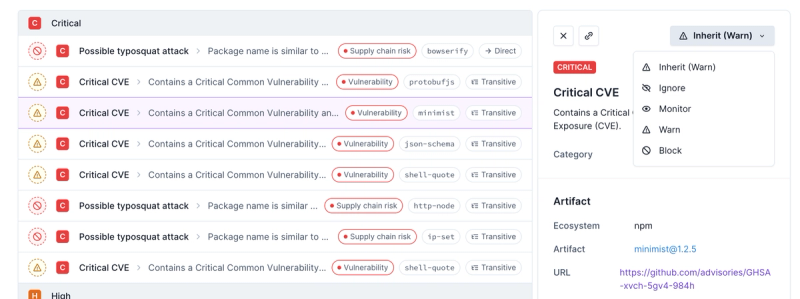

Introducing Enhanced Alert Actions and Triage Functionality

Socket now supports four distinct alert actions instead of the previous two, and alert triaging allows users to override the actions taken for all individual alerts.

@mux/upchunk


UpChunk uploads chunks of files! It's a JavaScript module for handling large file uploads by splitting them into chunks and making a PUT request for each chunk with the correct range request headers. Uploads can be paused and resumed, they're fault tolerant, and it should work just about anywhere.

UpChunk is designed to be used with Mux direct uploads, but should work with any server that supports resumable uploads in the same manner. This library will:

Make a PUT request for each chunk, specifying the correct Content-Length and Content-Range headers for each one.

npm install --save @mux/upchunk

yarn add @mux/upchunk

<script src="https://unpkg.com/@mux/upchunk@2"></script>

You'll need to have a route in your application that returns an upload URL from Mux. If you're using the Mux Node SDK, you might do something that looks like this.

const Mux = require('@mux/mux-node');

const { Video } = new Mux();

module.exports = async (req, res) => {
  // This ultimately just makes a POST request to https://api.mux.com/video/v1/uploads with the supplied options.
  const upload = await Video.Uploads.create({
    cors_origin: 'https://your-app.com',
    new_asset_settings: {
      playback_policy: 'public',
    },
  });

  // Save the Upload ID in your own DB somewhere, then
  // return the upload URL to the end-user.
  res.end(upload.url);
};

import * as UpChunk from '@mux/upchunk';

// Pretend you have an HTML page with an input like: <input id="picker" type="file" />
const picker = document.getElementById('picker');

picker.onchange = () => {
  // `throw` is a statement, so it cannot appear inside a ternary expression.
  const getUploadUrl = () =>
    fetch('/the-endpoint-above').then(res => {
      if (!res.ok) {
        throw new Error('Error getting an upload URL :(');
      }
      return res.text();
    });

  const upload = UpChunk.createUpload({
    endpoint: getUploadUrl,
    file: picker.files[0],
    chunkSize: 5120, // Uploads the file in ~5 MB chunks
  });

  // subscribe to events
  upload.on('error', err => {
    console.error('💥 🙀', err.detail);
  });

  upload.on('progress', progress => {
    console.log(`So far we've uploaded ${progress.detail}% of this file.`);
  });

  upload.on('success', () => {
    console.log("Wrap it up, we're done here. 👋");
  });
};

createUpload(options)

Returns an instance of UpChunk and begins uploading the specified File.

options object parameters

endpoint type: string | function (required)

URL to upload the file to. This can be either a string of the authenticated URL to upload to, or a function that returns a promise that resolves that URL string. The function will be passed the file as a parameter.
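To make the two accepted forms of endpoint concrete, here is a minimal sketch of how a string or a promise-returning function could be normalized to a single promise of a URL. The helper name resolveEndpoint and the example.com URLs are hypothetical and only illustrate the contract described above; this is not UpChunk's internal code.

```javascript
// Hypothetical helper (not part of UpChunk): turns either form of the
// `endpoint` option into a promise that resolves to the upload URL string.
function resolveEndpoint(endpoint, file) {
  if (typeof endpoint === 'function') {
    // The function receives the file and may return a promise or a plain string.
    return Promise.resolve(endpoint(file));
  }
  if (typeof endpoint === 'string' && endpoint.length > 0) {
    return Promise.resolve(endpoint);
  }
  return Promise.reject(new TypeError('endpoint must be a string or a function'));
}

// Usage with a plain string and with a function that inspects the file.
const fakeFile = { name: 'video.mp4' }; // stand-in for a real File object
resolveEndpoint('https://example.com/upload', fakeFile).then(url => console.log(url));
resolveEndpoint(
  file => Promise.resolve(`https://example.com/upload?name=${file.name}`),
  fakeFile
).then(url => console.log(url));
```

Wrapping the function's return value in Promise.resolve means the caller does not need to care whether the function is synchronous or asynchronous.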

file type: File (required)

The file you'd like to upload. For example, you might just want to use the file from an input with a type of "file".

headers type: Object

An object with any headers you'd like included with the PUT request for each chunk.

chunkSize type: integer, default: 5120

The size, in kB, of the chunks to split the file into. The final chunk may be smaller. This parameter should be a multiple of 256.
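As an illustration of how a chunk size translates into per-chunk requests, the sketch below plans the byte ranges and the matching Content-Length and Content-Range values for a file of a given size. The helper planChunks is hypothetical, and it assumes 1 kB means 1024 bytes; it is not taken from UpChunk's source. In a browser, Content-Length is normally set by the HTTP client itself, so the value is shown here only to make the arithmetic visible.

```javascript
// Hypothetical helper (not part of UpChunk): plans the byte range and
// range headers for each chunk of a file.
function planChunks(fileSizeBytes, chunkSizeKb = 5120) {
  if (chunkSizeKb <= 0 || chunkSizeKb % 256 !== 0) {
    throw new TypeError('chunkSize must be a positive multiple of 256');
  }
  const chunkBytes = chunkSizeKb * 1024; // assumption: 1 kB = 1024 bytes
  const chunks = [];
  for (let start = 0; start < fileSizeBytes; start += chunkBytes) {
    // `end` is inclusive, as the Content-Range header requires.
    const end = Math.min(start + chunkBytes, fileSizeBytes) - 1;
    chunks.push({
      start,
      end,
      headers: {
        'Content-Length': String(end - start + 1),
        'Content-Range': `bytes ${start}-${end}/${fileSizeBytes}`,
      },
    });
  }
  return chunks;
}

// A 600 kB file split into 256 kB chunks: two full chunks and a smaller final one.
const plan = planChunks(600 * 1024, 256);
console.log(plan.map(chunk => chunk.headers['Content-Range']));
```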

maxFileSize type: integer

The maximum allowed size, in kB, of the file to be uploaded. The maximum size can technically be smaller than the chunk size, in which case there would be exactly one chunk.

retries type: integer, default: 5

The number of times to retry any given chunk.

delayBeforeRetry type: integer, default: 1

The time in seconds to wait before attempting to upload a chunk again.
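The interaction between retries and delayBeforeRetry can be sketched as a simple loop. Everything here is illustrative: uploadWithRetries, sendChunk and the injectable sleep are hypothetical names, and the sketch assumes one initial attempt followed by up to retries further attempts, which may not match how UpChunk counts attempts internally.

```javascript
// Default delay helper: waits the given number of seconds.
const defaultSleep = seconds =>
  new Promise(resolve => setTimeout(resolve, seconds * 1000));

// Hypothetical sketch (not UpChunk's code): try to send one chunk,
// waiting `delayBeforeRetry` seconds between failed attempts.
// Assumption: 1 initial attempt + up to `retries` retries.
async function uploadWithRetries(sendChunk, options = {}) {
  const { retries = 5, delayBeforeRetry = 1, sleep = defaultSleep } = options;
  let lastError;
  for (let attempt = 1; attempt <= retries + 1; attempt += 1) {
    try {
      return await sendChunk(attempt);
    } catch (err) {
      lastError = err;
      if (attempt <= retries) {
        await sleep(delayBeforeRetry); // only wait if another attempt follows
      }
    }
  }
  throw lastError; // all attempts failed
}

// Usage: a sender that fails twice, then succeeds. The no-op sleep keeps the demo fast.
let demoCalls = 0;
const flakySend = async () => {
  demoCalls += 1;
  if (demoCalls < 3) throw new Error('network blip');
  return 'uploaded';
};
uploadWithRetries(flakySend, { sleep: async () => {} })
  .then(result => console.log(result, 'after', demoCalls, 'attempts'));
```

Injecting sleep keeps the delay policy separate from the retry loop, which makes the loop easy to exercise without real timers.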

method type: "PUT" | "PATCH" | "POST", default: PUT

The HTTP method to use when uploading each chunk.

pause()

Pauses an upload after the current in-flight chunk is finished uploading.

resume()

Resumes an upload that was previously paused.
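The pause semantics described above (the in-flight chunk finishes, then nothing further is sent until resume) can be modeled with a small sequential loop. The class SequentialUploader and its sendChunk callback are hypothetical and exist only to show the behavior; the real UpChunk instance manages this internally.

```javascript
// Hypothetical model (not UpChunk's code) of pause/resume for sequential chunks.
class SequentialUploader {
  constructor(chunks, sendChunk) {
    this.chunks = chunks;
    this.sendChunk = sendChunk; // async function that uploads one chunk
    this.nextIndex = 0;
    this.paused = false;
    this.active = null; // promise for the currently running loop, if any
  }

  start() {
    if (!this.active) {
      this.active = this.loop().finally(() => {
        this.active = null;
      });
    }
    return this.active;
  }

  async loop() {
    // The pause flag is only checked between chunks, so an in-flight
    // chunk always runs to completion.
    while (!this.paused && this.nextIndex < this.chunks.length) {
      await this.sendChunk(this.chunks[this.nextIndex]);
      this.nextIndex += 1;
    }
  }

  pause() {
    this.paused = true;
  }

  resume() {
    this.paused = false;
    return this.start(); // picks up from the first chunk not yet sent
  }
}

// Usage: pause while the first chunk is in flight, then resume later.
const log = [];
const uploader = new SequentialUploader(['chunk-0', 'chunk-1', 'chunk-2'], async chunk => {
  log.push(chunk);
  if (chunk === 'chunk-0') uploader.pause();
});
uploader.start()
  .then(() => uploader.resume())
  .then(() => console.log(log));
```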

Events are fired with a CustomEvent object. The detail key is null if an interface isn't specified.

attempt { detail: { chunkNumber: Integer, chunkSize: Integer } }

Fired immediately before a chunk upload is attempted. chunkNumber is the number of the current chunk being attempted, and chunkSize is the size (in bytes) of that chunk.

attemptFailure { detail: { message: String, chunkNumber: Integer, attemptsLeft: Integer } }

Fired when an attempt to upload a chunk fails.

chunkSuccess { detail: { chunk: Integer, attempts: Integer, response: XhrResponse } }

Fired when an individual chunk is successfully uploaded.

error { detail: { message: String, chunkNumber: Integer, attempts: Integer } }

Fired when a chunk has reached the max number of retries or the response code is fatal and implies that retries should not be attempted.

offline

Fired when the client has gone offline.

online

Fired when the client has come back online.

progress { detail: [0..100] }

Fired continuously with incremental upload progress. This returns the current percentage of the file that's been uploaded.

success

Fired when the upload is finished successfully.

The original idea and base for this came from the awesome huge uploader project, which is what you need if you're looking to do multipart form data uploads. 👏

