Product

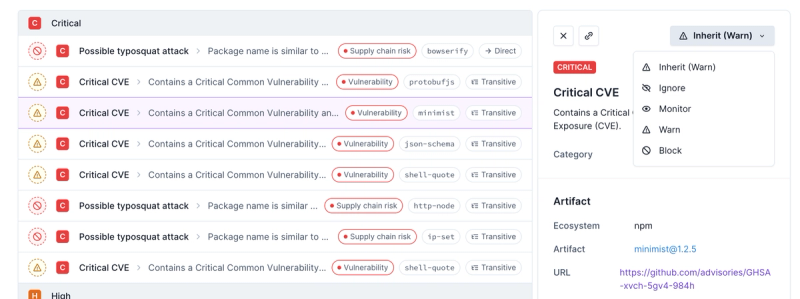

Introducing Enhanced Alert Actions and Triage Functionality

Socket now supports four distinct alert actions instead of the previous two, and alert triaging allows users to override the actions taken for all individual alerts.

aswh


This is a simple yet powerful component to perform asynchronous delivery of webhooks, or other HTTP calls. In a nutshell, it works as a store-and-forward HTTP proxy: you call the proxy and get an HTTP 201, and the proxy then sees to delivering your HTTP call.

The store part uses keuss as a job-queue middleware; your HTTP calls are stored in MongoDB collections, with the following storage options:

simple: the HTTP calls are stored in a collection, one object per request, and are removed after being confirmed.

tape: the HTTP calls are stored in a collection, one object per request, and are marked as consumed after being confirmed. Consumed objects are removed at a later time using a TTL index.

bucket: the HTTP calls are stored in a collection, but several of them are packed into a single object. This increases performance by an order of magnitude without taxing durability much.

Generally speaking, aswh works for any HTTP request, not just webhooks, with the following limitations:

The HTTP requests need to be complete at the time they're passed to aswh. Any auth header, for example, must be added beforehand, so reactive auth is not supported.

For the same reason, no body streaming is performed. aswh reads request bodies completely before adding them to the store, and there is a size limit for bodies (100kb by default).

HTTP response bodies are ignored. HTTP responses are used only to decide whether to retry or not, and the HTTP status is all that's needed. Responses are still read completely, though.

You issue the HTTP request you want delivered to aswh, at http://localhost:6677/wh. The whole HTTP request is queued for later, and you receive an HTTP 201 Created response immediately after successful queuing. The queued request is later forwarded to the URL given in the x-dest-url header; the original URI, querystring included, is not used in the forwarding.

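Calls that fail are retried after a delay of tries^2 * c2 + tries * c1 + c0 seconds (c0, c1 and c2 default to 3 but are configurable). Failed calls can also be moved to a __failed__ queue inside the same keuss QM (that is, in the same MongoDB database), and calls that exceed the maximum number of retries are moved to the __deadletter__ queue. You can also specify an initial delay, in seconds, in the x-delay header, which is consumed by aswh and not passed along to the destination.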
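To make the retry schedule concrete, here is a small sketch of the delay formula. It assumes that `tries` is the number of attempts already made. Whether aswh starts counting at 0 or 1 is not stated here, and `retryDelaySeconds` is a hypothetical helper, not part of aswh.

```javascript
// Retry backoff as described above: tries^2 * c2 + tries * c1 + c0 seconds.
// Assumption: `tries` counts attempts already made; retryDelaySeconds is hypothetical.
const DEFAULT_DELAY = { c0: 3, c1: 3, c2: 3 };

function retryDelaySeconds(tries, delay = {}) {
  // Missing constants fall back to the documented default of 3
  const { c0, c1, c2 } = { ...DEFAULT_DELAY, ...delay };
  return tries * tries * c2 + tries * c1 + c0;
}

// Print the schedule for the first five tries with the default constants
for (let tries = 1; tries <= 5; tries++) {
  console.log(`tries=${tries}: ${retryDelaySeconds(tries)}s`);
}
```

With the defaults, the delays for tries 1 to 5 are 9, 21, 39, 63 and 93 seconds. The backoff grows quadratically rather than exponentially.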

For example, you can issue a POST webhook, which would be retried if needed, like this:

```sh
curl -X POST -i \
  --data-binary @wh-payload.json \
  -H 'x-dest-url: https://the-rea-location.api/ai/callback' \
  -H 'content-type: text/plain' \
  -H 'x-delay: 1' \
  http://localhost:6677/wh
```

You first need to create a file wh-payload.json containing the webhook payload. Because of the x-delay: 1 header, the call will be issued with an initial delay of 1 second.
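The same call can be made from Node.js code. The sketch below makes several assumptions: Node 18+ for the global `fetch`, aswh listening on localhost:6677, and a JSON payload sent as `application/json` instead of the `text/plain` used in the curl example. `enqueueWebhook` and its options are hypothetical names. The `fetchImpl` parameter exists only so the function can be exercised without a running aswh.

```javascript
// Hypothetical client helper: queue a webhook through aswh.
// Assumes Node 18+ (global fetch) and aswh listening on localhost:6677.
async function enqueueWebhook(destUrl, payload, { delaySeconds, fetchImpl = fetch } = {}) {
  const headers = {
    'content-type': 'application/json',
    'x-dest-url': destUrl, // where aswh will eventually deliver the call
  };
  if (delaySeconds !== undefined) {
    headers['x-delay'] = String(delaySeconds); // initial delay, in seconds
  }

  const res = await fetchImpl('http://localhost:6677/wh', {
    method: 'POST',
    headers,
    body: JSON.stringify(payload),
  });

  // aswh answers 201 Created once the request has been queued
  if (res.status !== 201) {
    throw new Error(`aswh did not queue the request: HTTP ${res.status}`);
  }
  return res.status;
}
```

Called as `enqueueWebhook('https://the-rea-location.api/ai/callback', { event: 'ping' }, { delaySeconds: 1 })`, it mirrors the curl example. The 201 only confirms queuing, not delivery.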

Aswh uses cascade-config as configuration engine; so, configuration can come from:

Environment variables

CLI args

etc/config.js file

etc/config-${NODE_ENV:-development}.js file (optional)

See the cascade-config documentation for more details on how to pass extra configuration (or override it) using environment variables or CLI args.

listen_port (defaults to 6677): port to listen on for incoming HTTP requests.

defaults: global defaults for aswh. They, in turn, default to:

```js
defaults: {
  retry: {
    max: 5,
    delay: {
      c0: 3,
      c1: 3,
      c2: 3
    }
  }
}
```

aswh supports the use of many queues. Queues are organized in queue groups, each implemented as a keuss queue factory (plus keuss stats and keuss signaller). Each queue group (keuss factory) can use its own MongoDB database, although they all share the same MongoDB cluster/server.
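Each queue group's database name is derived from base_url. The database in base_url gets a `_<queue group name>` suffix, and the keuss signaller and stats get their own `_signal` and `_stats` databases. The sketch below illustrates that naming scheme. `queueGroupDbUrls` is a hypothetical helper. Keeping any `?options` suffix of the URL intact is also an assumption.

```javascript
// Hypothetical helper: derive the three databases a queue group uses.
// Assumption: base_url looks like mongodb://host[,host...]/db[?options].
function queueGroupDbUrls(baseUrl, groupName) {
  const match = /^(mongodb(?:\+srv)?:\/\/[^/]+\/)([^?]*)(\?.*)?$/.exec(baseUrl);
  if (!match) throw new Error(`unexpected base_url: ${baseUrl}`);
  const [, prefix, baseDb, query = ''] = match;

  const db = `${baseDb}_${groupName}`; // e.g. aswh -> aswh_qg_1
  return {
    main: `${prefix}${db}${query}`,           // main data, queues
    signal: `${prefix}${db}_signal${query}`,  // keuss signaller
    stats: `${prefix}${db}_stats${query}`,    // keuss stats
  };
}

console.log(queueGroupDbUrls('mongodb://localhost/aswh', 'qg_1'));
```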

Queue groups are declared using the following configuration schema:

```
keuss: {
  // base mongodb url. All queue groups share the same server/cluster
  // but each one goes to a separate database whose name is created
  // by suffixing the db in base_url with '_$queue_group_name'
  base_url: 'mongodb://localhost/aswh',

  queue_groups: {
    qg_1: {
      // queue group named 'qg_1'. It will use the following databases:
      // mongodb://localhost/aswh_qg_1 : main data, queues
      // mongodb://localhost/aswh_qg_1_signal : keuss signaller
      // mongodb://localhost/aswh_qg_1_stats : keuss stats

      // optional, defaults to 'default'
      mq: 'default' | 'tape' | 'bucket',

      // maximum number of retries before moving elements to
      // __deadletter__ queue. defaults to defaults.retry.max or 5
      max_retries: <int>,

      // queue definitions go here
      queues: {
        default: { // default queue, items for the group go here if no queue is specified
          <opts>
        },
        q1: { // queue 'q1'
          window: 3, // consumer uses a window size of 3
          retry: {
            delay: { // c0, c1 and c2 values for retry delay
              c0: 1,
              c1: 1,
              c2: 1
            }
          },
          <opts>
        },
        q2: {
          <opts>
        },
        ...
      }
    },
    qg_2: {...},
    ...
    qg_n: {...}
  }
}
```
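The fallbacks in this schema can be summed up in a small resolver. Two of them are documented: max_retries falls back to defaults.retry.max and then to 5, and the consumer window defaults to 1. That queue-level retry.delay values override the global defaults.retry.delay, constant by constant, is an assumption made for illustration. `effectiveQueueSettings` is a hypothetical helper, not aswh code.

```javascript
// Hypothetical resolver for the settings that apply to one queue.
function effectiveQueueSettings(config, groupName, queueName = 'default') {
  const defaults = config.defaults || {};
  const group = config.keuss?.queue_groups?.[groupName];
  if (!group) throw new Error(`unknown queue group: ${groupName}`);
  const queue = group.queues?.[queueName] ?? {};

  return {
    // documented: group max_retries, else defaults.retry.max, else 5
    maxRetries: group.max_retries ?? defaults.retry?.max ?? 5,
    // assumption: queue-level constants override the global ones
    delay: { c0: 3, c1: 3, c2: 3, ...defaults.retry?.delay, ...queue.retry?.delay },
    // documented: window defaults to 1 (one request at a time)
    window: queue.window ?? 1,
  };
}
```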

Each queue has its own consumer to relay HTTP requests. Each consumer basically consists of an HTTP client plus a loop that reserves elements from the queue, sends them, and commits or rolls back each element on the queue depending on the HTTP response.

The consumer can keep more than one HTTP request sent and awaiting a response. By default only one is kept (which amounts to one request at a time), but a different value can be specified in the <queue>.window option. window=10 would allow the consumer to keep up to 10 requests sent and awaiting a response (and thus up to 10 elements reserved and waiting for commit/rollback in the queue).
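The reserve/send/commit-or-rollback loop with a window can be sketched as below. This is an illustration under assumptions, not aswh's or keuss's code. The queue object with async `reserve()`/`commit(item)`/`rollback(item)` is a hypothetical stand-in, `reserve()` resolving to `null` means "queue empty", and treating any 2xx status as success is a simplification.

```javascript
// Sketch of a windowed consumer: at most `window` requests in flight at once.
// Hypothetical queue interface: async reserve() / commit(item) / rollback(item).
async function runWindowedConsumer(queue, send, window = 1) {
  const inFlight = new Set();

  async function deliver(item) {
    let ok = false;
    try {
      const status = await send(item);
      ok = status >= 200 && status < 300; // assumption: 2xx means delivered
    } catch {
      ok = false; // network errors are treated like failed deliveries
    }
    if (ok) await queue.commit(item);
    else await queue.rollback(item); // rolled-back items get retried later
  }

  for (;;) {
    if (inFlight.size >= window) {
      await Promise.race(inFlight); // window full: wait until one request settles
      continue;
    }
    const item = await queue.reserve();
    if (item === null) break; // a long-running consumer would wait for new items instead
    const task = deliver(item).finally(() => inFlight.delete(task));
    inFlight.add(task);
  }
  await Promise.all(inFlight); // let the remaining requests finish
}
```

With `window = 1` this degenerates to one request at a time, matching the default behaviour described above.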

Queue consumers can use HTTP(S) agents, which allow for connection pooling. This takes two steps. First, configure one or more HTTP agents:

```
agents: {
  http: {
    // standard node.js http agents
    agent_a : {
      keepAlive: true,
      keepAliveMsecs: 10000,
      maxSockets: 10,
      maxFreeSockets: 2,
      timeout: 12000
    },
    agent_b: {
      ...
    }
  },
  https: {
    // standard node.js https agents
    agent_z : {
      keepAlive: true,
      keepAliveMsecs: 10000,
      maxSockets: 10,
      maxFreeSockets: 2,
      timeout: 12000
    },
    agent_other: {
      ...
    },
    agent_other_one: {
      ...
    }
  },
},
```

Both take the standard Node.js agent options, as accepted by the http.Agent and https.Agent constructors (see the Node.js http and https documentation). agents.http specifies agents to be used for http:// target URLs, and agents.https specifies agents for https:// targets.

The use of an agent is specified on a per-request basis, using the x-http-agent header; with the above config a request like:

```sh
curl -v \
  -H 'x-dest-url: https://alpha.omega/a/b' \
  -H 'x-http-agent: agent_z' \
  http://location_of_aswh/
```

would end up calling https://alpha.omega/a/b using the https agent configured at agents.https.agent_z.

If no agent is specified, no agent will be used; this forces Connection: close on the upstream request.
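The agent lookup can be sketched with Node's standard `http.Agent` and `https.Agent` classes. Each entry in the agents config becomes an agent instance, and the `x-http-agent` header value picks one from the table matching the destination URL's protocol. `buildAgents` and `pickAgent` are hypothetical helpers. Returning `false` for unknown agent names is an assumption. Passing `agent: false` to Node's `http.request` opts out of agent pooling, which matches the Connection: close behaviour described above.

```javascript
const http = require('http');
const https = require('https');

// Hypothetical: turn the agents config into Agent instances, keyed by URL protocol.
function buildAgents(agentsConfig = {}) {
  const build = (AgentClass, defs = {}) =>
    Object.fromEntries(Object.entries(defs).map(([name, opts]) => [name, new AgentClass(opts)]));
  return {
    'http:': build(http.Agent, agentsConfig.http),
    'https:': build(https.Agent, agentsConfig.https),
  };
}

// Hypothetical: choose the agent named in x-http-agent for the destination URL.
// Returns false (no pooling) when no agent is named; assumption: unknown names also yield false.
function pickAgent(agents, destUrl, agentName) {
  if (!agentName) return false;
  const byProtocol = agents[new URL(destUrl).protocol] || {};
  return byProtocol[agentName] || false;
}

const agents = buildAgents({
  https: { agent_z: { keepAlive: true, keepAliveMsecs: 10000, maxSockets: 10, maxFreeSockets: 2, timeout: 12000 } },
});
console.log(pickAgent(agents, 'https://alpha.omega/a/b', 'agent_z') instanceof https.Agent);
```

Because agents are looked up per protocol, an https agent name used with an http:// destination finds nothing in this sketch.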

The easiest way to run aswh is to use the Docker image and mount your configuration:

```sh
docker run \
  --rm \
  -d \
  --name aswh \
  -v /path/to/configuration/dir:/usr/src/app/etc \
  -e NODE_ENV=development \
  pepmartinez/aswh:1.1.2
```

The configuration dir should contain:

A base config file, config.js. This would contain common configuration

Zero or more per-env files, config-${NODE_ENV}.js, which would contain configuration specific to each $NODE_ENV

Also, configuration can be added or overridden using env vars:

```sh
# defaults__retry__max=11 sets the default for max retries to 11
docker run \
  --rm \
  -d \
  --name aswh \
  -v /path/to/configuration/dir:/usr/src/app/etc \
  -e NODE_ENV=development \
  -e defaults__retry__max=11 \
  pepmartinez/aswh:1.1.2
```
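The env-var override relies on a naming convention: a double underscore separates nesting levels, so defaults__retry__max targets defaults.retry.max. The following is a hypothetical illustration of that mapping, not cascade-config's actual implementation. It keeps every value as a string. Whether cascade-config converts "11" to a number is not stated here.

```javascript
// Hypothetical illustration of the "__" nesting convention for env vars.
// Not cascade-config's code: values are kept as strings in this sketch.
function envToConfig(env, separator = '__') {
  const config = {};
  for (const [key, value] of Object.entries(env)) {
    const path = key.split(separator); // defaults__retry__max -> ['defaults', 'retry', 'max']
    let node = config;
    for (const part of path.slice(0, -1)) {
      if (typeof node[part] !== 'object' || node[part] === null) node[part] = {};
      node = node[part];
    }
    node[path[path.length - 1]] = value;
  }
  return config;
}

console.log(JSON.stringify(envToConfig({ defaults__retry__max: '11' })));
```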

aswh uses promster to maintain and provide Prometheus metrics; along with the standard metrics provided by promster, the following metrics are also provided:

http_request_client: histogram of client HTTP requests, labelled with protocol, HTTP method, destination (host:port) and HTTP status.

Asynchronous WebHook delivery, or generic store-and-forward HTTP proxy
