Product

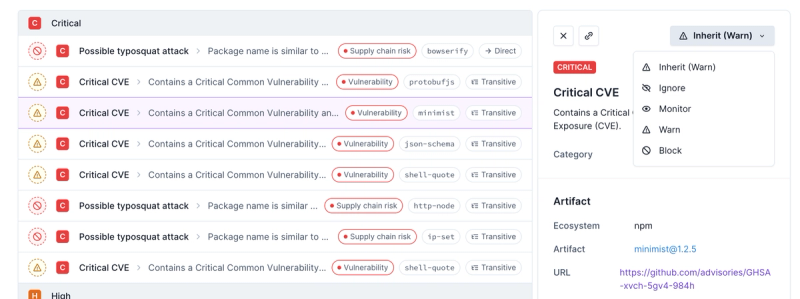

Introducing Enhanced Alert Actions and Triage Functionality

Socket now supports four distinct alert actions instead of the previous two, and alert triaging allows users to override the actions taken for all individual alerts.

async-cache


Package description

The async-cache npm package provides a simple and efficient way to cache asynchronous function results. It is particularly useful for caching the results of expensive or frequently called asynchronous operations, such as API requests or database queries.

Basic Caching

This example creates a basic cache with async-cache. The cache is configured with a load function that simulates an asynchronous operation. When the cache's get method is called, it returns the cached value if one exists; otherwise it invokes the load function to fetch the value and caches the result.

    const AsyncCache = require('async-cache');

    const cache = new AsyncCache({
      load: function (key, callback) {
        // Simulate an async operation
        setTimeout(() => {
          callback(null, `Value for ${key}`);
        }, 1000);
      }
    });

    cache.get('testKey', (err, result) => {
      if (err) throw err;
      console.log(result); // Output: Value for testKey
    });

Cache Expiration

This example sets an expiration time for cache entries with the maxAge option. Here, entries expire 5 seconds after they are stored. Once an entry has expired, the next get call invokes the load function again to reload the value.

    const AsyncCache = require('async-cache');

    const cache = new AsyncCache({
      maxAge: 5000, // Cache entries expire after 5 seconds
      load: function (key, callback) {
        // Simulate an async operation
        setTimeout(() => {
          callback(null, `Value for ${key}`);
        }, 1000);
      }
    });

    cache.get('testKey', (err, result) => {
      if (err) throw err;
      console.log(result); // Output: Value for testKey
    });

    // The entry is stored about 1 second in (when load finishes), so it
    // expires at roughly the 6 second mark. Wait a little longer than that.
    setTimeout(() => {
      cache.get('testKey', (err, result) => {
        if (err) throw err;
        console.log(result); // Output: Value for testKey (reloaded after expiration)
      });
    }, 7000);

Cache Size Limit

This example caps the number of cache entries with the max option. Here, the cache holds at most 2 entries. When a new entry is added beyond this limit, the least recently used entry is evicted.

    const AsyncCache = require('async-cache');

    const cache = new AsyncCache({
      max: 2, // Maximum number of cache entries
      load: function (key, callback) {
        // Simulate an async operation
        setTimeout(() => {
          callback(null, `Value for ${key}`);
        }, 1000);
      }
    });

    cache.get('key1', (err, result) => {
      if (err) throw err;
      console.log(result); // Output: Value for key1
    });

    cache.get('key2', (err, result) => {
      if (err) throw err;
      console.log(result); // Output: Value for key2
    });

    cache.get('key3', (err, result) => {
      if (err) throw err;
      console.log(result); // Output: Value for key3
    });

    // Wait until the three loads above have finished. A get for key1 issued
    // while its first load is still pending would share that load, not reload.
    setTimeout(() => {
      cache.get('key1', (err, result) => {
        if (err) throw err;
        console.log(result); // Output: Value for key1 (reloaded because it was evicted)
      });
    }, 2000);

The lru-cache package provides a simple and efficient in-memory cache with a Least Recently Used (LRU) eviction policy. It is similar to async-cache but focuses on synchronous operations and does not provide built-in support for asynchronous loading of cache entries.

The node-cache package is a simple in-memory caching module with support for time-to-live (TTL) expiration. It is similar to async-cache but is designed for synchronous operations and does not include built-in support for asynchronous loading of cache entries.

The memory-cache package provides a simple in-memory cache with support for TTL expiration and event notifications. It is similar to async-cache but focuses on synchronous operations and does not provide built-in support for asynchronous loading of cache entries.
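To make the least-recently-used eviction policy mentioned above concrete, here is a minimal sketch built on a plain Map. It is an illustration only, not the implementation used by lru-cache or async-cache, and the class name TinyLRU is hypothetical.

```javascript
// Minimal LRU sketch that relies on Map preserving insertion order.
// Illustrative only; TinyLRU is a made-up name, not part of any package.
class TinyLRU {
  constructor(max) {
    this.max = max;
    this.map = new Map();
  }

  get(key) {
    if (!this.map.has(key)) return undefined;
    const value = this.map.get(key);
    // Re-insert so the key becomes the most recently used.
    this.map.delete(key);
    this.map.set(key, value);
    return value;
  }

  set(key, value) {
    this.map.delete(key);
    this.map.set(key, value);
    if (this.map.size > this.max) {
      // The first key in iteration order is the least recently used.
      const oldest = this.map.keys().next().value;
      this.map.delete(oldest);
    }
  }

  has(key) {
    return this.map.has(key);
  }
}

const lru = new TinyLRU(2);
lru.set('key1', 1);
lru.set('key2', 2);
lru.get('key1');    // touch key1, so key2 is now the oldest entry
lru.set('key3', 3); // exceeds max, so key2 is evicted
console.log(lru.has('key1'), lru.has('key2'), lru.has('key3')); // true false true
```

Reading an entry counts as a use, which is why key2 rather than key1 is dropped here.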

Readme

Cache your async lookups and don't fetch the same thing more than necessary.

Let's say you have to look up stat info from paths. But you are ok with only looking up the stat info once every 10 minutes (since it doesn't change that often), and you want to limit your cache size to 1000 objects, and never have two stat calls for the same file happening at the same time (since that's silly and unnecessary).

You can do this:

    var AsyncCache = require('async-cache')

    var stats = new AsyncCache({
      // options passed directly to the internal lru cache
      max: 1000,
      maxAge: 1000 * 60 * 10,
      // method to load a thing if it's not in the cache.
      // key must be unique in the context of this cache.
      load: function (key, cb) {
        // the key can be something like the path, or fd+path, or whatever.
        // something that will be unique.
        // this method will only be called if it's not already in cache, and will
        // cache the result in the lru.
        getTheStatFromTheKey(key, cb)
      }
    })

    // then later..
    stats.get(fd + ':' + path, function (er, stat) {
      // maybe loaded from cache, maybe just fetched
    })

Except for the load method, all the options are passed unchanged to the internal lru-cache.

Since values are fetched asynchronously, the get method takes a callback, rather than returning the value synchronously.
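If you prefer promises to callbacks, the callback-style get can be wrapped. The sketch below assumes only that the cache object exposes get(key, callback) as described here; getAsync is a hypothetical helper name, and fakeCache is a stand-in so the snippet runs without installing anything.

```javascript
// Wrap a callback-style get(key, cb) in a Promise.
// getAsync is a hypothetical helper, not part of async-cache.
function getAsync(cache, key) {
  return new Promise((resolve, reject) => {
    cache.get(key, (err, value) => {
      if (err) reject(err);
      else resolve(value);
    });
  });
}

// Stand-in object with the same get(key, cb) shape, for demonstration only.
const fakeCache = {
  get(key, cb) {
    process.nextTick(() => cb(null, `Value for ${key}`));
  }
};

getAsync(fakeCache, 'testKey').then((value) => {
  console.log(value); // Value for testKey
});
```

With a real cache instance, Node's util.promisify applied to the bound get method should give a similar result.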

While there is a set(k,v) method to manually seed the cache, typically you'll just call get and let the load function fetch the key for you.

Keys must uniquely identify a single object, and must contain all the information required to fetch an object, and must be strings.
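One way to satisfy these key requirements when a lookup depends on several fields is to serialize the fields into a single string and parse it back inside load. The helpers makeKey and parseKey below are hypothetical and are not part of async-cache; this is a sketch of one possible convention.

```javascript
// Hypothetical helpers: pack several lookup fields into one string key.
function makeKey(fields) {
  // Sort property names so equal inputs always produce the same key.
  const sorted = {};
  for (const name of Object.keys(fields).sort()) {
    sorted[name] = fields[name];
  }
  return JSON.stringify(sorted);
}

// Recover the fields from the key, for example inside a load function.
function parseKey(key) {
  return JSON.parse(key);
}

const keyA = makeKey({ path: '/tmp/a.txt', followLinks: true });
const keyB = makeKey({ followLinks: true, path: '/tmp/a.txt' });
console.log(keyA === keyB);       // true
console.log(parseKey(keyA).path); // /tmp/a.txt
```

A load function could then call parseKey(key) to get everything it needs for the fetch, without relying on state outside the key.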

maxAge

If the load callback is called with 3 arguments, the 3rd is passed to the internal lru-cache as the maxAge for the retrieved key.

    function load (key, cb) {
      getValueFromTheKey(key, function (err, item) {
        // item may be undefined when the lookup fails
        if (err) return cb(err)
        cb(null, item.value, item.maxAge)
      })
    }

get(key, cb): If the key is in the cache, calls cb(null, cached) on nextTick. Otherwise, calls the load function that was supplied in the options object and, if it doesn't return an error, caches the result. Multiple get calls with the same key will only ever have a single load call in flight at the same time.

set(key, val, maxAge): Seed the cache. This doesn't have to be done, but can be convenient if you know that something will be fetched soon. maxAge is optional; it is passed to the internal LRU cache.

reset(): Drop all the items in the cache.
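The "single load call at the same time" behavior of get can be pictured with the following sketch. It is written from the description above under simplifying assumptions (no size limit, no expiry) and is not the package's actual source; createCoalescingGet is a hypothetical name.

```javascript
// Sketch of in-flight de-duplication for a callback-style loader.
// Not the async-cache source; createCoalescingGet is a made-up name.
function createCoalescingGet(load) {
  const values = new Map();  // key -> loaded value
  const pending = new Map(); // key -> callbacks waiting on a load in progress

  return function get(key, cb) {
    if (values.has(key)) {
      // Cached: still answer asynchronously, on the next tick.
      process.nextTick(() => cb(null, values.get(key)));
      return;
    }
    if (pending.has(key)) {
      // A load for this key is already running, so just wait for it.
      pending.get(key).push(cb);
      return;
    }
    pending.set(key, [cb]);
    load(key, (err, value) => {
      const waiting = pending.get(key);
      pending.delete(key);
      if (!err) values.set(key, value); // only successful loads are cached
      for (const waiter of waiting) waiter(err, value);
    });
  };
}

// Usage: three overlapping requests trigger a single load.
let loadCalls = 0;
const get = createCoalescingGet((key, cb) => {
  loadCalls += 1;
  setTimeout(() => cb(null, `Value for ${key}`), 10);
});

const results = [];
for (let i = 0; i < 3; i += 1) {
  get('testKey', (err, value) => {
    results.push(value);
    if (results.length === 3) console.log(loadCalls, results[0]); // 1 Value for testKey
  });
}
```

Callers that arrive while a load is pending are queued and all receive the same result, so an expensive lookup runs once per key rather than once per caller.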

FAQs


The npm package async-cache receives a total of 266,334 weekly downloads. On that basis, async-cache is classified as popular.

We found that async-cache has an unhealthy version release cadence and level of project activity, because the last version was released a year ago. It has 1 open source maintainer collaborating on the project.

