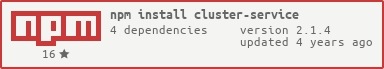

cluster-service


cluster-service - npm Package Compare versions

Comparing version 0.10.0 to 1.0.0

@@ -0,1 +1,33 @@ | ||

| ## v1.0.0 - 5/8/2014 | ||

| Features: | ||

| * #56. Ability to restart web server gracefully under load with no code | ||

| * No code required to enable net statistics | ||

| Fixes: | ||

| * Prevent additional commands in CLI while in progress to resolve state issues | ||

| * Handle failed `start` command gracefully | ||

| * Handle failed `upgrade` command gracefully | ||

| * Fixed `start` command from preventing failed workers to auto-restart | ||

| * Fixed `restart` command from preventing failed workers to auto-restart | ||

| * Further streamlined management of state across multiple cservice instances | ||

| ## v0.10.0 - 5/2/2014 | ||

| Legacy: | ||

| * Dropped support for 'ready' flag, was causing support/complexity issues | ||

| Features: | ||

| * Ability to start without worker | ||

| * Ability to support multiple versions of cservice through shared state | ||

| * Added example workers to `examples/` | ||

| ## v0.9.0 - 2/17/2014 | ||

@@ -2,0 +34,0 @@ |

| var cluster = require("cluster"); | ||

| var colors = require("colors"); | ||

| var locals = require("./lib/defaults"); | ||

| if (!('cservice' in global)) { | ||

| global.cservice = { locals: require("./lib/defaults") }; | ||

| } | ||
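The hunk above moves `locals` off a module-level variable and onto `global.cservice`, which appears to be how several loaded copies of the module end up sharing one state object. A minimal sketch of that pattern follows; `loadDefaults` and the `cserviceSketch` key are hypothetical stand-ins (the package itself uses `./lib/defaults` and `global.cservice`).

```javascript
// Hypothetical stand-in for require("./lib/defaults"); not the package's real defaults.
function loadDefaults() {
  return { isBusy: false, options: { host: "localhost" } };
}

// Simulates what each loaded copy of the module would run at require time.
// The key "cserviceSketch" is an assumption, chosen to avoid touching a real install.
function initSharedState() {
  if (!("cserviceSketch" in global)) {
    // The first copy to load creates the shared object...
    global.cserviceSketch = { locals: loadDefaults() };
  }
  // ...and every later copy reuses it instead of creating its own.
  return global.cserviceSketch.locals;
}

var first = initSharedState();
var second = initSharedState();
first.isBusy = true;
console.log(second.isBusy); // both variables refer to the same object
```

The trade-off is the usual one for globals: state is shared cheaply, but every copy must agree on the shape of `locals`.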

@@ -32,3 +34,3 @@ module.exports = exports; | ||

| get: function() { | ||

| return locals.options; | ||

| return global.cservice.locals.options; | ||

| } | ||

@@ -39,3 +41,3 @@ }); | ||

| get: function() { | ||

| return locals; | ||

| return global.cservice.locals; | ||

| } | ||

@@ -57,2 +59,3 @@ }); | ||

| exports.start = require("./lib/start"); | ||

| exports.netServers = require("./lib/net-servers"); | ||

| exports.netStats = require("./lib/net-stats"); | ||

@@ -71,6 +74,9 @@ | ||

| cluster.worker.module = require(process.env.worker); | ||

| if (locals.workerReady === undefined | ||

| if (global.cservice.locals.workerReady === undefined | ||

| && process.env.ready.toString() === "false") { | ||

| // if workerReady not invoked explicitly, inform master worker is ready | ||

| exports.workerReady(); | ||

| // if workerReady not invoked explicitly, we'll track it automatically | ||

| exports.workerReady(false); | ||

| exports.netServers.waitForReady(function() { | ||

| exports.workerReady(); // NOW we're ready | ||

| }); | ||

| } | ||
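In the hunk above, a worker that never calls `workerReady` itself is first marked as not ready and only reported ready once `netServers.waitForReady` fires. The internals of `waitForReady` are not shown in this diff, so the following is only a sketch of one plausible shape: count the servers and call back once each has emitted `listening`. The helper and the emitter-based fake servers are assumptions.

```javascript
var EventEmitter = require("events").EventEmitter;

// Hypothetical helper: calls done() once every server has emitted "listening".
// Servers that were already listening before this call are not handled here.
function waitForReady(servers, done) {
  var pending = servers.length;
  if (pending === 0) {
    done(); // nothing to wait for
    return;
  }
  servers.forEach(function (server) {
    server.once("listening", function () {
      pending--;
      if (pending === 0) {
        done();
      }
    });
  });
}

// Plain emitters stand in for net/http servers in this sketch.
var serverA = new EventEmitter();
var serverB = new EventEmitter();
var ready = false;
waitForReady([serverA, serverB], function () { ready = true; });

serverA.emit("listening");
var readyAfterFirst = ready; // still waiting on serverB
serverB.emit("listening");
console.log(readyAfterFirst, ready);
```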

@@ -77,0 +83,0 @@ }, 10); |

| var cservice = require("../cluster-service"), | ||

| util = require("util"), | ||

| locals = null, | ||

| options = null; | ||

| exports.init = function(l, o) { | ||

| locals = l; | ||

| exports.init = function(o) { | ||

| options = o; | ||

@@ -35,2 +33,9 @@ | ||

| function onCommand(question) { | ||

| if (cservice.locals.isBusy === true) { | ||

| cservice.error("Busy... Try again after previous command returns."); | ||

| return; | ||

| } | ||

| cservice.locals.isBusy = true; | ||

| var args; | ||

@@ -41,3 +46,3 @@ question = question.replace(/[\r\n]/g, ""); | ||

| if (!locals.events[args[0]]) { | ||

| if (!cservice.locals.events[args[0]]) { | ||

| onCallback("Command " + args[0] + " not found. Try 'help'."); | ||

@@ -60,4 +65,6 @@ | ||

| function onCallback(err, result) { | ||

| delete locals.reason; | ||

| delete cservice.locals.reason; | ||

| cservice.locals.isBusy = false; | ||

| if (err) { | ||

@@ -64,0 +71,0 @@ cservice.error( |
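The CLI hunks above add an `isBusy` flag: `onCommand` refuses new input while a command is in flight, and `onCallback` clears the flag when the command reports back. A self-contained sketch of that guard is below; the `state` object, the `events` table and the `log` array are stand-ins for `cservice.locals` and the real command handlers, not the package's code.

```javascript
var state = { isBusy: false }; // stand-in for cservice.locals
var log = [];
var pendingCb = null;

// Hypothetical command table; each handler reports back through cb.
var events = {
  slow: function (cb) { pendingCb = cb; },      // completes later
  fast: function (cb) { cb(null, "done"); }     // completes immediately
};

function onCallback(err, result) {
  state.isBusy = false; // always release the guard, on success or failure
  log.push(err ? "error: " + err : "ok: " + result);
}

function onCommand(name) {
  if (state.isBusy === true) {
    log.push("busy"); // reject instead of interleaving two commands
    return;
  }
  state.isBusy = true;
  if (!events[name]) {
    onCallback("Command " + name + " not found. Try 'help'.");
    return;
  }
  events[name](onCallback);
}

onCommand("slow");                 // accepted, still running
onCommand("fast");                 // rejected while "slow" is in flight
pendingCb(null, "slow finished");  // "slow" completes, guard released
onCommand("fast");                 // accepted now
console.log(log);
```

Note that the guard is only safe if every code path ends in `onCallback`; a handler that never calls back would leave the CLI permanently busy.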

@@ -38,3 +38,3 @@ var async = require("async"), | ||

| async.series(tasks, function(err) { | ||

| evt.locals.restartOnFailure = originalAutoRestart; | ||

| evt.locals.restartOnFailure = originalAutoRestart; // restore | ||

@@ -127,10 +127,5 @@ if (err) { | ||

| if (worker.cservice.onStop === true) { | ||

| worker.send({cservice: {cmd: "onWorkerStop"}}); | ||

| } else { | ||

| worker.kill("SIGTERM"); // exit worker | ||

| } | ||

| require("../workers").exitGracefully(worker); | ||

| }); | ||

| }; | ||

| } |

@@ -54,7 +54,4 @@ /* jshint loopfunc:true */ | ||

| ); | ||

| if (worker.onWorkerStop === true) { // try the nice way first | ||

| worker.send({cservice: {cmd: "onWorkerStop"}}); | ||

| } else { | ||

| worker.kill("SIGTERM"); // exit worker | ||

| } | ||

| require("../workers").exitGracefully(worker); | ||

| }); | ||

@@ -61,0 +58,0 @@ |

@@ -20,2 +20,3 @@ var async = require("async"), | ||

| evt.locals.reason = "start"; | ||

| var originalAutoRestart = evt.locals.restartOnFailure; | ||

| evt.locals.restartOnFailure = false; | ||

@@ -32,2 +33,4 @@ | ||

| async.series(tasks, function(err) { | ||

| evt.locals.restartOnFailure = originalAutoRestart; // restore | ||

| if (err) { | ||
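The two `start` hunks above save `restartOnFailure`, disable it while the command runs, and restore it in the `async.series` callback before the error check, which matches the changelog entry about failed workers no longer losing auto-restart. The sketch below shows the same save/disable/restore shape; the small `series` runner is an assumption used so the example does not depend on the `async` package.

```javascript
var locals = { restartOnFailure: true }; // stand-in for evt.locals

// Minimal series runner (assumption; the package uses async.series).
function series(tasks, done) {
  var i = 0;
  (function next(err) {
    if (err || i === tasks.length) {
      done(err);
      return;
    }
    tasks[i++](next);
  })();
}

function runCommand(tasks, cb) {
  var originalAutoRestart = locals.restartOnFailure;
  locals.restartOnFailure = false; // no auto-restart while the command runs
  series(tasks, function (err) {
    // Restore before looking at err, so a failed command cannot leave it disabled.
    locals.restartOnFailure = originalAutoRestart;
    cb(err);
  });
}

var seenDuringRun;
var result;
runCommand([
  function (next) { seenDuringRun = locals.restartOnFailure; next(); },
  function (next) { next(new Error("worker failed to start")); }
], function (err) { result = err; });

console.log(seenDuringRun, locals.restartOnFailure, result.message);
```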

@@ -77,2 +80,4 @@ cb(err); | ||

| newKiller = setTimeout(function() { | ||

| if (!newWorker) | ||

| return; | ||

| newWorker.removeListener("exit", exitListener); // remove temp listener | ||

@@ -82,2 +87,3 @@ newWorker.kill("SIGKILL"); // go get'em, killer | ||

| }, options.timeout); | ||

| newKiller.unref(); | ||

| } | ||

@@ -87,5 +93,8 @@ | ||

| newWorker = evt.service.newWorker(options, function(err, newWorker) { | ||

| newWorker.removeListener("exit", exitListener); // remove temp listener | ||

| if (newWorker) { // won't exist if failure | ||

| newWorker.removeListener("exit", exitListener); // remove temp listener | ||

| } | ||

| if (newKiller) { // timeout no longer needed | ||

| clearTimeout(newKiller); | ||

| newKiller = null; | ||

| } | ||

@@ -92,0 +101,0 @@ |
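The hunks above harden the start timeout in three ways: the timer callback returns early when no worker exists, the timer is `unref`'d so it cannot hold the process open, and it is cleared once the worker callback fires. A sketch of those three pieces together is below; `armKiller` and the fake worker are hypothetical names for illustration.

```javascript
// Hypothetical helper: arms a timer that force-kills a worker unless disarmed.
function armKiller(getWorker, timeoutMs) {
  var timer = setTimeout(function () {
    var worker = getWorker();
    if (!worker) {
      return; // worker never came up, nothing to kill
    }
    worker.kill("SIGKILL");
  }, timeoutMs);
  timer.unref(); // do not keep the process alive just for this timer

  return {
    disarm: function () {
      if (timer) { // timeout no longer needed
        clearTimeout(timer);
        timer = null;
      }
    },
    isArmed: function () { return timer !== null; }
  };
}

// A plain object stands in for a cluster worker.
var fakeWorker = { killed: false, kill: function () { this.killed = true; } };
var killer = armKiller(function () { return fakeWorker; }, 50);
killer.disarm(); // the worker started in time
console.log(killer.isArmed(), fakeWorker.killed);
```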

@@ -101,6 +101,12 @@ var async = require("async"), | ||

| newWorker = evt.service.newWorker(options, function(err, newWorker) { | ||

| newWorker.removeListener("exit", exitListener); // remove temp listener | ||

| if (newWorker) { // won't exist if failure | ||

| newWorker.removeListener("exit", exitListener); // remove temp listener | ||

| } | ||

| if (newKiller) { // timeout no longer needed | ||

| clearTimeout(newKiller); | ||

| } | ||

| if (err) { | ||

| cb(err); | ||

| return; | ||

| } | ||

@@ -124,10 +130,5 @@ // ok, lets stop old worker | ||

| if (worker.cservice.onStop === true) { | ||

| worker.send({cservice: {cmd: "onWorkerStop"}}); | ||

| } else { | ||

| worker.kill("SIGTERM"); // exit worker | ||

| } | ||

| require("../workers").exitGracefully(worker); | ||

| }); | ||

| }; | ||

| } |

@@ -8,5 +8,7 @@ var os = require("os"); | ||

| state: 0, // 0-not running, 1-starting, 2-running | ||

| isBusy: false, | ||

| isAttached: false, // attached to CLI over REST | ||

| workerReady: undefined, | ||

| restartOnFailure: true, | ||

| net: { servers: {} }, | ||

| options: { | ||

@@ -13,0 +15,0 @@ host: "localhost", |

| var util = require("util"), | ||

| http = require("http"), | ||

| querystring = require("querystring"), | ||

| locals = null, | ||

| options = null, | ||

| cservice = require("../cluster-service"); | ||

| exports.init = function(l, o) { | ||

| locals = l; | ||

| exports.init = function(o) { | ||

| options = o; | ||

@@ -11,0 +9,0 @@ |

@@ -7,14 +7,16 @@ var cservice = require("../cluster-service"), | ||

| control = require("./control"), | ||

| locals = {}, | ||

| options = null, | ||

| server = null; | ||

| exports.init = function(l, o, cb) { | ||

| locals = l; | ||

| exports.init = function(o, cb) { | ||

| options = o; | ||

| if (options.ssl) { // HTTPS | ||

| server = locals.http = https.createServer(options.ssl, processRequest); | ||

| server = cservice.locals.http = | ||

| https.createServer(options.ssl, processRequest) | ||

| ; | ||

| } else { // HTTP | ||

| server = locals.http = http.createServer(processRequest); | ||

| server = cservice.locals.http = | ||

| http.createServer(processRequest) | ||

| ; | ||

| } | ||

@@ -89,3 +91,3 @@ | ||

| if (!locals.events[args[0]]) { | ||

| if (!cservice.locals.events[args[0]]) { | ||

| cservice.error("Command " + args[0].cyan + " not found".error); | ||

@@ -127,3 +129,3 @@ res.writeHead(404); | ||

| try { | ||

| delete locals.reason; | ||

| delete cservice.locals.reason; | ||

@@ -130,0 +132,0 @@ if (err) { // should do nothing if response already sent |

@@ -45,3 +45,3 @@ /* jshint loopfunc:true */ | ||

| // start the http client | ||

| require("./http-client").init(cservice.locals, options); | ||

| require("./http-client").init(options); | ||

| } else { // we're the single-master | ||

@@ -55,6 +55,4 @@ cservice.locals.isAttached = false; | ||

| }); | ||

| cluster.on("exit", function(worker, code, signal) { | ||

| cservice.trigger("workerExit", worker); | ||

| // do not restart if there is a reason, or disabled | ||

@@ -78,3 +76,3 @@ if ( | ||

| // wire-up CLI | ||

| require("./cli").init(cservice.locals, options); | ||

| require("./cli").init(options); | ||

| } | ||

@@ -96,33 +94,38 @@ } | ||

| // if we're NOT attached, we can spawn the workers now | ||

| } else if (cservice.locals.isAttached === false && options.workers !== null) { | ||

| } else if (cservice.locals.isAttached === false) { | ||

| // fork it, i'm out of here | ||

| workersRemaining = 0; | ||

| workersForked = 0; | ||

| workers = typeof options.workers === "string" | ||

| ? {main: {worker: options.workers}} | ||

| : options.workers; | ||

| for (workerName in workers) { | ||

| worker = workers[workerName]; | ||

| workerCount = worker.count || options.workerCount; | ||

| workersRemaining += workerCount; | ||

| workersForked += workerCount; | ||

| for (i = 0; i < workerCount; i++) { | ||

| cservice.newWorker(worker, function(err) { | ||

| workersRemaining--; | ||

| if (err) { | ||

| workersRemaining = 0; // callback now | ||

| } | ||

| if (workersRemaining === 0) { | ||

| if (typeof options.master === "string") { | ||

| require(path.resolve(options.master)); | ||

| if (options.workers !== null) { | ||

| workers = typeof options.workers === "string" | ||

| ? {main: {worker: options.workers}} | ||

| : options.workers; | ||

| for (workerName in workers) { | ||

| worker = workers[workerName]; | ||

| workerCount = worker.count || options.workerCount; | ||

| workersRemaining += workerCount; | ||

| workersForked += workerCount; | ||

| for (i = 0; i < workerCount; i++) { | ||

| cservice.newWorker(worker, function(err) { | ||

| workersRemaining--; | ||

| if (err) { | ||

| workersRemaining = 0; // callback now | ||

| } | ||

| if(cb){ | ||

| cb(err); | ||

| if (workersRemaining === 0) { | ||

| if (typeof options.master === "string") { | ||

| require(path.resolve(options.master)); | ||

| } | ||

| if(cb){ | ||

| cb(err); | ||

| } | ||

| } | ||

| } | ||

| }); | ||

| }); | ||

| } | ||

| } | ||

| } | ||

| // if no forking took place, make sure cb is invoked | ||

| if (workersForked === 0) { | ||

| cservice.log("No workers running. Try 'start server.js'.".info); | ||

| if(cb){ | ||
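The restructured hunk above does three things: it turns a string `workers` option into a one-entry object, counts down outstanding forks before invoking `cb`, and still invokes `cb` when `workers` is `null` and nothing was forked. The sketch below follows that flow under stated assumptions: `startAll` and the injected `fork` function are hypothetical, and unlike the hunk it totals the worker count before forking and uses a `finished` flag so the callback fires at most once.

```javascript
// Hypothetical sketch; fork(worker, done) stands in for cservice.newWorker.
function startAll(options, fork, cb) {
  var workers = {};
  if (options.workers !== null && options.workers !== undefined) {
    workers = typeof options.workers === "string"
      ? { main: { worker: options.workers } } // a string means a single worker
      : options.workers;
  }

  var names = Object.keys(workers);
  var remaining = names.reduce(function (sum, name) {
    return sum + (workers[name].count || options.workerCount);
  }, 0);
  var total = remaining;
  var finished = false;

  function finish(err) {
    if (!finished) { // guarantee a single callback
      finished = true;
      cb(err);
    }
  }

  if (total === 0) { // no forking took place, still report back
    finish();
    return total;
  }

  names.forEach(function (name) {
    var count = workers[name].count || options.workerCount;
    for (var i = 0; i < count; i++) {
      fork(workers[name], function (err) {
        remaining--;
        if (err || remaining === 0) {
          finish(err);
        }
      });
    }
  });
  return total;
}

var calls = [];
var alwaysOk = function (worker, done) { done(); };
var forkedA = startAll({ workers: "server.js", workerCount: 2 }, alwaysOk,
  function (err) { calls.push(err || "started"); });
var forkedB = startAll({ workers: null, workerCount: 2 }, alwaysOk,
  function (err) { calls.push(err || "no workers"); });
console.log(forkedA, forkedB, calls);
```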

@@ -155,3 +158,3 @@ cb(); | ||

| httpserver.init(cservice.locals, options, function(err) { | ||

| httpserver.init(options, function(err) { | ||

| if (!err) { | ||

@@ -158,0 +161,0 @@ cservice.log( |

@@ -41,7 +41,2 @@ var cservice = require("../cluster-service"), | ||

| options = cservice.locals.options; | ||

| if (typeof options.workers === "undefined") { | ||

| // only define default worker if worker is undefined (null is reserved | ||

| // for "no worker") | ||

| options.workers = "./worker.js"; // default worker to execute | ||

| } | ||

| if (typeof options.workers === "string") { | ||

@@ -48,0 +43,0 @@ options.workers = { |

@@ -28,8 +28,3 @@ var cservice = require("../cluster-service"), | ||

| if (options.servers) { | ||

| if (util.isArray(options.servers) === false) { | ||

| options.servers = [options.servers]; | ||

| } | ||

| for (var i = 0; i < options.servers.length; i++) { | ||

| require("./net-stats")(options.servers[i]); | ||

| } | ||

| require("./net-servers").netServersAdd(options.servers); | ||

| } | ||

@@ -57,7 +52,14 @@ | ||

| case "onWorkerStop": | ||

| if (typeof onWorkerStop === "function") { | ||

| onWorkerStop(); | ||

| } | ||

| cservice.netServers.close(function() { | ||

| if (typeof onWorkerStop === "function") { | ||

| // if custom handler is provided rely on that to | ||

| // cleanup and exit process | ||

| onWorkerStop(); | ||

| } else { | ||

| // otherwise we can exit now that net servers have exited gracefully | ||

| process.exit(); | ||

| } | ||

| }); | ||

| break; | ||

| } | ||

| } |
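The `onWorkerStop` hunk above changes the order of shutdown: net servers are closed first, then either the custom handler runs (and owns the exit) or the worker exits on its own. The following sketch mirrors that ordering; `closeAll`, `handleStop` and the fake servers are hypothetical, and `exit` is injected so the example does not terminate the process.

```javascript
// Hypothetical helper: closes every server, then calls done() exactly once.
function closeAll(servers, done) {
  var pending = servers.length;
  if (pending === 0) {
    done();
    return;
  }
  servers.forEach(function (server) {
    server.close(function () {
      pending--;
      if (pending === 0) {
        done();
      }
    });
  });
}

function handleStop(servers, onWorkerStop, exit) {
  closeAll(servers, function () {
    if (typeof onWorkerStop === "function") {
      onWorkerStop(); // the custom handler is responsible for cleanup and exit
    } else {
      exit(); // in a real worker this would be process.exit
    }
  });
}

// Fake servers record the order of events instead of closing sockets.
function fakeServer(order, name) {
  return { close: function (cb) { order.push("closed " + name); cb(); } };
}

var order = [];
handleStop([fakeServer(order, "a"), fakeServer(order, "b")], undefined,
  function () { order.push("exit"); });
handleStop([], function () { order.push("custom stop"); },
  function () { order.push("exit"); });
console.log(order);
```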

@@ -7,2 +7,3 @@ var cservice = require("../cluster-service"), | ||

| exports.getByPID = getByPID; | ||

| exports.exitGracefully = exitGracefully; | ||

@@ -74,1 +75,6 @@ function get() { | ||

| } | ||

| function exitGracefully(worker) { | ||

| // inform the worker to exit gracefully | ||

| worker.send({cservice: {cmd: "onWorkerStop"}}); | ||

| } |

| { | ||

| "name": "cluster-service", | ||

| "version": "0.10.0", | ||

| "version": "1.0.0", | ||

| "author": { | ||

@@ -11,11 +11,12 @@ "name": "Aaron Silvas", | ||

| "scripts": { | ||

| "lint" : "npm run-script lint-src && npm run-script lint-test", | ||

| "start": "node scripts/start.js", | ||

| "lint": "npm run-script lint-src && npm run-script lint-test", | ||

| "lint-src": "jshint bin lib cluster-service.js", | ||

| "lint-test": "jshint --config .test-jshintrc test", | ||

| "cover":"istanbul cover ./node_modules/mocha/bin/_mocha -- --ui bdd -R spec -t 5000 -d", | ||

| "test-devel":"./node_modules/.bin/mocha bdd -R spec -t 5000 test/*.js test/workers/*.js", | ||

| "cover": "istanbul cover ./node_modules/mocha/bin/_mocha -- --ui bdd -R spec -t 5000 -d", | ||

| "test-devel": "./node_modules/.bin/mocha bdd -R spec -t 5000 test/*.js test/workers/*.js", | ||

| "test": "npm run-script lint && npm run-script cover" | ||

| }, | ||

| "dependencies": { | ||

| "async": ">=0.2.x", | ||

| "async": "~0.8.0", | ||

| "optimist": ">=0.6.0", | ||

@@ -22,0 +23,0 @@ "colors": ">=0.6.2", |

117

README.md

@@ -5,4 +5,4 @@ # cluster-service | ||

| [](https://www.npmjs.org/package/cluster-service) | ||

| ## Install | ||

@@ -18,14 +18,6 @@ | ||

| The short answer: | ||

| Turn your single process code into a fault-resilient, multi-process service with | ||

| built-in REST & CLI support. Restart or hot upgrade your web servers with zero | ||

| downtime or impact to clients. | ||

| Turns your single process code into a fault-resilient multi-process service with | ||

| built-in REST & CLI support. | ||

| The long answer: | ||

| Adds the ability to execute worker processes over N cores for extra service resilience, | ||

| includes worker process monitoring and restart on failure, continuous deployment, | ||

| as well as HTTP & command-line interfaces for health checks, cluster commands, | ||

| and custom service commands. | ||

| Presentation: | ||

@@ -35,8 +27,2 @@ | ||

| Stability: | ||

| With the v0.5 release we're effectively at an "Alpha" stage, with some semblance | ||

| of interface stability. Any breaking changes from now on should be handled gracefully | ||

| with either a deprecation schedule, or documented as a breaking change if necessary. | ||

@@ -46,4 +32,2 @@ | ||

| Turning your single process node app/service into a fault-resilient multi-process service with all of the bells and whistles has never been easier! | ||

| Your existing application, be it console app or service of some kind: | ||

@@ -58,2 +42,3 @@ | ||

| cservice "server.js" --accessKey "lksjdf982734" | ||

| // cserviced "server.js" --accessKey "lksjdf982734" // daemon | ||

@@ -73,3 +58,3 @@ This can be done without a global install as well, by updating your ```package.json```: | ||

| Or, if you prefer to control ```cluster-service``` within your code: | ||

| Or, if you prefer to control ```cluster-service``` within your code, we've got you covered: | ||

@@ -104,7 +89,7 @@ // server.js | ||

| You can even pipe raw JSON, which can be processed by the caller: | ||

| You can even pipe raw JSON for processing: | ||

| cservice "restart all" --accessKey "my_access_key" --json | ||

| Check out ***Cluster Commands*** for more details. | ||

| Check out ***Cluster Commands*** for more. | ||

@@ -117,2 +102,6 @@ | ||

| cservice "server.js" --accessKey "123" | ||

| Or via JSON config: | ||

| cservice "config.json" | ||

@@ -128,3 +117,5 @@ | ||

| * `workers` (default: "./worker.js") - Path of worker to start. A string indicates a single worker, | ||

| ### Options: | ||

| * `workers` - Path of worker to start. A string indicates a single worker, | ||

| forked based on value of ```workerCount```. An object indicates one or more worker objects: | ||

@@ -134,9 +125,8 @@ ```{ "worker1": { worker: "worker1.js", cwd: process.cwd(), count: 2, restart: true } }```. | ||

| the ```.js``` extension is detected. | ||

| * `accessKey` - A secret key that must be specified if you wish to invoke commands to your service. | ||

| Allows CLI & REST interfaces. | ||

| * `master` - An optional module to execute for the master process only, once ```start``` has been completed. | ||

| * `accessKey` - A secret key that must be specified if you wish to invoke commands from outside | ||

| your process. Allows CLI & REST interfaces. | ||

| * `config` - A filename to the configuration to load. Useful to keep options from having to be inline. | ||

| This option is automatically set if run via command-line ```cservice "config.json"``` if | ||

| the ```.json``` extension is detected. | ||

| * `host` (default: "localhost") - Host to bind to for REST interface. (Will only bind if accessKey | ||

| * `host` (default: "localhost") - Host to bind to for REST interface. (Will only bind if `accessKey` | ||

| is provided) | ||

@@ -146,11 +136,12 @@ * `port` (default: 11987) - Port to bind to. If you leverage more than one cluster-service on a | ||

| * `workerCount` (default: os.cpus().length) - Gives you control over the number of processes to | ||

| run the same worker concurrently. Recommended to be 2 or more for fault resilience. But some | ||

| run the same worker concurrently. Recommended to be 2 or more to improve availability. But some | ||

| workers do not impact availability, such as task queues, and can be run as a single instance. | ||

| * `cli` (default: false) - Enable the command line interface. Can be disabled for background | ||

| services, or test cases. Note: As of v0.7 and later, this defaults to true if run from command-line. | ||

| * `cli` (default: true) - Enable the command line interface. Can be disabled for background | ||

| services, or test cases. Running `cserviced` will automatically disable the CLI. | ||

| * `ssl` - If provided, will bind using HTTPS by passing this object as the | ||

| [TLS options](http://nodejs.org/api/tls.html#tls_tls_createserver_options_secureconnectionlistener). | ||

| * `run` - Ability to run a command, output result in json, and exit. This option is automatically | ||

| * `run` - Ability to run a command, output result, and exit. This option is automatically | ||

| set if run via command-line ```cservice "restart all"``` and no extension is detected. | ||

| * `json` - If specified in conjunction with ```run```, will *only* output the result in JSON for | ||

| * `json` (default: false) - If specified in conjunction with ```run```, | ||

| will *only* output the result in JSON for | ||

| consumption from other tasks/services. No other data will be output. | ||

@@ -169,3 +160,3 @@ * `silent` (default: false) - If true, forked workers will not send their output to parent's stdio. | ||

| paths are provided, they will be resolved from process.cwd(). | ||

| * `servers` - One (object) or more (array of objects) `Net` objects to listen for ***Net Stats***. | ||

| * `master` - An optional module to execute for the master process only, once ```start``` has been completed. | ||

@@ -222,2 +213,6 @@ | ||

| cservice "server.js" --commands "./commands,../some_more_commands" | ||

| Or if you prefer to manually do so via code: | ||

| var cservice = require("cluster-service"); | ||

@@ -237,9 +232,3 @@ cservice.on("custom", function(evt, cb, arg1, arg2) { // "custom" command | ||

| You may optionally register one more directories of commands via the ```commands``` option: | ||

| cservice.start({ commands: "./commands,../some_more_commands" }); | ||

| The above example allows you to skip manually registering each command via ```cservice.on```. | ||

| ## Cluster Events | ||

@@ -256,4 +245,5 @@ | ||

| By default, when a process is started successfully without exiting, it is assumed to be "running". | ||

| This behavior is not always desired however, and may optionally be controlled by: | ||

| While web servers are automatically wired up and do not require async logic (as of v1.0), if | ||

| your service requires any other asynchronous initialization code before being ready, this | ||

| is how it can be done. | ||

@@ -267,3 +257,3 @@ Have the worker inform the master once it is actually ready: | ||

| require("cluster-service").workerReady(); // we're ready! | ||

| }); | ||

| }, 1000); | ||

@@ -274,3 +264,6 @@ Additionally, a worker may optionally perform cleanup tasks prior to exit, via: | ||

| require("cluster-service").workerReady({ | ||

| onWorkerStop: function() { /* lets clean this place up */ } | ||

| onWorkerStop: function() { | ||

| // lets clean this place up | ||

| process.exit(); // we're responsible for exiting if we register this cb | ||

| } | ||

| }); | ||

@@ -299,40 +292,4 @@ | ||

| ## Continuous Deployment | ||

| Combining the Worker Process (Cluster) model with a CLI piped REST API enables the ability | ||

| to command the already-running service to replace existing workers with workers in a | ||

| different location. This capability is still a work in progress, but initial tests are promising. | ||

| * Cluster Service A1 starts | ||

| * N child worker processes started | ||

| * Cluster Service A2 starts | ||

| * A2 pipes CLI to A1 | ||

| * A2 issues "upgrade" command | ||

| * A1 spins up A2 worker | ||

| * New worker is monitored for N seconds for failure, and cancels upgrade if failure occurs | ||

| * Remaining N workers are replaced, one by one, until all are running A2 workers | ||

| * Upgrade reports success | ||

| ## Net Stats | ||

| As of v0.8, you may optionally collect network statistics within cservice. To enable this feature, | ||

| you must provide all Net object servers (tcp, http, https, etc) in one of two ways: | ||

| var server = require("http").createServer(onRequest); | ||

| require("cluster-service").netStats(server); // listen to net stats | ||

| Or you can provide the net server objects via the ```servers``` option: | ||

| var server = require("http").createServer(onRequest); | ||

| require("cluster-service").workerReady({ servers: [server] }); // listen to net stats | ||

| Net statistics summary may be found via the ```info``` command, and individual process | ||

| net statistics may be found via the ```workers``` command. | ||

| ## Tests & Code Coverage | ||

@@ -339,0 +296,0 @@ |

@@ -34,3 +34,2 @@ var cservice = require("../cluster-service"); | ||

| httpclient.init( | ||

| cservice.locals, | ||

| extend(cservice.options, {accessKey: "123", silentMode: true}) | ||

@@ -37,0 +36,0 @@ ); |

New alerts

License Policy Violation

License: This package is not allowed per your license policy. Review the package's license to ensure compliance.

Found 1 instance in 1 package

Network access

Supply chain risk: This module accesses the network.

Found 2 instances in 1 package

Fixed alerts

License Policy Violation

License: This package is not allowed per your license policy. Review the package's license to ensure compliance.

Found 1 instance in 1 package

No v1

Quality: Package is not semver >=1. This means it is not stable and does not support ^ ranges.

Found 1 instance in 1 package

Improved metrics

- Total package byte size: increased by 4.72% (123438)
- Number of package files: increased by 10.45% (74)
- Lines of code: increased by 6.86% (3286)
- Number of low quality alerts: decreased by 100% (0)

Worsened metrics

- Number of lines in readme file: decreased by 12.43% (303)
- Number of medium supply chain risk alerts: increased by 100% (8)

Dependency changes

+ Added async@0.8.0 (transitive)

- Removed async@3.2.6 (transitive)

Updated async@~0.8.0
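These dependency changes follow from the `package.json` hunk, where the `async` range went from `>=0.2.x` to `~0.8.0`. The old range has no upper bound, so a resolver can pick a much later release such as 3.2.6; a tilde range with a full `x.y.z` version only admits patch releases of that minor version. The sketch below checks that rule by hand; it is a simplified illustration that ignores pre-release tags and partial versions, not the `semver` package's implementation.

```javascript
// Parses "x.y.z" into numbers; pre-release tags are not handled in this sketch.
function parseVersion(v) {
  return v.split(".").map(Number);
}

// For a full "~x.y.z" range: same major and minor, patch at least z.
function satisfiesTilde(version, range) {
  var want = parseVersion(range.replace(/^~/, ""));
  var have = parseVersion(version);
  return have[0] === want[0] && have[1] === want[1] && have[2] >= want[2];
}

var pinnedOk = satisfiesTilde("0.8.0", "~0.8.0");   // the version that was added
var latestOk = satisfiesTilde("3.2.6", "~0.8.0");   // the version that was removed
console.log(pinnedOk, latestOk);
```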