Product

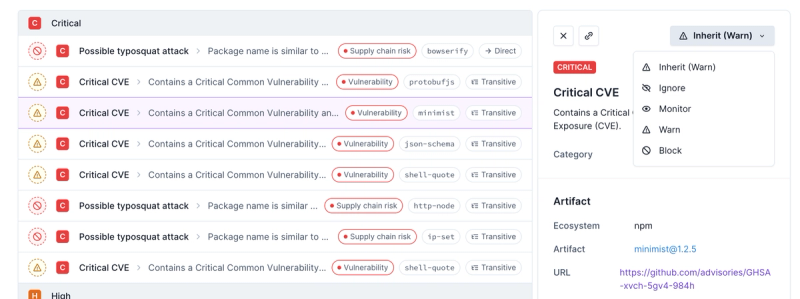

Introducing Enhanced Alert Actions and Triage Functionality

Socket now supports four distinct alert actions instead of the previous two, and alert triaging allows users to override the actions taken for all individual alerts.

get-stream


Package description

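The get-stream npm package is a utility that reads a stream to completion and returns its contents as a string, a buffer, or an array. It is useful for converting streams into a more convenient form in Node.js applications. The examples in this section use the CommonJS API of get-stream 6.x and earlier (require('get-stream'), getStream.buffer() and getStream.array()). Current releases, documented in the Readme below, are ESM-only and export named functions such as getStreamAsBuffer() and getStreamAsArray().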
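To make "get a stream as a string" concrete, here is a minimal sketch assuming a recent Node.js version. It is not get-stream's implementation, and the helper name streamToString is hypothetical. It iterates the stream with for await and decodes binary chunks with a TextDecoder in streaming mode, so a multi-byte UTF-8 character split across two chunks is still decoded correctly.

```javascript
const {Readable} = require('node:stream');

// Hypothetical helper (not part of get-stream): read a whole stream into a string.
async function streamToString(stream) {
	const decoder = new TextDecoder(); // UTF-8 by default
	let result = '';
	for await (const chunk of stream) {
		// String chunks pass through; binary chunks are decoded. {stream: true}
		// holds back an incomplete multi-byte sequence until the next chunk.
		result += typeof chunk === 'string' ? chunk : decoder.decode(chunk, {stream: true});
	}
	// Flush any bytes the decoder is still holding.
	return result + decoder.decode();
}

// Usage: '€' is 3 bytes in UTF-8; split it across two chunks.
const euro = Buffer.from('€');
const stream = Readable.from([euro.subarray(0, 1), euro.subarray(1)]);
streamToString(stream).then(text => console.log(text)); // prints €
```

Decoding each chunk independently with buffer.toString() would turn the split character into replacement characters; the streaming decoder avoids that.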

Get stream as a string

This feature allows you to convert a readable stream into a string. It is useful when you want to process the contents of a file or any readable stream as a string.

const getStream = require('get-stream');
const fs = require('fs');

(async () => {
	const stream = fs.createReadStream('file.txt');
	// Resolves with the whole file contents as a string
	const data = await getStream(stream);
	console.log(data);
})();

Get stream as a buffer

This feature allows you to convert a readable stream into a Node.js Buffer. It is useful when you need to handle binary data from streams.

const getStream = require('get-stream');
const fs = require('fs');

(async () => {
	const stream = fs.createReadStream('file.txt');
	// Resolves with the whole file contents as a Buffer
	const data = await getStream.buffer(stream);
	console.log(data);
})();

Get stream as an array

This feature allows you to convert a readable stream into an array of the chunks it emitted. It is useful when you want to process a stream's data chunk by chunk.

const getStream = require('get-stream');
const fs = require('fs');

(async () => {
	const stream = fs.createReadStream('file.txt');
	// Resolves with an array of the chunks the stream emitted
	const data = await getStream.array(stream);
	console.log(data);
})();

Similar packages

concat-stream is a writable stream that concatenates data and calls a callback with the result. It is similar to get-stream but uses a callback pattern instead of promises.

Buffer List (bl) is a storage object for collections of Node Buffers, which can be easily read and written. It is similar to get-stream's buffer functionality but offers more features for manipulating the buffer list.

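stream-to-promise converts a Node.js stream into a promise. For a writable stream, the promise resolves when the 'finish' event is emitted; for a readable stream, it resolves with the collected data once the stream ends. It overlaps with get-stream for readable streams, but it also covers waiting for writable streams to finish.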
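Waiting for a writable stream's 'finish' event can also be done with Node.js's built-in finished() from node:stream/promises. A minimal sketch, assuming Node.js 15 or later; it illustrates the idea rather than stream-to-promise's own API, and the helper name writeAll is hypothetical.

```javascript
const {Writable} = require('node:stream');
const {finished} = require('node:stream/promises');

// Hypothetical helper: write every chunk, end the stream, and wait for 'finish'.
// Backpressure (the return value of write()) is ignored for brevity.
async function writeAll(writable, chunks) {
	for (const chunk of chunks) {
		writable.write(chunk);
	}
	writable.end();
	// Resolves once the stream has finished; rejects if the stream errors.
	await finished(writable);
}

// Usage: a Writable that records what it receives.
const received = [];
const sink = new Writable({
	write(chunk, encoding, callback) {
		received.push(chunk.toString());
		callback();
	},
});
writeAll(sink, ['uni', 'corn']).then(() => console.log(received.join(''))); // prints unicorn
```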

Readme

Get a stream as a string, Buffer, ArrayBuffer or array

npm install get-stream

import fs from 'node:fs';
import getStream from 'get-stream';

const stream = fs.createReadStream('unicorn.txt');

// Logs the contents of unicorn.txt as a string (in this example, an ASCII-art unicorn)
console.log(await getStream(stream));

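import getStream from 'get-stream';

// Web streams, such as the body of a fetch() response, are supported too
const {body: readableStream} = await fetch('https://example.com');
console.log(await getStream(readableStream));

import {opendir} from 'node:fs/promises';
import {getStreamAsArray} from 'get-stream';

// So are async iterables, such as the directory handle returned by opendir()
const directory = '.'; // example path: any directory works
const asyncIterable = await opendir(directory);
console.log(await getStreamAsArray(asyncIterable));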

API

The following methods read the stream's contents and return them as a promise.

stream: stream.Readable, ReadableStream, or AsyncIterable<string | Buffer | ArrayBuffer | DataView | TypedArray>

options: Options
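All three accepted input kinds can be consumed with the same for await loop, because Node.js Readable streams, web ReadableStreams (global in Node.js 18 and later) and async generators all implement Symbol.asyncIterator. A minimal sketch under that assumption; collectChunks is a hypothetical helper, not part of get-stream, and it keeps each chunk as-is, which is also why an array result can hold objects.

```javascript
const {Readable} = require('node:stream');

// Hypothetical helper: gather every chunk from any async iterable into an array.
async function collectChunks(source) {
	const chunks = [];
	for await (const chunk of source) {
		chunks.push(chunk);
	}
	return chunks;
}

// Three different sources producing the same values.
const nodeStream = Readable.from(['a', 'b']);
const webStream = new ReadableStream({
	start(controller) {
		controller.enqueue('a');
		controller.enqueue('b');
		controller.close();
	},
});
async function* generator() {
	yield 'a';
	yield 'b';
}

// Each of the three results is the array ['a', 'b'].
Promise.all([collectChunks(nodeStream), collectChunks(webStream), collectChunks(generator())])
	.then(results => console.log(results));
```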

getStream(stream, options?)

Get the given stream as a string.

getStreamAsBuffer(stream, options?)

Get the given stream as a Node.js Buffer.

import fs from 'node:fs';
import {getStreamAsBuffer} from 'get-stream';

const stream = fs.createReadStream('unicorn.png');
console.log(await getStreamAsBuffer(stream));

getStreamAsArrayBuffer(stream, options?)

Get the given stream as an ArrayBuffer.

import {getStreamAsArrayBuffer} from 'get-stream';

const {body: readableStream} = await fetch('https://example.com');
console.log(await getStreamAsArrayBuffer(readableStream));

getStreamAsArray(stream, options?)

Get the given stream as an array. Unlike other methods, this supports streams of objects.

import {getStreamAsArray} from 'get-stream';

const {body: readableStream} = await fetch('https://example.com');
console.log(await getStreamAsArray(readableStream));

options

Type: object

maxBuffer

Type: number

Default: Infinity

Maximum length of the stream. If exceeded, the promise will be rejected with a MaxBufferError.

Depending on the method, the length is measured with string.length, buffer.length, arrayBuffer.byteLength or array.length.
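The maxBuffer check can be pictured as a running length counter that stops reading once the limit is passed. The sketch below covers only the string case and assumes Node.js; collectWithLimit and LengthLimitError are hypothetical names that mimic the documented behavior (measure with string.length, reject when the limit is exceeded). They are not get-stream's internals or its MaxBufferError.

```javascript
const {Readable} = require('node:stream');

// Hypothetical error type standing in for a "too large" rejection.
class LengthLimitError extends Error {
	constructor(maxLength) {
		super(`Stream exceeded the maximum length of ${maxLength}`);
		this.name = 'LengthLimitError';
	}
}

// Hypothetical helper: collect chunks as a string, rejecting as soon as the
// total string.length goes over maxLength (Infinity means no limit).
async function collectWithLimit(source, maxLength = Infinity) {
	let text = '';
	for await (const chunk of source) {
		text += String(chunk);
		if (text.length > maxLength) {
			// Leaving the for await loop early also destroys a Node.js Readable.
			throw new LengthLimitError(maxLength);
		}
	}
	return text;
}

// 'uni' (3) is within the limit, but 'unicorn' (7) exceeds 5.
collectWithLimit(Readable.from(['uni', 'corn']), 5)
	.catch(error => console.log(error.name)); // prints LengthLimitError
```

Checking after each chunk means an oversized stream is rejected as soon as the offending chunk arrives, without reading the rest of it.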

Errors

If the stream errors, the returned promise will be rejected with the error. Any contents already read from the stream will be set to error.bufferedData, which is a string, a Buffer, an ArrayBuffer or an array depending on the method used.

import getStream from 'get-stream';

try {
	// streamThatErrorsAtTheEnd() is a placeholder for any stream that emits 'unicorn' and then errors
	await getStream(streamThatErrorsAtTheEnd('unicorn'));
} catch (error) {
	console.log(error.bufferedData);
	//=> 'unicorn'
}

Alternatives

If you do not need the maxBuffer option, error.bufferedData, or browser support, you can use the following methods instead of this package.

streamConsumers.text()

import fs from 'node:fs';
import {text} from 'node:stream/consumers';

const stream = fs.createReadStream('unicorn.txt', {encoding: 'utf8'});
console.log(await text(stream));

streamConsumers.buffer()

import {buffer} from 'node:stream/consumers';

console.log(await buffer(stream));

streamConsumers.arrayBuffer()

import {arrayBuffer} from 'node:stream/consumers';

console.log(await arrayBuffer(stream));

readable.toArray()

console.log(await stream.toArray());

Array.fromAsync()

If your environment supports it:

console.log(await Array.fromAsync(stream));

When getStream() is used (as opposed to getStreamAsBuffer() or getStreamAsArrayBuffer()) on a stream whose content uses an encoding other than UTF-8, such as UTF-16 or Base64, the stream must first be decoded using a transform stream like TextDecoderStream or b64.

import getStream from 'get-stream';

const textDecoderStream = new TextDecoderStream('utf-16le');
const {body: readableStream} = await fetch('https://example.com');
console.log(await getStream(readableStream.pipeThrough(textDecoderStream)));
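Why the decoding step matters can be shown without a network request. In this minimal Node.js sketch (the sample text is arbitrary, and a one-shot TextDecoder stands in for TextDecoderStream for brevity), the same UTF-16LE bytes are decoded once as UTF-8, the TextDecoder default, and once with the correct label.

```javascript
// Encode a string as UTF-16LE bytes, as a non-UTF-8 source might send it.
const bytes = Buffer.from('hi', 'utf16le'); // 4 bytes: 68 00 69 00

// Reading those bytes as UTF-8 keeps each zero byte as a NUL character.
const asUtf8 = new TextDecoder('utf-8').decode(bytes);

// Decoding with the correct label recovers the original text.
const asUtf16 = new TextDecoder('utf-16le').decode(bytes);

console.log(JSON.stringify(asUtf8)); // prints "h\u0000i\u0000"
console.log(asUtf16); // prints hi
```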

getStreamAsArrayBuffer() can be used to create Blobs.

import fs from 'node:fs';
import {getStreamAsArrayBuffer} from 'get-stream';

const stream = fs.createReadStream('unicorn.txt');
console.log(new Blob([await getStreamAsArrayBuffer(stream)]));
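To see what such a Blob holds, here is a minimal sketch assuming Node.js 18 or later, where Blob is a global. It builds the ArrayBuffer by hand from two sample chunks, standing in for the value getStreamAsArrayBuffer() resolves with; it does not call get-stream or reflect its implementation.

```javascript
// Sample binary chunks ("uni" and "corn" in ASCII), as a stream might deliver them.
const chunks = [new Uint8Array([0x75, 0x6e, 0x69]), new Uint8Array([0x63, 0x6f, 0x72, 0x6e])];

// Concatenate the chunks into one ArrayBuffer.
const total = chunks.reduce((sum, chunk) => sum + chunk.byteLength, 0);
const bytes = new Uint8Array(total);
let offset = 0;
for (const chunk of chunks) {
	bytes.set(chunk, offset);
	offset += chunk.byteLength;
}
const arrayBuffer = bytes.buffer;

// Wrap the ArrayBuffer in a Blob with an explicit MIME type.
const blob = new Blob([arrayBuffer], {type: 'text/plain'});
console.log(blob.size, blob.type); // prints 7 text/plain
blob.text().then(text => console.log(text)); // prints unicorn
```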

getStreamAsArray() can be combined with JSON streaming utilities to parse JSON incrementally.

import fs from 'node:fs';
import {compose as composeStreams} from 'node:stream';
import {getStreamAsArray} from 'get-stream';
import streamJson from 'stream-json';
import streamJsonArray from 'stream-json/streamers/StreamArray.js';

const stream = fs.createReadStream('big-array-of-objects.json');
console.log(await getStreamAsArray(
	composeStreams(stream, streamJson.parser(), streamJsonArray.streamArray()),
));

Benchmarks

Timings in milliseconds across four benchmark scenarios; stream.toArray() has no timing in scenarios 3 and 4.

Method                    Scenario 1  Scenario 2  Scenario 3  Scenario 4
getStream()               142         90          223         141
text()                    139         89          221         139
getStreamAsBuffer()       106         127         182         91
buffer()                  83          192         153         80
getStreamAsArrayBuffer()  105         129         171         89
arrayBuffer()             81          195         155         81
getStreamAsArray()        24          89          83          21
stream.toArray()          21          90          -           -

How is this different from concat-stream?

This module accepts a stream instead of being one, and returns a promise instead of using a callback. The API is simpler: it only supports returning a string, a Buffer, an ArrayBuffer or an array. It doesn't rely on fragile type inference; you explicitly choose what you want. And it doesn't depend on the large readable-stream package.

FAQs


The npm package get-stream receives a total of 88,539,782 weekly downloads. As such, get-stream's popularity was classified as popular.

We found that get-stream demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 2 open source maintainers collaborating on the project.
