Product

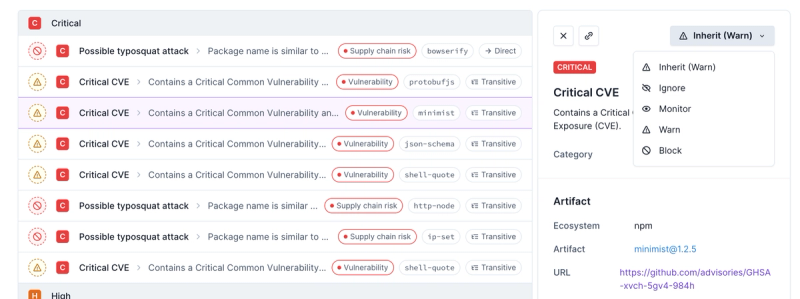

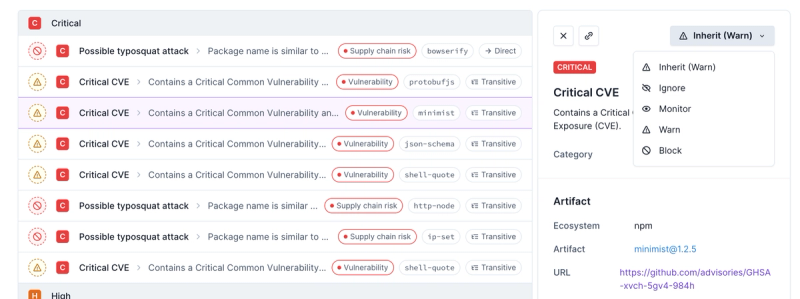

Introducing Enhanced Alert Actions and Triage Functionality

Socket now supports four distinct alert actions instead of the previous two, and alert triaging allows users to override the actions taken for all individual alerts.

lru-cache-for-clusters-as-promised

LRU Cache for Clusters as Promised provides a cluster-safe lru-cache via Promises. For environments not using cluster, the class will provide a Promisified interface to a standard lru-cache.

Each time you call cluster.fork(), a new thread is spawned to run your application. When using a load balancer, even if a user is assigned a particular IP and port, these values are shared between the workers in your cluster, so there is no guarantee that the user will reach the same worker between requests. Caching the same objects in multiple threads is not an efficient use of memory.

LRU Cache for Clusters as Promised stores a single lru-cache on the master thread which is accessed by the workers via IPC messages. The same lru-cache is shared between workers having a common master, so no memory is wasted.

To keep separate caches on the master for different instances on the workers, specify a namespace value in the options argument of the new LRUCache(options) constructor.
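The master-side lookup by namespace described above can be sketched roughly as follows. This is a minimal illustration, not the library's actual implementation: the message shape (`id`, `namespace`, `func`, `args`), the `getCache` and `handleCacheMessage` names, and the use of a plain `Map` in place of a real lru-cache are all assumptions made for the example.

```javascript
// Hypothetical sketch: one cache per namespace, held only on the master.
// A plain Map stands in for a real lru-cache instance.
const caches = new Map();

function getCache(namespace) {
  if (!caches.has(namespace)) {
    caches.set(namespace, new Map());
  }
  return caches.get(namespace);
}

// Apply one request (as a worker might send it over IPC) and build the reply.
function handleCacheMessage(message) {
  const cache = getCache(message.namespace);
  let value;
  switch (message.func) {
    case 'set':
      cache.set(message.args[0], message.args[1]);
      value = true;
      break;
    case 'get':
      value = cache.get(message.args[0]);
      break;
    case 'length':
      value = cache.size;
      break;
    default:
      throw new Error(`unsupported function: ${message.func}`);
  }
  // The id lets the sender match this reply to its original request.
  return { id: message.id, value };
}
```

In a real cluster, the master would presumably call a handler like this from a `worker.on('message', ...)` listener and return the result with `worker.send(...)`. Because every worker that uses the same namespace reaches the same entry in `caches`, the data is stored only once.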

npm install --save lru-cache-for-clusters-as-promised

    const LRUCache = require('lru-cache-for-clusters-as-promised');

    const cache = new LRUCache({
      max: 50,
      stale: false,
      namespace: 'users',
    });

    const user = { name: 'user name' };
    const key = 'userKey';

    // set a user for the key
    cache.set(key, user)
      .then(() => {
        console.log('set the user to the cache');

        // get the same user back out of the cache
        return cache.get(key);
      })
      .then((cachedUser) => {
        console.log('got the user from cache', cachedUser);

        // check the number of users in the cache
        return cache.length();
      })
      .then((size) => {
        console.log('user cache size/length', size);

        // remove all the items from the cache
        return cache.reset();
      })
      .then(() => {
        console.log('the user cache is empty');

        // return user count, this will return the same value as calling length()
        return cache.itemCount();
      })
      .then((size) => {
        console.log('user cache size/itemCount', size);
      });

namespace: string

max: number

maxAge: milliseconds

stale: true|false

Note that `length` and `dispose` are missing, as it is not possible to pass functions via IPC messages.
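To illustrate why function-valued options cannot cross the IPC boundary, here is a small sketch. It assumes Node's default JSON-based IPC serialization and uses a plain JSON round trip as an approximation of what the master would receive; the `options` and `received` names and the option values are made up for the example.

```javascript
// Node's default IPC serialization is JSON-based, so a JSON round trip
// approximates what survives the trip from a worker to the master.
const options = {
  namespace: 'users',
  max: 50,
  maxAge: 60000,
  stale: false,
  // Function-valued options like these cannot be serialized.
  length: (value) => value.length,
  dispose: (key) => console.log('disposed', key),
};

const received = JSON.parse(JSON.stringify(options));

// Only the plain-data options remain; `length` and `dispose` are dropped.
console.log(Object.keys(received));
```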

set(key, value)

get(key)

peek(key)

del(key)

has(key)

reset()

keys()

values()

dump()

prune()

length()

itemCount() (returns the same value as length())

Clustered cache on master thread for clustered environments

                                                                    +-----+
    +--------+  +---------------+  +---------+  +---------------+   # M T #
    |        +--> LRU Cache for +-->         +--> Worker Sends  +--># A H #
    | Worker |  | Clusters as   |  | Promise |  | IPC Message   |   # S R #
    |        <--+ Promised      <--+         <--+ to Master     <---# T E #
    +--------+  +---------------+  +---------+  +---------------+   # E A #
                                                                    # R D #
    v---------------------------------------------------------------+-----+
    +-----+
    * W T *   +--------------+  +--------+  +-----------+
    * O H *--->   Master     +-->        +--> LRU Cache |
    * R R *   | IPC Message  |  | Master |  |    by     |
    * K E *<--+  Listener    <--+        <--+ namespace |
    * E A *   +--------------+  +--------+  +-----------+
    * R D *
    +-----+
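The worker half of this flow, where a Promise is handed back to the caller and settled once the master replies, could look roughly like the sketch below. It is an illustration only: `createRequester`, the message fields (`id`, `namespace`, `func`, `args`, `value`), and the id-matching scheme are assumptions, not the library's documented protocol.

```javascript
// Hypothetical sketch of the worker side: each request gets an id, and the
// Promise is resolved when a reply carrying the same id comes back.
function createRequester(send) {
  const pending = new Map();
  let nextId = 0;

  function request(namespace, func, ...args) {
    return new Promise((resolve) => {
      const id = ++nextId;
      // Remember how to resolve this request once the reply arrives.
      pending.set(id, resolve);
      send({ id, namespace, func, args });
    });
  }

  // Call this for every message received from the master.
  function onReply(reply) {
    const resolve = pending.get(reply.id);
    if (resolve) {
      pending.delete(reply.id);
      resolve(reply.value);
    }
  }

  return { request, onReply };
}
```

Inside an actual worker, `send` would likely be `process.send.bind(process)` and `onReply` would be registered with `process.on('message', onReply)`. A production version would also want a timeout so a lost reply does not leave a Promise pending forever.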

Promisified for non-clustered environments

    +---------------+  +---------------+  +---------+  +-----------+
    |               +--> LRU Cache for +-->         +-->           |
    | Non-clustered |  | Clusters as   |  | Promise |  | LRU Cache |
    |               <--+ Promised      <--+         <--+           |
    +---------------+  +---------------+  +---------+  +-----------+
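For the non-clustered path, the idea of putting a Promise-returning layer over a synchronous cache can be sketched as below. Both classes are hypothetical: `TinyLru` is a small Map-based stand-in for a real lru-cache, and `PromisedCache` only shows the wrapping pattern, not the library's own code.

```javascript
// Hypothetical stand-in for a synchronous cache; a real setup would use lru-cache.
class TinyLru {
  constructor(max) {
    this.max = max;
    this.items = new Map();
  }

  set(key, value) {
    this.items.delete(key); // re-inserting moves the key to the newest position
    this.items.set(key, value);
    if (this.items.size > this.max) {
      // Map iterates in insertion order, so the first key is the least recently used.
      this.items.delete(this.items.keys().next().value);
    }
    return true;
  }

  get(key) {
    if (!this.items.has(key)) return undefined;
    const value = this.items.get(key);
    this.items.delete(key);
    this.items.set(key, value); // mark as most recently used
    return value;
  }
}

// Wrap each synchronous call so callers always receive a Promise.
class PromisedCache {
  constructor(cache) {
    this.cache = cache;
  }

  set(key, value) {
    return Promise.resolve().then(() => this.cache.set(key, value));
  }

  get(key) {
    return Promise.resolve().then(() => this.cache.get(key));
  }
}

const promised = new PromisedCache(new TinyLru(2));
```

With a wrapper like this, calling code can use `promised.set(...).then(...)` whether or not a cluster is involved, which is the point of offering the same interface in both environments.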

FAQs

LRU Cache that is safe for clusters, based on `lru-cache`. Save memory by only caching items on the main thread via a promisified interface.

The npm package lru-cache-for-clusters-as-promised receives a total of 761 weekly downloads, so its popularity is classified as not popular.

Its version release cadence and project activity were rated as not healthy because the last version was released a year ago. It has 1 open source maintainer collaborating on the project.
