Product

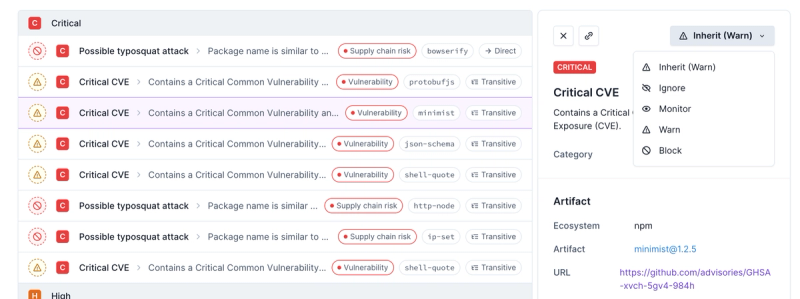

Introducing Enhanced Alert Actions and Triage Functionality

Socket now supports four distinct alert actions instead of the previous two, and alert triaging allows users to override the actions taken for all individual alerts.

rate-limiter-flexible

rate-limiter-flexible counts and limits the number of actions by key and protects against DDoS and brute-force attacks at any scale.

It works with Redis, process Memory, Cluster or PM2, Memcached, MongoDB, MySQL and PostgreSQL, and lets you control the request rate in a single process or a distributed environment.

Atomic increments. All operations in memory or in a distributed environment use atomic increments to prevent race conditions.

Fast. Average request takes 0.7ms in Cluster and 2.5ms in Distributed application. See benchmarks.

Flexible. Combine limiters, block a key for some duration, delay actions, manage failover with insurance options, configure smart key blocking in memory, and more.

Ready for growth. It provides a unified API for all limiters, so when your application grows you can switch limiters without rewriting your code. Prepare your limiters in minutes.

Friendly. No matter which node package you prefer: redis or ioredis, sequelize or knex, memcached, native driver or mongoose. It works with all of them.

It uses a fixed window, as it is much faster than a rolling window. See comparative benchmarks with other libraries.
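To make the fixed-window idea concrete, here is a minimal sketch of a fixed-window counter in plain JavaScript. It is not the library's implementation: the class name `FixedWindowCounter`, its return shape, and the injectable `now` argument are assumptions made for illustration, and it assumes `duration` is greater than 0.

```javascript
// Minimal fixed-window counter (illustrative only, not the library's code).
// `now` is injectable so the behaviour can be checked without real timers.
class FixedWindowCounter {
  constructor({ points, duration }) {
    this.points = points;              // max points per window
    this.durationMs = duration * 1000; // window length in milliseconds
    this.windows = new Map();          // key -> { consumed, expiresAt }
  }

  consume(key, pointsToConsume = 1, now = Date.now()) {
    let entry = this.windows.get(key);
    // Start a new window when none exists or the old one has expired.
    if (!entry || entry.expiresAt <= now) {
      entry = { consumed: 0, expiresAt: now + this.durationMs };
      this.windows.set(key, entry);
    }
    entry.consumed += pointsToConsume;
    return {
      allowed: entry.consumed <= this.points,
      remainingPoints: Math.max(this.points - entry.consumed, 0),
      msBeforeNext: entry.expiresAt - now,
    };
  }
}
```

Each key needs only one counter and one expiry time, which is why a fixed window is cheap compared with a rolling window that has to track individual request timestamps.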

npm i --save rate-limiter-flexible

yarn add rate-limiter-flexible

const { RateLimiterMemory } = require('rate-limiter-flexible');

const opts = {
  points: 6, // 6 points
  duration: 1, // Per second
};

const rateLimiter = new RateLimiterMemory(opts);

// remoteAddress is the key to limit by, e.g. the client IP
rateLimiter.consume(remoteAddress, 2) // consume 2 points
  .then((rateLimiterRes) => {
    // 2 points consumed
  })
  .catch((rateLimiterRes) => {
    // Not enough points to consume
  });

Both Promise resolve and reject return an object of the RateLimiterRes class, unless an error occurs.

Object attributes:

RateLimiterRes = {
  msBeforeNext: 250, // Number of milliseconds before next action can be done
  remainingPoints: 0, // Number of remaining points in current duration
  consumedPoints: 5, // Number of consumed points in current duration
  isFirstInDuration: false, // action is first in current duration
}
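Because a rejection can carry either an error or a RateLimiterRes object, a catch handler usually needs to tell the two apart. The following is a small sketch of one way to do that; the helper name `describeRejection` and its return shape are hypothetical and not part of the library.

```javascript
// Classify the value passed to .catch(): either an Error (for example a
// store failure) or a RateLimiterRes-shaped object, which means the key
// has run out of points.
function describeRejection(rejection) {
  if (rejection instanceof Error) {
    return { type: 'error', message: rejection.message };
  }
  return {
    type: 'limited',
    // Round up so the caller never retries too early.
    retryAfterSec: Math.ceil(rejection.msBeforeNext / 1000),
  };
}
```

A handler could respond with a server error for the `'error'` case and with "429 Too Many Requests" for the `'limited'` case.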

You may want to set the following HTTP headers on the response:

const headers = {
  "Retry-After": rateLimiterRes.msBeforeNext / 1000,
  "X-RateLimit-Limit": opts.points,
  "X-RateLimit-Remaining": rateLimiterRes.remainingPoints,
  "X-RateLimit-Reset": new Date(Date.now() + rateLimiterRes.msBeforeNext)
}
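The header object above can be wrapped in a small helper. This is a sketch under stated assumptions: the function name `buildRateLimitHeaders` is hypothetical, `Retry-After` is rounded up to whole seconds because HTTP defines its delay form as an integer number of seconds, and the reset time is emitted as an ISO 8601 string, which is one convention among several for the non-standard `X-RateLimit-*` headers.

```javascript
// Build response headers from a RateLimiterRes-shaped object.
// `limit` is the configured number of points (opts.points above).
// `now` is injectable to keep the output deterministic in tests.
function buildRateLimitHeaders(limit, rateLimiterRes, now = Date.now()) {
  return {
    // Whole seconds, rounded up so clients do not retry too early.
    'Retry-After': String(Math.ceil(rateLimiterRes.msBeforeNext / 1000)),
    'X-RateLimit-Limit': String(limit),
    'X-RateLimit-Remaining': String(rateLimiterRes.remainingPoints),
    // Moment at which the current window ends.
    'X-RateLimit-Reset': new Date(now + rateLimiterRes.msBeforeNext).toISOString(),
  };
}
```

With Node's built-in `http` module, the result can be passed directly to `res.writeHead(429, headers)`.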

get, block, delete, penalty and reward methods

Some copy/paste examples on Wiki:

points

Default: 4

Maximum number of points that can be consumed over duration.

duration

Default: 1

Number of seconds before consumed points are reset.

Points are never reset if duration is set to 0.

storeClient

Required for store limiters

Has to be a redis, ioredis, memcached, mongodb, pg, mysql2 or mysql client, or any other related pool or connection.

knex, if you use it.

Cut off load peaks:

Specific:

Read detailed description on Wiki.

RateLimiterRes or null.

secDuration seconds.

Average latency when testing a pure Node.js endpoint in a cluster of 4 workers with everything set up on one server.

1000 concurrent clients with maximum 2000 requests per sec during 30 seconds.

1. Memory 0.34 ms

2. Cluster 0.69 ms

3. Redis 2.45 ms

4. Memcached 3.89 ms

5. Mongo 4.75 ms

500 concurrent clients with maximum 1000 req per sec during 30 seconds

6. PostgreSQL 7.48 ms (with connection pool max 100)

7. MySQL 14.59 ms (with connection pool 100)

Appreciated, feel free!

Make sure you have run npm run eslint before creating a PR. All errors have to be fixed.

You can try running npm run eslint-fix to fix some issues.

Any new limiter with storage has to extend RateLimiterStoreAbstract.

It has to implement at least 4 methods:

_getRateLimiterRes parses raw data from store to RateLimiterRes object.

_upsert inserts or updates limits data by key and returns raw data.

_get returns raw data by key.

_delete deletes all key related data and returns true on deleted, false if key is not found.

All other methods depend on the store. See RateLimiterRedis or RateLimiterPostgres for an example.
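The division of work between the four methods can be sketched with a standalone in-memory class. This is only an illustration: `InMemoryStoreSketch` does not extend RateLimiterStoreAbstract, its method signatures are simplified assumptions rather than the library's real ones, and it returns values synchronously where a real store limiter would typically return promises.

```javascript
// Standalone illustration of the four store methods (hypothetical signatures).
class InMemoryStoreSketch {
  constructor({ points }) {
    this.points = points;
    this.rows = new Map(); // key -> { consumed, expiresAt }
  }

  // Insert or update limits data by key and return the raw row.
  _upsert(key, points, msDuration, now = Date.now()) {
    const row = this.rows.get(key);
    if (!row || row.expiresAt <= now) {
      const fresh = { consumed: points, expiresAt: now + msDuration };
      this.rows.set(key, fresh);
      return fresh;
    }
    row.consumed += points;
    return row;
  }

  // Return the raw row by key, or null when nothing is stored.
  _get(key) {
    return this.rows.get(key) || null;
  }

  // Delete all data for the key: true if deleted, false if not found.
  _delete(key) {
    return this.rows.delete(key);
  }

  // Convert a raw row into a RateLimiterRes-shaped plain object.
  _getRateLimiterRes(row, changedPoints, now = Date.now()) {
    return {
      msBeforeNext: Math.max(row.expiresAt - now, 0),
      remainingPoints: Math.max(this.points - row.consumed, 0),
      consumedPoints: row.consumed,
      isFirstInDuration: row.consumed === changedPoints,
    };
  }
}
```

For the real signatures and the remaining store-specific methods, the RateLimiterRedis and RateLimiterPostgres sources are the authoritative reference.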
