Product

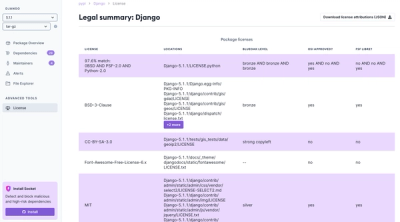

Introducing License Enforcement in Socket

Ensure open-source compliance with Socket’s License Enforcement Beta. Set up your License Policy and secure your software!

This is a utility function to extract semantically distinct keywords. This is an unsupervised method based on word2vec. Current implementation used a word2vec model trained in simplewiki(for English). Other language models and their sources are given below.

Please visit the blog post for more details

conda create -n keyphrases python=3.8 --no-default-packages

conda activate keyphrases

pip install distinct-keywords

python -m spacy download en_core_web_sm

conda install --channel=conda-forge nb_conda_kernels jupyter

Optional multi-lingual support

```

import nltk

nltk.download('omw')

```

jupyter notebook

Clone the repository

Open the examples folder in jupyter notebook. The sub-folders contain the respective language files.

Select the language you wanted to try out

from distinct_keywords.keywords import DistinctKeywords

doc = """

Supervised learning is the machine learning task of learning a function that

maps an input to an output based on example input-output pairs. It infers a

function from labeled training data consisting of a set of training examples.

In supervised learning, each example is a pair consisting of an input object

(typically a vector) and a desired output value (also called the supervisory signal).

A supervised learning algorithm analyzes the training data and produces an inferred function,

which can be used for mapping new examples. An optimal scenario will allow for the

algorithm to correctly determine the class labels for unseen instances. This requires

the learning algorithm to generalize from the training data to unseen situations in a

'reasonable' way (see inductive bias).

"""

distinct_keywords=DistinctKeywords()

distinct_keywords.get_keywords(doc)

['machine learning',

'pairs',

'mapping',

'vector',

'typically',

'supervised',

'bias',

'supervisory',

'task',

'algorithm',

'unseen',

'training']

After creating word2vec, the words are mapped to a hilbert space and the results are stored in a key-value pair (every word has a hilbert hash). Now for a new document, the words and phrases are cleaned, hashed using the dictionary. One word from each different prefix is then selected using wordnet ranking from NLTK (rare words are prioritized). The implementation of grouping and look up is made fast using Trie and SortedDict

Currently this is tested against KPTimes test dataset (20000 articles). A recall score of 31% is achieved when compared to the manual keywords given in the dataset.

Steps to arrive at the score:

Used both algorithms. Keybert was ran with additional parameter top_n=16 as the length of dstinct_keywords at 75% level was around 15.

Results of algorithms and original keywords were cleaned (lower case, space removal, character removal, but no lemmatization)

Take intersection of original keywords and generated keyword word banks (individual words)

For each prediction compare the length of intersecting words with length of total keyword words

Output is given below

Spanish: Spanish Billion Word Corpus and Embeddings by Cristian Cardellino https://crscardellino.ar/SBWCE/

German: @thesis{mueller2015, author = {{Müller}, Andreas}, title = "{Analyse von Wort-Vektoren deutscher Textkorpora}", school = {Technische Universität Berlin}, year = 2015, month = jun, type = {Bachelor's Thesis}, url = {https://devmount.github.io/GermanWordEmbeddings} }

French: @misc{fauconnier_2015, author = {Fauconnier, Jean-Philippe}, title = {French Word Embeddings}, url = {http://fauconnier.github.io}, year = {2015}}

Italian: Nordic Language Processing Laboratory (NLPL) (http://vectors.nlpl.eu/repository/)

Portuguese: NILC - Interinstitutional Nucleus of Computational Linguistics http://nilc.icmc.usp.br/embeddings

FAQs

Unknown package

We found that distinct-keywords demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Product

Ensure open-source compliance with Socket’s License Enforcement Beta. Set up your License Policy and secure your software!

Product

We're launching a new set of license analysis and compliance features for analyzing, managing, and complying with licenses across a range of supported languages and ecosystems.

Product

We're excited to introduce Socket Optimize, a powerful CLI command to secure open source dependencies with tested, optimized package overrides.