Product

Announcing Precomputed Reachability Analysis in Socket

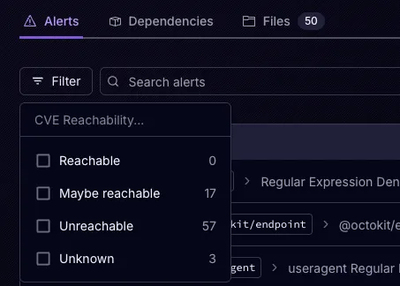

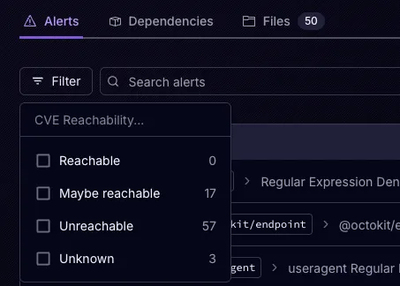

Socket’s precomputed reachability slashes false positives by flagging up to 80% of vulnerabilities as irrelevant, with no setup and instant results.

Gradients provides a self-consistency test function for gradient checking of your deep learning models. It uses the centered finite difference approximation to compare analytical and numerical gradients, and reports when the check fails on any parameter of your model. Currently the library supports only PyTorch models built with custom layers, custom loss functions, activation functions, and any neural network function subclassing torch.autograd.Function.
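To make the idea concrete, here is a minimal, framework-free sketch of a centered finite-difference gradient check. It is not the library's implementation: the helper names `numerical_gradient` and `check_gradient`, the relative-error metric, and the tolerance are all assumptions chosen for illustration, and the test function `f` is hypothetical.

```python
def numerical_gradient(f, params, eps=1e-6):
    """Centered finite-difference estimate of df/dparams[i] for each i."""
    grads = []
    for i in range(len(params)):
        plus = list(params)
        minus = list(params)
        plus[i] += eps
        minus[i] -= eps
        grads.append((f(plus) - f(minus)) / (2 * eps))
    return grads


def check_gradient(f, analytical_grad, params, eps=1e-6, tol=1e-6):
    """Return (passed, max_relative_error) for analytical vs numerical gradients."""
    numeric = numerical_gradient(f, params, eps)
    analytic = analytical_grad(params)
    max_err = 0.0
    for a, n in zip(analytic, numeric):
        denom = max(abs(a) + abs(n), 1e-12)  # guard against division by zero
        max_err = max(max_err, abs(a - n) / denom)
    return max_err < tol, max_err


# Hypothetical test function f(w) = w0^2 + 3*w0*w1 with a hand-derived gradient.
def f(w):
    return w[0] ** 2 + 3 * w[0] * w[1]


def grad_f(w):
    return [2 * w[0] + 3 * w[1], 3 * w[0]]


def wrong_grad_f(w):
    # Deliberately drops the 3*w1 term to show a failing check.
    return [2 * w[0], 3 * w[0]]


params = [0.5, -1.2]
ok, err = check_gradient(f, grad_f, params)
bad, bad_err = check_gradient(f, wrong_grad_f, params)
print("correct gradient passes:", ok)
print("wrong gradient passes:", bad)
```

The relative error is used here rather than the absolute difference so that the same tolerance is meaningful for both small and large gradient values; other checkers may choose a different metric.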

pip install gradients

Optimizing deep learning models is a two-step process:

1. Compute gradients with respect to parameters
2. Update the parameters given the gradients
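The two steps can be sketched without any framework on a one-parameter least-squares problem. This is only an illustration: the data, the learning rate `lr`, and the iteration count are assumptions, and the gradient is derived by hand rather than by automatic differentiation.

```python
# Fit y = w * x by minimizing the mean squared error with gradient descent.
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]  # generated with w = 2

w = 0.0    # initial parameter value
lr = 0.05  # learning rate (assumed)

for _ in range(200):
    # Step 1: compute the gradient of the loss with respect to w
    grad = sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)
    # Step 2: update the parameter given the gradient
    w -= lr * grad

print(w)
```

If the hand-derived gradient in step 1 were wrong, step 2 would still run without error but `w` would move in the wrong direction, which is the kind of silent failure gradient checking is meant to catch.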

In PyTorch, step 1 is done by the tape-based automatic differentiation system torch.autograd, and step 2 by torch.optim, the package implementing optimization algorithms. Using them, we can develop fully customized deep learning models with torch.nn.Module and test them using Gradient as follows:

```python
import torch
from gradients import Gradient


class MySigmoid(torch.autograd.Function):
    """Custom sigmoid activation with a hand-written backward pass."""

    @staticmethod
    def forward(ctx, input):
        output = 1 / (1 + torch.exp(-input))
        # Save the output: the sigmoid derivative is output * (1 - output)
        ctx.save_for_backward(output)
        return output

    @staticmethod
    def backward(ctx, grad_output):
        output, = ctx.saved_tensors
        return grad_output * output * (1 - output)


class MSELoss(torch.autograd.Function):
    """Custom squared-error loss, summed and divided by the batch size."""

    @staticmethod
    def forward(ctx, y_pred, y):
        ctx.save_for_backward(y_pred, y)
        return ((y_pred - y) ** 2).sum() / y_pred.shape[0]

    @staticmethod
    def backward(ctx, grad_output):
        y_pred, y = ctx.saved_tensors
        # Chain rule: scale the local gradient by the incoming grad_output
        grad_input = grad_output * 2 * (y_pred - y) / y_pred.shape[0]
        # No gradient is needed for the target y
        return grad_input, None


class MyModel(torch.nn.Module):
    def __init__(self, D_in, D_out):
        super(MyModel, self).__init__()
        self.w1 = torch.nn.Parameter(torch.randn(D_in, D_out), requires_grad=True)
        self.sigmoid = MySigmoid.apply

    def forward(self, x):
        y_pred = self.sigmoid(x.mm(self.w1))
        return y_pred


N, D_in, D_out = 10, 4, 3

# Create random Tensors to hold inputs and outputs
x = torch.randn(N, D_in)
y = torch.randn(N, D_out)

# Construct model by instantiating the class defined above
mymodel = MyModel(D_in, D_out)
criterion = MSELoss.apply

# Test the custom-built model
Gradient(mymodel, x, y, criterion, eps=1e-8)
```

Citing Gradients

```bibtex
@software{nambusubramaniyan_saranraj_2021_5176243,
  author    = {Nambusubramaniyan, Saranraj},
  title     = {gradients},
  month     = aug,
  year      = 2021,
  publisher = {Zenodo},
  version   = {1.0.0},
  doi       = {10.5281/zenodo.5176243},
  url       = {https://doi.org/10.5281/zenodo.5176243}
}
```

FAQs

Gradient Checker for Custom built PyTorch Models

We found that gradients demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.
