
Call all LLM APIs using the OpenAI format [Bedrock, Huggingface, VertexAI, TogetherAI, Azure, OpenAI, etc.]

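LiteLLM manages:

- Translating inputs to each provider's `completion`, `embedding`, and `image_generation` endpoints
- Consistent output: text responses are always available at `['choices'][0]['message']['content']`

Jump to OpenAI Proxy Docs

Jump to Supported LLM Providers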

🚨 Stable Release: use Docker images with the `-stable` tag. These undergo 12-hour load tests before being published.

Support for more providers: if a provider or LLM platform is missing, raise a feature request.

> [!IMPORTANT]
> LiteLLM v1.0.0 now requires `openai>=1.0.0`. Migration guide here
>
> LiteLLM v1.40.14+ now requires `pydantic>=2.0.0`. No changes required.

```shell
pip install litellm
```

```python
from litellm import completion
import os

## set ENV variables
os.environ["OPENAI_API_KEY"] = "your-openai-key"
os.environ["COHERE_API_KEY"] = "your-cohere-key"

messages = [{"content": "Hello, how are you?", "role": "user"}]

# openai call
response = completion(model="gpt-3.5-turbo", messages=messages)

# cohere call
response = completion(model="command-nightly", messages=messages)
print(response)
```
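LiteLLM keeps output consistent across providers: text responses are available at `['choices'][0]['message']['content']`. A minimal stdlib sketch of reading that path, assuming a plain dict shaped like an OpenAI-format chat completion; the field values are invented for illustration (not real model output), and `first_message_text` is a hypothetical helper:

```python
from typing import Any, Dict


def first_message_text(response: Dict[str, Any]) -> str:
    """Return the text of the first choice in an OpenAI-format chat response."""
    return response["choices"][0]["message"]["content"]


# Hypothetical response dict, trimmed to a few OpenAI-format fields.
sample_response = {
    "id": "chatcmpl-example",
    "object": "chat.completion",
    "model": "gpt-3.5-turbo",
    "choices": [
        {
            "index": 0,
            "message": {"role": "assistant", "content": "I'm doing well, thanks!"},
            "finish_reason": "stop",
        }
    ],
}

print(first_message_text(sample_response))  # prints: I'm doing well, thanks!
```

Because the path is the same for every provider, code that reads it does not need to change when the `model` argument switches from an OpenAI model to a Cohere one.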

Call any model supported by a provider with `model=<provider_name>/<model_name>`. There may be provider-specific details, so refer to the provider docs for more information.
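In the `<provider_name>/<model_name>` convention the provider comes before the first slash, and the model name may itself contain slashes (for example `huggingface/bigcode/starcoder`, used in the proxy example further down). A sketch of splitting such a string, assuming the first slash is the separator; `split_model_string` is a hypothetical helper, not LiteLLM's own routing code:

```python
from typing import Tuple


def split_model_string(model: str) -> Tuple[str, str]:
    """Split '<provider_name>/<model_name>' at the first slash.

    Hypothetical helper for illustration only; it does not reproduce
    LiteLLM's internal routing. Only the first slash separates the
    provider, so model names containing slashes stay intact.
    """
    provider, sep, model_name = model.partition("/")
    if not sep or not provider or not model_name:
        raise ValueError(f"expected '<provider_name>/<model_name>', got {model!r}")
    return provider, model_name


print(split_model_string("huggingface/bigcode/starcoder"))
# prints: ('huggingface', 'bigcode/starcoder')
```

Bare names such as `gpt-3.5-turbo` carry no prefix; this sketch rejects them, while LiteLLM accepts them, as the quickstart shows.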

```python
from litellm import acompletion
import asyncio

async def test_get_response():
    user_message = "Hello, how are you?"
    messages = [{"content": user_message, "role": "user"}]
    response = await acompletion(model="gpt-3.5-turbo", messages=messages)
    return response

response = asyncio.run(test_get_response())
print(response)
```
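Because `acompletion` is awaitable, several requests can run concurrently with `asyncio.gather`. A sketch of that pattern, assuming a hypothetical stand-in coroutine `fake_acompletion` so it runs without API keys or network access; it returns a dict in the OpenAI response shape rather than LiteLLM's own response object:

```python
import asyncio
from typing import Any, Dict, List


async def fake_acompletion(model: str, messages: List[Dict[str, str]]) -> Dict[str, Any]:
    """Hypothetical stand-in for litellm.acompletion; echoes the last message."""
    await asyncio.sleep(0.01)  # simulate network latency
    reply = f"{model} received: {messages[-1]['content']}"
    return {"choices": [{"message": {"role": "assistant", "content": reply}}]}


async def ask_many(prompts: List[str]) -> List[str]:
    # Create one coroutine per prompt and await them together;
    # gather returns results in the same order as its arguments.
    calls = [
        fake_acompletion(model="gpt-3.5-turbo", messages=[{"role": "user", "content": p}])
        for p in prompts
    ]
    responses = await asyncio.gather(*calls)
    return [r["choices"][0]["message"]["content"] for r in responses]


results = asyncio.run(ask_many(["Hello", "How are you?"]))
print(results)
# prints: ['gpt-3.5-turbo received: Hello', 'gpt-3.5-turbo received: How are you?']
```

To use LiteLLM, swap `fake_acompletion` for `acompletion`; the keyword arguments match the example above.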

LiteLLM supports streaming the model response back: pass `stream=True` to get a streaming iterator in the response.

Streaming is supported for all models (Bedrock, Huggingface, TogetherAI, Azure, OpenAI, etc.).

```python
from litellm import completion

messages = [{"content": "Hello, how are you?", "role": "user"}]

response = completion(model="gpt-3.5-turbo", messages=messages, stream=True)
for part in response:
    print(part.choices[0].delta.content or "")

# claude 2
response = completion('claude-2', messages, stream=True)
for part in response:
    print(part.choices[0].delta.content or "")
```
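When streaming, each chunk carries only a fragment of the reply in `choices[0].delta.content`, and that field can be `None` (for example on the final chunk), which is why the loops above fall back to `""`. A sketch of joining the fragments into the full text, assuming hypothetical chunk objects built with `SimpleNamespace` that mimic only the attributes the loops above use:

```python
from types import SimpleNamespace
from typing import Iterable, Optional


def make_chunk(text: Optional[str]) -> SimpleNamespace:
    """Build a hypothetical chunk exposing part.choices[0].delta.content."""
    delta = SimpleNamespace(content=text)
    return SimpleNamespace(choices=[SimpleNamespace(delta=delta)])


def collect_stream(parts: Iterable[SimpleNamespace]) -> str:
    """Concatenate streamed fragments, treating a None delta as empty."""
    pieces = []
    for part in parts:
        pieces.append(part.choices[0].delta.content or "")
    return "".join(pieces)


fake_stream = [make_chunk("Hel"), make_chunk("lo, "), make_chunk("world!"), make_chunk(None)]
print(collect_stream(fake_stream))  # prints: Hello, world!
```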

LiteLLM exposes predefined callbacks to send data to Lunary, Langfuse, DynamoDB, S3 buckets, Helicone, Promptlayer, Traceloop, Athina, and Slack.

```python
import os

import litellm
from litellm import completion

## set env variables for logging tools
os.environ["LUNARY_PUBLIC_KEY"] = "your-lunary-public-key"
os.environ["LANGFUSE_PUBLIC_KEY"] = ""
os.environ["LANGFUSE_SECRET_KEY"] = ""
os.environ["ATHINA_API_KEY"] = "your-athina-api-key"
os.environ["OPENAI_API_KEY"] = "your-openai-key"

# set callbacks
litellm.success_callback = ["lunary", "langfuse", "athina"]  # log input/output to lunary, langfuse, athina

# openai call
response = completion(model="gpt-3.5-turbo", messages=[{"role": "user", "content": "Hi 👋 - i'm openai"}])
```
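Setting `litellm.success_callback` registers loggers that receive each call's input and output after it succeeds. The stdlib sketch below illustrates that register-then-dispatch pattern in general terms only; `run_completion`, the two-argument callback signature, and the in-memory logger are all hypothetical and do not reproduce LiteLLM's internals or its callback signature:

```python
from typing import Any, Callable, Dict, List

# Hypothetical callback signature: (request, response) -> None.
Callback = Callable[[Dict[str, Any], Dict[str, Any]], None]

success_callbacks: List[Callback] = []
logged: List[Dict[str, Any]] = []


def memory_logger(request: Dict[str, Any], response: Dict[str, Any]) -> None:
    """Stand-in for a logging integration: keep input and output in memory."""
    logged.append({
        "input": request["messages"],
        "output": response["choices"][0]["message"]["content"],
    })


def run_completion(model: str, messages: List[Dict[str, str]]) -> Dict[str, Any]:
    """Hypothetical completion call that notifies every registered callback on success."""
    request = {"model": model, "messages": messages}
    # Canned reply in place of a real model call.
    response = {"choices": [{"message": {"role": "assistant", "content": "Hello!"}}]}
    for callback in success_callbacks:
        callback(request, response)
    return response


success_callbacks.append(memory_logger)
run_completion("gpt-3.5-turbo", [{"role": "user", "content": "Hi 👋 - i'm openai"}])
print(logged)
```

Keeping the callbacks in a list means logging destinations can be added or removed without touching the call site, which is the convenience the `success_callback` setting offers.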

Track spend + Load Balance across multiple projects

The proxy provides:

```shell
pip install 'litellm[proxy]'
litellm --model huggingface/bigcode/starcoder
#INFO: Proxy running on http://0.0.0.0:4000
```

```python
import openai  # openai v1.0.0+

client = openai.OpenAI(api_key="anything", base_url="http://0.0.0.0:4000")  # set proxy to base_url

# request sent to model set on litellm proxy, `litellm --model`
response = client.chat.completions.create(
    model="gpt-3.5-turbo",
    messages=[
        {
            "role": "user",
            "content": "this is a test request, write a short poem",
        }
    ],
)
print(response)
```
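Because the proxy speaks the OpenAI wire format, any HTTP client can call it, not only the `openai` package. A stdlib sketch that builds (but does not send) the equivalent request, assuming the proxy address from the example above and the `/chat/completions` path that the `openai` client appends to `base_url`; `build_chat_request` is a hypothetical helper and `"anything"` is the placeholder key from the example:

```python
import json
import urllib.request

PROXY_BASE_URL = "http://0.0.0.0:4000"  # proxy address from the example above


def build_chat_request(model: str, prompt: str, api_key: str = "anything") -> urllib.request.Request:
    """Build an OpenAI-format chat completion request aimed at the proxy."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url=f"{PROXY_BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


req = build_chat_request("gpt-3.5-turbo", "this is a test request, write a short poem")
print(req.get_method(), req.full_url)  # prints: POST http://0.0.0.0:4000/chat/completions

# Sending it requires a running proxy:
# with urllib.request.urlopen(req) as resp:
#     print(json.loads(resp.read())["choices"][0]["message"]["content"])
```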

Connect the proxy with a Postgres DB to create proxy keys

```shell
# Get the code
git clone https://github.com/BerriAI/litellm

# Go to folder
cd litellm

# Add the master key
echo 'LITELLM_MASTER_KEY="sk-1234"' > .env
source .env

# Start
docker-compose up
```

The UI is available at /ui on your proxy server.

Set budgets and rate limits across multiple projects

POST /key/generate

```shell
curl 'http://0.0.0.0:4000/key/generate' \
--header 'Authorization: Bearer sk-1234' \
--header 'Content-Type: application/json' \
--data-raw '{"models": ["gpt-3.5-turbo", "gpt-4", "claude-2"], "duration": "20m", "metadata": {"user": "ishaan@berri.ai", "team": "core-infra"}}'
```

Example response: `key` is the Bearer token for the new key, and `expires` is a datetime.

```json
{
  "key": "sk-kdEXbIqZRwEeEiHwdg7sFA",
  "expires": "2023-11-19T01:38:25.838000+00:00"
}
```
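The same `/key/generate` call can be made from Python with only the standard library. A sketch that builds the request from the curl example (without sending it) and parses the example response; `build_key_request` and `parse_key_response` are hypothetical helpers, and `sk-1234` is the placeholder master key used in the examples:

```python
import json
import urllib.request
from datetime import datetime, timezone
from typing import Dict, List, Tuple

PROXY_BASE_URL = "http://0.0.0.0:4000"  # proxy address from the curl example
MASTER_KEY = "sk-1234"  # placeholder master key from the examples


def build_key_request(models: List[str], duration: str, metadata: Dict[str, str]) -> urllib.request.Request:
    """Build the POST /key/generate request shown in the curl example."""
    payload = {"models": models, "duration": duration, "metadata": metadata}
    return urllib.request.Request(
        url=f"{PROXY_BASE_URL}/key/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Authorization": f"Bearer {MASTER_KEY}", "Content-Type": "application/json"},
        method="POST",
    )


def parse_key_response(raw: str) -> Tuple[str, datetime]:
    """Return the generated key and its expiry as a timezone-aware datetime."""
    data = json.loads(raw)
    return data["key"], datetime.fromisoformat(data["expires"])


req = build_key_request(
    ["gpt-3.5-turbo", "gpt-4", "claude-2"],
    "20m",
    {"user": "ishaan@berri.ai", "team": "core-infra"},
)

# Response body copied from the example response.
sample = '{"key": "sk-kdEXbIqZRwEeEiHwdg7sFA", "expires": "2023-11-19T01:38:25.838000+00:00"}'
key, expires = parse_key_response(sample)
print(key, expires.isoformat())
print("expired:", expires <= datetime.now(timezone.utc))
```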

| Provider | Completion | Streaming | Async Completion | Async Streaming | Async Embedding | Async Image Generation |
|---|---|---|---|---|---|---|
| openai | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |
| azure | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |
| aws - sagemaker | ✅ | ✅ | ✅ | ✅ | ✅ | |
| aws - bedrock | ✅ | ✅ | ✅ | ✅ | ✅ | |
| google - vertex_ai | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |
| google - palm | ✅ | ✅ | ✅ | ✅ | | |
| google AI Studio - gemini | ✅ | ✅ | ✅ | ✅ | | |
| mistral ai api | ✅ | ✅ | ✅ | ✅ | ✅ | |
| cloudflare AI Workers | ✅ | ✅ | ✅ | ✅ | | |
| cohere | ✅ | ✅ | ✅ | ✅ | ✅ | |
| anthropic | ✅ | ✅ | ✅ | ✅ | | |
| huggingface | ✅ | ✅ | ✅ | ✅ | ✅ | |
| replicate | ✅ | ✅ | ✅ | ✅ | | |
| together_ai | ✅ | ✅ | ✅ | ✅ | | |
| openrouter | ✅ | ✅ | ✅ | ✅ | | |
| ai21 | ✅ | ✅ | ✅ | ✅ | | |
| baseten | ✅ | ✅ | ✅ | ✅ | | |
| vllm | ✅ | ✅ | ✅ | ✅ | | |
| nlp_cloud | ✅ | ✅ | ✅ | ✅ | | |
| aleph alpha | ✅ | ✅ | ✅ | ✅ | | |
| petals | ✅ | ✅ | ✅ | ✅ | | |
| ollama | ✅ | ✅ | ✅ | ✅ | ✅ | |
| deepinfra | ✅ | ✅ | ✅ | ✅ | | |
| perplexity-ai | ✅ | ✅ | ✅ | ✅ | | |
| Groq AI | ✅ | ✅ | ✅ | ✅ | | |
| Deepseek | ✅ | ✅ | ✅ | ✅ | | |
| anyscale | ✅ | ✅ | ✅ | ✅ | | |
| IBM - watsonx.ai | ✅ | ✅ | ✅ | ✅ | ✅ | |
| voyage ai | | | | | ✅ | |
| xinference [Xorbits Inference] | | | | | ✅ | |
| FriendliAI | ✅ | ✅ | ✅ | ✅ | | |

To contribute: Clone the repo locally -> Make a change -> Submit a PR with the change.

Here's how to modify the repo locally:

Step 1: Clone the repo

```shell
git clone https://github.com/BerriAI/litellm.git
```

Step 2: Navigate into the project and install dependencies:

```shell
cd litellm
poetry install -E extra_proxy -E proxy
```

Step 3: Test your change:

```shell
cd litellm/tests # pwd: Documents/litellm/litellm/tests
poetry run flake8
poetry run pytest .
```

Step 4: Submit a PR with your changes! 🚀

For companies that need better security, user management and professional support

This covers:

FAQs

Library to easily interface with LLM API providers

We found that litellm demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Research

Security News

Socket researchers unpack a typosquatting package with malicious code that logs keystrokes and exfiltrates sensitive data to a remote server.

Security News

The JavaScript community has launched the e18e initiative to improve ecosystem performance by cleaning up dependency trees, speeding up critical parts of the ecosystem, and documenting lighter alternatives to established tools.

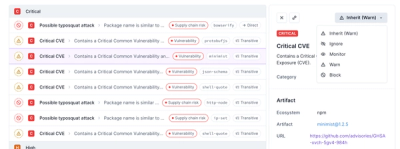

Product

Socket now supports four distinct alert actions instead of the previous two, and alert triaging allows users to override the actions taken for all individual alerts.