Security News

Feross on TBPN: Socket's Series C and the State of Software Supply Chain Security

Feross Aboukhadijeh joins TBPN to discuss Socket's $60M Series C, 500%+ ARR growth, AI's impact on open source, and the rise in supply chain attacks.

Microsoft has released an open source toolkit for enforcing runtime security policies on AI agents as adoption accelerates faster than governance controls.

April 7, 2026


Microsoft has published its Agent Governance Toolkit, an open source project that brings runtime policy enforcement to autonomous AI agents. The release lands as the industry grapples with a widening gap between how fast AI agents are being deployed and how little infrastructure exists to govern what they do once they're running.

The toolkit is available under the MIT license at the Microsoft GitHub organization and supports Python, TypeScript, Rust, Go, and .NET.

AI agents are no longer limited to answering questions in a chat window. They execute code, call external APIs, write to databases, and spawn other agents. That autonomy is the point, but it also means the consequences of a compromised or misbehaving agent extend far beyond a bad response.

Speaking at RSAC 2026, Socket CEO Feross Aboukhadijeh noted that the problem is being amplified by the speed of AI-generated code adoption: "We are seeing all sorts of attacks. It's not like humans did a good job of vetting code, but now agents are doing it, and they are accelerating."

In December 2025, OWASP published the Top 10 for Agentic Applications, the first formal taxonomy of risks specific to autonomous AI systems. The list covers goal hijacking, tool misuse, identity abuse, memory poisoning, cascading failures, and rogue agents. Regulatory frameworks are also starting to catch up, but most teams deploying agents right now are ahead of any enforceable requirements.

The Model Context Protocol (MCP), which connects AI agents to external tools and data sources, has also become a significant attack surface. Bitsight's TRACE research team found roughly 1,000 exposed MCP servers with no authorization in place. BlueRock Security analyzed more than 7,000 MCP servers and found 36.7% vulnerable to server-side request forgery, a flaw demonstrated to retrieve AWS access keys and session tokens from a cloud instance's metadata endpoint.
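To make the SSRF risk concrete, here is a minimal sketch of the kind of outbound URL check a tool server could run before fetching anything on an agent's behalf. It is not taken from any MCP implementation or from the research cited above. The function name `is_safe_outbound_url` and its `resolve` parameter are hypothetical, chosen for illustration.

```python
import ipaddress
import socket
from urllib.parse import urlparse


def is_safe_outbound_url(url, resolve=socket.getaddrinfo):
    """Hypothetical guard: allow a URL only if every resolved address is public."""
    try:
        parsed = urlparse(url)
        if parsed.scheme not in ("http", "https") or not parsed.hostname:
            return False
        port = parsed.port or (443 if parsed.scheme == "https" else 80)
        infos = resolve(parsed.hostname, port)
        # Each getaddrinfo entry ends with a sockaddr tuple; item 0 is the IP.
        addresses = [ipaddress.ip_address(info[4][0]) for info in infos]
    except (ValueError, OSError):
        # Malformed URL, bad port, unresolvable host, or unparsable address.
        return False
    # is_global is False for loopback, private, and link-local ranges,
    # which covers the 169.254.169.254 cloud metadata address.
    return bool(addresses) and all(addr.is_global for addr in addresses)


print(is_safe_outbound_url("http://169.254.169.254/latest/meta-data/"))
```

A check like this is only a first layer. The host is resolved once here and again when the request is actually made, so a production guard would also need to pin the resolved address for the fetch and re-check every redirect.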

The whole landscape of agent security is still being mapped as deployments scale faster than the controls around them.

Microsoft's Agent Governance Toolkit is structured as seven independently installable packages, designed to be layered incrementally onto existing agent frameworks rather than replacing them.

The toolkit is framework-agnostic. It hooks into existing frameworks through their native extension points, including LangChain's callback handlers, CrewAI's task decorators, Google ADK's plugin system, and Microsoft Agent Framework's middleware pipeline. Integrations are already available for the OpenAI Agents SDK, Haystack, LangGraph, and PydanticAI. A TypeScript SDK is published on npm (@microsoft/agentmesh-sdk) and a .NET SDK is available on NuGet.
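The general idea of intercepting a tool call at a framework extension point can be sketched without any particular framework. The example below is not the toolkit's API. `governed`, `PolicyViolation`, and `writes_stay_in_workspace` are hypothetical names, and the policy is a deliberately simple path rule standing in for a real policy engine.

```python
import posixpath
from functools import wraps


class PolicyViolation(Exception):
    """Raised when a tool call is denied before it runs (hypothetical)."""


def governed(policy):
    """Wrap a tool so policy(tool_name, kwargs) must approve every call."""
    def decorator(tool):
        @wraps(tool)
        def wrapper(**kwargs):
            if not policy(tool.__name__, kwargs):
                raise PolicyViolation(f"{tool.__name__} denied by policy")
            return tool(**kwargs)
        return wrapper
    return decorator


def writes_stay_in_workspace(tool_name, kwargs):
    """Illustrative policy: file writes must resolve inside /workspace/."""
    if tool_name != "write_file":
        return True
    # Normalize first so "/workspace/../etc/passwd" is judged by where it lands.
    resolved = posixpath.normpath(str(kwargs.get("path", "")))
    return resolved.startswith("/workspace/")


@governed(writes_stay_in_workspace)
def write_file(path, content):
    # Stand-in for a real tool; it only reports what it would have written.
    return f"wrote {len(content)} bytes to {path}"


print(write_file(path="/workspace/notes.txt", content="hi"))
```

In a real integration the wrapper would live inside a callback handler, decorator, plugin, or middleware supplied by the host framework, so the agent code itself would not need to change.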

Installation for the full package:

pip install agent-governance-toolkit[full]

One area worth noting for the open source security community: the toolkit's Agent Marketplace component includes plugin signing with Ed25519 and manifest verification, addressing the OWASP agentic supply chain risk category directly. The build infrastructure follows SLSA-compatible provenance with GitHub's attest-build-provenance action, and all CI dependencies are pinned with cryptographic hashes.

The project also runs OpenSSF Scorecard tracking, CodeQL, and Dependabot, and uses ClusterFuzzLite for continuous fuzzing across more than 9,500 tests.
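Hash pinning of the kind mentioned above is simple to illustrate: record a digest when a dependency is vetted, then refuse anything whose bytes no longer match. The sketch below uses only the Python standard library and is not the project's build tooling. `pin_artifact` and `verify_pinned_artifact` are hypothetical helpers. Ed25519 signature checks are left out because they need a third-party library.

```python
import hashlib
import hmac


def pin_artifact(data: bytes) -> str:
    """Record the SHA-256 digest of an artifact at the time it is vetted."""
    return hashlib.sha256(data).hexdigest()


def verify_pinned_artifact(data: bytes, pinned_sha256: str) -> bool:
    """Return True only if the artifact still matches its pinned digest."""
    actual = hashlib.sha256(data).hexdigest()
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(actual, pinned_sha256.lower())


# Hypothetical lock file entry created when the plugin was reviewed.
vetted_bytes = b"example plugin release"
lockfile = {"example-plugin-1.0.0.tar.gz": pin_artifact(vetted_bytes)}

tampered_bytes = vetted_bytes + b" with an extra payload"
print(verify_pinned_artifact(vetted_bytes, lockfile["example-plugin-1.0.0.tar.gz"]))
print(verify_pinned_artifact(tampered_bytes, lockfile["example-plugin-1.0.0.tar.gz"]))
```

A pinned hash proves the bytes have not changed since review. It says nothing about who produced them, which is the gap that signing and build provenance are meant to close.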

The hardest component to evaluate is the semantic intent classifier inside the policy engine, the piece responsible for distinguishing a legitimate tool call from a hijacked one. Runtime interception only works if the policy engine understands what an agent is actually trying to do, not just the surface form of the call. Microsoft hasn't published independent validation of how the classifier performs against real attack scenarios, and no third-party audits exist yet.

For many security teams, the credential layer is where the most immediate risk lives. OWASP doesn't treat credentials as a discrete problem. Instead, credential exposure is distributed across identity abuse, supply chain, and rogue agent risks.

ASI03, the OWASP entry covering identity abuse, specifically calls for "short-lived, narrowly scoped tokens per task" as a mitigation, which is exactly the pattern the toolkit's Agent Mesh component begins to support through per-agent cryptographic identity. But there is a difference between issuing short-lived credentials and enforcing what each agent can do with them over time. It is not clear from the current documentation how tightly permissions are scoped to individual tasks or how consistently they are revoked after execution.

Runtime policy enforcement can restrict what an agent does at execution time, but it does not inherently control what an agent is allowed to access across tasks. Agents often hold credentials for the external services they call. Sandboxing limits what an agent can do inside the process, but a compromised agent that holds credentials belonging to a downstream provider exposes that provider regardless of what the sandbox allows.

A CSA survey of 228 IT and security professionals published in March 2026 found that 68% of organizations can't clearly distinguish between human and AI agent activity, and only 18% are confident their IAM systems can manage agent identities effectively. The Tenable Cloud and AI Security Risk Report 2026 found that 52% of non-human identities hold critical excessive permissions. The pattern that would help here is least privilege per task at the credential level: issue temporary, scoped credentials and revoke them once the task completes. Most orchestration frameworks don't support it natively, and governance layers like this one don't yet make it a default.
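The issue-then-revoke pattern is easy to sketch in isolation. The example below is an in-memory illustration only, not how the toolkit or any IAM product implements it. `CredentialBroker`, `TaskCredential`, and the scope strings are hypothetical.

```python
import secrets
import time
from contextlib import contextmanager
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskCredential:
    token: str
    scopes: frozenset
    expires_at: float


class CredentialBroker:
    """Hypothetical broker that issues one short-lived credential per task."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._active = {}

    def issue(self, scopes, ttl_seconds):
        cred = TaskCredential(
            token=secrets.token_urlsafe(32),
            scopes=frozenset(scopes),
            expires_at=self._clock() + ttl_seconds,
        )
        self._active[cred.token] = cred
        return cred

    def revoke(self, token):
        self._active.pop(token, None)

    def is_allowed(self, token, scope):
        cred = self._active.get(token)
        if cred is None or self._clock() >= cred.expires_at:
            # Unknown, revoked, or expired tokens are dropped and denied.
            self._active.pop(token, None)
            return False
        return scope in cred.scopes

    @contextmanager
    def for_task(self, scopes, ttl_seconds=60.0):
        cred = self.issue(scopes, ttl_seconds)
        try:
            yield cred
        finally:
            # Revoke on exit even if the task raised.
            self.revoke(cred.token)


broker = CredentialBroker()
with broker.for_task({"tickets:read"}) as cred:
    print(broker.is_allowed(cred.token, "tickets:read"))
    print(broker.is_allowed(cred.token, "tickets:write"))
print(broker.is_allowed(cred.token, "tickets:read"))
```

The context manager ties the credential's lifetime to the task, so revocation does not depend on the agent remembering to clean up. The expiry time acts as a backstop if the process dies before the `finally` block runs.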

Microsoft has significant commercial stakes in how agentic AI infrastructure develops. Foundry, AutoGen, and Copilot Studio are all part of the broader Microsoft agent ecosystem this toolkit is designed to govern. The announcement says the project will move to a foundation, but Microsoft will be steering that process. The company says it is actively engaging with the OWASP Agent Security Initiative, the LF AI & Data Foundation, and CoSAI working groups toward that end.

Subscribe to our newsletter

Get notified when we publish new security blog posts!

Security News

Feross Aboukhadijeh joins TBPN to discuss Socket's $60M Series C, 500%+ ARR growth, AI's impact on open source, and the rise in supply chain attacks.

Security News

OSV withdrew 157 OSV malware reports after automated false positives incorrectly flagged trusted npm and PyPI packages, sending bad records into tools that rely on OSV data.

Research

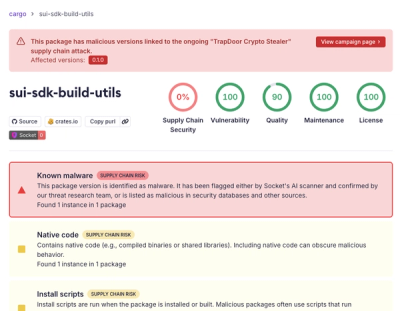

/Security News

TrapDoor crypto stealer hits 36 malicious packages across npm, PyPI, and Crates.io, targeting crypto, DeFi, AI, and security developers.