@aws-cdk/aws-logs

@aws-cdk/aws-logs is an AWS Cloud Development Kit (CDK) library that allows you to define and manage AWS CloudWatch Logs resources using code. It provides a high-level, object-oriented abstraction to create and manage log groups, log streams, and metric filters, among other CloudWatch Logs features.

Create a Log Group

This code sample demonstrates how to create a new CloudWatch Log Group with a specified retention period using the @aws-cdk/aws-logs package.

const logs = require('@aws-cdk/aws-logs');
const cdk = require('@aws-cdk/core');

const app = new cdk.App();
const stack = new cdk.Stack(app, 'MyStack');

new logs.LogGroup(stack, 'MyLogGroup', {
  logGroupName: '/aws/my-log-group',
  retention: logs.RetentionDays.ONE_WEEK
});

app.synth();

Create a Log Stream

This code sample demonstrates how to create a new CloudWatch Log Stream within an existing Log Group using the @aws-cdk/aws-logs package.

const logs = require('@aws-cdk/aws-logs');
const cdk = require('@aws-cdk/core');

const app = new cdk.App();
const stack = new cdk.Stack(app, 'MyStack');

const logGroup = new logs.LogGroup(stack, 'MyLogGroup', {
  logGroupName: '/aws/my-log-group',
  retention: logs.RetentionDays.ONE_WEEK
});

new logs.LogStream(stack, 'MyLogStream', {
  logGroup: logGroup,
  logStreamName: 'my-log-stream'
});

app.synth();

Create a Metric Filter

This code sample demonstrates how to create a Metric Filter for a CloudWatch Log Group that filters log events containing the term 'ERROR' and increments a custom metric in CloudWatch.

const logs = require('@aws-cdk/aws-logs');
const cdk = require('@aws-cdk/core');

const app = new cdk.App();
const stack = new cdk.Stack(app, 'MyStack');

const logGroup = new logs.LogGroup(stack, 'MyLogGroup', {
  logGroupName: '/aws/my-log-group',
  retention: logs.RetentionDays.ONE_WEEK
});

new logs.MetricFilter(stack, 'MyMetricFilter', {
  logGroup: logGroup,
  metricNamespace: 'MyNamespace',
  metricName: 'MyMetric',
  filterPattern: logs.FilterPattern.allTerms('ERROR'),
  metricValue: '1'
});

app.synth();

winston-cloudwatch is a transport for the winston logging library that allows you to send log messages to AWS CloudWatch Logs. Unlike @aws-cdk/aws-logs, which is used for defining and managing CloudWatch Logs resources, winston-cloudwatch is used for sending log data to CloudWatch Logs from your application.

bunyan-cloudwatch is a stream for the Bunyan logging library that sends log records to AWS CloudWatch Logs. Similar to winston-cloudwatch, it focuses on sending log data to CloudWatch Logs rather than managing the log resources themselves.

log4js-cloudwatch-appender is an appender for the log4js logging library that sends log events to AWS CloudWatch Logs. It is used for integrating log4js with CloudWatch Logs to send log data, in contrast to @aws-cdk/aws-logs, which is used for infrastructure management.

This is a developer preview (public beta) module. Releases might lack important features and might have future breaking changes.

This API is still under active development and subject to non-backward compatible changes or removal in any future version. Use of the API is not recommended in production environments. Experimental APIs are not subject to the Semantic Versioning model.

This library supplies constructs for working with CloudWatch Logs.

The basic unit of CloudWatch is a Log Group. Every log group typically has the same kind of data logged to it, in the same format. If there are multiple applications or services logging into the Log Group, each of them creates a new Log Stream.

Every log operation creates a "log event", which can consist of a simple string or a single-line JSON object. JSON objects have the advantage that they afford more filtering abilities (see below).

The main configurable attribute of a log group is its retention period, which determines how long the events in the log group are kept before they expire and are deleted.

The default retention period if not supplied is 2 years, but it can be set to any number of days, or Infinity to keep the data in the log group forever.
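The retention rule can be pictured with a little arithmetic. The following is a minimal sketch, not how CloudWatch itself is implemented: the function name `isExpired` and its parameters are hypothetical, and it simply compares an event's age against a retention period given in days.

```javascript
const DAY_MS = 24 * 60 * 60 * 1000;

// Illustrative only: decide whether a log event has outlived its retention period.
// retentionDays may be Infinity, meaning events are never deleted.
function isExpired(eventTimestampMs, retentionDays, nowMs = Date.now()) {
  if (retentionDays === Infinity) return false;
  return nowMs - eventTimestampMs > retentionDays * DAY_MS;
}

const now = Date.UTC(2019, 5, 19);       // fixed reference time for the example
const tenDaysAgo = now - 10 * DAY_MS;

const expiredAfterWeek = isExpired(tenDaysAgo, 7, now);    // true
const keptForever = isExpired(tenDaysAgo, Infinity, now);  // false
```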

Log events matching a particular filter can be sent to either a Lambda function or a Kinesis stream.

If the Kinesis stream lives in a different account, a CrossAccountDestination object needs to be added in the destination account which will act as a proxy for the remote Kinesis stream. This object is automatically created for you if you use the CDK Kinesis library.

Create a SubscriptionFilter, initialize it with an appropriate Pattern (see below) and supply the intended destination:

const fn = new lambda.Function(this, 'Lambda', { ... });
const logGroup = new LogGroup(this, 'LogGroup', { ... });

new SubscriptionFilter(this, 'Subscription', {
  logGroup,
  destination: fn,
  filterPattern: FilterPattern.allTerms("ERROR", "MainThread")
});
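On the receiving side, a subscribed Lambda function gets the matching events as base64-encoded, gzip-compressed JSON under `event.awslogs.data`. The sketch below shows one way to unpack that payload with Node's built-in `zlib`; the names `decodeSubscriptionEvent` and `handler` are hypothetical and not part of this library.

```javascript
const zlib = require('zlib');

// Decode the payload CloudWatch Logs delivers to a subscribed Lambda function.
// event.awslogs.data is base64-encoded, gzip-compressed JSON whose logEvents
// array holds the events that matched the subscription filter.
function decodeSubscriptionEvent(event) {
  const compressed = Buffer.from(event.awslogs.data, 'base64');
  const payload = JSON.parse(zlib.gunzipSync(compressed).toString('utf8'));
  return payload.logEvents.map((e) => e.message);
}

// Hypothetical handler: collects the matched messages and returns them.
// In a real deployment this function would be exported as the Lambda entry point.
async function handler(event) {
  const messages = decodeSubscriptionEvent(event);
  return { count: messages.length, messages };
}
```

Keeping the decoding in its own function makes it easy to exercise locally by gzipping and base64-encoding a sample payload.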

CloudWatch Logs can extract and emit metrics based on a textual log stream. Depending on your needs, this may be a more convenient way of generating metrics for your application than making calls to CloudWatch Metrics yourself.

A MetricFilter either emits a fixed number every time it sees a log event matching a particular pattern (see below), or extracts a number from the log event and uses that as the metric value.

Example:

Remember that if you want to use a value from the log event as the metric value, you must mention it in your pattern somewhere.
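The two modes described above (a fixed value versus a value taken from the event) can be modelled in a few lines. This is an illustrative model only, not the CloudWatch implementation: `emitMetric`, `hasError` and `hasLatency` are hypothetical names, and only top-level JSON fields are handled.

```javascript
// Illustrative model of a metric filter.
// metricValue is either a fixed number given as a string (e.g. '1') or a
// '$.field' reference into a JSON log event.
function emitMetric(logLine, matches, metricValue) {
  if (!matches(logLine)) return undefined;        // event did not match the pattern
  if (!metricValue.startsWith('$.')) return Number(metricValue);
  const record = JSON.parse(logLine);
  return Number(record[metricValue.slice(2)]);    // top-level fields only in this sketch
}

// Stand-ins for filter patterns: a text term and a "field exists" check.
const hasError = (line) => line.includes('ERROR');
const hasLatency = (line) => {
  try { return 'latency' in JSON.parse(line); } catch { return false; }
};

const fixed = emitMetric('ERROR something broke', hasError, '1');           // 1
const extracted = emitMetric('{"latency": 250}', hasLatency, '$.latency');  // 250
const skipped = emitMetric('INFO all good', hasError, '1');                 // undefined
```

Note how the extracted case uses a pattern that mentions the `latency` field, in line with the reminder above.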

A very simple MetricFilter can be created by using the logGroup.extractMetric() helper function:

logGroup.extractMetric('$.jsonField', 'Namespace', 'MetricName');

This will extract the value of jsonField wherever it occurs in JSON-structured log records in the LogGroup, and emit it to CloudWatch Metrics under the name Namespace/MetricName.

Patterns describe which log events match a subscription or metric filter. There are three types of patterns: text patterns, JSON patterns, and space-delimited table patterns.

All patterns are constructed by using static functions on the FilterPattern class.

In addition to the patterns above, the following special patterns exist:

- FilterPattern.allEvents(): matches all log events.
- FilterPattern.literal(string): if you already know what pattern expression to use, this function takes a string and will use that as the log pattern. For more information, see the Filter and Pattern Syntax.

Text patterns match if the literal strings appear in the text form of the log line.

- FilterPattern.allTerms(term, term, ...): matches if all of the given terms (substrings) appear in the log event.
- FilterPattern.anyTerm(term, term, ...): matches if any of the given terms (substrings) appear in the log event.
- FilterPattern.anyGroup([term, term, ...], [term, term, ...], ...): matches if all of the terms in any of the groups (specified as arrays) match. This is an OR match.

Examples:

// Search for lines that contain both "ERROR" and "MainThread"
const pattern1 = FilterPattern.allTerms('ERROR', 'MainThread');

// Search for lines that either contain both "ERROR" and "MainThread", or
// both "WARN" and "Deadlock".
const pattern2 = FilterPattern.anyGroup(
  ['ERROR', 'MainThread'],
  ['WARN', 'Deadlock'],
);
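To make the AND/OR semantics of the text patterns concrete, here is a small stand-alone model of the matching rules. It is only a sketch: the helpers below mimic the names of the FilterPattern functions but are plain predicates written for illustration, and case-sensitive substring matching is assumed.

```javascript
// Illustrative model of text-pattern matching, not the library's implementation.
const allTerms = (...terms) => (line) => terms.every((t) => line.includes(t));
const anyTerm = (...terms) => (line) => terms.some((t) => line.includes(t));
const anyGroup = (...groups) => (line) =>
  groups.some((group) => group.every((t) => line.includes(t)));

// Same shape as pattern2 above: (ERROR and MainThread) or (WARN and Deadlock).
const matchesPattern2 = anyGroup(['ERROR', 'MainThread'], ['WARN', 'Deadlock']);

const first = matchesPattern2('12:00 ERROR [MainThread] failed');   // true
const second = matchesPattern2('12:01 WARN Deadlock detected');     // true
const third = matchesPattern2('12:02 ERROR [Worker-1] failed');     // false
```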

JSON patterns apply if the log event is the JSON representation of an object (without any other characters, so it cannot include a prefix such as timestamp or log level). JSON patterns can make comparisons on the values inside the fields.

- Strings can be compared with = and !=. String values can start or end with a * wildcard.
- Numbers can be compared with =, !=, <, <=, >, >=.

Fields in the JSON structure are identified by referring to the complete object as $ and then descending into it, such as $.field or $.list[0].field.

- FilterPattern.stringValue(field, comparison, string): matches if the given field compares as indicated with the given string value.
- FilterPattern.numberValue(field, comparison, number): matches if the given field compares as indicated with the given numerical value.
- FilterPattern.isNull(field): matches if the given field exists and has the value null.
- FilterPattern.notExists(field): matches if the given field is not in the JSON structure.
- FilterPattern.exists(field): matches if the given field is in the JSON structure.
- FilterPattern.booleanValue(field, boolean): matches if the given field is exactly the given boolean value.
- FilterPattern.all(jsonPattern, jsonPattern, ...): matches if all of the given JSON patterns match. This makes an AND combination of the given patterns.
- FilterPattern.any(jsonPattern, jsonPattern, ...): matches if any of the given JSON patterns match. This makes an OR combination of the given patterns.

Example:

// Search for all events where the component field is equal to
// "HttpServer" and either error is true or the latency is higher
// than 1000.
const pattern = FilterPattern.all(
  FilterPattern.stringValue('$.component', '=', 'HttpServer'),
  FilterPattern.any(
    FilterPattern.booleanValue('$.error', true),
    FilterPattern.numberValue('$.latency', '>', 1000)
  )
);
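The field addressing and comparison rules for JSON patterns can be tried out locally with a small model. The sketch below is an assumption-laden illustration rather than the service's actual matcher: `resolveField`, `stringMatches`, `numberMatches` and `matchesExample` are hypothetical helpers, and a missing or wrongly typed field is simply treated as a non-match.

```javascript
// Resolve a '$.a.b[0].c' style path against a parsed JSON value.
// Returns undefined when any step along the path is missing.
function resolveField(obj, path) {
  const steps = path.replace(/^\$\.?/, '').match(/[^.[\]]+/g) || [];
  return steps.reduce((cur, key) => (cur == null ? undefined : cur[key]), obj);
}

// String comparison with '=' or '!=', allowing a leading and/or trailing '*'.
function stringMatches(actual, comparison, expected) {
  if (typeof actual !== 'string') return false;
  const lead = expected.startsWith('*');
  const trail = expected.length > 1 && expected.endsWith('*');
  const core = expected.slice(lead ? 1 : 0, trail ? -1 : undefined);
  let equal;
  if (lead && trail) equal = actual.includes(core);
  else if (lead) equal = actual.endsWith(core);
  else if (trail) equal = actual.startsWith(core);
  else equal = actual === core;
  return comparison === '=' ? equal : !equal;
}

const numberOps = {
  '=': (a, b) => a === b, '!=': (a, b) => a !== b,
  '<': (a, b) => a < b, '<=': (a, b) => a <= b,
  '>': (a, b) => a > b, '>=': (a, b) => a >= b,
};

function numberMatches(actual, comparison, expected) {
  return typeof actual === 'number' && numberOps[comparison](actual, expected);
}

// Same condition as the example above: component is "HttpServer" and
// (error is true or latency is greater than 1000).
function matchesExample(line) {
  let event;
  try { event = JSON.parse(line); } catch { return false; }
  return stringMatches(resolveField(event, '$.component'), '=', 'HttpServer') &&
    (resolveField(event, '$.error') === true ||
     numberMatches(resolveField(event, '$.latency'), '>', 1000));
}

const slow = matchesExample('{"component":"HttpServer","error":false,"latency":1500}'); // true
const fast = matchesExample('{"component":"HttpServer","error":false,"latency":200}');  // false
```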

If the log events are rows of a space-delimited table, this pattern can be used to identify the columns in that structure and add conditions on any of them. The canonical example where you would apply this type of pattern is Apache server logs.

Text that is surrounded by "..." quotes or [...] square brackets will be treated as one column.

- FilterPattern.spaceDelimited(column, column, ...): construct a SpaceDelimitedTextPattern object with the indicated columns. The columns map one-to-one onto the columns found in the log event. The string "..." may be used to specify an arbitrary number of unnamed columns anywhere in the name list (but may only be specified once).

After constructing a SpaceDelimitedTextPattern, you can use the following two members to add restrictions:

- pattern.whereString(field, comparison, string): add a string condition. The rules are the same as for JSON patterns.
- pattern.whereNumber(field, comparison, number): add a numerical condition. The rules are the same as for JSON patterns.

Multiple restrictions can be added on the same column; they must all apply.

Example:

// Search for all events where the component is "HttpServer" and the
// result code is not equal to 200.
const pattern = FilterPattern.spaceDelimited('time', 'component', '...', 'result_code', 'latency')
  .whereString('component', '=', 'HttpServer')
  .whereNumber('result_code', '!=', 200);
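How a log line is cut into columns, and how the "..." placeholder absorbs unnamed columns, can be sketched as follows. This is a simplified local model under stated assumptions, not the service's parser: `splitColumns` and `nameColumns` are hypothetical helpers, and a line whose column count does not fit the name list is treated as a non-match.

```javascript
// Split a log line into columns; "quoted text" and [bracketed text] count as one column.
function splitColumns(line) {
  const matches = line.match(/"[^"]*"|\[[^\]]*\]|\S+/g) || [];
  return matches.map((col) => (/^".*"$|^\[.*\]$/.test(col) ? col.slice(1, -1) : col));
}

// Assign names to column values. A single '...' entry stands for any number
// of unnamed columns. Returns undefined if the line does not fit the names.
function nameColumns(names, values) {
  const gap = names.indexOf('...');
  const named = {};
  if (gap === -1) {
    if (names.length !== values.length) return undefined;
    names.forEach((n, i) => { named[n] = values[i]; });
    return named;
  }
  const tail = names.length - gap - 1;          // number of names after the ellipsis
  if (values.length < gap + tail) return undefined;
  names.slice(0, gap).forEach((n, i) => { named[n] = values[i]; });
  names.slice(gap + 1).forEach((n, i) => {
    named[n] = values[values.length - tail + i];
  });
  return named;
}

const names = ['time', 'component', '...', 'result_code', 'latency'];
const row = nameColumns(names, splitColumns('12:00:01 HttpServer GET "/index.html" 500 42'));

// Same restrictions as the example above.
const isMatch = row !== undefined &&
  row.component === 'HttpServer' &&
  Number(row.result_code) !== 200;              // true
```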

0.35.0 (2019-06-19)

- cdk context (#2870) (b8a1c8e), closes #2854
- name in StageProps to stageName. (#2882) (be574a1)
- hwType to hardwareType (#2916) (1aa0589), closes #2896
- aws-sns-subscribers (#2804) (9ef899c)
- AssetProps.packaging has been removed and is now automatically discovered based on the file type.
- ZipDirectoryAsset has been removed, use aws-s3-assets.Asset.
- FileAsset has been removed, use aws-s3-assets.Asset.
- Code.directory and Code.file have been removed. Use Code.asset.
- hardwareType from hwType.
- TableOptions.pitrEnabled renamed to pointInTimeRecovery.
- TableOptions.sseEnabled renamed to serverSideEncryption.
- TableOptions.ttlAttributeName renamed to timeToLiveAttribute.
- TableOptions.streamSpecification renamed stream.
- ContainerImage.fromAsset() now takes only build directory directly (no need to pass scope or id anymore).
- ISecret.secretJsonValue renamed to secretValueFromJson.
- ParameterStoreString has been removed. Use StringParameter.fromStringParameterAttributes.
- ParameterStoreSecureString has been removed. Use StringParameter.fromSecureStringParameterAttributes.
- ParameterOptions.name was renamed to parameterName.
- newStream renamed to addStream and doesn't need a scope
- newSubscriptionFilter renamed to addSubscriptionFilter and doesn't need a scope
- newMetricFilter renamed to addMetricFilter and doesn't need a scope
- NewSubscriptionFilterProps renamed to SubscriptionProps
- NewLogStreamProps renamed to LogStreamOptions
- NewMetricFilterProps renamed to MetricFilterOptions
- JSONPattern renamed to JsonPattern
- MethodOptions.authorizerId is now called authorizer and accepts an IAuthorizer which is a placeholder interface for the authorizer resource.
- restapi.executeApiArn renamed to arnForExecuteApi.
- restapi.latestDeployment and deploymentStage are now read-only.
- EventPattern.detail is now a map.
- scheduleExpression: string is now schedule: Schedule.
- cdk.RemovalPolicy to configure the resource's removal policy.
- applyRemovalPolicy is now CfnResource.applyRemovalPolicy.
- RemovalPolicy.Orphan has been renamed to Retain.
- RemovalPolicy.Forbid has been removed, use Retain.
- RepositoryProps.retain is now removalPolicy, and defaults to Retain instead of remove since ECR is a stateful resource
- KeyProps.retain is now removalPolicy
- LogGroupProps.retainLogGroup is now removalPolicy
- LogStreamProps.retainLogStream is now removalPolicy
- DatabaseClusterProps.deleteReplacePolicy is now removalPolicy
- DatabaseInstanceNewProps.deleteReplacePolicy is now removalPolicy
- attr instead of the resource type. For example, in S3 bucket.bucketArn is now bucket.attrArn.
- propertyOverrides has been removed from all "Cfn" resources, instead users can now read/write resource properties directly on the resource class. For example, instead of lambda.propertyOverrides.runtime just use lambda.runtime.
- stageName instead of name
- Function.addLayer to addLayers and made it variadic
- IFunction.handler property
- IVersion.versionArn property (the value is at functionArn)
- SingletonLayerVersion
- LogRetention
- PolicyStatement no longer has a fluid API, and accepts a props object to be able to set the important fields.
- ImportedResourcePrincipal to UnknownPrincipal.
- managedPolicyArns renamed to managedPolicies, takes return value from ManagedPolicy.fromAwsManagedPolicyName().
- PolicyDocument.postProcess() is now removed.
- PolicyDocument.addStatement() renamed to addStatements.
- PolicyStatement is no longer IResolvable, call .toStatementJson() to retrieve the IAM policy statement JSON.
- AwsPrincipal has been removed, use ArnPrincipal instead.
- s3.StorageClass is now an enum-like class instead of a regular enum. This means that you need to call .value in order to obtain its value.
- s3.Coordinates renamed to s3.Location
- Artifact.s3Coordinates renamed to Artifact.s3Location.
- BuildSpec object.
- lambda.Runtime.NodeJS* are now lambda.Runtime.Nodejs*
- Stack API
- stack.name renamed to stack.stackName
- stack.stackName will return the concrete stack name. Use Aws.stackName to indicate { Ref: "AWS::StackName" }.
- stack.account and stack.region will return the concrete account/region only if they are explicitly specified when the stack is defined (under the env prop). Otherwise, they will return a token that resolves to the AWS::AccountId and AWS::Region intrinsic references. Use Context.getDefaultAccount() and Context.getDefaultRegion() to obtain the defaults passed through the toolkit in case those are needed. Use Token.isUnresolved(v) to check if you have a concrete or intrinsic.
- stack.logicalId has been removed. Use stack.getLogicalId()
- stack.env has been removed, use stack.account, stack.region and stack.environment instead
- stack.accountId renamed to stack.account (to allow treating account more abstractly)
- AvailabilityZoneProvider can now be accessed through Context.getAvailabilityZones()
- SSMParameterProvider can now be accessed through Context.getSsmParameter()
- parseArn is now Arn.parse
- arnFromComponents is now arn.format
- node.lock and node.unlock are now private
- stack.requireRegion and requireAccountId have been removed. Use Token.unresolved(stack.region) instead
- stack.parentApp has been removed. Use App.isApp(stack.node.root) instead.
- stack.missingContext is now private
- stack.renameLogical has been renamed to stack.renameLogicalId
- IAddressingScheme, HashedAddressingScheme and LogicalIDs are now internal. Override Stack.allocateLogicalId to customize how logical IDs are allocated to resources.
- --rename, and the stack names are now immutable on the stack artifact.
- @aws-cdk/aws-sns-subscribers package.
- roleName in RoleProps is now of type PhysicalName
- bucketName in BucketProps is now of type PhysicalName
- roleName in RoleProps is now of type PhysicalName

FAQs

The CDK Construct Library for AWS::Logs

The npm package @aws-cdk/aws-logs receives a total of 85,520 weekly downloads. As such, @aws-cdk/aws-logs popularity was classified as popular.

We found that @aws-cdk/aws-logs demonstrated an unhealthy version release cadence and project activity because the last version was released a year ago. It has 4 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Product

Socket is launching Ruby support for all users. Enhance your Rails projects with AI-powered security scans for vulnerabilities and supply chain threats. Now in Beta!

Product

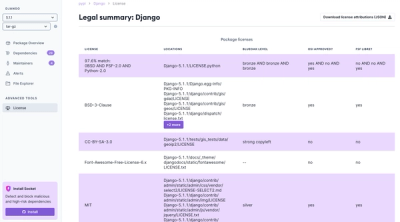

Ensure open-source compliance with Socket’s License Enforcement Beta. Set up your License Policy and secure your software!

Product

We're launching a new set of license analysis and compliance features for analyzing, managing, and complying with licenses across a range of supported languages and ecosystems.