Product

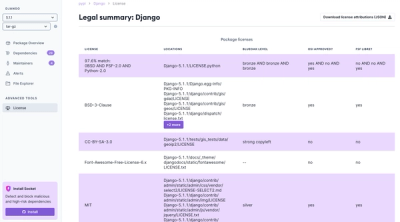

Introducing License Enforcement in Socket

Ensure open-source compliance with Socket’s License Enforcement Beta. Set up your License Policy and secure your software!

@clickhouse/client

Advanced tools

@clickhouse/client is an npm package that provides a client for interacting with ClickHouse, a columnar database management system. It allows you to perform various database operations such as querying, inserting data, and managing database schemas.

Querying Data

This feature allows you to execute SQL queries against a ClickHouse database. The code sample demonstrates how to create a client instance and perform a SELECT query.

const { ClickHouse } = require('@clickhouse/client');

const client = new ClickHouse({

url: 'http://localhost:8123',

basicAuth: {

username: 'default',

password: '',

},

});

async function queryData() {

const rows = await client.query('SELECT * FROM my_table').toPromise();

console.log(rows);

}

queryData();Inserting Data

This feature allows you to insert data into a ClickHouse table. The code sample demonstrates how to create a client instance and perform an INSERT operation.

const { ClickHouse } = require('@clickhouse/client');

const client = new ClickHouse({

url: 'http://localhost:8123',

basicAuth: {

username: 'default',

password: '',

},

});

async function insertData() {

const result = await client.insert('INSERT INTO my_table (column1, column2) VALUES', [

[1, 'value1'],

[2, 'value2'],

]).toPromise();

console.log(result);

}

insertData();Managing Database Schemas

This feature allows you to manage database schemas, such as creating or altering tables. The code sample demonstrates how to create a new table in a ClickHouse database.

const { ClickHouse } = require('@clickhouse/client');

const client = new ClickHouse({

url: 'http://localhost:8123',

basicAuth: {

username: 'default',

password: '',

},

});

async function createTable() {

const result = await client.query(`

CREATE TABLE IF NOT EXISTS my_table (

id UInt32,

name String

) ENGINE = MergeTree()

ORDER BY id

`).toPromise();

console.log(result);

}

createTable();The 'clickhouse' npm package is another client for interacting with ClickHouse databases. It offers similar functionalities such as querying and inserting data, but may have different API conventions and additional features.

The 'node-clickhouse' npm package is a client for ClickHouse that supports both HTTP and native protocols. It offers comprehensive functionalities for interacting with ClickHouse databases, including querying, inserting, and managing schemas.

Official Node.js client for ClickHouse, written purely in TypeScript, thoroughly tested with actual ClickHouse versions.

It is focused on data streaming for both inserts and selects using standard Node.js Streaming API.

The client is tested with the following ClickHouse and Node.js versions:

| Node.js | ClickHouse | Status |

|---|---|---|

| 14.x | 22.8 | ✔ |

| 16.x | 22.8 | ✔ |

| 18.x | 22.8 | ✔ |

| 14.x | 22.9 | ✔ |

| 16.x | 22.9 | ✔ |

| 18.x | 22.9 | ✔ |

npm i @clickhouse/client

Currently, only HTTP(s) protocol is supported.

A very basic connection to a single local ClickHouse instance with default settings (for example, if it is running as a Docker container as described in the contribution guide):

import { createClient } from '@clickhouse/client'

const client = createClient()

Basic HTTPS connection:

import { createClient } from '@clickhouse/client'

const client = createClient({

host: `https://<YOUR_CLICKHOUSE_HOST>:8443`,

password: '<YOUR_CLICKHOUSE_PASSWORD>',

database: '<YOUR_CLICKHOUSE_DATABASE>',

})

Using custom ClickHouse settings and forced HTTP compression (GZIP) for both request and response:

import { createClient } from '@clickhouse/client'

const client = createClient({

host: `https://<YOUR_CLICKHOUSE_HOST>:8443`,

password: '<YOUR_CLICKHOUSE_PASSWORD>',

database: '<YOUR_CLICKHOUSE_DATABASE>',

compression: {

request: true,

response: true,

},

clickhouse_settings: {

insert_quorum: '2',

},

})

Closing the connection:

await client.close()

See ClickHouseClientConfigOptions

export interface ClickHouseClientConfigOptions {

// a valid URL, for example, https://myclickhouseserver.org:8123

// if unset, defaults to http://localhost:8123

host?: string

// milliseconds, default 10_000

connect_timeout?: number

// milliseconds, default 300_000

request_timeout?: number

// For HTTP protocol, the connection pool has infinite size by default

// it can be overriden with this setting

max_open_connections?: number

// HTTP compression settings. Uses GZIP.

// For more details, see https://clickhouse.com/docs/en/interfaces/http/#compression

compression?: {

// enabled by default - the server will compress the data it sends to you in the response

response?: boolean

// disabled by default - the server will decompress the data which you pass in the request

request?: boolean

}

// if not set, 'default' is used

username?: string

// if not set, an empty password is used

password?: string

// used to identify the connection on the server side, if not set, uses 'clickhouse-js'

application?: string

// if not set, 'default' is used

database?: string

// additional settings to send with every query, such as `date_time_input_format` or `insert_quorum`

// see https://clickhouse.com/docs/en/operations/settings/settings/

// typings should support most of the options listed there

clickhouse_settings?: ClickHouseSettings

// logger settings

log?: {

// disabled by default, can be enabled using this setting

enable?: boolean

// use it to override default clickhouse-js logger with your own implementation

LoggerClass?: new (enabled: boolean) => Logger

}

}

See also:

| Format | Input (array) | Input (stream) | Output (JSON) | Output (text) |

|---|---|---|---|---|

| JSON | ❌ | ❌ | ✔️ | ✔️ |

| JSONEachRow | ✔️ | ✔️ | ✔️ | ✔️ |

| JSONStringsEachRow | ✔️ | ✔️ | ✔️ | ✔️ |

| JSONCompactEachRow | ✔️ | ✔️ | ✔️ | ✔️ |

| JSONCompactStringsEachRow | ✔️ | ✔️ | ✔️ | ✔️ |

| JSONCompactEachRowWithNames | ✔️ | ✔️ | ✔️ | ✔️ |

| JSONCompactEachRowWithNamesAndTypes | ✔️ | ✔️ | ✔️ | ✔️ |

| JSONCompactStringsEachRowWithNames | ✔️ | ✔️ | ✔️ | ✔️ |

| JSONCompactStringsEachRowWithNamesAndTypes | ✔️ | ✔️ | ✔️ | ✔️ |

| CSV | ❌ | ✔️ | ❌ | ✔️ |

| CSVWithNames | ❌ | ✔️ | ❌ | ✔️ |

| CSVWithNamesAndTypes | ❌ | ✔️ | ❌ | ✔️ |

| TabSeparated | ❌ | ✔️ | ❌ | ✔️ |

| TabSeparatedRaw | ❌ | ✔️ | ❌ | ✔️ |

| TabSeparatedWithNames | ❌ | ✔️ | ❌ | ✔️ |

| TabSeparatedWithNamesAndTypes | ❌ | ✔️ | ❌ | ✔️ |

| CustomSeparated | ❌ | ✔️ | ❌ | ✔️ |

| CustomSeparatedWithNames | ❌ | ✔️ | ❌ | ✔️ |

| CustomSeparatedWithNamesAndTypes | ❌ | ✔️ | ❌ | ✔️ |

The entire list of ClickHouse input and output formats is available here.

| Type | Status | JS type |

|---|---|---|

| UInt8/16/32 | ✔️ | number |

| UInt64/128/256 | ✔️❗- see below | string |

| Int8/16/32 | ✔️ | number |

| Int64/128/256 | ✔️❗- see below | string |

| Float32/64 | ✔️ | number |

| Decimal | ✔️❗- see below | number |

| Boolean | ✔️ | boolean |

| String | ✔️ | string |

| FixedString | ✔️ | string |

| UUID | ✔️ | string |

| Date32/64 | ✔️❗- see below | string |

| DateTime32/64 | ✔️❗- see below | string |

| Enum | ✔️ | string |

| LowCardinality | ✔️ | string |

| Array(T) | ✔️ | Array<JS type for T> |

| JSON | ✔️ | object |

| Nested | ❌ | - |

| Tuple | ✔️ | Tuple |

| Nullable(T) | ✔️ | JS type for T or null |

| IPv4 | ✔️ | string |

| IPv6 | ✔️ | string |

| Point | ✔️ | [ number, number ] |

| Ring | ✔️ | Array<Point> |

| Polygon | ✔️ | Array<Ring> |

| MultiPolygon | ✔️ | Array<Polygon> |

| Map(K, V) | ✔️ | Record<JS type for K, JS type for V> |

The entire list of supported ClickHouse formats is available here.

Since we use data streaming for inserts without the VALUES clause (which does additional type conversion), Date* type columns can be only inserted as strings and not as Unix time epoch. It can be possibly changed with the future ClickHouse database releases. Please refer to the corresponding integration tests for more examples.

Since we do not use VALUES clause and there is no additional type conversion, it is not possible to insert Decimal* type columns as strings, only as numbers. This is a suboptimal approach as it might end in float precision loss. Thus, it is recommended to avoid JSON* formats when using Decimals as of now. Consider TabSeparated* / CSV* / CustomSeparated* formats families for that kind of workflows. Please refer to the data types tests for more concrete examples on how to avoid precision loss.

Though the server can accept it as a number, it is by default returned as a string in JSON* family output formats to avoid integer overflow as max values for these types are bigger than Number.MAX_SAFE_INTEGER.

This behavior, however, can be modified with output_format_json_quote_64bit_integers setting.

Used for most statements that can have a response, such as SELECT, or for sending DDLs such as CREATE TABLE. For data insertion, please consider using the dedicated method insert which is described next.

interface QueryParams {

// Query to execute that might return some data

// IMPORTANT: do not specify the FORMAT clause here

// use `format` param instead.

query: string

// Desired OUTPUT data format to be appended the query as ` FORMAT $format`

// It is extracted to the separate param

// as we may need to apply some additional request logic

// based on the desired format

format?: DataFormat

// ClickHouse settings that can be applied on query level, such as `date_time_input_format`

clickhouse_settings?: ClickHouseSettings

// See https://clickhouse.com/docs/en/interfaces/http/#cli-queries-with-parameters for more details

// IMPORTANT: that you should not prefix it with `param_` here, client will do that for you

query_params?: Record<string, unknown>

// A query can be aborted using this standard AbortSignal instance

// Please refer to the usage examples for more details

abort_signal?: AbortSignal

}

class ClickHouseClient {

query(params: QueryParams): Promise<Rows> {}

// ...

}

Provides several convenience methods for data processing in your application.

class Rows {

// Consume the entire stream and get the contents as a string

// Can be used with any DataFormat

// Should be called only once

text(): Promise<string> {}

// Consume the entire stream and get the contents as a JS object

// Can be used only with JSON formats

// Should be called only once

json<T>(): Promise<T> {}

// Returns a readable stream of Row instances for responses that can be streamed (i.e. all except JSON)

// Should be called only once

// NB: if called for the second time, the second stream will be just empty

stream(): Stream.Readable {}

}

class Row {

// Get the content of the row as plain string

text(): string {}

// Get the content of the row as a JS object

json<T>(): T {}

}

Primary method for data insertion. It can work with both Stream.Readable (all formats except JSON) and plain Array<T> (JSON* family formats only). It is recommended to avoid arrays in case of large inserts to reduce application memory consumption, and consider streaming for most of the use cases.

Should be awaited, but it does not return anything.

interface InsertParams<T> {

// Table name to insert the data into

table: string

// Stream.Readable will work for all formats except JSON

// Array will work only for JSON* formats

values: ReadonlyArray<T> | Stream.Readable

// Desired INPUT data format to be appended the statement as ` FORMAT $format`

// It is extracted to the separate param

// as we may need to apply some additional request logic

// based on the desired format

format?: DataFormat

// ClickHouse settings that can be applied on statement level, such as `insert_quorum`

clickhouse_settings?: ClickHouseSettings

// See https://clickhouse.com/docs/en/interfaces/http/#cli-queries-with-parameters for more details

// IMPORTANT: that you should not prefix it with `param_` here, client will do that for you

query_params?: Record<string, unknown>

// A query can be aborted using this standard AbortSignal instance

// Please refer to the usage examples for more details

abort_signal?: AbortSignal

}

class ClickHouseClient {

insert(params: InsertParams): Promise<void> {}

// ...

}

Can be used for statements that do not have any output, when format clause is not applicable, or when you are not interested in the response at all. An example of such statement can be CREATE TABLE or ALTER TABLE.

Should be awaited.

Optionally, it returns a readable stream that can be consumed on the application side if you need it for some reason. But in that case you might consider using query instead.

interface ExecParams {

// Statement to execute

query: string

// ClickHouse settings that can be applied on query level, such as `date_time_input_format`

clickhouse_settings?: ClickHouseSettings

// See https://clickhouse.com/docs/en/interfaces/http/#cli-queries-with-parameters for more details

// IMPORTANT: that you should not prefix it with `param_` here, client will do that for you

query_params?: Record<string, unknown>

// A query can be aborted using this standard AbortSignal instance

// Please refer to the usage examples for more details

abort_signal?: AbortSignal

}

class ClickHouseClient {

exec(params: ExecParams): Promise<Stream.Readable> {}

// ...

}

Might be useful to check the connectivity to the ClickHouse server. Returns true if server can be reached. Can throw a standard Node.js Error such as ECONNREFUSED.

class ClickHouseClient {

ping(): Promise<boolean> {}

// ...

}

Use it in your application graceful shutdown handler, as it properly closes all the open connections.

class ClickHouseClient {

close(): Promise<void> {}

// ...

}

await client.exec({

query: `

CREATE TABLE foobar

(id UInt64, name String)

ENGINE MergeTree()

ORDER BY (id)

`,

})

await client.exec({

query: `

CREATE TABLE foobar ON CLUSTER '{cluster}'

(id UInt64, name String)

ENGINE ReplicatedMergeTree(

'/clickhouse/{cluster}/tables/{database}/{table}/{shard}',

'{replica}'

)

ORDER BY (id)

`,

// Recommended for cluster usage to avoid situations

// where a query processing error occurred after the response code

// and HTTP headers were sent to the client.

// See https://clickhouse.com/docs/en/interfaces/http/#response-buffering

clickhouse_settings: {

wait_end_of_query: 1,

},

})

Note that ENGINE and ON CLUSTER clauses can be omitted entirely here.

ClickHouse cloud will automatically use ReplicatedMergeTree with appropriate settings in this case.

await client.exec({

query: `

CREATE TABLE foobar

(id UInt64, name String)

ORDER BY (id)

`,

// Recommended for cluster usage to avoid situations

// where a query processing error occurred after the response code

// and HTTP headers were sent to the client.

// See https://clickhouse.com/docs/en/interfaces/http/#response-buffering

clickhouse_settings: {

wait_end_of_query: 1,

},

})

await client.insert({

table: tableName,

// structure should match the desired format, JSONEachRow in this example

values: [

{ id: 42, name: 'foo' },

{ id: 42, name: 'bar' },

],

format: 'JSONEachRow',

})

await client.insert({

table: tableName,

// structure should match the desired format, JSONCompactEachRow in this example

values: Stream.Readable.from([

[42, 'foo'],

[42, 'bar'],

]),

format: 'JSONCompactEachRow',

})

const stream = new Stream.Readable({

objectMode: true, // required for JSON* family formats

read() {

/* stub */

},

})

// ... your (async) code pushing the values into the stream...

await client.insert({

table: tableName,

values: stream,

format: 'JSONEachRow', // or any other desired JSON* format

})

// close the stream when finished by pushing a null value there

stream.push(null)

await client.insert({

table: tableName,

// structure should match the desired format, TabSeparated in this example

values: Stream.Readable.from(['42,foobar'], {

objectMode: false, // required for "raw" family formats

}),

format: 'TabSeparated', // or any other desired "raw" format

})

const stream = new Stream.Readable({

objectMode: false, // required for "raw" family formats

read() {

/* stub */

},

})

// ... your (async) code pushing the values into the stream...

await client.insert({

table: tableName,

values: stream,

format: 'TabSeparated', // or any other desired "raw" format

})

// close the stream when finished by pushing a null value there

stream.push(null)

const filename = Path.resolve(process.cwd(), 'path/to/file.csv')

await client.insert({

table: tableName,

values: Fs.createReadStream(filename),

format: 'CSVWithNames',

})

See also:

const rows = await client.query({

query: 'SELECT number FROM system.numbers LIMIT 5',

format: 'JSONCompactEachRow',

})

const result = await rows.json()

// result is [['0'], ['1'], ['2'], ['3'], ['4']]

const rows = await client.query({

query: 'SELECT number FROM system.numbers LIMIT 2',

format: 'JSON',

})

const result = await rows.json<ResponseJSON<{ number: string }>>()

/* result will look like

{

"meta": [ { "name": "number", "type": "UInt64" } ],

"data": [ { "number": "0"}, { "number": "1" } ],

"rows": 2,

"rows_before_limit_at_least": 2,

"statistics": {

"elapsed": 0.00013129,

"rows_read": 2,

"bytes_read": 16

}

}

*/

const rows = await client.query({

query: `SELECT number FROM system.numbers LIMIT 2`,

format: 'CSV',

})

const result = await rows.text()

// result is now '0\n1\n'

const rows = await client.query({

query: `SELECT * from ${tableName}`,

format: 'JSONCompactEachRow',

})

for await (const row of rows.stream()) {

const data = (row as Row).json()

// ... your code processing the data here

}

const rows = await client.query({

query: 'SELECT plus({val1: Int32}, {val2: Int32})',

format: 'CSV',

query_params: {

val1: 10,

val2: 20,

},

})

const result = await rows.text()

// result is '30\n'

await client.insert({

table: tableName,

values: [

{ id: 42, name: 'foo' },

{ id: 42, name: 'bar' },

],

format: 'JSONEachRow',

clickhouse_settings: { insert_quorum: '2' },

})

import { AbortController } from 'node-abort-controller'

const controller = new AbortController()

const selectPromise = client.query({

query: 'SELECT sleep(3)',

format: 'CSV',

abort_signal: controller.signal as AbortSignal,

})

controller.abort()

// selectPromise is now rejected with "The request was aborted" message

ClickHouseClientConfigOptions.max_open_connections setting.ClickHouseClientConfigOptions.compression.request for that.Check out our contributing guide.

FAQs

Official JS client for ClickHouse DB - Node.js implementation

The npm package @clickhouse/client receives a total of 597,136 weekly downloads. As such, @clickhouse/client popularity was classified as popular.

We found that @clickhouse/client demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 4 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Product

Ensure open-source compliance with Socket’s License Enforcement Beta. Set up your License Policy and secure your software!

Product

We're launching a new set of license analysis and compliance features for analyzing, managing, and complying with licenses across a range of supported languages and ecosystems.

Product

We're excited to introduce Socket Optimize, a powerful CLI command to secure open source dependencies with tested, optimized package overrides.