

arehs aims to make large batch processing as efficient as possible, using an approach oriented toward event-driven chunk processing.

It does this by starting the next asynchronous task as soon as a running one completes, which keeps the concurrency slots densely packed, rather than waiting for the whole current batch of tasks to finish.

In that way we can achieve multiple things:

arehs supports both CommonJS and ES Modules.

```javascript
// CommonJS
const { Arehs } = require("arehs");

// ES Modules
import { Arehs } from "arehs";
```

- `create`: creates an Arehs instance from an array of data.
- `withConcurrency`: sets the degree of parallelism and returns the current instance (default: 10).
- `timeoutLimit`: defaults to 0 (disabled). If it is greater than 0, an error is thrown when an operation takes longer than the timeout, in milliseconds.
- `stopOnFailure`: if set to `true`, processing stops on failure and the corresponding events are emitted.
- `retryLimit`: sets a limit on the number of retries on failure.
- `mapAsync`: starts asynchronously processing the input data and returns the results.
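The options above are described only in prose. As a rough illustration of what `timeoutLimit` and `retryLimit` semantics can look like, here is a from-scratch sketch in plain JavaScript. The helpers `withTimeout` and `withRetry` are hypothetical names, they are not part of the arehs API, and this is not how arehs is implemented; it only shows one way to get the described behavior.

```javascript
// Hypothetical helpers illustrating timeout and retry semantics.
// They are NOT part of arehs; names and behavior are assumptions.

// Reject if `task()` does not settle within `timeoutMs` milliseconds.
// A timeoutMs of 0 (like the documented default) disables the timeout.
async function withTimeout(task, timeoutMs) {
  if (timeoutMs <= 0) return task();
  let timer;
  const timeout = new Promise((_, reject) => {
    timer = setTimeout(
      () => reject(new Error(`Operation timed out after ${timeoutMs}ms`)),
      timeoutMs
    );
  });
  try {
    // Whichever settles first wins.
    return await Promise.race([task(), timeout]);
  } finally {
    // Always clear the timer so it cannot fire later.
    clearTimeout(timer);
  }
}

// Call `task()` once, then retry up to `retryLimit` more times on failure.
// If every attempt fails, rethrow the last error.
async function withRetry(task, retryLimit) {
  let lastError;
  for (let attempt = 0; attempt <= retryLimit; attempt++) {
    try {
      return await task();
    } catch (err) {
      lastError = err;
    }
  }
  throw lastError;
}
```

Composed as `withRetry(() => withTimeout(task, 500), 3)`, each attempt gets its own 500 ms budget, and a timeout counts as a failure that can be retried.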

When `stopOnFailure` is set to `true`, processing stops on failure and the corresponding events are emitted.

When `retryLimit` is greater than 0, failed operations are retried up to that limit, which can be useful for handling transient errors and making data processing more resilient.

```javascript
import { Arehs } from "arehs";

const dataArr = [
  { id: 1, name: "John" },
  { id: 2, name: "Alice" },
  { id: 3, name: "Bob" }
];

// someAsyncFunction is a placeholder for your own async operation.
// Top-level await requires an ES module.
const result = await Arehs.create(dataArr)
  .withConcurrency(10)
  .mapAsync(async (data) => someAsyncFunction(data));
```

Tests have shown that Arehs can be about 30% faster than running the same tasks in chunks with `Promise.all`.

```javascript
import { Arehs } from "arehs";

// Simulated async task that resolves with its input after 150 to 1150 ms.
const delay = (i) =>
  new Promise((resolve) => {
    setTimeout(() => resolve(i), 150 + Math.random() * 1000);
  });

(async () => {
  const tasks = Array.from({ length: 1000 }, (_, i) => i);

  // Promise pool: at most 50 tasks in flight at any time.
  const startArehs = performance.now();
  await Arehs.create(tasks).withConcurrency(50).mapAsync(delay);
  const endArehs = performance.now();
  console.log(`Arehs: ${endArehs - startArehs}ms`);

  // Chunked Promise.all: each chunk of 50 waits for its slowest task.
  // splice() empties `tasks`, so this run must come after the Arehs run.
  const startPromiseAll = performance.now();
  while (tasks.length > 0) {
    const chunkedTasks = tasks.splice(0, 50);
    await Promise.all(chunkedTasks.map(delay));
  }
  const endPromiseAll = performance.now();
  console.log(`Promise.all: ${endPromiseAll - startPromiseAll}ms`);
})();
```

```
promiseAllTime: 19.859867874979972(s)
promisePoolTime: 13.55725229203701(s)
```

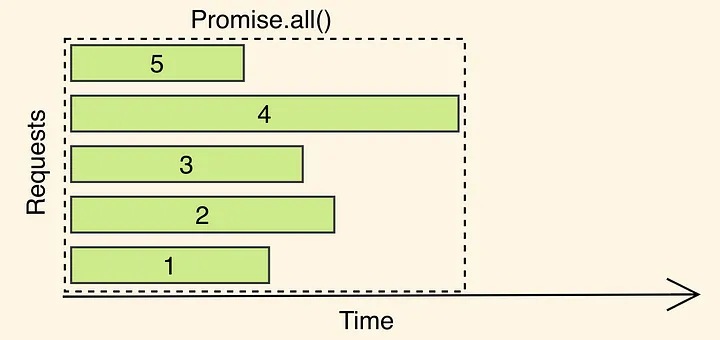

As you can see, `Promise.all` takes as long as the slowest promise in each batch.

So your application is basically “doing nothing” while it waits for the slowest request to finish: the slots freed by the faster promises stay idle.

The longest promise in the array, number 4, determines the chunk's execution time.

This is inefficient, because the next promises do no work until that longest promise has finished.
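The cost of waiting on the slowest task can be made concrete with a small calculation. The sketch below assumes every task has a known, fixed duration and ignores scheduling overhead. It computes the total time for chunked `Promise.all` (each chunk costs as much as its slowest member) and for a pool that starts the next task on whichever slot frees up first. The function names are hypothetical, and the durations are made-up illustrative values, not measurements.

```javascript
// Total time when tasks run in fixed chunks and each chunk waits for
// its slowest member (the chunked Promise.all pattern).
function chunkedMakespan(durations, concurrency) {
  let total = 0;
  for (let i = 0; i < durations.length; i += concurrency) {
    total += Math.max(...durations.slice(i, i + concurrency));
  }
  return total;
}

// Total time when, in input order, each task is started on whichever
// slot frees up first (the promise pool pattern).
function poolMakespan(durations, concurrency) {
  const slotFreeAt = new Array(Math.min(concurrency, durations.length)).fill(0);
  for (const d of durations) {
    // Pick the slot that becomes free earliest.
    let earliest = 0;
    for (let s = 1; s < slotFreeAt.length; s++) {
      if (slotFreeAt[s] < slotFreeAt[earliest]) earliest = s;
    }
    slotFreeAt[earliest] += d;
  }
  return Math.max(0, ...slotFreeAt);
}

// Illustrative durations in ms: one slow task in each chunk of 4.
const durations = [100, 100, 100, 400, 100, 100, 100, 400];
console.log(chunkedMakespan(durations, 4)); // 800
console.log(poolMakespan(durations, 4)); // 600
```

With eight equal 100 ms tasks at concurrency 4, both functions return 200: the pool only pays off when task durations vary.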

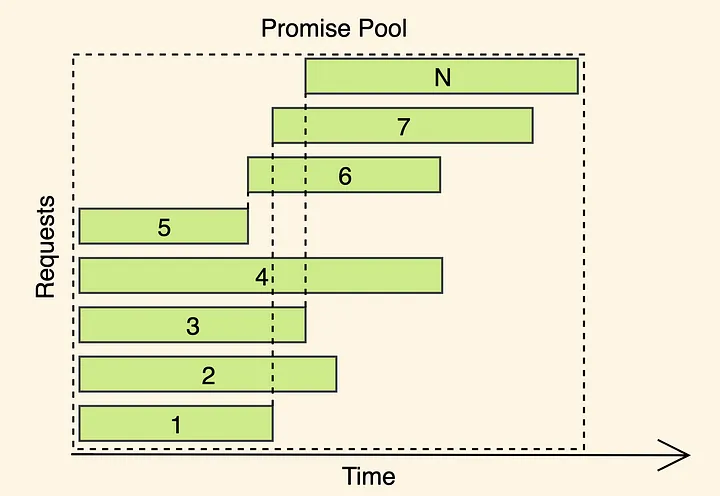

Arehs aims to make the most of Node.js's main thread by implementing the Promise Pool pattern.

To achieve better utilization, we need to densely pack the API calls (or any other async tasks) so that we do not wait for the longest call to complete; instead, we schedule the next call as soon as any running call finishes.
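The dense-packing idea fits in a few lines of plain JavaScript. The following is a generic promise pool written from scratch for illustration; `promisePool` is a hypothetical name and this is not the arehs source. It starts up to `concurrency` workers that each pull the next unclaimed index from a shared counter, so a new task begins as soon as any running one settles, and results are stored by index to preserve input order.

```javascript
// Generic promise pool sketch (hypothetical helper, not the arehs source).
// Runs `mapper(item, index)` over `items` with at most `concurrency`
// calls in flight, and resolves with the results in input order.
async function promisePool(items, concurrency, mapper) {
  const results = new Array(items.length);
  let nextIndex = 0;

  // Each worker repeatedly claims the next unclaimed index. The claim
  // (`nextIndex++`) is synchronous, so no two workers take the same index.
  async function worker() {
    while (nextIndex < items.length) {
      const i = nextIndex++;
      results[i] = await mapper(items[i], i);
    }
  }

  const workerCount = Math.max(1, Math.min(concurrency, items.length));
  const workers = Array.from({ length: workerCount }, () => worker());
  await Promise.all(workers);
  return results;
}

// Usage: with 2 slots, the 100 ms task finishes first and its slot
// immediately picks up the 200 ms task.
const sleep = (ms, value) =>
  new Promise((resolve) => setTimeout(() => resolve(value), ms));
promisePool([300, 100, 200], 2, (ms) => sleep(ms, ms)).then(console.log); // [ 300, 100, 200 ]
```

In this sketch, if any call rejects, the returned promise rejects right away, but the other workers keep pulling tasks in the background; deciding what should happen in that case is the kind of policy the documented `stopOnFailure` option addresses.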

Is this always better than Promise.all?

No, there is no silver bullet.

It can improve your application's performance when you make many API calls or other asynchronous operations.

However, it may not make much difference when each promise takes roughly the same amount of time.

If `Promise.all` no longer gives you the performance you need in your environment, you can give Arehs a try; if `Promise.all` is good enough, you don't need it.

Therefore, adopt Arehs in projects that need performance improvements only after testing it thoroughly.

We hope it helps. Thank you.
