Crosslingual Coreference

Coreference is amazing, but the data required to train a model is very scarce. In our case, the available training data for non-English languages also proved to be poorly annotated. Crosslingual Coreference therefore relies on the assumption that a model trained on English data with cross-lingual embeddings should also work for languages with similar sentence structures.

Install

pip install crosslingual-coreference

Quickstart

from crosslingual_coreference import Predictor

text = (
    "Do not forget about Momofuku Ando! He created instant noodles in Osaka. At"
    " that location, Nissin was founded. Many students survived by eating these"
    " noodles, but they don't even know him."
)

predictor = Predictor(
    language="en_core_web_sm", device=-1, model_name="minilm"
)

# Resolve a single text.
print(predictor.predict(text)["resolved_text"])

# Resolve a batch of texts; one result is returned per input text.
print(predictor.pipe([text])[0]["resolved_text"])

Models

As of now, four models are available: "spanbert", "info_xlm", "xlm_roberta" and "minilm", which scored 83, 77, 74 and 74 on OntoNotes Release 5.0 English data, respectively.

- The "minilm" model offers the best trade-off between quality and speed for both multilingual and English texts.

- The "info_xlm" model produces the best quality for multilingual texts.

- The AllenNLP "spanbert" model produces the best quality for English texts.
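The recommendations above can be captured in a small lookup. This is only a sketch: `ONTONOTES_SCORES` and `pick_model` are hypothetical names introduced for illustration and are not part of the package; the scores and choices are copied from the list above.

```python
# Scores on OntoNotes Release 5.0 English data, as listed above.
ONTONOTES_SCORES = {
    "spanbert": 83,
    "info_xlm": 77,
    "xlm_roberta": 74,
    "minilm": 74,
}


def pick_model(multilingual: bool, prefer_speed: bool = False) -> str:
    """Return a model name following the recommendations above (hypothetical helper)."""
    if prefer_speed:
        # "minilm" is described as the best quality/speed trade-off in both settings.
        return "minilm"
    # Otherwise take the model described as best quality for the setting.
    return "info_xlm" if multilingual else "spanbert"
```

The returned string could then be passed as `model_name` when constructing a `Predictor`.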

Chunking/batching to resolve out-of-memory (OOM) errors

from crosslingual_coreference import Predictor

predictor = Predictor(
    language="en_core_web_sm",
    device=0,
    model_name="minilm",
    chunk_size=2500,
    chunk_overlap=2,
)
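The idea behind chunking with overlap can be sketched in plain Python. The function below is a hypothetical illustration, not the library's implementation. It assumes `chunk_size` is a soft character budget per chunk and `chunk_overlap` is the number of trailing sentences repeated at the start of the next chunk; `Predictor` may interpret these settings differently.

```python
from typing import List


def chunk_sentences(
    sentences: List[str], chunk_size: int, chunk_overlap: int
) -> List[List[str]]:
    """Group sentences into chunks of roughly `chunk_size` characters.

    Assumption (not taken from the library): each new chunk starts with the
    last `chunk_overlap` sentences of the previous chunk as shared context.
    The budget is soft, so a chunk can exceed it when the carried-over
    sentences plus the next sentence are longer than `chunk_size`.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if chunk_overlap < 0:
        raise ValueError("chunk_overlap must not be negative")

    chunks: List[List[str]] = []
    current: List[str] = []
    current_len = 0
    for sentence in sentences:
        # Close the current chunk when this sentence would exceed the budget.
        if current and current_len + len(sentence) > chunk_size:
            chunks.append(current)
            # Carry the trailing sentences over into the next chunk.
            current = current[-chunk_overlap:] if chunk_overlap else []
            current_len = sum(len(s) for s in current)
        current.append(sentence)
        current_len += len(sentence)
    if current:
        chunks.append(current)
    return chunks


example = chunk_sentences(["A b.", "C d.", "E f.", "G h."], chunk_size=8, chunk_overlap=1)
# example == [["A b.", "C d."], ["C d.", "E f."], ["E f.", "G h."]]
```

The overlap gives each chunk some preceding context, so a pronoun at the start of a chunk can still be linked to a mention from the end of the previous one. Smaller chunks lower peak memory at the cost of less context per chunk.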

Use spaCy pipeline

import spacy

# Importing the package ensures the "xx_coref" factory is registered with spaCy.
import crosslingual_coreference

text = (
    "Do not forget about Momofuku Ando! He created instant noodles in Osaka. At"
    " that location, Nissin was founded. Many students survived by eating these"
    " noodles, but they don't even know him."
)

nlp = spacy.load("en_core_web_sm")
nlp.add_pipe(
    "xx_coref", config={"chunk_size": 2500, "chunk_overlap": 2, "device": 0}
)

doc = nlp(text)

# The component stores its results in custom extension attributes on the Doc.
print(doc._.coref_clusters)
print(doc._.resolved_text)
print(doc._.cluster_heads)

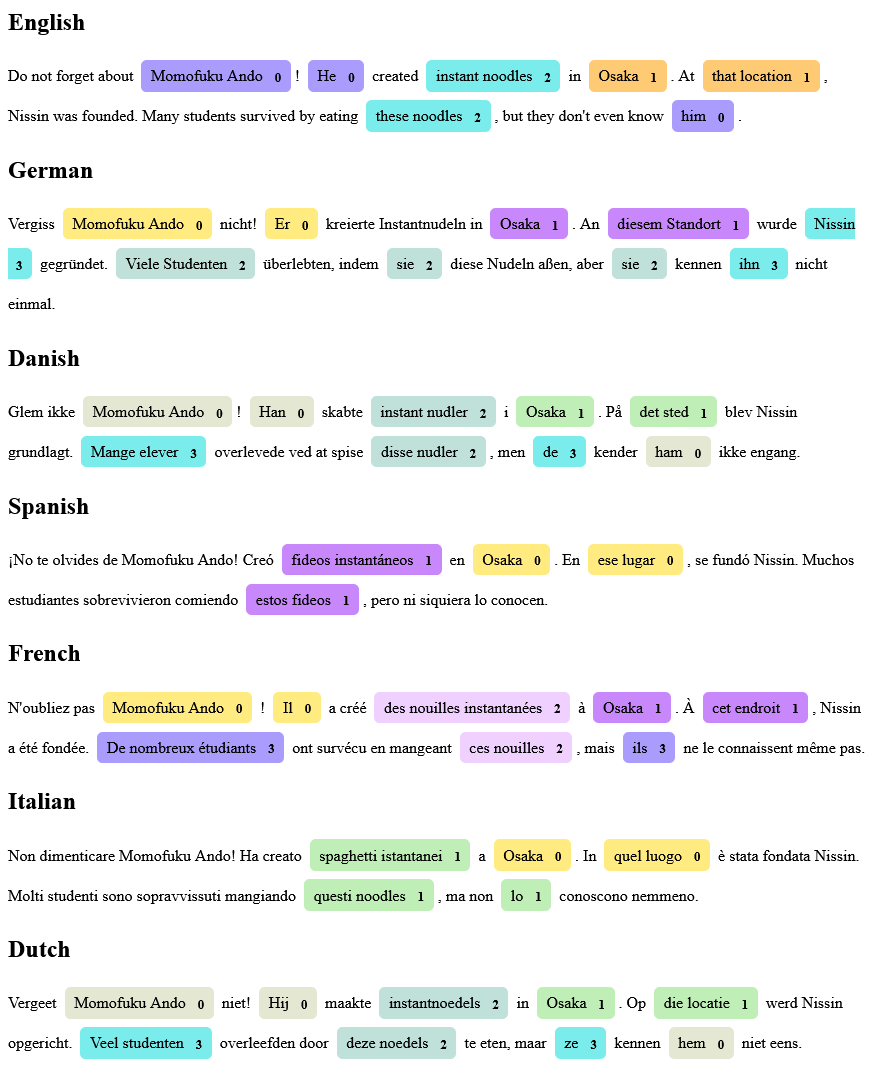

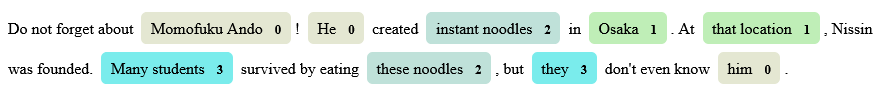

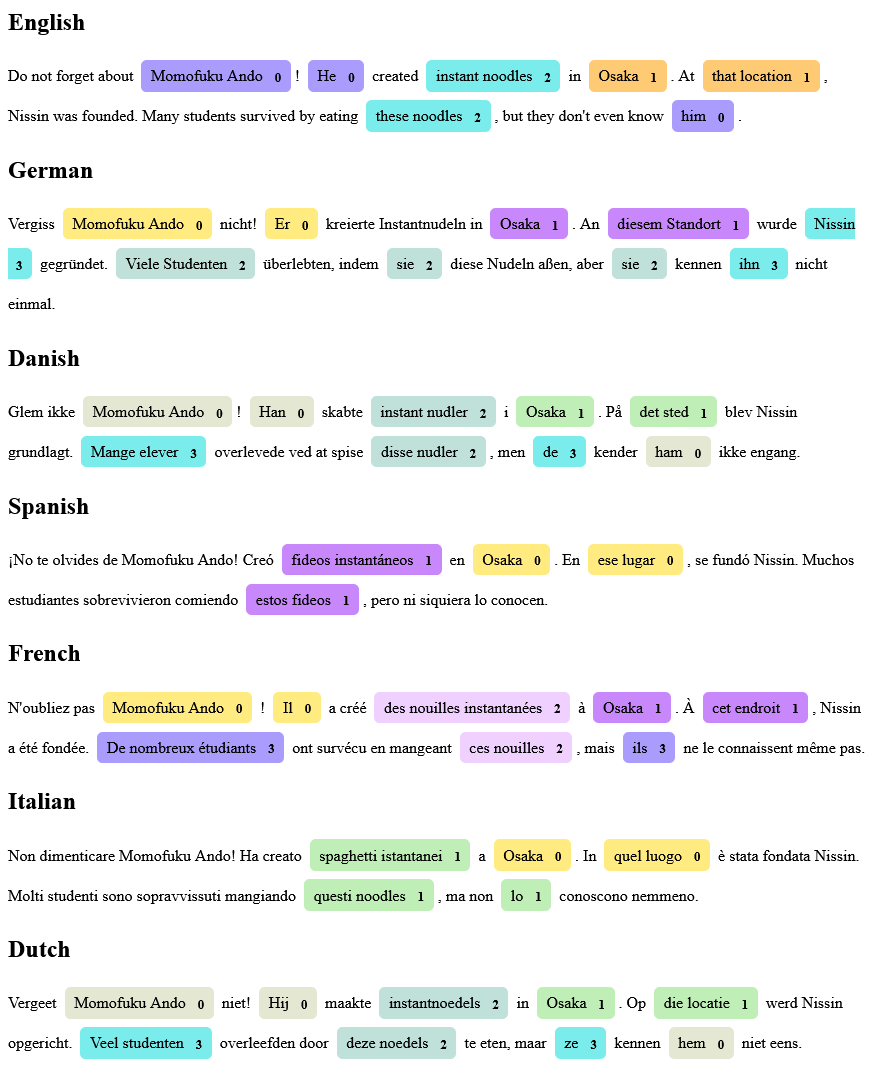

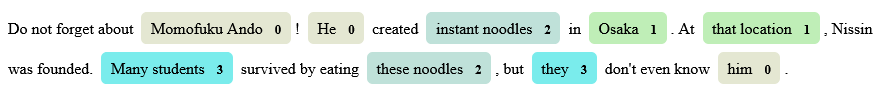
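To make the relation between `doc._.coref_clusters` and `doc._.resolved_text` more concrete, here is a minimal sketch in plain Python. It is not the library's implementation. It assumes each mention is a `[start, end]` pair of token indices with an inclusive end, and it simply substitutes the first mention of each cluster for the later ones, whereas the library selects cluster heads with its own logic. The `resolve` function and the toy sentence are hypothetical.

```python
from typing import Dict, List, Tuple

# Assumption: a mention is [start, end] in token indices, with an inclusive end.
Cluster = List[List[int]]


def resolve(tokens: List[str], clusters: List[Cluster]) -> List[str]:
    """Replace every later mention in a cluster with the cluster's first mention."""
    # Map the start index of a mention to (its end index, replacement tokens).
    replacements: Dict[int, Tuple[int, List[str]]] = {}
    for cluster in clusters:
        head_start, head_end = cluster[0]
        head = tokens[head_start : head_end + 1]
        for start, end in cluster[1:]:
            replacements[start] = (end, head)

    resolved: List[str] = []
    i = 0
    while i < len(tokens):
        if i in replacements:
            end, head = replacements[i]
            resolved.extend(head)
            i = end + 1  # skip the tokens of the replaced mention
        else:
            resolved.append(tokens[i])
            i += 1
    return resolved


tokens = "Momofuku Ando created noodles . He founded Nissin .".split()
clusters = [[[0, 1], [5, 5]]]  # "Momofuku Ando" <- "He"
print(" ".join(resolve(tokens, clusters)))
# Momofuku Ando created noodles . Momofuku Ando founded Nissin .
```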

Visualize spaCy pipeline

This only works with spaCy >= 3.3.

import spacy
from spacy import displacy
from spacy.tokens import Span

# Importing the package ensures the "xx_coref" factory is registered with spaCy.
import crosslingual_coreference

text = (
    "Do not forget about Momofuku Ando! He created instant noodles in Osaka. At"
    " that location, Nissin was founded. Many students survived by eating these"
    " noodles, but they don't even know him."
)

nlp = spacy.load("nl_core_news_sm")
nlp.add_pipe("xx_coref", config={"model_name": "minilm"})

doc = nlp(text)

spans = []
for idx, cluster in enumerate(doc._.coref_clusters):
    for span in cluster:
        # span[1] + 1 because spaCy Span ends are exclusive; the cluster index is the label.
        spans.append(Span(doc, span[0], span[1] + 1, str(idx).upper()))

doc.spans["custom"] = spans

displacy.render(doc, style="span", options={"spans_key": "custom"})

More Examples