packaged ultralytics/yolov5

pip install yolov5

Overview

This yolov5 package contains everything from ultralytics/yolov5 at this commit plus:

1. Easy installation via pip: pip install yolov5
2. Full CLI integration with the fire package
3. COCO dataset format support (for training)
4. Full 🤗 Hub integration
5. S3 support (model and dataset upload)
6. NeptuneAI logger support (metric, model and dataset logging)
7. Class-wise AP logging during experiments

Install

Install yolov5 using pip (for Python >=3.7)

pip install yolov5

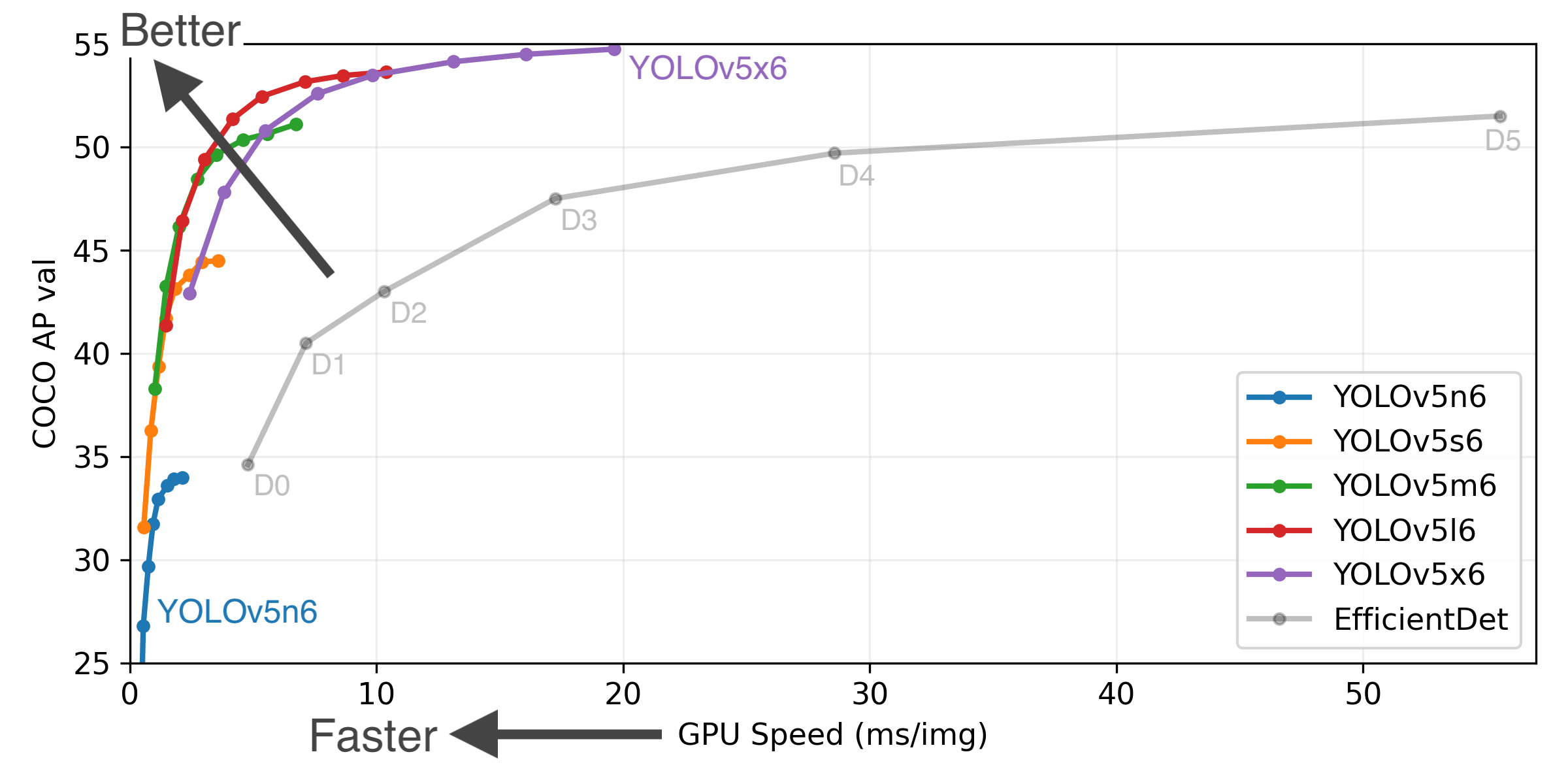

Model Zoo

Use from Python

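import yolov5
# load a pretrained model
model = yolov5.load('yolov5s.pt')
# or load a custom model instead (replaces the line above)
model = yolov5.load('train/best.pt')
# set model parameters
model.conf = 0.25  # NMS confidence threshold
model.iou = 0.45  # NMS IoU threshold
model.agnostic = False  # NMS class-agnostic
model.multi_label = False  # NMS multiple labels per box
model.max_det = 1000  # maximum number of detections per image
# set image
img = 'https://github.com/ultralytics/yolov5/raw/master/data/images/zidane.jpg'
# perform inference
results = model(img)
# inference with a larger input size
results = model(img, size=1280)
# inference with test-time augmentation
results = model(img, augment=True)
# parse results
predictions = results.pred[0]
boxes = predictions[:, :4]  # x1, y1, x2, y2
scores = predictions[:, 4]
categories = predictions[:, 5]
# show detection bounding boxes on the image
results.show()
# save results into the "results/" folder
results.save(save_dir='results/')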
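The NMS settings above are easier to reason about with a concrete picture of greedy non-maximum suppression. The following is a simplified pure-Python sketch, not yolov5's own tensor-based implementation: simple_nms and box_iou are hypothetical names, rows follow the x1, y1, x2, y2, score, class layout that results.pred[0] uses above, and multi_label is not modeled.

```python
from typing import List, Sequence

# Hypothetical row layout, matching results.pred[0]: x1, y1, x2, y2, score, class
Row = Sequence[float]


def box_iou(a: Row, b: Row) -> float:
    """Intersection over union of two x1, y1, x2, y2 boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def simple_nms(rows: List[Row], conf: float = 0.25, iou: float = 0.45,
               agnostic: bool = False, max_det: int = 1000) -> List[Row]:
    """Greedy NMS sketch mirroring model.conf, model.iou, model.agnostic and model.max_det."""
    # Drop low-confidence rows, then visit the rest from highest to lowest score.
    candidates = sorted((r for r in rows if r[4] >= conf), key=lambda r: r[4], reverse=True)
    kept: List[Row] = []
    for r in candidates:
        if len(kept) >= max_det:  # cap the number of detections
            break
        # Suppress r if it overlaps an already kept box too much; unless agnostic,
        # only boxes of the same class compete with each other.
        if any((agnostic or k[5] == r[5]) and box_iou(k, r) > iou for k in kept):
            continue
        kept.append(r)
    return kept


rows = [
    (0, 0, 10, 10, 0.9, 0),
    (1, 1, 10, 10, 0.8, 0),    # IoU 0.81 with the first box, same class: suppressed
    (1, 1, 10, 10, 0.7, 1),    # same region but a different class: kept
    (20, 20, 30, 30, 0.1, 0),  # below conf: dropped
]
print(simple_nms(rows))                 # [(0, 0, 10, 10, 0.9, 0), (1, 1, 10, 10, 0.7, 1)]
print(simple_nms(rows, agnostic=True))  # [(0, 0, 10, 10, 0.9, 0)]
```

Raising model.iou keeps more overlapping boxes, while setting model.agnostic = True lets boxes of different classes suppress each other.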

Train/Detect/Test/Export

- You can directly use these functions by importing them:

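from yolov5 import train, val, detect, export
train.run(imgsz=640, data='coco128.yaml')  # train on coco128
val.run(imgsz=640, data='coco128.yaml', weights='yolov5s.pt')  # evaluate weights on coco128
detect.run(imgsz=640)  # run inference with the default source and weights
export.run(imgsz=640, weights='yolov5s.pt')  # export weights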

- You can pass any argument as input:

from yolov5 import detect
img_url = 'https://github.com/ultralytics/yolov5/raw/master/data/images/zidane.jpg'
detect.run(source=img_url, weights="yolov5s6.pt", conf_thres=0.25, imgsz=640)
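Because the CLI is built on fire, command-line flags and run() keyword arguments line up closely. As a rough illustration, assuming fire's convention that hyphens in flag names map to underscores, the hypothetical helper below turns a flag list into keyword arguments for detect.run(). Fire's real value parsing is richer, so treat this as an approximation.

```python
import ast
from typing import Any, Dict, List


def cli_to_kwargs(argv: List[str]) -> Dict[str, Any]:
    """Map ['--conf-thres', '0.25', ...] to {'conf_thres': 0.25, ...} (hypothetical helper)."""
    kwargs: Dict[str, Any] = {}
    i = 0
    while i < len(argv):
        flag = argv[i]
        if not flag.startswith("--"):
            raise ValueError(f"expected a --flag, got {flag!r}")
        name = flag[2:].replace("-", "_")
        # A flag with no value after it is treated as a boolean switch.
        if i + 1 == len(argv) or argv[i + 1].startswith("--"):
            kwargs[name] = True
            i += 1
            continue
        raw = argv[i + 1]
        try:
            kwargs[name] = ast.literal_eval(raw)  # numbers, True/False, quoted strings
        except (ValueError, SyntaxError):
            kwargs[name] = raw  # file names, URLs and other bare words stay strings
        i += 2
    return kwargs


kwargs = cli_to_kwargs(["--weights", "yolov5s6.pt", "--conf-thres", "0.25", "--imgsz", "640"])
print(kwargs)  # {'weights': 'yolov5s6.pt', 'conf_thres': 0.25, 'imgsz': 640}
# from yolov5 import detect; detect.run(**kwargs)
```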

Use from CLI

You can call yolov5 train, yolov5 detect, yolov5 val and yolov5 export commands after installing the package via pip:

Training

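- Finetune one of the pretrained YOLOv5 models on your custom data.yaml (larger models use smaller batch sizes):

$ yolov5 train --data data.yaml --weights yolov5s.pt --batch-size 16 --img 640
$ yolov5 train --data data.yaml --weights yolov5m.pt --batch-size 8 --img 640
$ yolov5 train --data data.yaml --weights yolov5l.pt --batch-size 4 --img 640
$ yolov5 train --data data.yaml --weights yolov5x.pt --batch-size 2 --img 640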
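To script the fine-tuning variants listed above (for example, to sweep model sizes), a sketch that builds each command as an argument list could look like this. train_command and BATCH_SIZES are hypothetical names, and the batch sizes are simply the model/batch-size pairs shown above.

```python
from typing import Dict, List

# Model/batch-size pairs from the commands above: bigger models, smaller batches.
BATCH_SIZES: Dict[str, int] = {
    "yolov5s.pt": 16,
    "yolov5m.pt": 8,
    "yolov5l.pt": 4,
    "yolov5x.pt": 2,
}


def train_command(weights: str, data: str = "data.yaml", img: int = 640) -> List[str]:
    """Build the argv for one `yolov5 train` run (hypothetical helper)."""
    return [
        "yolov5", "train",
        "--data", data,
        "--weights", weights,
        "--batch-size", str(BATCH_SIZES[weights]),
        "--img", str(img),
    ]


for weights in BATCH_SIZES:
    # Pass the list to subprocess.run(..., check=True) to actually launch training.
    print(" ".join(train_command(weights)))
```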

- Start training on a COCO-formatted dataset by pointing data.yaml at the COCO JSON annotation files and image directories:

# data.yaml
train_json_path: "train.json"
train_image_dir: "train_image_dir/"
val_json_path: "val.json"
val_image_dir: "val_image_dir/"

$ yolov5 train --data data.yaml --weights yolov5s.pt
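COCO annotations store each box as [x_min, y_min, width, height] in pixels, whereas YOLO-style label files use a class index followed by a normalized center x, center y, width and height. The package presumably performs a conversion of this kind when data.yaml points at COCO JSON files; the sketch below only illustrates the arithmetic, and load_coco, coco_to_yolo_box and yolo_labels are hypothetical names.

```python
import json
from typing import Dict, List, Tuple


def load_coco(path: str) -> Dict:
    """Read a COCO annotation file such as train.json."""
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def coco_to_yolo_box(bbox: List[float], img_w: int, img_h: int) -> Tuple[float, float, float, float]:
    """COCO [x_min, y_min, w, h] in pixels -> normalized [x_center, y_center, w, h]."""
    x, y, w, h = bbox
    return (x + w / 2) / img_w, (y + h / 2) / img_h, w / img_w, h / img_h


def yolo_labels(coco: Dict) -> Dict[int, List[str]]:
    """Build YOLO label lines per image id from a loaded COCO dict."""
    missing = {"images", "annotations", "categories"} - set(coco)
    if missing:
        raise ValueError(f"missing COCO keys: {sorted(missing)}")
    images = {img["id"]: img for img in coco["images"]}
    # YOLO class indices are 0-based and contiguous; COCO category ids need not be.
    class_index = {cat["id"]: i for i, cat in enumerate(coco["categories"])}
    labels: Dict[int, List[str]] = {img_id: [] for img_id in images}
    for ann in coco["annotations"]:
        img = images[ann["image_id"]]
        xc, yc, w, h = coco_to_yolo_box(ann["bbox"], img["width"], img["height"])
        cls = class_index[ann["category_id"]]
        labels[ann["image_id"]].append(f"{cls} {xc:.6f} {yc:.6f} {w:.6f} {h:.6f}")
    return labels


sample = {
    "images": [{"id": 1, "width": 100, "height": 200}],
    "annotations": [{"image_id": 1, "category_id": 3, "bbox": [10, 20, 30, 40]}],
    "categories": [{"id": 3, "name": "cat"}],
}
print(yolo_labels(sample))  # {1: ['0 0.250000 0.200000 0.300000 0.200000']}
```

With a real dataset, yolo_labels(load_coco("train.json")) would produce one list of label lines per image.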

- Visualize your experiments via Neptune.AI (neptune-client>=0.10.10 required):

$ yolov5 train --data data.yaml --weights yolov5s.pt --neptune_project NAMESPACE/PROJECT_NAME --neptune_token YOUR_NEPTUNE_TOKEN

- Automatically upload weights to the 🤗 Hub:

$ yolov5 train --data data.yaml --weights yolov5s.pt --hf_model_id username/modelname --hf_token YOUR-HF-WRITE-TOKEN
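Pasting tokens directly into commands leaves them in shell history. One option, sketched below under the assumption that you keep them in environment variables, is to assemble the Neptune and Hub commands above from those variables and launch them with subprocess. tracked_train_command is hypothetical, and NEPTUNE_PROJECT, NEPTUNE_API_TOKEN, HF_MODEL_ID and HF_TOKEN are names chosen for illustration; this sketch reads them itself and passes them on as the flags shown above.

```python
import os
from typing import List, Mapping


def tracked_train_command(env: Mapping[str, str] = os.environ,
                          data: str = "data.yaml", weights: str = "yolov5s.pt") -> List[str]:
    """Build a `yolov5 train` argv, adding Neptune/Hub flags only when both values are set."""
    argv = ["yolov5", "train", "--data", data, "--weights", weights]
    if env.get("NEPTUNE_PROJECT") and env.get("NEPTUNE_API_TOKEN"):
        argv += ["--neptune_project", env["NEPTUNE_PROJECT"],
                 "--neptune_token", env["NEPTUNE_API_TOKEN"]]
    if env.get("HF_MODEL_ID") and env.get("HF_TOKEN"):
        argv += ["--hf_model_id", env["HF_MODEL_ID"], "--hf_token", env["HF_TOKEN"]]
    return argv


# subprocess.run(tracked_train_command(), check=True) would start the run.
```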

- Automatically upload weights and datasets to AWS S3 (with Neptune.AI artifact tracking integration):

export AWS_ACCESS_KEY_ID=YOUR_KEY

export AWS_SECRET_ACCESS_KEY=YOUR_KEY

$ yolov5 train --data data.yaml --weights yolov5s.pt --s3_upload_dir YOUR_S3_FOLDER_DIRECTORY --upload_dataset

- Add yolo_s3_data_dir to data.yaml to match the Neptune dataset with a dataset already present in S3:

# data.yaml
train_json_path: "train.json"
train_image_dir: "train_image_dir/"
val_json_path: "val.json"
val_image_dir: "val_image_dir/"
yolo_s3_data_dir: s3://bucket_name/data_dir/
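Before starting a long run, it can help to sanity-check the yolo_s3_data_dir value. The hypothetical helper below only validates the string and splits it into bucket and key prefix with the standard library; it does not contact S3.

```python
from typing import Tuple
from urllib.parse import urlparse


def split_s3_uri(uri: str) -> Tuple[str, str]:
    """Split 's3://bucket/prefix/' into ('bucket', 'prefix/'); reject non-S3 URIs."""
    parsed = urlparse(uri)
    if parsed.scheme != "s3" or not parsed.netloc:
        raise ValueError(f"expected s3://bucket/prefix, got {uri!r}")
    return parsed.netloc, parsed.path.lstrip("/")


print(split_s3_uri("s3://bucket_name/data_dir/"))  # ('bucket_name', 'data_dir/')
```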

Inference

The yolov5 detect command runs inference on a variety of sources, automatically downloading models from the latest YOLOv5 release and saving results to runs/detect.

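$ yolov5 detect --source 0  # webcam
$ yolov5 detect --source file.jpg  # image
$ yolov5 detect --source file.mp4  # video
$ yolov5 detect --source path/  # directory
$ yolov5 detect --source 'path/*.jpg'  # glob (quoted so the shell does not expand it)
$ yolov5 detect --source rtsp://170.93.143.139/rtplive/470011e600ef003a004ee33696235daa  # RTSP stream
$ yolov5 detect --source rtmp://192.168.1.105/live/test  # RTMP stream
$ yolov5 detect --source http://112.50.243.8/PLTV/88888888/224/3221225900/1.m3u8  # HTTP stream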
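The source types listed above can be told apart mostly from the shape of the string. The sketch below is a simplified, hypothetical classifier in that spirit; the real detect code also inspects the filesystem and supports more formats, and the suffix sets here are assumptions chosen for illustration.

```python
from pathlib import Path
from typing import Optional

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp", ".webp"}  # assumed subset
VIDEO_SUFFIXES = {".mp4", ".mov", ".avi", ".mkv"}            # assumed subset
URL_SCHEMES = ("rtsp://", "rtmp://", "http://", "https://")


def _file_kind(suffix: str) -> Optional[str]:
    """Return 'image' or 'video' for a known suffix, else None."""
    if suffix in IMAGE_SUFFIXES:
        return "image"
    if suffix in VIDEO_SUFFIXES:
        return "video"
    return None


def source_kind(source: str) -> str:
    """Roughly classify a --source value (simplified, hypothetical)."""
    if source.isnumeric():
        return "webcam"  # e.g. --source 0
    suffix = Path(source).suffix.lower()
    if source.lower().startswith(URL_SCHEMES):
        # A URL to a single image/video file is a file; other URLs are streams.
        return _file_kind(suffix) or "stream"
    if "*" in source:
        return "glob"
    if source.endswith("/") or Path(source).is_dir():
        return "directory"
    return _file_kind(suffix) or "unknown"


for s in ["0", "file.jpg", "file.mp4", "path/", "path/*.jpg", "rtmp://192.168.1.105/live/test"]:
    print(s, "->", source_kind(s))
```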

Export

You can export your fine-tuned YOLOv5 weights to formats such as torchscript, onnx, coreml, pb, tflite and tfjs:

$ yolov5 export --weights yolov5s.pt --include torchscript,onnx,coreml,pb,tfjs
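The --include value above is a comma-separated list of format names. A hypothetical helper for checking such a list before calling the exporter might look like this; the set of accepted names is copied from this section's prose and may not match every format the exporter supports.

```python
from typing import List

# Format names from the sentence above (assumed list, not read from yolov5).
KNOWN_FORMATS = {"torchscript", "onnx", "coreml", "pb", "tflite", "tfjs"}


def parse_include(value: str) -> List[str]:
    """Split a comma-separated --include value, normalize case, drop duplicates, validate."""
    formats: List[str] = []
    for name in value.split(","):
        name = name.strip().lower()
        if not name:
            continue  # tolerate trailing or doubled commas
        if name not in KNOWN_FORMATS:
            raise ValueError(f"unsupported export format: {name!r}")
        if name not in formats:
            formats.append(name)
    return formats


print(parse_include("torchscript,onnx,coreml,pb,tfjs"))
```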

Classify

Train/Val/Predict with the YOLOv5 image classifier:

$ yolov5 classify train --img 640 --data mnist2560 --weights yolov5s-cls.pt --epochs 1

$ yolov5 classify predict --img 640 --weights yolov5s-cls.pt --source images/
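Image classifiers like the one above usually report predictions as the few most likely classes with softmax probabilities. The sketch below shows that post-processing step in plain Python, assuming the model outputs one raw score (logit) per class; top_k is a hypothetical name and this is not the package's predict code.

```python
import math
from typing import List, Sequence, Tuple


def top_k(logits: Sequence[float], labels: Sequence[str], k: int = 5) -> List[Tuple[str, float]]:
    """Softmax the raw class scores and return the k most probable (label, prob) pairs."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    ranked = sorted(zip(labels, probs), key=lambda lp: lp[1], reverse=True)
    return ranked[:k]


for label, prob in top_k([2.0, 1.0, 0.1], ["cat", "dog", "bird"], k=2):
    print(f"{label}: {prob:.3f}")
```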

Segment

Train/Val/Predict with the YOLOv5 instance segmentation model:

$ yolov5 segment train --img 640 --weights yolov5s-seg.pt --epochs 1

$ yolov5 segment predict --img 640 --weights yolov5s-seg.pt --source images/
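Custom segmentation training needs polygon labels. Assuming the Ultralytics YOLO segmentation label format, where each line holds a class index followed by normalized x y vertex pairs, the hypothetical helpers below parse one label line and compute the polygon's area with the shoelace formula. Because the coordinates are normalized, the area is the fraction of the image the object covers.

```python
from typing import List, Tuple

Point = Tuple[float, float]


def parse_seg_label(line: str) -> Tuple[int, List[Point]]:
    """Parse 'class x1 y1 x2 y2 ...' (normalized polygon) into a class id and vertices."""
    parts = line.split()
    cls, coords = int(parts[0]), [float(v) for v in parts[1:]]
    if len(coords) < 6 or len(coords) % 2:
        raise ValueError("expected at least three x, y pairs")
    return cls, list(zip(coords[0::2], coords[1::2]))


def polygon_area(points: List[Point]) -> float:
    """Shoelace formula: half the absolute sum of cross products of consecutive vertices."""
    n = len(points)
    s = sum(points[i][0] * points[(i + 1) % n][1] - points[(i + 1) % n][0] * points[i][1]
            for i in range(n))
    return abs(s) / 2


cls, pts = parse_seg_label("0 0.1 0.1 0.5 0.1 0.5 0.5 0.1 0.5")
print(cls, round(polygon_area(pts), 6))  # 0 0.16
```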