redisvl

The AI-native Redis Python client

Redis Vector Library (RedisVL) is the production-ready Python client for AI applications built on Redis. Lightning-fast vector search meets enterprise-grade reliability.

| 🎯 Core Capabilities | 🚀 AI Extensions | 🛠️ Dev Utilities |
|---|---|---|
| Index Management: Schema design, data loading, CRUD ops | Semantic Caching: Reduce LLM costs & boost throughput | CLI: Index management from terminal |
| Vector Search: Similarity search with metadata filters | LLM Memory: Agentic AI context management | Async Support: Async indexing and search for improved performance |
| Hybrid Queries: Vector + text + metadata combined | Semantic Routing: Intelligent query classification | Vectorizers: 8+ embedding provider integrations |
| Multi-Query Types: Vector, Range, Filter, Count queries | Embedding Caching: Cache embeddings for efficiency | Rerankers: Improve search result relevancy |

Install redisvl into your Python (>=3.9) environment using pip:

pip install redisvl

For more detailed instructions, visit the installation guide.

Choose from multiple Redis deployment options:

Redis Cloud: Managed cloud database (free tier available)

Redis Stack: Docker image for development

docker run -d --name redis-stack -p 6379:6379 -p 8001:8001 redis/redis-stack:latest

Redis Enterprise: Commercial, self-hosted database

Redis Sentinel: High availability with automatic failover

# Connect via Sentinel

redis_url="redis+sentinel://sentinel1:26379,sentinel2:26379/mymaster"

Azure Managed Redis: Fully managed Redis Enterprise on Azure

Enhance your experience and observability with the free Redis Insight GUI.
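The Sentinel URL shown above packs two things into one string: a comma-separated list of Sentinel addresses and the name of the monitored service. As a rough illustration of that layout (not RedisVL's own parser), the sketch below splits such a URL with plain string operations. The helper name `parse_sentinel_url` is hypothetical, credentials in the URL are not handled, and the fallback port 26379 is the conventional Sentinel port, assumed when none is given.

```python
from typing import List, Tuple


def parse_sentinel_url(url: str) -> Tuple[List[Tuple[str, int]], str]:
    """Split a redis+sentinel:// URL into (sentinel addresses, service name).

    Hypothetical helper for illustration only; not RedisVL's own parser.
    """
    scheme = "redis+sentinel://"
    if not url.startswith(scheme):
        raise ValueError(f"expected a {scheme} URL, got {url!r}")
    # Everything before the first "/" is the comma-separated sentinel list;
    # what follows is the name of the monitored service.
    hosts_part, _, service = url[len(scheme):].partition("/")
    sentinels = []
    for entry in hosts_part.split(","):
        host, _, port = entry.partition(":")
        # Assumption: fall back to the conventional Sentinel port 26379.
        sentinels.append((host, int(port) if port else 26379))
    return sentinels, service


sentinels, service = parse_sentinel_url(
    "redis+sentinel://sentinel1:26379,sentinel2:26379/mymaster"
)
print(sentinels, service)
```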

Design a schema for your use case that models your dataset with Redis's built-in indexable field types (e.g. text, tag, numeric, geo, and vector). Load a schema from a YAML file:

index:
  name: user-idx
  prefix: user
  storage_type: json

fields:
  - name: user
    type: tag
  - name: credit_score
    type: tag
  - name: job_title
    type: text
    attrs:
      sortable: true
      no_index: false  # Index for search (default)
      unf: false       # Normalize case for sorting (default)
  - name: embedding
    type: vector
    attrs:
      algorithm: flat
      dims: 4
      distance_metric: cosine
      datatype: float32

from redisvl.schema import IndexSchema

schema = IndexSchema.from_yaml("schemas/schema.yaml")

Or load directly from a Python dictionary:

schema = IndexSchema.from_dict({
    "index": {
        "name": "user-idx",
        "prefix": "user",
        "storage_type": "json"
    },
    "fields": [
        {"name": "user", "type": "tag"},
        {"name": "credit_score", "type": "tag"},
        {
            "name": "job_title",
            "type": "text",
            "attrs": {
                "sortable": True,
                "no_index": False,  # Index for search
                "unf": False        # Normalize case for sorting
            }
        },
        {
            "name": "embedding",
            "type": "vector",
            "attrs": {
                "algorithm": "flat",
                "datatype": "float32",
                "dims": 4,
                "distance_metric": "cosine"
            }
        }
    ]
})
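Because the schema fixes the vector dimensionality (`dims: 4`) and the field types, it can be useful to sanity-check records before loading them. The following is a minimal sketch of such a pre-flight check in plain Python. `check_record` is a hypothetical helper and not part of RedisVL, and the rule that tag and text values must be strings is an assumption made for this example.

```python
from typing import Any, Dict, List

# Same field definitions as the schema above, reduced to what the check needs.
schema_dict = {
    "index": {"name": "user-idx", "prefix": "user", "storage_type": "json"},
    "fields": [
        {"name": "user", "type": "tag"},
        {"name": "credit_score", "type": "tag"},
        {"name": "job_title", "type": "text"},
        {"name": "embedding", "type": "vector", "attrs": {"dims": 4}},
    ],
}


def check_record(record: Dict[str, Any], schema: Dict[str, Any]) -> List[str]:
    """Return a list of human-readable problems (empty if none were found).

    Hypothetical pre-flight check for illustration; not a RedisVL API.
    """
    problems = []
    for field in schema["fields"]:
        value = record.get(field["name"])
        if value is None:
            continue  # absent fields are skipped by this check
        if field["type"] == "vector":
            dims = field["attrs"]["dims"]
            if len(value) != dims:
                problems.append(
                    f"{field['name']}: expected {dims} dims, got {len(value)}"
                )
        elif not isinstance(value, str):
            # Assumption for this sketch: tag and text values are strings.
            problems.append(f"{field['name']}: expected a string")
    return problems


good = {"user": "john", "credit_score": "high", "embedding": [0.23, 0.49, -0.18, 0.95]}
bad = {"user": "mary", "embedding": [0.1, 0.2]}
print(check_record(good, schema_dict))
print(check_record(bad, schema_dict))
```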

Create a SearchIndex instance from the schema to perform admin and search operations on your index in Redis:

from redis import Redis

from redisvl.index import SearchIndex

# Define the index

index = SearchIndex(schema, redis_url="redis://localhost:6379")

# Create the index in Redis

index.create()

An async-compatible index class is also available: AsyncSearchIndex.

Load and fetch data to/from your Redis instance:

data = {"user": "john", "credit_score": "high", "embedding": [0.23, 0.49, -0.18, 0.95]}

# load list of dictionaries, specify the "id" field

index.load([data], id_field="user")

# fetch by "id"

john = index.fetch("john")
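Loading with `id_field="user"` and then fetching by `"john"` works because the record id is combined with the index prefix to form the Redis key. The sketch below illustrates that naming convention only. `make_key` is a hypothetical helper, and the `":"` separator is an assumption about the separator in use.

```python
def make_key(prefix: str, record_id: str, separator: str = ":") -> str:
    """Compose a Redis key from an index prefix and a record id.

    Hypothetical helper showing the prefix + separator + id convention;
    the ":" default is an assumption about the separator in use.
    """
    return f"{prefix}{separator}{record_id}"


record = {"user": "john", "credit_score": "high"}
# With id_field="user", the value of the "user" field becomes the record id.
key = make_key("user", record["user"])
print(key)
```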

Define queries and perform advanced searches over your indices, including the combination of vectors, metadata filters, and more.

VectorQuery - Flexible vector queries with customizable filters enabling semantic search:

from redisvl.query import VectorQuery

query = VectorQuery(
    vector=[0.16, -0.34, 0.98, 0.23],
    vector_field_name="embedding",
    num_results=3,
    # Optional: tune search performance with runtime parameters
    # (ef_runtime applies to HNSW indexes; the example schema above uses flat)
    ef_runtime=100  # HNSW: higher for better recall
)

# run the vector search query against the embedding field

results = index.query(query)
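Conceptually, a vector query against a `flat` index with the `cosine` metric is an exhaustive nearest-neighbor scan. The sketch below reproduces that idea in plain Python so the scoring is easy to follow. It is an illustration of the technique, not how Redis executes the query, and the stored vectors for "mary" and "joe" are made-up data.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine distance = 1 - cosine similarity (0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def knn(
    query: Sequence[float], vectors: Dict[str, Sequence[float]], k: int
) -> List[Tuple[str, float]]:
    """Score every stored vector against the query and keep the k closest."""
    scored = [(key, cosine_distance(query, vec)) for key, vec in vectors.items()]
    return sorted(scored, key=lambda pair: pair[1])[:k]


# Hypothetical stored embeddings; only "john" comes from the example data above.
stored = {
    "john": [0.23, 0.49, -0.18, 0.95],
    "mary": [0.16, -0.34, 0.98, 0.23],
    "joe": [-0.16, 0.34, -0.98, -0.23],
}
results = knn([0.16, -0.34, 0.98, 0.23], stored, k=3)
for key, distance in results:
    print(key, round(distance, 4))
```

Smaller distances mean closer matches, so "mary" (identical to the query vector) ranks first and "joe" (the opposite direction) ranks last.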

Incorporate complex metadata filters on your queries:

from redisvl.query.filter import Tag

# define a tag match filter

tag_filter = Tag("user") == "john"

# update query definition

query.set_filter(tag_filter)

# execute query

results = index.query(query)
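Under the hood, a filter such as `Tag("user") == "john"` corresponds to a tag expression in Redis query syntax, which is placed in front of the KNN clause. The sketch below builds such a query string by hand to show its shape. The function names are hypothetical, the `$vector` parameter name and the `vector_distance` alias are assumptions, and no escaping of special characters is attempted.

```python
def tag_filter(field: str, value: str) -> str:
    """Render an exact-match tag filter in Redis query syntax (no escaping)."""
    return f"@{field}:{{{value}}}"


def knn_query(filter_expr: str, vector_field: str, k: int) -> str:
    """Prefix a KNN clause with a filter expression ("*" matches everything).

    The "$vector" parameter name and "vector_distance" alias are assumptions.
    """
    return f"({filter_expr})=>[KNN {k} @{vector_field} $vector AS vector_distance]"


filter_expr = tag_filter("user", "john")
query_string = knn_query(filter_expr, "embedding", 3)
print(query_string)
```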

RangeQuery - Vector search within a defined range paired with customizable filters

FilterQuery - Standard search using filters and the full-text search

CountQuery - Count the number of indexed records given attributes

TextQuery - Full-text search with support for field weighting and BM25 scoring

Read more about building advanced Redis queries.
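The difference between a top-k vector query and a range query is the stopping rule: a range query returns every vector within a distance threshold instead of a fixed number of results. Here is a small in-memory sketch of that rule, assuming cosine distance. The data and the `range_search` helper are hypothetical and do not reflect how Redis implements the query.

```python
import math
from typing import Dict, List, Sequence


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine distance = 1 - cosine similarity."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def range_search(
    query: Sequence[float],
    vectors: Dict[str, Sequence[float]],
    distance_threshold: float,
) -> List[str]:
    """Return every key within the threshold, nearest first."""
    scored = [(cosine_distance(query, vec), key) for key, vec in vectors.items()]
    return [key for distance, key in sorted(scored) if distance <= distance_threshold]


# Hypothetical 2-D vectors chosen so the distances are easy to reason about.
stored = {"a": [1.0, 0.0], "b": [0.9, 0.1], "c": [0.0, 1.0]}
print(range_search([1.0, 0.0], stored, distance_threshold=0.2))
```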

Integrate with popular embedding providers to greatly simplify the process of vectorizing unstructured data for your index and queries:

from redisvl.utils.vectorize import CohereTextVectorizer

# set COHERE_API_KEY in your environment

co = CohereTextVectorizer()

embedding = co.embed(
    text="What is the capital city of France?",
    input_type="search_query"
)

embeddings = co.embed_many(
    texts=["my document chunk content", "my other document chunk content"],
    input_type="search_document"
)

Learn more about using vectorizers in your embedding workflows.

Integrate with popular reranking providers to improve the relevancy of the initial search results from Redis.

We're excited to announce support for RedisVL Extensions. These modules implement interfaces exposing best practices and design patterns for working with LLM memory and agents. We've taken the best of what we've learned from our users (that's you) as well as bleeding-edge customers, and packaged it up.

Have an idea for another extension? Open a PR or reach out to us at applied.ai@redis.com. We're always open to feedback.

Increase application throughput and reduce the cost of using LLMs in production by leveraging previously generated knowledge with the SemanticCache.

from redisvl.extensions.cache.llm import SemanticCache

# init cache with TTL and semantic distance threshold

llmcache = SemanticCache(
    name="llmcache",
    ttl=360,
    redis_url="redis://localhost:6379",
    distance_threshold=0.1
)

# store user queries and LLM responses in the semantic cache
llmcache.store(
    prompt="What is the capital city of France?",
    response="Paris"
)

# quickly check the cache with a slightly different prompt (before invoking an LLM)

response = llmcache.check(prompt="What is France's capital city?")

print(response[0]["response"])

>>> Paris

Learn more about semantic caching for LLMs.
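The core idea behind a semantic cache is a threshold test: embed the incoming prompt, find the closest stored prompt, and reuse its response only if the distance is small enough. The sketch below shows that logic in memory, without Redis or TTL handling. `ToySemanticCache` and `toy_embed` are hypothetical names, and the bag-of-words "embedding" is a crude stand-in for a real model, which is why this sketch uses a looser threshold (0.3) than the example above.

```python
import math
import re
from collections import Counter
from typing import List, Optional, Tuple


def toy_embed(text: str) -> Counter:
    """Bag-of-words counts; a crude stand-in for a real embedding model."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine_distance(a: Counter, b: Counter) -> float:
    """Cosine distance between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return 1.0 - dot / norm if norm else 1.0


class ToySemanticCache:
    """In-memory illustration of threshold-based lookup (no TTL, no Redis)."""

    def __init__(self, distance_threshold: float) -> None:
        self.distance_threshold = distance_threshold
        self.entries: List[Tuple[Counter, str]] = []

    def store(self, prompt: str, response: str) -> None:
        self.entries.append((toy_embed(prompt), response))

    def check(self, prompt: str) -> Optional[str]:
        query = toy_embed(prompt)
        best = min(
            self.entries,
            key=lambda entry: cosine_distance(query, entry[0]),
            default=None,
        )
        if best is not None and cosine_distance(query, best[0]) <= self.distance_threshold:
            return best[1]
        return None  # cache miss: the caller would invoke the LLM here


cache = ToySemanticCache(distance_threshold=0.3)
cache.store("What is the capital city of France?", "Paris")
hit = cache.check("What is France's capital city?")
miss = cache.check("How do I bake bread?")
print(hit, miss)
```

A lower threshold makes the cache stricter (fewer, more precise hits), while a higher one trades precision for hit rate.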

Reduce computational costs and improve performance by caching embedding vectors with their associated text and metadata using the EmbeddingsCache.

from redisvl.extensions.cache.embeddings import EmbeddingsCache

from redisvl.utils.vectorize import HFTextVectorizer

# Initialize embedding cache

embed_cache = EmbeddingsCache(
    name="embed_cache",
    redis_url="redis://localhost:6379",
    ttl=3600  # 1 hour TTL
)

# Initialize vectorizer with cache
vectorizer = HFTextVectorizer(
    model="sentence-transformers/all-MiniLM-L6-v2",
    cache=embed_cache
)

# First call computes and caches the embedding

embedding = vectorizer.embed("What is machine learning?")

# Subsequent calls retrieve from cache (much faster!)

cached_embedding = vectorizer.embed("What is machine learning?")

>>> Cache hit! Retrieved from Redis in <1ms

Learn more about embedding caching for improved performance.
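The pattern behind embedding caching is plain memoization keyed by the input text and the model name. The sketch below demonstrates it with a dictionary in place of Redis. `ToyEmbeddingsCache` and `fake_model` are hypothetical, and hashing the model name together with the text is an assumption about a reasonable key design, not a description of RedisVL's internals.

```python
import hashlib
from typing import Callable, Dict, List


class ToyEmbeddingsCache:
    """Dict-backed stand-in for a Redis-backed embedding cache."""

    def __init__(self, model_name: str, embed_fn: Callable[[str], List[float]]) -> None:
        self.model_name = model_name
        self.embed_fn = embed_fn
        self.store: Dict[str, List[float]] = {}
        self.misses = 0  # counts how often the model was actually called

    def _key(self, text: str) -> str:
        # Include the model name so different models never share entries.
        raw = f"{self.model_name}:{text}".encode("utf-8")
        return hashlib.sha256(raw).hexdigest()

    def embed(self, text: str) -> List[float]:
        key = self._key(text)
        if key not in self.store:
            self.misses += 1
            self.store[key] = self.embed_fn(text)
        return self.store[key]


def fake_model(text: str) -> List[float]:
    """Hypothetical embedding function standing in for a real model call."""
    return [float(len(text)), float(text.count(" "))]


cache = ToyEmbeddingsCache("fake-model", fake_model)
first = cache.embed("What is machine learning?")
second = cache.embed("What is machine learning?")
print(first == second, cache.misses)
```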

Improve personalization and accuracy of LLM responses by providing user conversation context. Manage access to memory data by recency with MessageHistory, or by relevancy (powered by vector search) with SemanticMessageHistory.

from redisvl.extensions.message_history import SemanticMessageHistory

history = SemanticMessageHistory(
    name="my-session",
    redis_url="redis://localhost:6379",
    distance_threshold=0.7
)

# Supports roles: system, user, llm, tool
# Optional metadata field for additional context
history.add_messages([
    {"role": "user", "content": "hello, how are you?"},
    {"role": "llm", "content": "I'm doing fine, thanks."},
    {"role": "user", "content": "what is the weather going to be today?"},
    {"role": "llm", "content": "I don't know", "metadata": {"model": "gpt-4"}}
])

Get recent chat history:

history.get_recent(top_k=1)

>>> [{"role": "llm", "content": "I don't know", "metadata": {"model": "gpt-4"}}]

Get relevant chat history (powered by vector search):

history.get_relevant("weather", top_k=1)

>>> [{"role": "user", "content": "what is the weather going to be today?"}]

Filter messages by role:

# Get only user messages

history.get_recent(role="user")

# Or multiple roles

history.get_recent(role=["user", "system"])

Learn more about LLM memory.
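Recency-based retrieval with an optional role filter boils down to filtering an ordered list and taking its tail. The sketch below mirrors that behavior on a plain Python list. The standalone `get_recent` function is a hypothetical illustration, and its `top_k=5` default is an arbitrary choice for this example.

```python
from typing import Dict, List, Optional, Sequence, Union

Message = Dict[str, object]


def get_recent(
    messages: Sequence[Message],
    top_k: int = 5,
    role: Optional[Union[str, Sequence[str]]] = None,
) -> List[Message]:
    """Return the last top_k messages, oldest first, optionally filtered by role."""
    if role is not None:
        allowed = {role} if isinstance(role, str) else set(role)
        messages = [m for m in messages if m["role"] in allowed]
    return list(messages[-top_k:]) if top_k > 0 else []


chat: List[Message] = [
    {"role": "user", "content": "hello, how are you?"},
    {"role": "llm", "content": "I'm doing fine, thanks."},
    {"role": "user", "content": "what is the weather going to be today?"},
    {"role": "llm", "content": "I don't know", "metadata": {"model": "gpt-4"}},
]
print(get_recent(chat, top_k=1))
print(get_recent(chat, role="user"))
```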

Build fast decision models that run directly in Redis and route user queries to the nearest "route" or "topic".

from redisvl.extensions.router import Route, SemanticRouter

routes = [
    Route(
        name="greeting",
        references=["hello", "hi"],
        metadata={"type": "greeting"},
        distance_threshold=0.3,
    ),
    Route(
        name="farewell",
        references=["bye", "goodbye"],
        metadata={"type": "farewell"},
        distance_threshold=0.3,
    ),
]

# build semantic router from routes
router = SemanticRouter(
    name="topic-router",
    routes=routes,
    redis_url="redis://localhost:6379",
)

router("Hi, good morning")

>>> RouteMatch(name='greeting', distance=0.273891836405)

Learn more about semantic routing.
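Semantic routing can be pictured as a nearest-reference lookup with a cutoff: each route owns a few reference vectors, and a query is assigned to the route with the closest reference, provided the distance is within the threshold. The sketch below uses hand-written 2-D vectors in place of real embeddings. The `route` function and the data are hypothetical, and taking the minimum distance per route is a simplification chosen for this example.

```python
import math
from typing import Dict, List, Optional, Sequence, Tuple


def cosine_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine distance = 1 - cosine similarity."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / (norm_a * norm_b)


def route(
    query_vec: Sequence[float],
    routes: Dict[str, List[Sequence[float]]],
    distance_threshold: float,
) -> Optional[Tuple[str, float]]:
    """Pick the route whose closest reference is nearest to the query."""
    best: Optional[Tuple[str, float]] = None
    for name, references in routes.items():
        # Simplification: score a route by its single closest reference.
        distance = min(cosine_distance(query_vec, ref) for ref in references)
        if distance <= distance_threshold and (best is None or distance < best[1]):
            best = (name, distance)
    return best  # None means no route was close enough


# Hypothetical reference vectors standing in for embedded phrases.
reference_vectors = {
    "greeting": [[1.0, 0.0], [0.9, 0.1]],
    "farewell": [[0.0, 1.0], [0.1, 0.9]],
}
match = route([0.95, 0.2], reference_vectors, distance_threshold=0.3)
no_match = route([-1.0, -1.0], reference_vectors, distance_threshold=0.3)
print(match, no_match)
```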

Create, destroy, and manage Redis index configurations from a purpose-built CLI: rvl.

$ rvl -h

usage: rvl <command> [<args>]

Commands:
        index       Index manipulation (create, delete, etc.)
        version     Obtain the version of RedisVL
        stats       Obtain statistics about an index

Read more about using the CLI.

Redis is a proven, high-performance database that excels at real-time workloads. With RedisVL, you get a production-ready Python client that makes Redis's vector search, caching, and session management capabilities easily accessible for AI applications.

Built on the Redis Python client, RedisVL provides an intuitive interface for vector search, LLM caching, and conversational AI memory - all the core components needed for modern AI workloads.

For additional help, check out the following resources:

Please help us by contributing PRs, opening GitHub issues for bugs or new feature ideas, improving documentation, or increasing test coverage. Read more about how to contribute!

This project is supported by Redis, Inc. on a good-faith effort basis. To report bugs, request features, or receive assistance, please file an issue.

FAQs

Python client library and CLI for using Redis as a vector database

We found that redisvl demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 3 open source maintainers collaborating on the project.

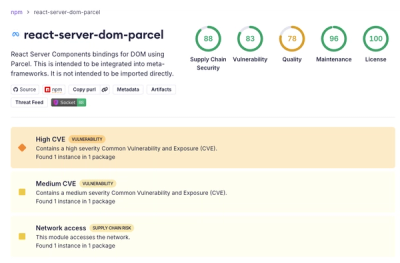

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

Deno 2.6 introduces deno audit with a new --socket flag that plugs directly into Socket to bring supply chain security checks into the Deno CLI.

Security News

New DoS and source code exposure bugs in React Server Components and Next.js: what’s affected and how to update safely.

Security News

Socket CEO Feross Aboukhadijeh joins Software Engineering Daily to discuss modern software supply chain attacks and rising AI-driven security risks.