Security News

The Push to Ban Ransom Payments Is Gaining Momentum

Ransomware costs victims an estimated $30 billion per year and has gotten so out of control that global support for banning payments is gaining momentum.

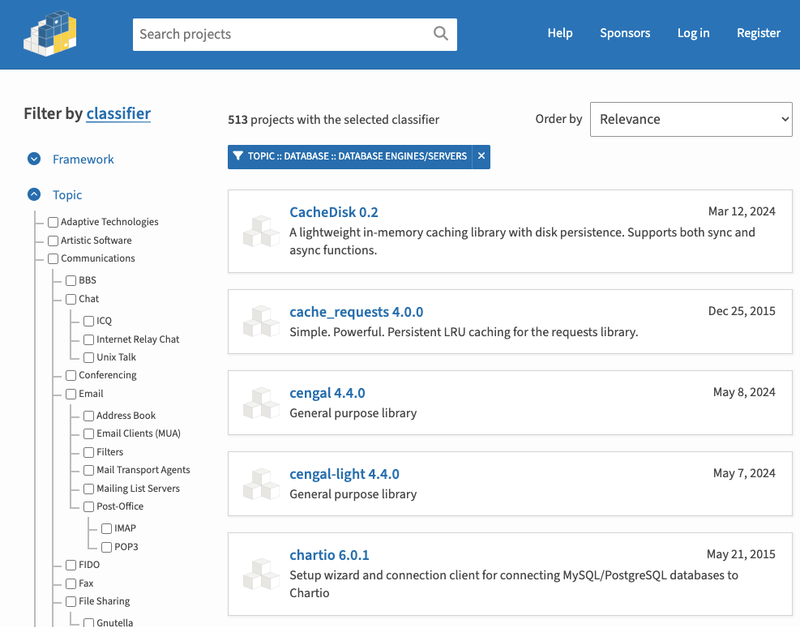

PyTorch implementation of the method described in our paper "Bolstering Stochastic Gradient Descent with Model Building", S. Ilker Birbil, Ozgur Martin, Gonenc Onay, Figen Oztoprak, 2021 (see https://arxiv.org/abs/2111.07058)

Readme

This repository includes a new fast and robust stochastic optimization algorithm for training deep learning models. The core idea of the algorithm is based on building models with local stochastic gradient information. The details of the algorithm are given in our recent paper.

Abstract

The stochastic gradient descent method and its variants constitute the core optimization algorithms that achieve good convergence rates for solving machine learning problems. These rates are obtained especially when these algorithms are fine-tuned for the application at hand. Although this tuning process can require large computational costs, recent work has shown that these costs can be reduced by line search methods that iteratively adjust the stepsize. We propose an alternative approach to stochastic line search by using a new algorithm based on forward step model building. This model building step incorporates second-order information that allows adjusting not only the stepsize but also the search direction. Noting that deep learning model parameters come in groups (layers of tensors), our method builds its model and calculates a new step for each parameter group. This novel diagonalization approach makes the selected step lengths adaptive. We provide convergence rate analysis, and experimentally show that the proposed algorithm achieves faster convergence and better generalization in most problems. Moreover, our experiments show that the proposed method is quite robust as it converges for a wide range of initial stepsizes.

Keywords: model building; second-order information; stochastic gradient descent; convergence analysis
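For readers unfamiliar with the line search methods the abstract refers to, the following is a minimal sketch of a deterministic gradient step with Armijo backtracking on the stepsize. It is not the SMB algorithm and does not use this package: the function names (`backtracking_step`, `quadratic`, `quadratic_grad`), the toy quadratic objective, and the constants `shrink` and `c` are all assumptions chosen for illustration. It only shows the idea of re-evaluating the loss at a trial point and shrinking the stepsize until the loss decreases enough.

```python
from typing import Callable, List

Vector = List[float]


def backtracking_step(
    loss_fn: Callable[[Vector], float],
    grad_fn: Callable[[Vector], Vector],
    x: Vector,
    step: float = 1.0,
    shrink: float = 0.5,
    c: float = 1e-4,
    max_halvings: int = 30,
) -> Vector:
    """Take one gradient step, shrinking the stepsize until the
    Armijo sufficient-decrease condition holds (illustrative only)."""
    g = grad_fn(x)
    g_norm_sq = sum(gi * gi for gi in g)
    f0 = loss_fn(x)
    for _ in range(max_halvings):
        # Trial point along the negative gradient direction.
        trial = [xi - step * gi for xi, gi in zip(x, g)]
        # Accept the trial point if the loss decreased sufficiently.
        if loss_fn(trial) <= f0 - c * step * g_norm_sq:
            return trial
        step *= shrink
    return x  # no acceptable stepsize found; keep the current point


# Hypothetical toy problem: an ill-conditioned quadratic.
def quadratic(x: Vector) -> float:
    return 0.5 * (x[0] ** 2 + 10.0 * x[1] ** 2)


def quadratic_grad(x: Vector) -> Vector:
    return [x[0], 10.0 * x[1]]


x = [3.0, -2.0]
losses = [quadratic(x)]
for _ in range(50):
    # A deliberately large initial stepsize; backtracking corrects it.
    x = backtracking_step(quadratic, quadratic_grad, x, step=1.0)
    losses.append(quadratic(x))
final_loss = losses[-1]
```

Even though the initial stepsize of 1.0 is too large for this problem, the loss never increases, because every accepted step must pass the decrease test. This also suggests why an optimizer of this kind asks for a loss closure: it needs to evaluate the loss more than once per update.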

Install the package with pip:

    pip install git+https://github.com/sbirbil/SMB.git

Here is how you can use SMB:

    import torch
    import smb

    # `model` and `train_loader` are assumed to be defined elsewhere.
    # Use independent_batch=True for the SMBi optimizer.
    optimizer = smb.SMB(model.parameters(), independent_batch=False)

    for epoch in range(100):

        # training steps
        model.train()

        for batch_index, (data, target) in enumerate(train_loader):

            # create loss closure for smb algorithm
            def closure():
                optimizer.zero_grad()
                loss = torch.nn.CrossEntropyLoss()(model(data), target)
                return loss

            # the optimizer evaluates the closure and performs the update
            loss = optimizer.step(closure=closure)

You can also check our tutorial for a complete example (or the Colab notebook without installation). Set the hyper-parameter independent_batch to True in order to use the SMBi optimizer. Our paper includes more information.

See the following script in order to reproduce the results in our paper.
