WVUtils is a collection of utilities that are shared across multiple Phosmic projects.

WVUtils requires Python 3.10 or higher and is platform independent.

If you discover an issue with WVUtils, please report it at https://github.com/Phosmic/wvutils/issues.

This program is free software: you can redistribute it and/or modify it under the terms of the GNU General Public License as published by the Free Software Foundation, either version 3 of the License, or (at your option) any later version.

This program is distributed in the hope that it will be useful, but WITHOUT ANY WARRANTY; without even the implied warranty of MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the GNU General Public License for more details.

You should have received a copy of the GNU General Public License along with this program. If not, see https://www.gnu.org/licenses/.

Most stable version from PyPI:

python3 -m pip install wvutils

Development version from GitHub:

git clone https://github.com/Phosmic/wvutils.git

cd wvutils

python3 -m pip install -e .

wvutils.aws

Utilities for interacting with AWS services.

This module provides utilities for interacting with AWS services.

get_boto3_session

def get_boto3_session(region_name: AWSRegion) -> Session

Get the globally shared Boto3 session for a region (thread-safe).

Todo:

Arguments:

region_name AWSRegion - Region name for the session.

Returns:

clear_boto3_sessions

def clear_boto3_sessions() -> int

Clear all globally shared Boto3 sessions (thread-safe).

Returns:

boto3_client_ctx

@contextmanager
def boto3_client_ctx(service_name: str, region_name: AWSRegion)

Context manager for a Boto3 client (thread-safe).

Todo:

Arguments:

service_name str - Name of the service.

region_name AWSRegion - Region name for the service.

Yields:

Raises:

ClientError - If an error occurs.

parse_s3_uri

def parse_s3_uri(s3_uri: str) -> tuple[str, str]

Parse the bucket name and path from an S3 URI.

Arguments:

s3_uri str - S3 URI to parse.

Returns:
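As a rough illustration of what parsing an S3 URI into a bucket name and path can look like, here is a minimal sketch built on the standard library. The name `parse_s3_uri_sketch`, the error handling, and the stripping of the leading slash are assumptions made for this example; the actual behavior of `parse_s3_uri` is not documented in this README.

```python
from urllib.parse import urlparse


def parse_s3_uri_sketch(s3_uri: str) -> tuple[str, str]:
    """Split an S3 URI into (bucket_name, bucket_path). Hypothetical helper."""
    parsed = urlparse(s3_uri)
    # Assumption: anything that is not "s3://<bucket>/..." is rejected.
    if parsed.scheme != "s3" or not parsed.netloc:
        raise ValueError(f"Not a valid S3 URI: {s3_uri!r}")
    # Drop the leading slash so the path can be used as an object key.
    return parsed.netloc, parsed.path.lstrip("/")


bucket_name, bucket_path = parse_s3_uri_sketch("s3://my-bucket/data/2024/file.parquet")
print(bucket_name)  # my-bucket
print(bucket_path)  # data/2024/file.parquet
```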

download_from_s3

def download_from_s3(file_path: FilePath,
                     bucket_name: str,
                     bucket_path: str,
                     region_name: AWSRegion,
                     overwrite: bool = False) -> None

Download a file from S3.

Arguments:

file_path FilePath - Output path to use while downloading the file.

bucket_name str - Name of the S3 bucket containing the file.

bucket_path str - Path of the file within the S3 bucket.

region_name AWSRegion - Region name for S3.

overwrite bool - Overwrite the file on disk if it already exists. Defaults to False.

Raises:

FileExistsError - If the file already exists and overwrite is False.

upload_file_to_s3

def upload_file_to_s3(file_path: FilePath, bucket_name: str, bucket_path: str,
                      region_name: AWSRegion) -> None

Upload a file to S3.

Arguments:

file_path FilePath - Path of the file to upload.

bucket_name str - Name of the S3 bucket to upload the file to.

bucket_path str - Path in the S3 bucket to upload the file to.

region_name AWSRegion - Region name for S3.

Raises:

FileNotFoundError - If the file does not exist.

upload_bytes_to_s3

def upload_bytes_to_s3(raw_b: bytes, bucket_name: str, bucket_path: str,
                       region_name: AWSRegion) -> None

Write bytes to a file in S3.

Arguments:

raw_b bytes - Bytes of the file to be written.

bucket_name str - Name of the S3 bucket to upload the file to.

bucket_path str - Path in the S3 bucket to upload the file to.

region_name AWSRegion - Region name for S3.

secrets_fetch

def secrets_fetch(
    secret_name: str,
    region_name: AWSRegion) -> str | int | float | list | dict | None

Request and decode a secret from Secrets.

Arguments:

secret_name str - Secret name to use.

region_name AWSRegion - Region name for Secrets.

Returns:

Raises:

ClientError - If an error occurs while fetching the secret.

ValueError - If the secret is not valid JSON.

athena_execute_query

def athena_execute_query(query: str, database_name: str,
                         region_name: AWSRegion) -> str | None

Execute a query in Athena.

Arguments:

query str - Query to execute.

database_name str - Name of database to execute the query against.

region_name AWSRegion - Region name for Athena.

Returns:

athena_retrieve_query

def athena_retrieve_query(qeid: str, database_name: str,
                          region_name: AWSRegion) -> str | None

Retrieve the S3 URI for results of a query in Athena.

Arguments:

qeid str - Query execution ID of the query to fetch.

database_name str - Name of the database the query is running against (for debugging).

region_name AWSRegion - Region name for Athena.

Returns:

Raises:

ValueError - If the query execution ID is unknown or missing.

athena_stop_query

def athena_stop_query(qeid: str, region_name: AWSRegion) -> None

Stop the execution of a query in Athena.

Arguments:

qeid str - Query execution ID of the query to stop.

region_name AWSRegion - Region name for Athena.

wvutils.errors

Custom errors.

This module contains custom exceptions that are used throughout the package.

WVUtilsError Objects

class WVUtilsError(Exception)

Base class for all wvutils exceptions.

JSONError Objects

class JSONError(Exception)

Base class for all JSON exceptions.

JSONEncodeError Objects

class JSONEncodeError(JSONError, TypeError)

Raised when JSON serializing fails.

JSONDecodeError Objects

class JSONDecodeError(JSONError, ValueError)

Raised when JSON deserializing fails.

PickleError Objects

class PickleError(Exception)

Base class for all pickle exceptions.

PickleEncodeError Objects

class PickleEncodeError(PickleError, TypeError)

Raised when pickle serializing fails.

PickleDecodeError Objects

class PickleDecodeError(PickleError, ValueError)

Raised when unpickling fails.

HashError Objects

class HashError(Exception)

Base class for all hashing exceptions.

HashEncodeError Objects

class HashEncodeError(HashError, TypeError)

Raised when hashing fails.

wvutils.path

Utilities for working with paths.

This module provides utilities for working with paths.

is_pathlike

def is_pathlike(potential_path: Any) -> bool

Check if an object is path-like.

An object is path-like if it is a string or has a __fspath__ method.

Arguments:

potential_path Any - Object to check.

Returns:
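The rule stated above (a string, or anything with a `__fspath__` method) can be sketched in a few lines. The name `is_pathlike_sketch` is hypothetical and this is only an illustration of the rule, not the package's implementation.

```python
from pathlib import Path
from typing import Any


def is_pathlike_sketch(potential_path: Any) -> bool:
    """Return True for strings and for objects exposing __fspath__."""
    return isinstance(potential_path, str) or hasattr(potential_path, "__fspath__")


print(is_pathlike_sketch("~/data.json"))      # True
print(is_pathlike_sketch(Path("data.json")))  # True
print(is_pathlike_sketch(42))                 # False
```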

stringify_path

def stringify_path(file_path: FilePath) -> str

Stringify a path-like object.

The path-like object is first converted to a string, then the user directory is expanded.

An object is path-like if it is a string or has a __fspath__ method.

Arguments:

file_path FilePath - Path-like object to stringify.

Returns:

Raises:

TypeError - If the object is not path-like.

ensure_abspath

def ensure_abspath(file_path: str) -> str

Make a path absolute if it is not already.

Arguments:

file_path str - Path to ensure is absolute.

Returns:

resolve_path

def resolve_path(file_path: FilePath) -> str

Stringify and resolve a path-like object.

The path-like object is first converted to a string, then the user directory is expanded, and finally the path is resolved to an absolute path.

An object is path-like if it is a string or has a __fspath__ method.

Arguments:

file_path FilePath - Path-like object to resolve.

Returns:

Raises:

TypeError - If the object is not path-like.

xdg_cache_path

def xdg_cache_path() -> str

Base directory to store user-specific non-essential data files.

This should be '${HOME}/.cache', but the 'HOME' environment variable may not exist on non-POSIX-compliant systems. On POSIX-compliant systems, the XDG base directory specification is followed exactly since '~' expands to '$HOME' if it is present.

Returns:
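A minimal sketch of the idea described above, assuming the cache directory is derived by expanding `~` rather than by reading `HOME` directly. The name `xdg_cache_path_sketch` is hypothetical, and the real function may handle additional cases that this README does not describe.

```python
import os


def xdg_cache_path_sketch() -> str:
    """Return the user's cache directory by expanding '~/.cache'."""
    # expanduser falls back to platform-specific lookups when HOME is unset.
    return os.path.expanduser(os.path.join("~", ".cache"))


print(xdg_cache_path_sketch())
```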

wvutils.proxies

Utilities for working with proxies.

This module provides utilities for working with proxies.

ProxyManager Objects

class ProxyManager()

Manages a list of proxies.

This class manages a list of proxies, allowing for randomization, re-use, etc.

ProxyManager.add_proxies

def add_proxies(proxies: list[str], include_duplicates: bool = False) -> None

Add additional proxy addresses.

All proxy addresses added will be added to the end of the list.

Arguments:

proxies list[str] - List of proxy addresses.

include_duplicates bool, optional - Whether to include duplicates. Defaults to False.

ProxyManager.set_proxies

def set_proxies(proxies: list[str]) -> None

Set the proxy addresses.

Note: This will clear all existing proxies.

Arguments:

proxies list[str] - List of proxy addresses.

ProxyManager.can_cycle

@property
def can_cycle() -> bool

Check whether it is possible to cycle to the next proxy address.

Returns:

ProxyManager.cycle

def cycle() -> None

Attempt to cycle to the next proxy address.

ProxyManager.proxy

@property
def proxy() -> str | None

Current proxy address.

Returns:

https_to_http

def https_to_http(address: str) -> str

Convert an HTTPS proxy address to HTTP.

Arguments:

address str - HTTPS proxy address.

Returns:

Raises:

ValueError - If the address does not start with 'http://' or 'https://'.

prepare_http_proxy_for_requests

def prepare_http_proxy_for_requests(address: str) -> dict[str, str]

Prepare an HTTP(S) proxy address for use with the 'requests' library.

Arguments:

address str - HTTP(S) proxy address.

Returns:

Raises:

ValueError - If the address does not start with 'http://' or 'https://'.

wvutils.args

Utilities for parsing arguments from the command line.

This module provides utilities for parsing arguments from the command line.

nonempty_string

def nonempty_string(name: str) -> Callable[[str], str]

Ensure a string is non-empty.

Example:

subparser.add_argument(
    "hashtag",
    type=nonempty_string("hashtag"),
    help="A hashtag (without #)",
)

Arguments:

name str - Name of the function, used for debugging.

Returns:
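The signature above describes a factory: it takes a name and returns a callable that argparse can use as a `type=` converter. The sketch below shows that pattern end to end. The name `nonempty_string_sketch`, the whitespace check, and the error message are assumptions for illustration; the package's own validation rules may differ.

```python
import argparse
from typing import Callable


def nonempty_string_sketch(name: str) -> Callable[[str], str]:
    """Build an argparse type function that rejects empty strings."""

    def validate(value: str) -> str:
        # Assumption: whitespace-only input counts as empty.
        if not value.strip():
            raise argparse.ArgumentTypeError(f"{name} must be a non-empty string")
        return value

    return validate


parser = argparse.ArgumentParser()
parser.add_argument(
    "hashtag",
    type=nonempty_string_sketch("hashtag"),
    help="A hashtag (without #)",
)
args = parser.parse_args(["python"])
print(args.hashtag)  # python
```

Returning a closure keeps the argument name available for the error message, which is presumably why the documented function takes `name` at all.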

safechars_string

def safechars_string(
    name: str,
    allowed_chars: Collection[str] | None = None) -> Callable[[str], str]

Ensure a string contains only safe characters.

Example:

subparser.add_argument(
    "--session-key",
    type=safechars_string("session_key"),
    help="Key to share a single token across processes",
)

Arguments:

name str - Name of the function, used for debugging.

allowed_chars Collection[str] | None, optional - Custom characters used to validate the function name. Defaults to None.

Returns:

Raises:

ValueError - If an empty collection of allowed characters is provided.

wvutils.general

General utilities for working with Python.

This module provides general utilities for working with Python.

is_readable_iolike

def is_readable_iolike(potential_io: Any) -> bool

Check if an object is a readable IO-like.

An object is readable IO-like if it has one of the following:

readable method that returns True

Or if it has all of the following:

read method.

mode that contains "r".

seek method.

close method.

__enter__ method.

__exit__ method.

Arguments:

potential_io Any - Object to check.

Returns:
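The two-branch rule above can be written as a small duck-typing check. This is a sketch under the assumption that the conditions are evaluated in the order listed; `is_readable_iolike_sketch` is a hypothetical name and not the package's code.

```python
import io
from typing import Any


def is_readable_iolike_sketch(potential_io: Any) -> bool:
    """Duck-typed check for a readable IO-like object."""
    # Branch 1: a readable() method that returns True.
    readable = getattr(potential_io, "readable", None)
    if callable(readable) and readable():
        return True
    # Branch 2: all of the listed methods plus a mode containing "r".
    methods = ("read", "seek", "close", "__enter__", "__exit__")
    has_methods = all(callable(getattr(potential_io, m, None)) for m in methods)
    mode = getattr(potential_io, "mode", "")
    return has_methods and isinstance(mode, str) and "r" in mode


print(is_readable_iolike_sketch(io.StringIO("text")))  # True
print(is_readable_iolike_sketch("not a stream"))       # False
```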

is_writable_iolike

def is_writable_iolike(potential_io: Any) -> bool

Check if an object is a writable IO-like.

An object is writable IO-like if it has one of the following:

writable method that returns True

Or if it has all of the following:

write method.

mode that contains "w".

seek method.

close method.

__enter__ method.

__exit__ method.

Arguments:

potential_io Any - Object to check.

Returns:

is_iolike

def is_iolike(potential_io: Any) -> bool

Check if an object is IO-like.

An object is IO-like if it has one of the following:

io.IOBase base class

is_readable_iolike returns True. (see is_readable_iolike)

is_writable_iolike returns True. (see is_writable_iolike)

Arguments:

potential_io Any - Object to check.

Returns:

count_lines_in_file

def count_lines_in_file(file_path: FilePath) -> int

Count the number of lines in a file.

Notes:

All files have at least 1 line (# of lines = # of newlines + 1).

Arguments:

file_path FilePath - Path of the file to count lines in.

Returns:
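The note above defines the count as the number of newlines plus one, so an empty file has 1 line. A minimal sketch of that rule follows, reading in binary blocks to keep memory use flat. The function name and the block size are assumptions made for this example.

```python
import os
import tempfile


def count_lines_sketch(file_path: str) -> int:
    """Count lines as (number of newline bytes) + 1."""
    newline_count = 0
    with open(file_path, "rb") as rf:
        # Read fixed-size blocks until read() returns an empty bytes object.
        for block in iter(lambda: rf.read(1024 * 1024), b""):
            newline_count += block.count(b"\n")
    return newline_count + 1


with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"first\nsecond\nthird")
    tmp_path = tmp.name

three_lines = count_lines_sketch(tmp_path)
print(three_lines)  # 3
os.remove(tmp_path)
```

Note that under this rule a file ending in a trailing newline counts one more line than tools such as `wc -l` would report.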

sys_set_recursion_limit

def sys_set_recursion_limit() -> None

Raise the recursion limit to allow deeper recursion.

gc_set_threshold

def gc_set_threshold() -> None

Reduce the number of GC runs to improve performance.

Notes:

Only applies to CPython.

chunker

def chunker(seq: Sequence[Any],
            n: int) -> Generator[Sequence[Any], None, None]

Iterate over a sequence in chunks of size n.

Arguments:

seq Sequence[Any] - Sequence of values.

n int - Number of values per chunk.

Yields:

Raises:

ValueError - If n is 0 or negative.

is_iterable

def is_iterable(obj: Any) -> bool

Check if an object is iterable.

Arguments:

obj Any - Object to check.

Returns:

rename_key

def rename_key(obj: dict,
               src_key: str,
               dest_key: str,
               in_place: bool = False) -> dict | None

Rename a dictionary key.

Todo:

All of the following are True:

isinstance(True, bool)

isinstance(True, int)

1 == True

1 in {1: "a"}

True in {1: "a"}

1 in {True: "a"}

True in {True: "a"}

1 in {1: "a", True: "b"}

True in {1: "a", True: "b"}

Arguments:

obj dict - Reference to the dictionary to modify.

src_key str - Name of the key to rename.

dest_key str - Name of the key to change to.

in_place bool, optional - Perform in-place using the provided reference. Defaults to False.

Returns:
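One way to read the signature above is sketched below: return a modified copy by default, or mutate the given dictionary and return None when `in_place` is True. That return convention, the use of a deep copy, and the name `rename_key_sketch` are all assumptions drawn from the `dict | None` return type, not confirmed behavior.

```python
import copy
from typing import Optional


def rename_key_sketch(obj: dict, src_key: str, dest_key: str,
                      in_place: bool = False) -> Optional[dict]:
    """Rename src_key to dest_key, either on a copy or in place."""
    target = obj if in_place else copy.deepcopy(obj)
    if src_key in target:
        # pop() removes the old key and hands back its value in one step.
        target[dest_key] = target.pop(src_key)
    return None if in_place else target


original = {"a": 1, "c": 3}
renamed = rename_key_sketch(original, "a", "b")
print(renamed)   # {'c': 3, 'b': 1}
print(original)  # {'a': 1, 'c': 3}
```

Because `True in {1: "a"}` evaluates to True, as the list above points out, a membership test like the one in this sketch cannot tell the keys `1` and `True` apart.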

unnest_key

def unnest_key(obj: dict, *keys: str) -> Any | None

Fetch a value from a deeply nested dictionary.

Arguments:

obj dict - Dictionary to recursively iterate.

*keys str - Ordered keys to fetch.

Returns:
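A minimal sketch of fetching a nested value by following keys in order. It assumes that a missing key, or a non-dictionary value partway down, yields None (suggested by the `Any | None` return type). `unnest_key_sketch` is a hypothetical name, and it uses a loop rather than recursion.

```python
from typing import Any


def unnest_key_sketch(obj: dict, *keys: str) -> Any:
    """Walk nested dictionaries key by key; return None if the path breaks."""
    current: Any = obj
    for key in keys:
        if not isinstance(current, dict) or key not in current:
            return None
        current = current[key]
    return current


payload = {"user": {"profile": {"name": "ada"}}}
print(unnest_key_sketch(payload, "user", "profile", "name"))  # ada
print(unnest_key_sketch(payload, "user", "missing", "name"))  # None
```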

sort_dict_by_key

def sort_dict_by_key(obj: dict,
                     reverse: bool = False,
                     deep_copy: bool = False) -> dict | None

Sort a dictionary by key.

Arguments:

obj dict - Dictionary to sort.

reverse bool, optional - Sort in reverse order. Defaults to False.

deep_copy bool, optional - Return a deep copy of the dictionary. Defaults to False.

Returns:

in_place is True, None is returned.

Raises:

ValueError - If the dictionary keys are not of the same type.

dedupe_list

def dedupe_list(values: list[Any], raise_on_dupe: bool = False) -> list[Any]

Remove duplicate values from a list.

Example:

dedupe_list([1, 2, 3, 1, 2, 3])
# [1, 2, 3]

Arguments:

values list[Any] - List of values to dedupe.

raise_on_dupe bool, optional - Raise an error if a duplicate is found. Defaults to False.

Returns:

Raises:

ValueError - If a duplicate is found and raise_on_dupe is True.

dupe_in_list

def dupe_in_list(values: list[Any]) -> bool

Check if a list has duplicate values.

Arguments:

values list[Any] - List of values to check.

Returns:
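Since the values are typed as `Any`, they may be unhashable (lists, dicts), which rules out a plain `set`. The sketch below compares by equality instead; it is quadratic in the list length and is offered only as an illustration. `dupe_in_list_sketch` is a hypothetical name.

```python
from typing import Any


def dupe_in_list_sketch(values: list[Any]) -> bool:
    """Return True as soon as any value repeats (equality-based)."""
    seen: list[Any] = []
    for value in values:
        if value in seen:
            return True
        seen.append(value)
    return False


print(dupe_in_list_sketch([1, 2, 3, 1]))        # True
print(dupe_in_list_sketch([[1], [2], [3]]))     # False
```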

invert_dict_of_str

def invert_dict_of_str(obj: dict[Any, str],
                       deep_copy: bool = False,
                       raise_on_dupe: bool = False) -> dict

Invert a dictionary of strings.

Notes:

If several keys share the same value, the last such key is used.

Example:

invert_dict_of_str({"a": "b", "c": "d"})
# {"b": "a", "d": "c"}

Arguments:

obj dict[Any, str] - Dictionary to invert.

deep_copy bool, optional - Return a deep copy of the dictionary. Defaults to False.

raise_on_dupe bool, optional - Raise an error if a duplicate is found. Defaults to False.

Returns:

Raises:

ValueError - If a duplicate is found and raise_on_dupe is True.

get_all_subclasses

def get_all_subclasses(cls: type) -> list[type]

Get all subclasses of a class.

Arguments:

cls type - Class to get subclasses of.

Returns:
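Collecting every subclass, not just the direct ones, usually means walking `__subclasses__()` recursively. Here is a minimal sketch of that approach. The traversal order and the de-duplication are assumptions, and the `Base`, `Child`, and `GrandChild` classes exist only for the demonstration.

```python
def get_all_subclasses_sketch(cls: type) -> list[type]:
    """Collect direct and indirect subclasses, without repeats."""
    found: list[type] = []
    for subclass in cls.__subclasses__():
        if subclass not in found:
            found.append(subclass)
        # Recurse so that grandchildren and deeper levels are included.
        for nested in get_all_subclasses_sketch(subclass):
            if nested not in found:
                found.append(nested)
    return found


class Base:
    pass


class Child(Base):
    pass


class GrandChild(Child):
    pass


print([c.__name__ for c in get_all_subclasses_sketch(Base)])  # ['Child', 'GrandChild']
```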

wvutils.dt

Datetime utilities.

This module contains functions and classes that are used to work with datetimes.

dtformats Objects

class dtformats()

Datetime formats.

Attributes:

twitter str - Twitter datetime format.

reddit str - Reddit datetime format.

general str - General datetime format.

db str - Database datetime format.

date str - Date format.

time_12h str - 12-hour time format with timezone.

time_24h str - 24-hour time format with timezone.

num_days_in_month

def num_days_in_month(year: int, month: int) -> int

Determine the number of days in a month.

Arguments:

year int - Year to check.

month int - Month to check.

Returns:
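For reference, the same question can be answered with the standard library's `calendar.monthrange`, which handles leap years. This is a sketch of one possible approach under a hypothetical name, not a statement about how the package computes it.

```python
import calendar


def num_days_in_month_sketch(year: int, month: int) -> int:
    """Return the number of days in the given month of the given year."""
    # monthrange returns (weekday of the first day, number of days in month).
    return calendar.monthrange(year, month)[1]


print(num_days_in_month_sketch(2024, 2))  # 29
print(num_days_in_month_sketch(2023, 2))  # 28
```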

wvutils.parquet

Parquet utilities.

This module provides utilities for working with Parquet files.

get_parquet_session

def get_parquet_session(use_s3: bool,
                        region_name: AWSRegion | None = None) -> fs.FileSystem

Get the globally shared Parquet filesystem session.

Todo:

Arguments:

use_s3 bool - Use S3 if True, otherwise uses local filesystem.

region_name AWSRegion | None, optional - AWS region name. Defaults to None.

Returns:

clear_parquet_sessions

def clear_parquet_sessions() -> int

Clear all globally shared Parquet filesystem sessions.

Returns:

create_pa_schema

def create_pa_schema(schema_template: dict[str, str]) -> pa.schema

Create a parquet schema from a template.

Example:

{
    "key_a": "string",
    "key_b": "integer",
    "key_c": "float",
    "key_d": "bool",
    "key_e": "timestamp[s]",
    "key_f": "timestamp[ms]",
    "key_g": "timestamp[ns]",
}

becomes

pa.schema([
    ("key_a", pa.string()),
    ("key_b", pa.int64()),
    ("key_c", pa.float64()),
    ("key_d", pa.bool_()),
    ("key_e", pa.timestamp("s", tz=utc)),
    ("key_f", pa.timestamp("ms", tz=utc)),
    ("key_g", pa.timestamp("ns", tz=utc)),
])

Arguments:

schema_template dict[str, str] - Data names and parquet types for creating the schema.

Returns:

Raises:

ValueError - If an unknown type name is encountered.

force_dataframe_dtypes

def force_dataframe_dtypes(dataframe: pd.DataFrame,
                           template: dict[str, str]) -> pd.DataFrame

Force the data types of a dataframe using a template.

Arguments:

dataframe pd.DataFrame - Dataframe to force types.

template dict[str, str] - Template to use for forcing types.

Returns:

Raises:

ValueError - If an unknown type name is encountered.

export_dataset

def export_dataset(data: list[dict] | deque[dict] | pd.DataFrame,
                   output_location: str | FilePath,
                   primary_template: dict[str, str],
                   partitions_template: dict[str, str],
                   *,
                   basename_template: str | None = None,
                   use_s3: bool = False,
                   region_name: AWSRegion | None = None,
                   use_threads: bool = False,
                   overwrite: bool = False) -> None

Write a dataset to the local filesystem or AWS S3.

Arguments:

data list[dict] | deque[dict] | pd.DataFrame - List, deque, or dataframe of objects to be written.

output_location str | FilePath - Location to write the dataset to.

primary_template dict[str, str] - Parquet schema template to use for the table (excluding partitions).

partitions_template dict[str, str] - Parquet schema template to use for the partitions.

basename_template str, optional - Filename template to use when writing to S3 or locally. Defaults to None.

use_s3 bool, optional - Use S3 as the destination when exporting parquet files. Defaults to False.

region_name AWSRegion, optional - AWS region to use when exporting to S3. Defaults to None.

use_threads bool, optional - Use multiple threads when exporting. Defaults to False.

overwrite bool, optional - Overwrite existing files. Defaults to False.

wvutils.restruct

Utilities for restructuring data.

This module provides utilities for restructuring data, including serialization and hashing.

JSON

| Python | JSON |
|---|---|
| dict | object |
| list, tuple | array |
| str | string |
| int, float, int- & float-derived enums | number |
| True | true |
| False | false |
| None | null |
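The mapping in the table matches what the standard library's `json` module does, so it can be checked directly. The example below uses `json.dumps` rather than the package's own `json_dumps`, whose output formatting may differ; the `Priority` enum is made up for the demonstration.

```python
import json
from enum import IntEnum


class Priority(IntEnum):
    LOW = 1
    HIGH = 2


record = {
    "tags": ("a", "b"),       # tuple -> array
    "name": "example",        # str -> string
    "score": 1.5,             # float -> number
    "priority": Priority.HIGH,  # int-derived enum -> number
    "active": True,           # True -> true
    "deleted": False,         # False -> false
    "parent": None,           # None -> null
}
encoded = json.dumps(record)
print(encoded)
```

Decoding does not reverse every row: the tuple comes back as a list and the enum member as a plain int.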

Hash

No content.

Pickle

An important difference between cloudpickle and pickle is that cloudpickle can serialize a function or class by value, whereas pickle can only serialize it by reference. Serialization by reference treats functions and classes as attributes of modules, and pickles them through instructions that trigger the import of their module at load time. Serialization by reference is thus limited in that it assumes that the module containing the function or class is available/importable in the unpickling environment. This assumption breaks when pickling constructs defined in an interactive session, a case that is automatically detected by cloudpickle, that pickles such constructs by value.
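The by-reference behavior of the standard `pickle` module can be observed without cloudpickle. In the sketch below, pickling `json.dumps` stores only the module and function names, while pickling a lambda fails because there is no importable name to refer to. This only demonstrates the limitation described above; cloudpickle itself is not used here.

```python
import json
import pickle

# By reference: the payload records where to import the function from,
# not the function's code.
payload = pickle.dumps(json.dumps)
print(b"json" in payload and b"dumps" in payload)  # True
print(pickle.loads(payload) is json.dumps)         # True

# A lambda has no importable name, so pickling by reference fails.
square = lambda x: x * x
try:
    pickle.dumps(square)
    lambda_pickled = True
except (pickle.PicklingError, AttributeError):
    lambda_pickled = False
print(lambda_pickled)  # False
```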

json_dumps

def json_dumps(obj: JSONSerializable) -> str

Encode an object as JSON.

Arguments:

obj JSONSerializable - Object to encode.

Returns:

Raises:

JSONEncodeError - If the object could not be encoded.

jsonl_dumps

def jsonl_dumps(objs: Iterable[JSONSerializable]) -> str

Encode objects as JSONL.

Arguments:

objs Iterable[JSONSerializable] - Objects to encode.

Returns:

Raises:

JSONEncodeError - If the objects could not be encoded.

json_dump

def json_dump(obj: JSONSerializable, file_path: str) -> None

Encode an object as JSON and write it to a file.

Arguments:

obj JSONSerializable - Object to encode.

file_path str - Path of the file to open.

Raises:

JSONEncodeError - If the object could not be encoded.

jsonl_dump

def jsonl_dump(objs: Iterable[JSONSerializable], file_path: str) -> None

Encode objects as JSONL and write them to a file.

Arguments:

objs Iterable[JSONSerializable] - Objects to encode.

file_path str - Path of the file to open.

Raises:

JSONEncodeError - If the objects could not be encoded.

json_loads

def json_loads(encoded_obj: str) -> JSONSerializable

Decode a JSON-encoded object.

Arguments:

encoded_obj str - Object to decode.

Returns:

Raises:

JSONDecodeError - If the object could not be decoded.

json_load

def json_load(file_path: FilePath) -> JSONSerializable

Decode a file containing a JSON-encoded object.

Arguments:

file_path FilePath - Path of the file to open.

Returns:

Raises:

JSONDecodeError - If the file could not be decoded.

jsonl_loader

def jsonl_loader(
    file_path: FilePath,
    *,
    allow_empty_lines: bool = False
) -> Generator[JSONSerializable, None, None]

Decode a file containing JSON-encoded objects, one per line.

Arguments:

file_path FilePath - Path of the file to open.

allow_empty_lines bool, optional - Whether to allow (skip) empty lines. Defaults to False.

Yields:

Raises:

JSONDecodeError - If the line could not be decoded, or if an empty line was found and allow_empty_lines is False.

squeegee_loader

def squeegee_loader(
    file_path: FilePath) -> Generator[JSONSerializable, None, None]

Automatically decode a file containing JSON-encoded objects.

Supports multiple formats (JSON, JSONL, JSONL of JSONL, etc).

Todo:

Add support for pretty-printed JSON that has been appended to a file.

Arguments:

file_path FilePath - Path of the file to open.

Yields:

Raises:

JSONDecodeError - If the line could not be decoded.

gen_hash

def gen_hash(obj: MD5Hashable) -> str

Create an MD5 hash from a hashable object.

Note: Tuples and deques are not hashable, so they are converted to lists.

Arguments:

obj MD5Hashable - Object to hash.

Returns:

Raises:

HashEncodeError - If the object could not be encoded.

pickle_dump

def pickle_dump(obj: PickleSerializable, file_path: FilePath) -> None

Serialize an object as a pickle and write it to a file.

Arguments:

obj PickleSerializable - Object to serialize.

file_path FilePath - Path of the file to write.

Raises:

PickleEncodeError - If the object could not be encoded.

pickle_dumps

def pickle_dumps(obj: PickleSerializable) -> bytes

Serialize an object as a pickle.

Arguments:

obj PickleSerializable - Object to serialize.

Returns:

Raises:

PickleEncodeError - If the object could not be encoded.

pickle_load

def pickle_load(file_path: FilePath) -> PickleSerializable

Deserialize a pickle-serialized object from a file.

Note: Not safe for large files.

Arguments:

file_path FilePath - Path of the file to open.

Returns:

Raises:

PickleDecodeError - If the object could not be decoded.

pickle_loads

def pickle_loads(serialized_obj: bytes) -> PickleSerializable

Deserialize a pickle-serialized object.

Arguments:

serialized_obj bytes - Object to deserialize.

Returns:

Raises:

PickleDecodeError - If the object could not be decoded.

Collection of common utilities.

We found that wvutils demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 1 open source maintainer collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

Ransomware costs victims an estimated $30 billion per year and has gotten so out of control that global support for banning payments is gaining momentum.

Application Security

New SEC disclosure rules aim to enforce timely cyber incident reporting, but fear of job loss and inadequate resources lead to significant underreporting.

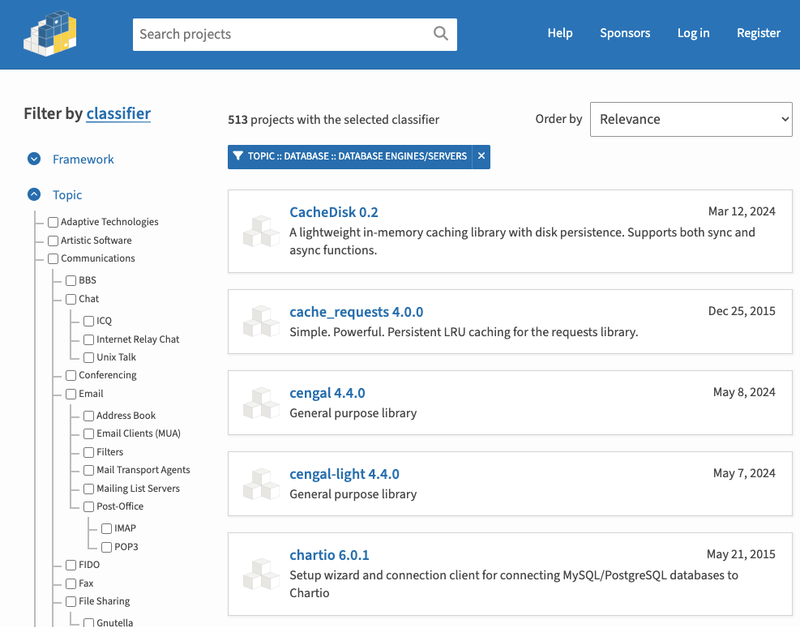

Security News

The Python Software Foundation has secured a 5-year sponsorship from Fastly that supports PSF's activities and events, most notably the security and reliability of the Python Package Index (PyPI).