A C++ implementation of a fast and memory efficient HAT-trie

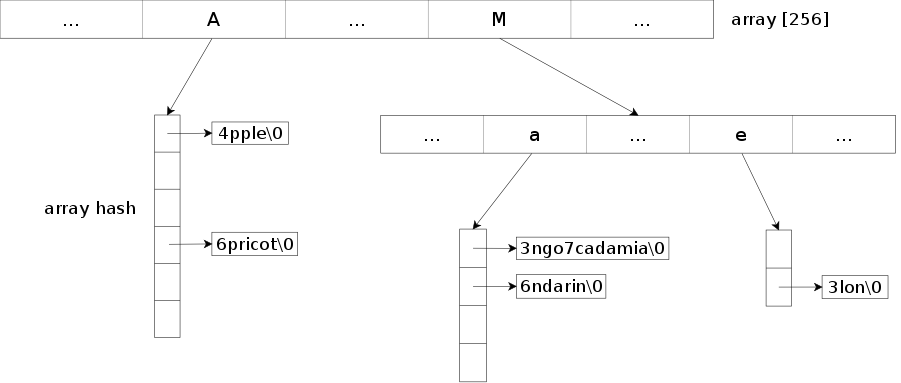

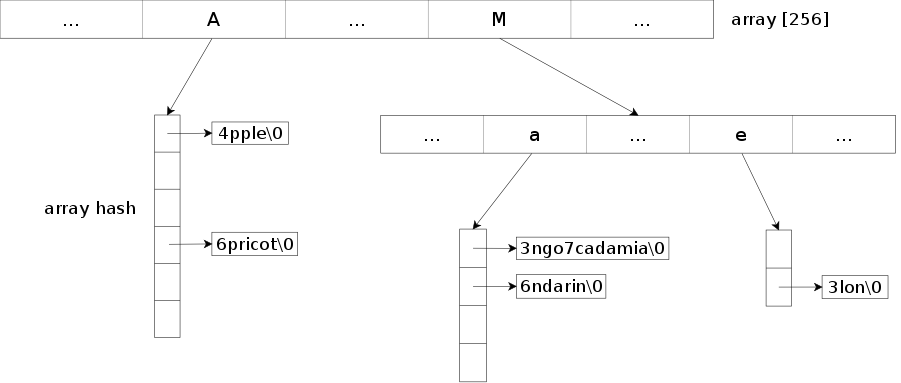

Trie implementation based on the paper "HAT-trie: A Cache-conscious Trie-based Data Structure for Strings" (Askitis Nikolas and Sinha Ranjan, 2007). For now, only the pure HAT-trie has been implemented; the hybrid version may arrive later. Details regarding the HAT-trie data structure can be found here.

The library provides an efficient and compact way to store a set or a map of strings by compressing the common prefixes. It also supports searching for keys that match a prefix. Note, though, that the default parameters of the structure are geared toward exact searches; if you do a lot of prefix searches, you may want to reduce the burst threshold through the burst_threshold method.

The structure is well suited to storing a large number of strings.

For the array hash part, the array-hash project is used and included in the repository.

The library provides two classes: tsl::htrie_map and tsl::htrie_set.

Overview

- Header-only library, just add the include directory to your include path and you are ready to go. If you use CMake, you can also use the tsl::hat_trie exported target from the CMakeLists.txt.

- Low memory usage while keeping reasonable performance (see benchmark).

- Support prefix searches through equal_prefix_range (useful for autocompletion, for example) and prefix erasures through erase_prefix.

- Support longest matching prefix searches through longest_prefix.

- Support for efficient serialization and deserialization (see example and the serialize/deserialize methods in the API for details).

- Keys are not ordered as they are partially stored in a hash map.

- All operations modifying the data structure (insert, emplace, erase, ...) invalidate the iterators.

- Support null characters in the key (you can thus store binary data in the trie).

- Support for any type of value as long as it's either copy-constructible or both nothrow move constructible and nothrow move assignable.

- The balance between speed and memory usage can be modified through the max_load_factor method. A lower max load factor will increase the speed, a higher one will reduce the memory usage. Its default value is set to 8.0.

- The default burst threshold, which is the maximum size of an array hash node before a burst occurs, is set to 16 384, which provides good performance for exact searches. If you mainly use prefix searches, you may want to reduce it through the burst_threshold method to something like 1024 or lower for faster iteration over the results.

- By default the maximum allowed size for a key is set to 65 535. This can be raised through the KeySizeT template parameter.

Thread-safety and exception guarantees are similar to the STL containers.

Hash function

The default hash function used by the structure depends on the presence of std::string_view. If it is available, std::hash<std::string_view> is used, otherwise a simple FNV-1a hash function is used to avoid any dependency.

If you can't use C++17 or later, we recommend replacing the hash function with something like CityHash, MurmurHash, FarmHash, ... for better performance. In the tests we did, CityHash64 offers a ~20% improvement on reads compared to FNV-1a.

    #include <city.h>

    struct str_hash {
        std::size_t operator()(const char* key, std::size_t key_size) const {
            return CityHash64(key, key_size);
        }
    };

    tsl::htrie_map<char, int, str_hash> map;

The std::hash<std::string> can't be used efficiently as the structure doesn't store any std::string object. Any time a hash would be needed, a temporary std::string would have to be created.

Benchmark

Wikipedia dataset

The benchmark consists of inserting all the titles from the main namespace of the Wikipedia archive into the data structure, checking the memory used after the inserts (including potential memory fragmentation), and searching for all the titles again in the data structure. The peak memory usage during the insert process is also measured with time(1).

Each title is associated with an int (32 bits). All the hash based structures use CityHash64 as hash function. For the tests marked with reserve, the reserve function is called beforehand to avoid any rehash.

Note that tsl::hopscotch_map, std::unordered_map, google::dense_hash_map and spp::sparse_hash_map use std::string as key which imposes a minimum size of 32 bytes (on x64) even if the key is only one character long. Other structures may be able to store one-character keys with 1 byte + 8 bytes for a pointer (on x64).

The benchmark was compiled with GCC 6.3 and run on Debian Stretch x64 with an Intel i5-5200u and 8 GB of RAM. The best of 20 runs was taken.

The code of the benchmark can be found on Gist.

Unsorted

The enwiki-20170320-all-titles-in-ns0.gz dataset is alphabetically sorted. For this benchmark, we first shuffle the dataset through shuf(1) to avoid a biased sorted dataset.

- As the hash function can't be passed as a parameter, the code of the library itself is modified to use CityHash64.

Sorted

The keys are inserted and read in alphabetical order.

- As the hash function can't be passed as a parameter, the code of the library itself is modified to use CityHash64.

Dr. Askitis dataset

The benchmark consists of inserting all the words from the "Distinct Strings" dataset of Dr. Askitis into the data structure, checking the memory used, and searching for all the words from the "Skew String Set 1" dataset (where a string can be present multiple times) in the data structure. Note that the strings in this dataset have quite short average and median key lengths (which may not be a realistic use case compared to the Wikipedia dataset used above). It's similar to the one on the cedar homepage.

- Dataset: distinct_1 (write) / skew1_1 (read)

- Size: 290.45 MiB / 1 029.46 MiB

- Number of keys: 28 772 169 / 177 999 203

- Average key length: 9.59 / 5.06

- Median key length: 8 / 4

- Max key length: 126 / 62

The benchmark protocol is the same as for the Wikipedia dataset.

- As the hash function can't be passed as a parameter, the code of the library itself is modified to use CityHash64.

Installation

To use the library, just add the include directory to your include path. It is a header-only library.

If you use CMake, you can also use the tsl::hat_trie exported target from the CMakeLists.txt with target_link_libraries.

    # Example where the hat-trie project is stored in a third-party directory
    add_subdirectory(third-party/hat-trie)
    target_link_libraries(your_target PRIVATE tsl::hat_trie)

The code should work with any C++11 standard-compliant compiler and has been tested with GCC 4.8.4, Clang 3.5.0 and Visual Studio 2015.

To run the tests you will need the Boost Test library and CMake.

    git clone https://github.com/Tessil/hat-trie.git
    cd hat-trie/tests
    mkdir build
    cd build
    cmake ..
    cmake --build .
    ./tsl_hat_trie_tests

Usage

The API can be found here. If std::string_view is available, the API changes slightly and can be found here.

Example

    #include <iostream>
    #include <string>
    #include <tsl/htrie_map.h>
    #include <tsl/htrie_set.h>

    int main() {
        // Map of strings to int, with char as the character type.
        tsl::htrie_map<char, int> map = {{"one", 1}, {"two", 2}};
        map["three"] = 3;
        map["four"] = 4;

        map.insert("five", 5);
        // insert_ks takes the key size explicitly: only the first 3 characters are used.
        map.insert_ks("six_with_extra_chars_we_ignore", 3, 6);

        map.erase("two");

        // Reuse key_buffer so that a new std::string is not built at each iteration.
        std::string key_buffer;
        for(auto it = map.begin(); it != map.end(); ++it) {
            it.key(key_buffer);
            std::cout << "{" << key_buffer << ", " << it.value() << "}" << std::endl;
        }

        // Simpler variant: key() returns a new std::string on each call.
        for(auto it = map.begin(); it != map.end(); ++it) {
            std::cout << "{" << it.key() << ", " << *it << "}" << std::endl;
        }

        tsl::htrie_map<char, int> map2 = {{"apple", 1}, {"mango", 2}, {"apricot", 3},
                                          {"mandarin", 4}, {"melon", 5}, {"macadamia", 6}};

        // Prefix search: iterate over the keys starting with "ma".
        auto prefix_range = map2.equal_prefix_range("ma");
        for(auto it = prefix_range.first; it != prefix_range.second; ++it) {
            std::cout << "{" << it.key() << ", " << *it << "}" << std::endl;
        }

        // Longest matching prefix search.
        auto longest_prefix = map2.longest_prefix("apple juice");
        if(longest_prefix != map2.end()) {
            std::cout << "{" << longest_prefix.key() << ", "
                      << *longest_prefix << "}" << std::endl;
        }

        // Prefix erasure: remove the keys starting with "ma".
        map2.erase_prefix("ma");
        for(auto it = map2.begin(); it != map2.end(); ++it) {
            std::cout << "{" << it.key() << ", " << *it << "}" << std::endl;
        }

        tsl::htrie_set<char> set = {"one", "two", "three"};
        set.insert({"four", "five"});

        for(auto it = set.begin(); it != set.end(); ++it) {
            it.key(key_buffer);
            std::cout << "{" << key_buffer << "}" << std::endl;
        }
    }

Serialization

The library provides an efficient way to serialize and deserialize a map or a set so that it can be saved to a file or sent over the network.

To do so, it requires the user to provide a function object for both serialization and deserialization.

    struct serializer {
        template<typename U>
        void operator()(const U& value);
        void operator()(const CharT* value, std::size_t value_size);
    };

    struct deserializer {
        template<typename U>
        U operator()();
        void operator()(CharT* value_out, std::size_t value_size);
    };

Note that the implementation leaves binary compatibility (endianness, float binary representation, size of int, ...) of the types it serializes/deserializes in the hands of the provided function objects, if compatibility is required.

More details regarding the serialize and deserialize methods can be found in the API.

    #include <cassert>
    #include <cstdint>
    #include <fstream>
    #include <type_traits>
    #include <tsl/htrie_map.h>

    class serializer {
    public:
        serializer(const char* file_name) {
            m_ostream.exceptions(m_ostream.badbit | m_ostream.failbit);
            // Raw bytes are written, so open in binary mode to avoid newline translation.
            m_ostream.open(file_name, std::ios::binary);
        }

        // Arithmetic types are written as their in-memory bytes.
        template<class T,
                 typename std::enable_if<std::is_arithmetic<T>::value>::type* = nullptr>
        void operator()(const T& value) {
            m_ostream.write(reinterpret_cast<const char*>(&value), sizeof(T));
        }

        void operator()(const char* value, std::size_t value_size) {
            m_ostream.write(value, value_size);
        }

    private:
        std::ofstream m_ostream;
    };

    class deserializer {
    public:
        deserializer(const char* file_name) {
            m_istream.exceptions(m_istream.badbit | m_istream.failbit | m_istream.eofbit);
            // Must match the mode used by the serializer.
            m_istream.open(file_name, std::ios::binary);
        }

        template<class T,
                 typename std::enable_if<std::is_arithmetic<T>::value>::type* = nullptr>
        T operator()() {
            T value;
            m_istream.read(reinterpret_cast<char*>(&value), sizeof(T));

            return value;
        }

        void operator()(char* value_out, std::size_t value_size) {
            m_istream.read(value_out, value_size);
        }

    private:
        std::ifstream m_istream;
    };

    int main() {
        const tsl::htrie_map<char, std::int64_t> map = {{"one", 1}, {"two", 2},
                                                        {"three", 3}, {"four", 4}};

        const char* file_name = "htrie_map.data";

        // Serialize to a file; the stream is closed at the end of the scope.
        {
            serializer serial(file_name);
            map.serialize(serial);
        }

        // Deserialize from the file.
        {
            deserializer dserial(file_name);
            auto map_deserialized = tsl::htrie_map<char, std::int64_t>::deserialize(dserial);

            assert(map == map_deserialized);
        }

        // Deserialize again, declaring the maps hash compatible
        // (see the API for the conditions).
        {
            deserializer dserial(file_name);

            const bool hash_compatible = true;
            auto map_deserialized =
                tsl::htrie_map<char, std::int64_t>::deserialize(dserial, hash_compatible);

            assert(map == map_deserialized);
        }
    }

Serialization with Boost Serialization and compression with zlib

It's possible to use a serialization library to avoid some of the boilerplate if the types to serialize are more complex.

The following example uses Boost Serialization with the Boost zlib compression stream to reduce the size of the resulting serialized file.

    #include <boost/archive/binary_iarchive.hpp>
    #include <boost/archive/binary_oarchive.hpp>
    #include <boost/iostreams/filter/zlib.hpp>
    #include <boost/iostreams/filtering_stream.hpp>
    #include <boost/serialization/split_free.hpp>
    #include <boost/serialization/utility.hpp>
    #include <cassert>
    #include <cstdint>
    #include <fstream>
    #include <tsl/htrie_map.h>

    // Forwards every value to the Boost archive.
    template<typename Archive>
    struct serializer {
        Archive& ar;

        template<typename T>
        void operator()(const T& val) { ar & val; }

        template<typename CharT>
        void operator()(const CharT* val, std::size_t val_size) {
            ar.save_binary(reinterpret_cast<const void*>(val), val_size*sizeof(CharT));
        }
    };

    // Reads every value back from the Boost archive.
    template<typename Archive>
    struct deserializer {
        Archive& ar;

        template<typename T>
        T operator()() { T val; ar & val; return val; }

        template<typename CharT>
        void operator()(CharT* val_out, std::size_t val_size) {
            ar.load_binary(reinterpret_cast<void*>(val_out), val_size*sizeof(CharT));
        }
    };

    namespace boost { namespace serialization {
    // Dispatch to the separate save and load functions below.
    template<class Archive, class CharT, class T>
    void serialize(Archive & ar, tsl::htrie_map<CharT, T>& map, const unsigned int version) {
        split_free(ar, map, version);
    }

    template<class Archive, class CharT, class T>
    void save(Archive & ar, const tsl::htrie_map<CharT, T>& map, const unsigned int /*version*/) {
        serializer<Archive> serial{ar};
        map.serialize(serial);
    }

    template<class Archive, class CharT, class T>
    void load(Archive & ar, tsl::htrie_map<CharT, T>& map, const unsigned int /*version*/) {
        deserializer<Archive> deserial{ar};
        map = tsl::htrie_map<CharT, T>::deserialize(deserial);
    }
    }}

    int main() {
        const tsl::htrie_map<char, std::int64_t> map = {{"one", 1}, {"two", 2},
                                                        {"three", 3}, {"four", 4}};

        const char* file_name = "htrie_map.data";

        // Serialize through a zlib-compressing stream.
        {
            std::ofstream ofs;
            ofs.exceptions(ofs.badbit | ofs.failbit);
            ofs.open(file_name, std::ios::binary);

            boost::iostreams::filtering_ostream fo;
            fo.push(boost::iostreams::zlib_compressor());
            fo.push(ofs);

            boost::archive::binary_oarchive oa(fo);

            oa << map;
        }

        // Deserialize through a zlib-decompressing stream.
        {
            std::ifstream ifs;
            ifs.exceptions(ifs.badbit | ifs.failbit | ifs.eofbit);
            ifs.open(file_name, std::ios::binary);

            boost::iostreams::filtering_istream fi;
            fi.push(boost::iostreams::zlib_decompressor());
            fi.push(ifs);

            boost::archive::binary_iarchive ia(fi);

            tsl::htrie_map<char, std::int64_t> map_deserialized;
            ia >> map_deserialized;

            assert(map == map_deserialized);
        }
    }

License

The code is licensed under the MIT license; see the LICENSE file for details.