@mattersmedia/gcs-resumable-upload

Upload a file to Google Cloud Storage with built-in resumable behavior

$ npm install --save gcs-resumable-upload

var upload = require('gcs-resumable-upload');
var fs = require('fs');

fs.createReadStream('titanic.mov')
  .pipe(upload({ bucket: 'legally-owned-movies', file: 'titanic.mov' }))
  .on('finish', function () {
    // Uploaded!
  });

Or from the command line:

$ npm install -g gcs-resumable-upload
$ cat titanic.mov | gcs-upload legally-owned-movies titanic.mov

If you lose your internet connection partway through the operation, or your tough-guy brother slams your laptop shut when he sees what you are uploading, the upload will resume automatically from where you left off the next time you upload to that file.

This module uses ConfigStore to keep a file that is written to when you first start an upload. Each entry is keyed by the name of the file you are uploading to and holds the first 16 KB chunk of data* as well as the unique resumable upload URI. (Resumable uploads are complicated.)

If your upload was interrupted, then the next time you run the code we ask the API how much data it already has and simply discard the bytes coming through the pipe that it has already received.

After the upload completes, the entry in the config file is removed. Done!

* The first 16 KB chunk is stored to check whether you are sending the same data when you resume the upload. If not, a new resumable upload is started with the new data.
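The resume behavior described above can be sketched with Node's built-in stream module. This is a minimal illustration, not the module's actual source: skipBytes and isSameUpload are hypothetical helper names, and the number of bytes to skip is assumed to be whatever the API reports it has already received.

```javascript
var Transform = require('stream').Transform;

// Hypothetical helper: drops the first `bytesToSkip` bytes of a stream and
// passes everything after them through unchanged.
function skipBytes(bytesToSkip) {
  var remaining = bytesToSkip;
  return new Transform({
    transform: function (chunk, encoding, callback) {
      if (remaining >= chunk.length) {
        // The server already has this whole chunk, so discard it.
        remaining -= chunk.length;
        return callback();
      }
      // Keep only the part of the chunk the server has not seen yet.
      var fresh = chunk.slice(remaining);
      remaining = 0;
      callback(null, fresh);
    }
  });
}

// Hypothetical helper: true when the stored first chunk matches the first
// chunk of the data being sent now, i.e. it is safe to resume.
function isSameUpload(storedFirstChunk, incomingFirstChunk) {
  return Buffer.compare(storedFirstChunk, incomingFirstChunk) === 0;
}
```

In this sketch, a resumed upload would pipe the source through skipBytes(n) before sending it on, and would start a fresh upload whenever isSameUpload returns false.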

Oh, right. This module uses google-auto-auth and accepts, as config.authConfig, all of the configuration that module takes to strike up a connection. See authConfig.

upload(config)

Returns: Duplexify

config (object): Configuration object.

config.authClient (GoogleAutoAuth): If you want to re-use an auth client from google-auto-auth, pass an instance here.

config.authConfig (object): See authConfig.

config.bucket (string): The name of the destination bucket.

config.file (string): The name of the destination file.

config.generation (number): This will cause the upload to fail if the current generation of the remote object does not match the one provided here.

config.key (string|buffer): A customer-supplied encryption key.

config.metadata (object): Any metadata you wish to set on the object.

config.metadata.contentLength: Set the length of the file being uploaded.

config.metadata.contentType: Set the content type of the incoming data.

config.offset (number): The starting byte of the upload stream, for resuming an interrupted upload.

config.origin (string): Set an Origin header when creating the resumable upload URI.

config.predefinedAcl (string): Apply a predefined set of access controls to the created file.

Acceptable values are:

authenticatedRead - Object owner gets OWNER access, and allAuthenticatedUsers get READER access.
bucketOwnerFullControl - Object owner gets OWNER access, and project team owners get OWNER access.
bucketOwnerRead - Object owner gets OWNER access, and project team owners get READER access.
private - Object owner gets OWNER access.
projectPrivate - Object owner gets OWNER access, and project team members get access according to their roles.
publicRead - Object owner gets OWNER access, and allUsers get READER access.

config.private (boolean): Make the uploaded file private. (Alias for config.predefinedAcl = 'private')

config.public (boolean): Make the uploaded file public. (Alias for config.predefinedAcl = 'publicRead')

config.uri (string): If you already have a resumable URI from a previously-created resumable upload, just pass it in here and we'll use that.

config.userProject (string): If the bucket being accessed has requesterPays functionality enabled, this can be set to control which project is billed for the access of this file.
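The relationship between predefinedAcl and its two boolean aliases can be illustrated with a small helper. This is only a sketch under stated assumptions: resolvePredefinedAcl is a hypothetical function that is not part of the module, the aliases are assumed to be exposed as config.private and config.public, and the precedence used when several options are set at once is an assumption rather than documented behavior.

```javascript
// The acceptable values listed in the documentation above.
var PREDEFINED_ACLS = [
  'authenticatedRead',
  'bucketOwnerFullControl',
  'bucketOwnerRead',
  'private',
  'projectPrivate',
  'publicRead'
];

// Hypothetical helper, not part of the module's API.
// ASSUMPTION: the boolean aliases override an explicit predefinedAcl, and
// `public` is applied after `private`.
function resolvePredefinedAcl(config) {
  var acl = config.predefinedAcl;
  if (config.private) acl = 'private';
  if (config.public) acl = 'publicRead';
  if (acl && PREDEFINED_ACLS.indexOf(acl) === -1) {
    throw new Error('Unknown predefinedAcl: ' + acl);
  }
  // null means no predefined ACL was requested.
  return acl || null;
}
```

With this helper, { public: true } and { predefinedAcl: 'publicRead' } resolve to the same value, which is the equivalence the alias descriptions state.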

--

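Events

.on('error', function (err) {})

err (Error): Invoked if the authorization failed, the request failed, or the file wasn't successfully uploaded.

.on('response', function (resp, metadata) {})

resp (Object): The HTTP response from request.

metadata (Object): The file's new metadata.

.on('finish', function () {})

The file was uploaded successfully.

upload.createURI(config, callback)

callback(err, resumableURI)

err (Error): Invoked if the authorization failed or the request to start a resumable session failed.

resumableURI (String): The resumable upload session URI.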
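One way to combine the session URI callback with the error and finish events is sketched below. It assumes the module exposes upload.createURI(config, callback), as the upstream gcs-resumable-upload README describes, and that the resulting URI can be passed back in as config.uri. The wrapper name uploadWithSessionUri is hypothetical, and the module is passed in as an argument so the flow can be tried with a stub.

```javascript
// `upload` is the module's export, passed in so the flow can be exercised
// with a stub. ASSUMPTION: it exposes upload.createURI(config, callback)
// and accepts the resulting session URI as config.uri.
function uploadWithSessionUri(upload, source, config, done) {
  upload.createURI(config, function (err, uri) {
    if (err) {
      // Authorization failed or the resumable session could not be started.
      return done(err);
    }
    // Copy the config so the caller's object is not mutated.
    var target = upload(Object.assign({}, config, { uri: uri }));
    target.on('error', done);
    target.on('finish', function () {
      // The file was uploaded successfully.
      done(null, uri);
    });
    source.pipe(target);
  });
}
```

A call might look like uploadWithSessionUri(require('gcs-resumable-upload'), fs.createReadStream('titanic.mov'), { bucket: 'legally-owned-movies', file: 'titanic.mov' }, callback). Keeping the URI around lets a later run pass it in directly instead of creating a new session.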

FAQs

The npm package @mattersmedia/gcs-resumable-upload receives a total of 0 weekly downloads. As such, its popularity is classified as not popular.

We found that @mattersmedia/gcs-resumable-upload has an unhealthy version release cadence and level of project activity, because the last version was released a year ago. It has 4 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

/Research

Malicious npm package impersonates Nodemailer and drains wallets by hijacking crypto transactions across multiple blockchains.

Security News

This episode explores the hard problem of reachability analysis, from static analysis limits to handling dynamic languages and massive dependency trees.

Security News

/Research

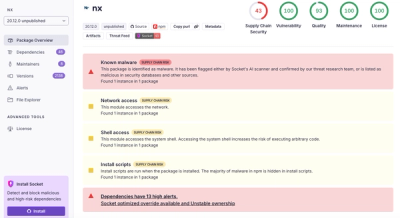

Malicious Nx npm versions stole secrets and wallet info using AI CLI tools; Socket’s AI scanner detected the supply chain attack and flagged the malware.