Product

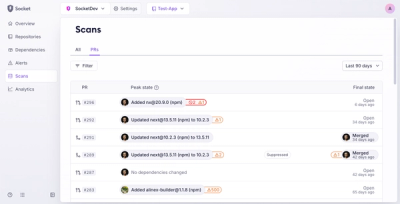

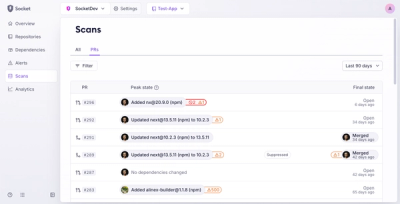

Introducing Pull Request Stories to Help Security Teams Track Supply Chain Risks

Socket’s new Pull Request Stories give security teams clear visibility into dependency risks and outcomes across scanned pull requests.

@mendable/firecrawl-js


The Firecrawl Node SDK is a library that allows you to easily scrape and crawl websites, and output the data in a format ready for use with language models (LLMs). It provides a simple and intuitive interface for interacting with the Firecrawl API.

To install the Firecrawl Node SDK, you can use npm:

npm install @mendable/firecrawl-js

Set the API key as an environment variable named FIRECRAWL_API_KEY, or pass it as the apiKey option when constructing the Firecrawl client.

Here's an example of how to use the SDK:

import Firecrawl from '@mendable/firecrawl-js';

const app = new Firecrawl({ apiKey: 'fc-YOUR_API_KEY' });

// Scrape a website
const scrapeResponse = await app.scrape('https://firecrawl.dev', {
  formats: ['markdown', 'html'],
});
console.log(scrapeResponse);

// Crawl a website (waits for the crawl to finish)
const crawlResponse = await app.crawl('https://firecrawl.dev', {
  limit: 100,
  scrapeOptions: { formats: ['markdown', 'html'] },
  pollInterval: 2,
});
console.log(crawlResponse);
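The key does not have to be hard-coded as in the example above. The following is a minimal sketch of reading it from the FIRECRAWL_API_KEY environment variable and failing early when it is missing. The helper name resolveApiKey is hypothetical and not part of the SDK.

```javascript
// Hypothetical helper (not part of the SDK): pick the API key from an explicit
// value or from the FIRECRAWL_API_KEY environment variable.
function resolveApiKey(explicitKey, env = process.env) {
  const key = explicitKey || env.FIRECRAWL_API_KEY;
  if (typeof key !== 'string' || key.trim() === '') {
    throw new Error(
      'Missing Firecrawl API key: set FIRECRAWL_API_KEY or pass apiKey explicitly.'
    );
  }
  return key.trim();
}
```

With this in place, the client could be created as `new Firecrawl({ apiKey: resolveApiKey() })`, so a missing key produces a clear message before any request is made.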

To scrape a single URL, use the scrape method. It takes the URL as a parameter and returns the scraped data.

const url = 'https://example.com';
const scrapedData = await app.scrape(url);
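Because a failed request surfaces as a thrown exception, a call like the one above can be wrapped in try/catch. The sketch below assumes only that the client exposes an async scrape(url, options) method, as the app instance does. The wrapper name scrapeOrNull is hypothetical.

```javascript
// Hypothetical wrapper: `client` is any object with an async scrape(url, options)
// method, such as the `app` instance created earlier.
async function scrapeOrNull(client, url, options = {}) {
  try {
    return await client.scrape(url, options);
  } catch (err) {
    // Log and swallow the failure so one bad URL does not abort the caller.
    console.error(`Scrape failed for ${url}: ${err.message}`);
    return null;
  }
}
```

Returning null keeps the call site simple. Callers that need the error details may prefer to rethrow or return the error object instead.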

To crawl a website, use the crawl method. It takes the starting URL and optional parameters, including limits and per‑page scrapeOptions.

const crawlResponse = await app.crawl('https://firecrawl.dev', {
  limit: 100,
  scrapeOptions: { formats: ['markdown', 'html'] },
});
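Once a crawl finishes, the pages usually need to be pulled out of the response. The sketch below assumes the response has a data array whose entries carry a markdown string and a metadata.sourceURL field. That shape is an assumption and should be checked against the response you actually receive. The helper name markdownByUrl is hypothetical.

```javascript
// Assumed response shape (verify against a real response):
//   { status, data: [{ markdown, metadata: { sourceURL } }, ...] }
function markdownByUrl(crawlResponse) {
  const pages = new Map();
  for (const doc of crawlResponse?.data ?? []) {
    const url = doc?.metadata?.sourceURL;
    // Skip entries that lack a source URL or markdown content.
    if (url && typeof doc.markdown === 'string') {
      pages.set(url, doc.markdown);
    }
  }
  return pages;
}
```

The function tolerates missing fields, so it returns an empty Map rather than throwing if the shape differs.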

To start an asynchronous crawl, use startCrawl. It returns a job ID you can poll with getCrawlStatus.

const start = await app.startCrawl('https://mendable.ai', {
  excludePaths: ['blog/*'],
  limit: 5,
});

To check the status of a crawl job, use the getCrawlStatus method. It takes the job ID as a parameter and returns the current status.

// start is the value returned by startCrawl above
const status = await app.getCrawlStatus(start.id);
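A single status check rarely suffices, so the call is typically repeated until the job ends. The following sketch polls getCrawlStatus with a fixed interval and an overall timeout. It assumes the returned object has a status field that becomes 'completed' on success and 'failed' or 'cancelled' otherwise. Those values, the helper name waitForCrawl, and its options are assumptions.

```javascript
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Assumption: status.status is 'completed' on success and 'failed' or
// 'cancelled' when the job ends unsuccessfully.
async function waitForCrawl(client, id, { intervalMs = 2000, timeoutMs = 300000 } = {}) {
  const deadline = Date.now() + timeoutMs;
  while (true) {
    const status = await client.getCrawlStatus(id);
    if (status.status === 'completed') return status;
    if (status.status === 'failed' || status.status === 'cancelled') {
      throw new Error(`Crawl ${id} ended with status "${status.status}"`);
    }
    // Give up if the next poll would land after the deadline.
    if (Date.now() + intervalMs > deadline) {
      throw new Error(`Timed out waiting for crawl ${id}`);
    }
    await sleep(intervalMs);
  }
}
```

It could be called as `await waitForCrawl(app, start.id)` after startCrawl. The watcher described later in this document is an alternative that avoids writing the loop by hand.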

Use extract with a prompt and schema. Zod schemas are supported directly.

import Firecrawl from '@mendable/firecrawl-js';
import { z } from 'zod';

const app = new Firecrawl({ apiKey: 'fc-YOUR_API_KEY' });

const schema = z.object({
  title: z.string(),
});

const result = await app.extract({
  urls: ['https://firecrawl.dev'],
  prompt: 'Extract the page title',
  schema,
  showSources: true,
});

console.log(result.data);
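Since extraction is model-driven, it can be worth validating the returned data against the same schema before using it. The sketch below relies on Zod's safeParse method and assumes the extracted object is found at result.data, as the console.log above suggests. The helper name parseExtracted is hypothetical.

```javascript
// `schema` is any Zod-style schema exposing safeParse(); `result` is the value
// returned by extract. Assumes the extracted object lives at result.data.
function parseExtracted(schema, result) {
  const parsed = schema.safeParse(result?.data);
  if (!parsed.success) {
    throw new Error(`Extracted data did not match the schema: ${parsed.error.message}`);
  }
  return parsed.data;
}
```

For the example above, `const { title } = parseExtracted(schema, result);` would yield a value that has been checked to be a string.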

Use map to generate a list of URLs from a website. Options let you customize the mapping process, including whether to utilize the sitemap or include subdomains.

const mapResult = await app.map('https://example.com');

console.log(mapResult);
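The mapped URLs are often filtered before being passed on to scrape or crawl. The sketch below keeps only links under a given path prefix and removes duplicates. It assumes the result exposes a links array containing either URL strings or objects with a url field. That shape is an assumption, and the helper name linksUnderPath is hypothetical.

```javascript
// Assumption: mapResult.links is an array of URL strings or of objects
// with a `url` field. Verify against the value you actually receive.
function linksUnderPath(mapResult, pathPrefix) {
  const seen = new Set();
  for (const link of mapResult?.links ?? []) {
    const raw = typeof link === 'string' ? link : link?.url;
    if (!raw) continue;
    let parsed;
    try {
      parsed = new URL(raw);
    } catch {
      continue; // skip entries that are not absolute URLs
    }
    if (parsed.pathname.startsWith(pathPrefix)) seen.add(parsed.href);
  }
  return [...seen];
}
```

For instance, `linksUnderPath(mapResult, '/docs/')` would return the unique documentation URLs found on the site.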

To receive real‑time updates, start a crawl and attach a watcher.

const start = await app.startCrawl('https://mendable.ai', { excludePaths: ['blog/*'], limit: 5 });

const watch = app.watcher(start.id, { kind: 'crawl', pollInterval: 2 });

watch.on('document', (doc) => {
  console.log('DOC', doc);
});

watch.on('error', (err) => {
  console.error('ERR', err);
});

watch.on('done', (state) => {
  console.log('DONE', state.status);
});

await watch.start();
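When the caller simply wants all documents once the job ends, the event handlers above can be folded into a single promise. The sketch below uses only the on and start methods shown in the example and the 'document', 'error', and 'done' events. The helper name collectDocuments is hypothetical, and the exact payload of the 'error' event is not assumed.

```javascript
// `watch` is any object with on(event, handler) and start(), such as the
// watcher created above. Resolves on 'done', rejects on 'error'.
function collectDocuments(watch) {
  return new Promise((resolve, reject) => {
    const docs = [];
    watch.on('document', (doc) => docs.push(doc));
    watch.on('error', (err) => reject(err instanceof Error ? err : new Error(String(err))));
    watch.on('done', (state) => resolve({ status: state.status, docs }));
    // start() may return a promise; treat a rejected start as a failure too.
    Promise.resolve(watch.start()).catch(reject);
  });
}
```

It would be used as `const { status, docs } = await collectDocuments(watch);` in place of the separate handlers and the final `await watch.start()`.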

To batch scrape multiple URLs, use the batchScrape method.

const batchScrapeResponse = await app.batchScrape(['https://firecrawl.dev', 'https://mendable.ai'], {
  formats: ['markdown', 'html'],
});
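URL lists gathered from other sources often contain duplicates or malformed entries, which waste requests in a batch. The sketch below is a hypothetical pre-processing step, not part of the SDK, that keeps only valid http(s) URLs, drops fragments, and removes duplicates using the standard URL class.

```javascript
// Hypothetical pre-processing step (not part of the SDK): normalize and
// de-duplicate a URL list before handing it to batchScrape.
function prepareUrls(urls) {
  const unique = new Set();
  for (const raw of urls) {
    let parsed;
    try {
      parsed = new URL(String(raw).trim());
    } catch {
      continue; // not a valid absolute URL
    }
    if (parsed.protocol !== 'http:' && parsed.protocol !== 'https:') continue;
    parsed.hash = ''; // fragments do not change the fetched page
    unique.add(parsed.href);
  }
  return [...unique];
}
```

The cleaned list can then be passed directly, for example `app.batchScrape(prepareUrls(candidates), { formats: ['markdown'] })`, where candidates is your own input array.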

To start an asynchronous batch scrape, use startBatchScrape and poll with getBatchScrapeStatus.

const asyncBatchScrapeResult = await app.startBatchScrape(['https://firecrawl.dev', 'https://mendable.ai'], {
  formats: ['markdown', 'html'],
});
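While polling with getBatchScrapeStatus, a one-line progress summary can be handy for logs. The sketch below assumes the status object carries a status string plus numeric completed and total fields. Those field names are an assumption to verify against a real response, and the helper name describeProgress is hypothetical.

```javascript
// Assumption: the status object has a `status` string and numeric
// `completed` and `total` fields. Missing fields fall back to defaults.
function describeProgress(status) {
  const completed = Number(status?.completed ?? 0);
  const total = Number(status?.total ?? 0);
  const percent = total > 0 ? Math.round((completed / total) * 100) : 0;
  return `${status?.status ?? 'unknown'}: ${completed}/${total} (${percent}%)`;
}
```

A polling loop might log `describeProgress(await app.getBatchScrapeStatus(asyncBatchScrapeResult.id))` on each iteration.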

To use batch scrape with real‑time updates, start the job and watch it using the watcher.

const start = await app.startBatchScrape(['https://firecrawl.dev', 'https://mendable.ai'], { formats: ['markdown', 'html'] });

const watch = app.watcher(start.id, { kind: 'batch', pollInterval: 2 });

watch.on('document', (doc) => {
  console.log('DOC', doc);
});

watch.on('error', (err) => {
  console.error('ERR', err);
});

watch.on('done', (state) => {
  console.log('DONE', state.status);
});

await watch.start();

The feature‑frozen v1 is still available under app.v1 with the original method names.

import Firecrawl from '@mendable/firecrawl-js';

const app = new Firecrawl({ apiKey: 'fc-YOUR_API_KEY' });

// v1 methods (feature‑frozen)
const scrapeV1 = await app.v1.scrapeUrl('https://firecrawl.dev', { formats: ['markdown', 'html'] });
const crawlV1 = await app.v1.crawlUrl('https://firecrawl.dev', { limit: 100 });
const mapV1 = await app.v1.mapUrl('https://firecrawl.dev');

The SDK handles errors returned by the Firecrawl API and throws an exception with a descriptive error message when a request fails. The examples above omit error handling for brevity; wrap SDK calls in try/catch blocks to handle these exceptions.
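Transient failures such as timeouts or rate limits are often worth retrying rather than reporting immediately. The sketch below wraps any async operation in try/catch and retries it with exponential backoff. The helper name withRetry and its options are hypothetical. It retries on every error, so a real application may want to inspect the error and retry only those it considers transient.

```javascript
const delay = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Hypothetical helper: run `operation` up to `attempts` times, doubling the
// wait between tries, and rethrow the last error if every attempt fails.
async function withRetry(operation, { attempts = 3, baseDelayMs = 500 } = {}) {
  let lastError;
  for (let attempt = 1; attempt <= attempts; attempt += 1) {
    try {
      return await operation();
    } catch (err) {
      lastError = err;
      if (attempt < attempts) {
        await delay(baseDelayMs * 2 ** (attempt - 1));
      }
    }
  }
  throw lastError;
}
```

A call such as `await withRetry(() => app.scrape('https://firecrawl.dev'))` would then make up to three attempts before the exception reaches the caller.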

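The Firecrawl Node SDK is licensed under the MIT License. This means you are free to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the SDK, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.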

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

Please note that while this SDK is MIT licensed, it is part of a larger project which may be under different licensing terms. Always refer to the license information in the root directory of the main project for overall licensing details.

FAQs

JavaScript SDK for Firecrawl API

The npm package @mendable/firecrawl-js receives a total of 127,481 weekly downloads, which places it in the "popular" category.

We found that @mendable/firecrawl-js demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 4 open source maintainers collaborating on the project.

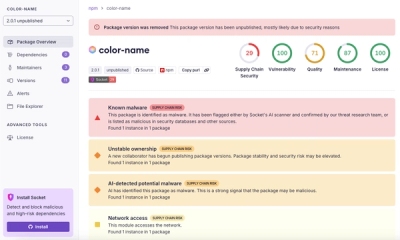

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Product

Socket’s new Pull Request Stories give security teams clear visibility into dependency risks and outcomes across scanned pull requests.

Research

/Security News

npm author Qix’s account was compromised, with malicious versions of popular packages like chalk-template, color-convert, and strip-ansi published.

Research

Four npm packages disguised as cryptographic tools steal developer credentials and send them to attacker-controlled Telegram infrastructure.