
aws-delivlib


A fabulous library for defining continuous pipelines for building, testing and releasing code libraries.

aws-delivlib is a fabulous library for defining continuous pipelines for building, testing and publishing code libraries through AWS CodeBuild and AWS CodePipeline.

aws-delivlib is used by the AWS Cloud Development Kit and was designed to support simultaneous delivery of the AWS CDK in multiple programming languages packaged via jsii.

A delivlib pipeline consists of the following sequential stages. Each stage will execute all tasks concurrently:

+-----------+     +-----------+     +-----------+     +----------------+
|  Source   +---->+   Build   +---->+   Test    +---->+    Publish     |
+-----------+     +-----------+     +-----+-----+     +-------+--------+
                                          |                   |
                                          v                   v
                                    +-----+-----+     +-------+-------+
                                    |   Test1   |     |      npm      |
                                    +-----------+     +---------------+
                                    |   Test2   |     |     NuGet     |
                                    +-----------+     +---------------+
                                    |   Test3   |     | Maven Central |
                                    +-----------+     +---------------+
                                    |    ...    |     | GitHub Pages  |
                                    +-----------+     +---------------+
                                                      |GitHub Releases|
                                                      +---------------+

The following sections describe each stage and the configuration options available:

To install, use npm / yarn:

$ npm i aws-delivlib

or:

$ yarn add aws-delivlib

and import the library to your project:

import delivlib = require('aws-delivlib');

The next step is to add a pipeline to your app. When you define a pipeline, the minimum requirement is to specify the source repository. All other settings are optional.

const pipeline = new delivlib.Pipeline(this, 'MyPipeline', {

// options

});

The following sections will describe the various options available in your pipeline.

The only required option when defining a pipeline is to specify a source repository for your project.

repo: Source Repository (required)

The repo option specifies the source code repository for your project. You can use either CodeCommit or GitHub.

To use an existing repository:

import codecommit = require('@aws-cdk/aws-codecommit');

// import an existing repository
const myRepo = codecommit.Repository.import(this, 'TestRepo', {
  repositoryName: 'delivlib-test-repo'
});

// ...or define a new repository instead (probably not what you want)
// const myRepo = new codecommit.Repository(this, 'TestRepo');

// create a delivlib pipeline associated with this CodeCommit repo

new delivlib.Pipeline(this, 'MyPipeline', {

repo: new delivlib.CodeCommitRepo(myRepo),

// ...

});

To connect to GitHub, you will need to store a Personal GitHub Access Token as an SSM Parameter and provide the name of the SSM parameter.

new delivlib.Pipeline(this, 'MyPipeline', {

repo: new delivlib.GitHubRepo('<github-token-ssm-parameter-name>', 'myaccount/myrepo'),

// ...

})

branch: Source Control Branch (optional)

The branch option can be used to specify the git branch to build from. The default is master.

new delivlib.Pipeline(this, 'MyPipeline', {

repo: // ...

branch: 'dev',

})

The second stage of a pipeline is to build your code. The following options allow you to customize your build environment and scripts:

buildSpec: Build Script (optional)

The default behavior will use the buildspec.yaml file from the root of your source repository to determine the build steps.

See the buildspec reference documentation in the CodeBuild User Guide.

Note that if you don't have an "artifacts" section in your buildspec, you won't be able to run any tests against the build outputs or publish them to package managers.

If you wish, you can use the buildSpec option, in which case CodeBuild will not

use the checked-in buildspec.yaml:

new delivlib.Pipeline(this, 'MyPipeline', {

// ...

buildSpec: {

version: '0.2',

phases: {

build: {

commands: [

'echo "Hello, world!"'

]

}

},

artifacts: {

files: [ '**/*' ],

'base-directory': 'dist'

}

}

});
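Because tests and publishers depend on the "artifacts" section, it can be worth checking a buildspec object for it before deploying. The following is a minimal sketch and not part of delivlib: the BuildSpec type and the hasArtifacts helper are hypothetical names, and the type models only the fields used in the example above.

```typescript
// Hypothetical, simplified shape of a buildspec object (not delivlib's own type).
interface BuildSpec {
  version: string;
  phases: { [phase: string]: { commands: string[] } };
  artifacts?: { files: string[]; 'base-directory'?: string };
}

// Returns true when the buildspec declares at least one artifact file pattern.
function hasArtifacts(spec: BuildSpec): boolean {
  return spec.artifacts !== undefined && spec.artifacts.files.length > 0;
}

// Same content as the buildSpec example above.
const withArtifacts: BuildSpec = {
  version: '0.2',
  phases: { build: { commands: ['echo "Hello, world!"'] } },
  artifacts: { files: ['**/*'], 'base-directory': 'dist' },
};

// A buildspec that builds but emits nothing for tests or publishers to use.
const withoutArtifacts: BuildSpec = {
  version: '0.2',
  phases: { build: { commands: ['echo "Hello, world!"'] } },
};

const okToTest = hasArtifacts(withArtifacts);       // true
const nothingToTest = hasArtifacts(withoutArtifacts); // false
```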

buildImage: Build container image (optional)

The Docker image to use for the build container.

Default: the default image (if none is specified) is a custom Docker image which is bundled together with the delivlib library and called "superchain". It is an environment that supports building libraries that target multiple programming languages.

The default image is a union of the following official AWS CodeBuild images:

You can use the AWS CodeBuild API to specify any Linux/Windows Docker image for your build. Here are some examples:

codebuild.LinuxBuildImage.fromDockerHub('golang:1.11') - use an image from Docker Hub

codebuild.LinuxBuildImage.UBUNTU_14_04_OPEN_JDK_9 - OpenJDK 9 available from AWS CodeBuild

codebuild.WindowsBuildImage.WIN_SERVER_CORE_2016_BASE - Windows Server Core 2016 available from AWS CodeBuild

codebuild.LinuxBuildImage.fromEcrRepository(myRepo) - use an image from an ECR repository

env: Build environment variables (optional)

Allows adding environment variables to the build environment:

new delivlib.Pipeline(this, 'MyPipeline', {

// ...

env: {

FOO: 'bar'

}

});

computeType: size of the AWS CodeBuild compute capacity (default: SMALL)

privileged: run in privileged mode (default: false)

The third stage of a delivlib pipeline is to execute tests. Tests are executed in parallel only after a successful build and can access build artifacts as defined in your buildspec.yaml.

The pipeline.addTest method can be used to add tests to your pipeline. Test

scripts are packaged as part of your delivlib CDK app.

pipeline.addTest('MyTest', {

platform: TestablePlatform.LinuxUbuntu, // or `TestablePlatform.Windows`

testDirectory: 'path/to/local/directory/with/tests'

});

testDirectory refers to a directory on the local file system which must

contain an entry-point file (either test.ps1 or test.sh). Preferably make

this path relative to the current file using path.join(__dirname, ...).

The test container will be populated with the build output artifacts as well as all the files from the test directory.

Then, the entry-point will be executed. If it fails, the test failed.
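The entry-point requirement can be checked locally before deploying. The sketch below is illustrative only and is not delivlib code: findEntryPoints is a hypothetical helper that takes a list of file names (for example, the result of listing the test directory) and reports which of the two entry-point names from the text are present. Which file is picked on which platform is not stated above, so the sketch does not assume it.

```typescript
// Entry-point file names mentioned in the text above.
const ENTRY_POINTS: string[] = ['test.sh', 'test.ps1'];

// Returns the entry-point files found among the given file names.
function findEntryPoints(fileNames: string[]): string[] {
  return ENTRY_POINTS.filter((name) => fileNames.indexOf(name) !== -1);
}

// A directory is usable as testDirectory only if it has an entry-point.
function hasEntryPoint(fileNames: string[]): boolean {
  return findEntryPoints(fileNames).length > 0;
}

const good = hasEntryPoint(['README.md', 'test.sh', 'fixtures.json']);
const bad = hasEntryPoint(['README.md', 'run-tests.sh']);
```

Here good is true and bad is false, because run-tests.sh is not one of the recognized entry-point names.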

The last step of the pipeline is to publish your artifacts to one or more package managers. Delivlib is shipped with a bunch of built-in publishing tasks, but you could add your own if you like.

To add a publishing target to your pipeline, you can either use the pipeline.addPublish(publisher) method or one of the built-in pipeline.publishToXxx methods. The first option is useful if you wish to define your own publisher, which is a class that implements the delivlib.IPublisher interface.

Built-in publishers are designed to be idempotent: if the artifacts version is

already published to the package manager, the publisher will succeed. This

means that in order to publish a new version, all you need to do is bump the

version of your package artifact (e.g. change package.json) and the publisher

will kick in.

You can use the dryRun: true option when creating a publisher to tell the

publisher to do as much as it can without actually making the package publicly

available. This is useful for testing.
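The idempotency and dry-run behavior described above can be pictured with a small model. This is a sketch under stated assumptions, not delivlib's implementation: FakeRegistry, PublishResult and publish are hypothetical names, and the registry is an in-memory stand-in for a real package manager.

```typescript
// Hypothetical in-memory stand-in for a package registry.
class FakeRegistry {
  private readonly published: { [key: string]: boolean } = {};

  has(name: string, version: string): boolean {
    return this.published[`${name}@${version}`] === true;
  }

  add(name: string, version: string): void {
    this.published[`${name}@${version}`] = true;
  }
}

type PublishResult = 'published' | 'already-published' | 'dry-run';

function publish(registry: FakeRegistry, name: string, version: string, dryRun: boolean = false): PublishResult {
  if (registry.has(name, version)) {
    // Idempotent: an already-published version counts as success.
    return 'already-published';
  }
  if (dryRun) {
    // Everything was checked, but nothing is made publicly available.
    return 'dry-run';
  }
  registry.add(name, version);
  return 'published';
}

const registry = new FakeRegistry();
const first = publish(registry, 'my-lib', '1.0.0', true); // 'dry-run'
const second = publish(registry, 'my-lib', '1.0.0');      // 'published'
const third = publish(registry, 'my-lib', '1.0.0');       // 'already-published'
const bumped = publish(registry, 'my-lib', '1.1.0');      // 'published'
```

Re-running the pipeline without a version bump ends in 'already-published', which is why bumping the version is all that is needed to trigger a new release.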

The following sections describe how to use each one of the built-in publishers.

The method pipeline.publishToNpm will add a publisher to your pipeline which

can publish JavaScript modules to npmjs.

The publisher will search for js/*.tgz in your build artifacts and will npm publish each of them.

To create npm tarballs, you can use npm pack as part of your build and emit

them to the js/ directory in your build artifacts. The version of the module

is deduced from the name of the tarball.
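As an illustration of deducing a version from a tarball name, the sketch below parses file names of the form name-version.tgz, which is the naming npm pack produces. It is an assumption about how such parsing could be done, not the publisher's actual code; parseTarballName and the regular expression are hypothetical.

```typescript
interface TarballInfo {
  name: string;
  version: string;
}

// Matches "<name>-<major>.<minor>.<patch>[-prerelease][+build].tgz".
const TARBALL_PATTERN = /^(.+)-(\d+\.\d+\.\d+(?:-[0-9A-Za-z.-]+)?(?:\+[0-9A-Za-z.-]+)?)\.tgz$/;

// Returns the module name and version encoded in a tarball file name,
// or undefined when the file name does not follow the expected pattern.
function parseTarballName(fileName: string): TarballInfo | undefined {
  const match = TARBALL_PATTERN.exec(fileName);
  if (!match) {
    return undefined;
  }
  return { name: match[1], version: match[2] };
}

const release = parseTarballName('aws-delivlib-2.0.0.tgz');
const prerelease = parseTarballName('my-lib-1.2.3-beta.1.tgz');
const notATarball = parseTarballName('README.md');
```

With these inputs, release is { name: 'aws-delivlib', version: '2.0.0' }, prerelease has version '1.2.3-beta.1', and notATarball is undefined.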

To use this publisher, you will first need to store an npmjs publishing token in AWS Secrets Manager and supply the secret ARN when you add the publisher.

pipeline.publishToNpm({

npmTokenSecret: { secretArn: 'my-npm-token-secret-arn' }

});

This publisher can publish .NET NuGet packages to nuget.org.

The publisher will search for dotnet/**/*.nuget in your build artifacts and will publish each package to NuGet. To create .nupkg files, see Creating NuGet Packages.

Make sure you output the artifacts under the dotnet/ directory.

To use this publisher, you will first need to store a NuGet API Key with "Push" permissions in AWS Secrets Manager and supply the secret ARN when you add the publisher.

Use pipeline.publishToNuGet to add this publisher to your pipeline:

pipeline.publishToNuGet({

nugetApiKeySecret: { secretArn: 'my-nuget-token-secret-arn' }

});

Important: Limitations in the mono tools restrict the hash algorithms that

can be used in the signature to SHA-1. This limitation will be removed in the

future.

You can enable digital signatures for the .dll files enclosed in your NuGet

packages. In order to do so, you need to procure a Code-Signing Certificate

(also known as a Software Publisher Certificate, or SPC). If you don't have one

yet, you can refer to

Obtaining a new Code Signing Certificate

for a way to create a new certificate entirely in the Cloud.

In order to enable code signature, change the way the NuGet publisher is added

by adding an ICodeSigningCertificate for the codeSign key (it could be a

CodeSigningCertificate construct, or you may bring your own implementation if

you wish to use a pre-existing certificate):

pipeline.publishToNuGet({

nugetApiKeySecret: { secretArn: 'my-nuget-token-secret-arn' },

codeSign: codeSigningCertificate

});

If you want to create a new certificate, the CodeSigningCertificate construct

will provision a new RSA Private Key and emit a Certificate Signing Request in

an Output so you can pass it to your Certificate Authority (CA) of choice:

Add a CodeSigningCertificate to your stack:

new delivlib.CodeSigningCertificate(stack, 'CodeSigningCertificate', {

distinguishedName: {

commonName: '<a name your customers would recognize>',

emailAddress: '<your@email.address>',

country: '<two-letter ISO country code>',

stateOrProvince: '<state or province>',

locality: '<city>',

organizationName: '<name of your company or organization>',

organizationalUnitName: '<name of your department within the organization>',

}

});

$ cdk deploy $stack_name

...

Outputs:

$stack_name.CodeSigningCertificateXXXXXX = -----BEGIN CERTIFICATE REQUEST-----

...

-----END CERTIFICATE REQUEST-----

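Forward the Certificate Signing Request (the value of the stack output that starts with -----BEGIN CERTIFICATE REQUEST----- and ends with -----END CERTIFICATE REQUEST-----) to a Certificate Authority, so they can provide you with a signed certificate.

Then update your stack with the signed certificate. The example below assumes the certificate is stored in a file named certificate.pem that is in the same folder as the file that uses the code: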

// Import utilities at top of file:

import fs = require('fs');

import path = require('path');

// ...

new delivlib.CodeSigningCertificate(stack, 'CodeSigningCertificate', {

distinguishedName: {

commonName: '<a name your customers would recognize>',

emailAddress: '<your@email.address>',

country: '<two-letter ISO country code>',

stateOrProvince: '<state or province>',

locality: '<city>',

organizationName: '<name of your company or organization>',

organizationalUnitName: '<name of your department within the organization>',

},

// Add the signed certificate

pemCertificate: fs.readFileSync(path.join(__dirname, 'certificate.pem'))

});

$ cdk deploy $stackName

This publisher can publish Java packages to Maven Central.

This publisher expects to find a local maven repository under the java/

directory in your build output artifacts. You can create one using the

altDeploymentRepository option for mvn deploy (this assumes dist is the

root of your artifacts tree):

$ mvn deploy -D altDeploymentRepository=local::default::file://${PWD}/dist/java

Use pipeline.publishToMaven to add this publisher to your pipeline:

pipeline.publishToMaven({

mavenLoginSecret: { secretArn: 'my-maven-credentials-secret-arn' },

signingKey: mavenSigningKey,

stagingProfileId: '11a33451234521'

});

In order to configure the Maven publisher, you will need at least three pieces of information:

Maven Central credentials (mavenLoginSecret) stored in AWS Secrets Manager

A GPG signing key (signingKey) to sign your Maven packages

The staging profile ID (stagingProfileId) assigned to your account in Maven Central.

The following sections will describe how to obtain this information.

Since Maven Central requires that you sign your packages, you will need to create a GPG key pair and publish its public key to a well-known server.

This library includes a GPG key construct:

const mavenSigningKey = new delivlib.SigningKey(this, 'MavenCodeSign', {

email: 'your-email@domain.com',

identity: 'your-identity',

secretName: 'maven-code-sign' // secrets manager secret name

});

After you've deployed your stack once, you can go to the SSM Parameter Store console and copy the public key from the new parameter created by your stack under the specified secret name. Then, you should paste this key to any of the supported key servers (recommended: https://keyserver.ubuntu.com).

In order to publish to Maven Central, you'll need to follow the instructions in Maven Central's OSSRH Guide and create a Sonatype account and project via JIRA. Then store the account's credentials in an AWS Secrets Manager secret with username and password key/value fields that correspond to your account's credentials.

After you've obtained a Sonatype account and Maven Central project, the staging profile ID appears at the end of a URL of the following form: https://oss.sonatype.org/#stagingProfiles;11a33451234521

This is the value you should assign to the stagingProfileId option.
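If you copy the whole URL rather than just the ID, the ID can be pulled out of the fragment. This is a small convenience sketch, not something delivlib provides: stagingProfileIdFromUrl is a hypothetical helper, and it assumes the ID is the alphanumeric string after "#stagingProfiles;" as in the example URL.

```typescript
// Extracts the staging profile ID from a URL of the form
// https://oss.sonatype.org/#stagingProfiles;<id>
function stagingProfileIdFromUrl(url: string): string | undefined {
  const match = /#stagingProfiles;([0-9A-Za-z]+)$/.exec(url);
  return match ? match[1] : undefined;
}

const profileId = stagingProfileIdFromUrl('https://oss.sonatype.org/#stagingProfiles;11a33451234521');
const missing = stagingProfileIdFromUrl('https://oss.sonatype.org/');
```

Here profileId is '11a33451234521' and missing is undefined.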

This publisher can package all your build artifacts, sign them and publish them to the "Releases" section of a GitHub project.

This publisher relies on two files to produce the release:

build.json: a manifest that contains metadata about the release.

CHANGELOG.md (optional): the changelog of your project, from which the release notes are extracted. If not provided, no release notes are added to the release.

The file build.json is read from the root of your artifact tree. It should include the following fields:

{

"name": "<project name>",

"version": "<project version>",

"commit": "<sha of commit>"

}
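A build step that writes build.json can validate it before the artifacts are handed to the publisher. The following is a sketch only: BuildManifest and parseBuildManifest are hypothetical names, and the check covers just the three fields listed above.

```typescript
// The three fields the text above lists for build.json.
interface BuildManifest {
  name: string;
  version: string;
  commit: string;
}

// Parses the text of a build.json file and verifies that the expected
// fields are present and non-empty strings.
function parseBuildManifest(json: string): BuildManifest {
  const data = JSON.parse(json);
  if (typeof data !== 'object' || data === null) {
    throw new Error('build.json: expected a JSON object');
  }
  for (const field of ['name', 'version', 'commit']) {
    if (typeof data[field] !== 'string' || data[field].length === 0) {
      throw new Error(`build.json: missing or empty "${field}" field`);
    }
  }
  return { name: data.name, version: data.version, commit: data.commit };
}

const manifest = parseBuildManifest(
  '{ "name": "my-lib", "version": "1.2.3", "commit": "0123abc" }'
);
```

A manifest with a missing field, such as '{ "name": "my-lib" }', makes parseBuildManifest throw instead of returning a partial object.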

This publisher does the following:

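Packages the build artifacts into an archive named ${name}-${version}.zip.

Signs the archive and stores the signature as ${name}-${version}.zip.sig.

Checks whether there is already a tag named v${version} in the GitHub repository. If there is, bail out successfully.

Reads the CHANGELOG.md file, if there is one, and extracts the release notes for ${version} (uses changelog-parser).

Creates a release named v${version} and tags the specified ${commit} with the release notes from the changelog.

To add a GitHub release publisher to your pipeline, use the pipeline.publishToGitHub method: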

pipeline.publishToGitHub({

githubRepo: targetRepository,

signingKey: releaseSigningKey

});

The publisher requires the following information:

The target GitHub repository (githubRepo): see instructions on how to connect to a GitHub repository. It doesn't have to be the same repository as the source repository, but it can be.

A signing key (signingKey): a delivlib.SigningKey object used to sign the zip bundle. Make sure to publish the public key to a well-known server so your users can validate the authenticity of your release (see GPG Signing Key for details on how to create a signing key pair and extract its public key). You can either use

This publisher allows you to publish versioned static website content to GitHub Pages.

The publisher commits the entire contents of the docs/ directory into the root of the specified GitHub repository, and also under the versions/${version}/ directory of the repo (which allows users to access old versions of the docs if they wish).

NOTE: static website content can grow big. Therefore, this publisher will always force-push to the branch without history (history is preserved via the versions/ directory). Make sure you don't protect this branch against force-pushing, otherwise the publisher will fail.

This publisher depends on the following artifacts:

build.json: build manifest (see schema above)

docs/**: the static website contents

This is how this publisher works:

Reads the version field from build.json

Clones the gh-pages branch of the target repository to a local working directory

Copies docs/** both to versions/${version} and to / of the working copy.

Pushes the result to the gh-pages branch on GitHub

NOTE: if docs/ contains a fully rendered static website, you should also include a .nojekyll file to bypass Jekyll rendering.
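The double copy of docs/** can be pictured as a mapping from each artifact file to two paths in the working copy. The sketch below only computes those paths and does not touch the file system or git; pagesDestinations is a hypothetical helper, not part of delivlib.

```typescript
// For a file under docs/, returns its two destinations in the working copy:
// one under versions/<version>/ and one relative to the repository root.
function pagesDestinations(docFile: string, version: string): string[] {
  const prefix = 'docs/';
  if (docFile.indexOf(prefix) !== 0) {
    throw new Error(`expected a path under ${prefix}, got ${docFile}`);
  }
  const relative = docFile.slice(prefix.length);
  return [`versions/${version}/${relative}`, relative];
}

const destinations = pagesDestinations('docs/api/index.html', '1.2.3');
// destinations[0] is 'versions/1.2.3/api/index.html'
// destinations[1] is 'api/index.html'
```

Keeping a copy under versions/ is what lets older documentation remain reachable even though the branch itself carries no history.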

To add this publisher to your pipeline, use the pipeline.publishToGitHubPages method:

pipeline.publishToGitHubPages({

githubRepo,

sshKeySecret: { secretArn: 'github-ssh-key-secret-arn' },

commitEmail: 'foo@bar.com',

commitUsername: 'foobar',

branch: 'gh-pages' // default

});

In order to publish to GitHub Pages, you will need the following pieces of information:

The target GitHub repository (githubRepo). See instructions on how to connect to a GitHub repository. It doesn't have to be the same repository as the source repository, but it can be.

An SSH key (sshKeySecret) for pushing to that repository, stored in AWS Secrets Manager and configured in your GitHub repository as a deploy key with write permissions.

The commit email (commitEmail) and username (commitUsername).

To create an ssh deploy key for your repository, store the private key in AWS Secrets Manager and pass the secret through the sshKeySecret option.

See the contribution guide for details on how to submit issues, pull requests, set up a development environment and publish new releases of this library.

This library is licensed under the Apache 2.0 License.

2.0.0 (2019-02-11)

ICredentialPair now conveys ssm.IStringParameter and secretsManager.ISecret instead of the ARNs and related attributes of those.

FAQs

A fabulous library for defining continuous pipelines for building, testing and releasing code libraries.

The npm package aws-delivlib receives a total of 928 weekly downloads. As such, aws-delivlib popularity was classified as not popular.

We found that aws-delivlib demonstrated a healthy version release cadence and project activity because the last version was released less than a year ago. It has 0 open source maintainers collaborating on the project.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

PyPI now allows maintainers to archive projects, improving security and helping users make informed decisions about their dependencies.

Research

Security News

Malicious npm package postcss-optimizer delivers BeaverTail malware, targeting developer systems; similarities to past campaigns suggest a North Korean connection.

Security News

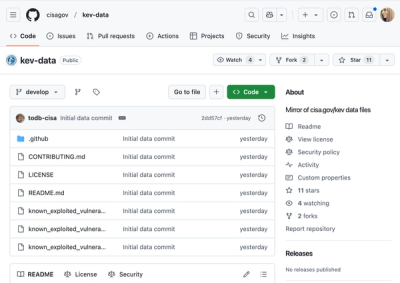

CISA's KEV data is now on GitHub, offering easier access, API integration, commit history tracking, and automated updates for security teams and researchers.