puppeteer-ecommerce-scraper

Client-side rendering approach for extracting product data from ecommerce websites with pagination using undetectable puppeteer-cluster

This is a flexible web scraper for extracting product data from various e-commerce websites. Install it with:

npm i puppeteer-ecommerce-scraper

The examples folder contains my example scripts for different e-commerce websites. You can use them as a starting point for your own scraping tasks.

For example, the tiki1.js script configures the scraper to navigate through the Android and iPhone product pages of Tiki (a Vietnamese e-commerce website) and extract each product title, its price, and image URL from them, using a consistent user profile and a proxy server.

This script uses only 2 functions: clusterWrapper, which wraps the scraping process, and scrapeWithPagination, an end-to-end function that scrapes, paginates, and saves the product data from the website automatically. If you want a more customized scraping process, use the other functions provided in the different modules. I have also provided scripts with the suffix 2 (such as tiki2.js) to demonstrate how to use these functions to scrape the same website.
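As a rough illustration of that two-function flow, here is a minimal sketch. It is not the actual tiki1.js script. The URL and CSS selectors are placeholders, and three things are assumptions rather than documented behavior: that the package's exports can be destructured by name, that func is called as func(page, queueData), and that extractFunc receives a Puppeteer ElementHandle for each product.

```javascript
// Placeholder URL and selectors: replace them with the target site's real markup.
function buildConfigs(keyword) {
  return {
    scrapingConfig: {
      url: `https://shop.example.com/search?q=${encodeURIComponent(keyword)}`,
      productSelector: '.product-item',
      filePath: '', // empty: let the scraper derive a file name from the URL
      fileHeader: 'title,price,image',
    },
    paginationConfig: {
      nextPageSelector: '.pagination .next',
      disabledSelector: '.pagination .next.disabled',
      sleep: 1000,
      maxPages: 3,
    },
  };
}

async function main() {
  // Required lazily so buildConfigs can be used without the package installed.
  // Assumption: the exports can be destructured by these names.
  const { clusterWrapper, scraper } = require('puppeteer-ecommerce-scraper');

  await clusterWrapper({
    // Assumption: func is called as func(page, queueData).
    func: async (page, keyword) => {
      await scraper.scrapeWithPagination({
        page,
        // Assumption: extractFunc receives one ElementHandle per product.
        extractFunc: async (product) => ({
          title: await product.$eval('.title', (el) => el.textContent.trim()),
          price: await product.$eval('.price', (el) => el.textContent.trim()),
          image: await product.$eval('img', (el) => el.src),
        }),
        ...buildConfigs(keyword),
      });
    },
    queueEntries: ['android', 'iphone'],
    useProfile: true,
  });
}

// main().catch(console.error); // uncomment once the package is installed
```

Keeping the configuration in a separate function makes it easy to reuse the same selectors for every queue entry while only the keyword changes.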

The functions and utilities of the scraper are divided into 3 modules: clusterWrapper, scraper, and helpers. They are exported in src/index.js in the following order:

- clusterWrapper
- scraper: { scrapeWithPagination, autoScroll, saveProduct, navigatePage }
- helpers: { isFileExists, createFile, getWebName, url2FileName, getChromeProfilePath, getChromeExecutablePath }

clusterWrapper

async function clusterWrapper({

func, // Function to be executed on each queue entry

queueEntries, // Array or Object of queue entries. These can be the keywords you want to scrape.

proxyEndpoint = '', // Must be in the form of http://username:password@host:port

monitor = false, // Whether to monitor the progress of the scraping process

useProfile = false, // Whether to use a consistent user profile

otherConfigs = {}, // Other configurations for Puppeteer

})

This function uses the puppeteer-cluster to launch multiple instances of the browser at the same time (maximum 5) and set up different web scraping tasks to execute for each queue entry with a default timeout of 10 seconds before closing the cluster. Here, the scraper uses several techniques to avoid detection:

- With a consistent user profile (the useProfile option), the scraper can appear as a returning user rather than a new session each time. This option can also be beneficial when solving CAPTCHAs, since a CAPTCHA solved once may not have to be solved again next time.

You can run the test.js script to see the bot detection result when using this wrapper. Each task loads a page, gets the IP information, and then calls the func function with the Puppeteer page and queue data from the queueEntries.
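Since proxyEndpoint must follow the http://username:password@host:port form, it can help to check the value before launching the cluster. The helper below is a hypothetical sketch and not part of the package. It only validates the shape and splits out the parts. How the package itself consumes the endpoint is not shown in this document.

```javascript
// Hypothetical helper (not exported by the package): validates the
// http://username:password@host:port shape and returns its parts.
function parseProxyEndpoint(proxyEndpoint) {
  const match = /^http:\/\/([^:@\/]+):([^@\/]+)@([^:@\/]+):(\d+)$/.exec(proxyEndpoint);
  if (!match) {
    throw new Error('proxyEndpoint must look like http://username:password@host:port');
  }
  const [, username, password, host, port] = match;
  return {
    server: `http://${host}:${port}`, // address without credentials
    username,
    password,
  };
}

const parsed = parseProxyEndpoint('http://user:secret@127.0.0.1:8080');
console.log(parsed.server); // http://127.0.0.1:8080
```

Failing early on a malformed endpoint avoids launching several browser instances only to have every task fail on its first request.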

scraper.scrapeWithPagination

async function scrapeWithPagination({

page, // Puppeteer page object, which represents a single tab in Chrome

extractFunc, // Function to extract product info from product DOM

scrapingConfig = { // Configuration for scraping process

url: '', // URL of the webpage to scrape

productSelector: '', // CSS selector for product elements

filePath: '', // File path to save the scraped data. If not provided, the function will generate one based on the URL

fileHeader: '' // Header for the file

},

paginationConfig = { // Configuration for handling pagination

nextPageSelector: '', // CSS selector for the "next page" button

disabledSelector: '', // CSS selector for the disabled state of the "next page" button (to detect the end of pagination)

sleep: 1000, // Delay the execution to allow for page loading or other asynchronous operations to complete

maxPages: 0 // Maximum number of pages to scrape (0 for unlimited)

},

scrollConfig = { // Configuration for auto-scrolling

scrollDelay: NaN, // Delay between scrolls

scrollStep: NaN, // The amount (size) to scroll each time

numOfScroll: 1, // Number of scrolls to perform

direction: 'both' // Scroll direction ('up', 'down', 'both')

},

})

👉 return { products, totalPages, scrapingConfig, paginationConfig, scrollConfig }

The scraper can navigate through multiple pages of results using this function:

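- It starts from the provided url and uses the nextPageSelector and disabledSelector from the paginationConfig to identify the "next page" button on the webpage and clicks it to load the next set of results.
- It continues until the last page is reached (detected by disabledSelector) or a maximum limit (maxPages) has been reached.
- On each page, it scrolls according to the scrollConfig setup. This is done to ensure that all product elements are fully rendered and can be scraped.
- Each product's information is extracted with extractFunc and then saved to the file with saveProduct.
- The pagination behavior is controlled by the paginationConfig parameters.

scraper.autoScroll

function autoScroll(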

delay, // Delay between scrolls

scrollStep, // The amount (size) to scroll each time

direction // Scroll direction ('up', 'down', 'both')

)

This function automatically scrolls a Puppeteer page object in the specified direction (up, down, or both) by the specified scrollStep amount. It continues to scroll until the end of the page is reached, waiting for the specified delay between each scroll.
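The scrolling behavior described here can be sketched without a browser. The version below is an illustration only and is not the package's code. It takes an extra, hypothetical view argument (an object with a scroll offset y and a maximum offset max) in place of a real page. For 'both', it assumes scrolling goes down first and then back up.

```javascript
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Sketch: `view` is a hypothetical stand-in for the browser viewport.
async function autoScrollSketch(view, delay, scrollStep, direction = 'down') {
  if (!(scrollStep > 0)) throw new Error('scrollStep must be a positive number');

  const scrollTo = async (target, step) => {
    while (view.y !== target) {
      // Clamp so the final step lands exactly on the page edge.
      view.y = Math.min(view.max, Math.max(0, view.y + step));
      view.steps += 1;
      await sleep(delay); // wait between scrolls so lazy content can load
    }
  };

  if (direction === 'down' || direction === 'both') await scrollTo(view.max, scrollStep);
  if (direction === 'up' || direction === 'both') await scrollTo(0, -scrollStep);
}

// Usage: a 250px-tall scrollable area, scrolled 100px at a time.
const demoView = { y: 0, max: 250, steps: 0 };
autoScrollSketch(demoView, 0, 100, 'down').then(() => console.log(demoView.y));
```

In a real page the same loop would presumably run in the browser context and use the window's scroll position in place of view.y.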

scraper.saveProduct

function saveProduct(

products, // Array of product information

productInfo, // Object containing information about the product

filePath // File path to save the scraped data

)

If all productInfo's values are truthy, the function will push them into the products array and append (save) them to a file at the specified filePath.
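The "all values truthy" rule can be illustrated with a short sketch. This is not the package's implementation. The on-disk format (one comma-separated line per product), the boolean return value, and pushing the whole productInfo object into the array are all assumptions made for the example.

```javascript
const fs = require('fs');

// Sketch only: file format and return value are assumptions.
function saveProductSketch(products, productInfo, filePath) {
  const values = Object.values(productInfo);
  // Skip incomplete products: every field must be truthy (non-empty, non-null).
  const complete = values.length > 0 && values.every(Boolean);
  if (!complete) return false;

  products.push(productInfo);
  fs.appendFileSync(filePath, values.join(',') + '\n'); // append, never overwrite
  return true;
}
```

With this rule, a product whose price failed to render (an empty string) is dropped instead of being written as a partial row.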

scraper.navigatePage

async function navigatePage({

page, // Puppeteer page object

nextPageSelector, // CSS selector for the "next page" button

disabledSelector, // CSS selector for the disabled state of the "next page" button (to detect the end of pagination)

sleep = 1000 // Delay the execution to allow for page loading or other asynchronous operations to complete

})

👉 return Boolean indicating whether the navigation was successful or if there is a "next page".

This function uses disabledSelector to check whether the current page is the last one. If there is a "next page", it waits for the current navigation to complete and then clicks the nextPageSelector. Otherwise, it returns false, indicating that there is no "next page" to navigate to. The calling code can use this return value to decide whether to continue scraping or stop.
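A calling loop built on that boolean might look like the sketch below. It is self-contained: makeFakeNavigator is a hypothetical stub standing in for navigatePage, and scrapePage stands in for whatever per-page extraction the caller performs. The maxPages handling (0 meaning unlimited) follows the paginationConfig description.

```javascript
// Stub standing in for scraper.navigatePage: resolves to true while a next page exists.
function makeFakeNavigator(lastPage) {
  let current = 1;
  return async function navigatePage() {
    if (current >= lastPage) return false; // the "next page" button is disabled
    current += 1;
    return true;
  };
}

// Sketch of a caller: scrape, then advance until there is no next page
// or the optional page limit is reached (maxPages = 0 means unlimited).
async function scrapeAllPages({ scrapePage, navigatePage, maxPages = 0 }) {
  const products = [];
  let totalPages = 0;
  while (true) {
    totalPages += 1;
    products.push(...(await scrapePage(totalPages)));
    if (maxPages > 0 && totalPages >= maxPages) break;
    if (!(await navigatePage())) break;
  }
  return { products, totalPages };
}

// Usage with the stub: a site with 3 result pages, one product per page.
scrapeAllPages({
  scrapePage: async (n) => [`product-from-page-${n}`],
  navigatePage: makeFakeNavigator(3),
}).then(({ totalPages }) => console.log(totalPages)); // 3
```

Checking the page limit before navigating avoids clicking through to a page that will never be scraped.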

helpers

- isFileExists(filePath): Checks if a file exists at the given filePath. It returns a boolean value indicating whether the file exists.
- createFile(filePath, header = ''): Creates a new file at the given filePath with the provided header as the first line. If the file already exists, it will not be overwritten.
- getWebName(url): Extracts the website name from a URL.
- url2FileName(url): Converts a URL into a filename-safe string by removing invalid characters.

This scraper is designed for educational purposes only. The user is responsible for complying with the terms of service of the websites being scraped. The scraper should be used responsibly and respectfully to avoid overloading the websites with requests and to prevent IP blocking or other forms of retaliation.

Did you know?

Socket for GitHub automatically highlights issues in each pull request and monitors the health of all your open source dependencies. Discover the contents of your packages and block harmful activity before you install or update your dependencies.

Security News

PyPI now allows maintainers to archive projects, improving security and helping users make informed decisions about their dependencies.

Research

Security News

Malicious npm package postcss-optimizer delivers BeaverTail malware, targeting developer systems; similarities to past campaigns suggest a North Korean connection.

Security News

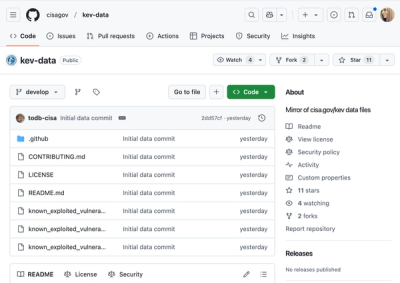

CISA's KEV data is now on GitHub, offering easier access, API integration, commit history tracking, and automated updates for security teams and researchers.