Security News

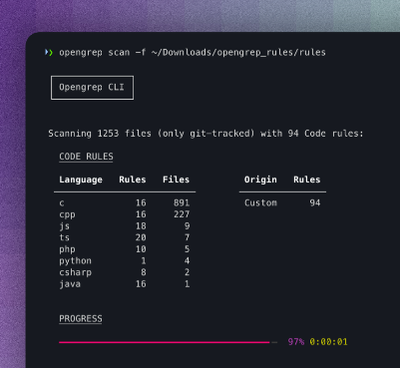

Opengrep Emerges as Open Source Alternative Amid Semgrep Licensing Controversy

Opengrep forks Semgrep to preserve open source SAST in response to controversial licensing changes.

Simplify. Unify. Amplify.

| Feature | AutoLLM | LangChain | LlamaIndex | LiteLLM |
|---|---|---|---|---|
| 100+ LLMs | ✅ | ✅ | ✅ | ✅ |
| Unified API | ✅ | ❌ | ❌ | ✅ |
| 20+ Vector Databases | ✅ | ✅ | ✅ | ❌ |
| Cost Calculation (100+ LLMs) | ✅ | ❌ | ❌ | ✅ |
| 1-Line RAG LLM Engine | ✅ | ❌ | ❌ | ❌ |
| 1-Line FastAPI | ✅ | ❌ | ❌ | ❌ |

easily install the autollm package with pip in a Python>=3.8 environment:

pip install autollm

for built-in data readers (github, pdf, docx, ipynb, epub, mbox, websites, etc.), install with (the quotes keep shells such as zsh from expanding the brackets):

pip install "autollm[readers]"

video tutorials:

blog posts:

colab notebooks:

>>> from autollm import AutoQueryEngine, read_files_as_documents
>>> documents = read_files_as_documents(input_dir="path/to/documents")
>>> query_engine = AutoQueryEngine.from_defaults(documents)
>>> response = query_engine.query(
... "Why did SafeVideo AI develop this project?"
... )
>>> response.response
"Because they wanted to deploy rag based llm apis in no time!"

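A RAG query engine like the one above embeds the question, retrieves the most similar document chunks, and hands them to the LLM as context. The sketch below illustrates only the retrieval step, using cosine similarity over hand-written toy vectors; the helper names `cosine` and `top_k_chunks` and the 3-dimensional "embeddings" are hypothetical stand-ins for a real embedding model and vector store, not autollm's internals.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def top_k_chunks(query_vec, chunks, k=3):
    """Return the texts of the k chunks most similar to query_vec.

    `chunks` is a list of (text, vector) pairs. This is a linear scan;
    a real vector store would use an index instead.
    """
    ranked = sorted(chunks, key=lambda c: cosine(query_vec, c[1]), reverse=True)
    return [text for text, _ in ranked[:k]]

# Hypothetical toy "embeddings", for illustration only.
chunks = [
    ("SafeVideo AI builds self-hosted AI APIs.", [0.9, 0.1, 0.0]),
    ("AutoFastAPI serves a query engine over HTTP.", [0.1, 0.9, 0.1]),
    ("autollm defaults to LanceDB as the vector store.", [0.0, 0.2, 0.9]),
]
query = [0.8, 0.2, 0.1]
print(top_k_chunks(query, chunks, k=1))
```

The `similarity_top_k` setting in the next example plays the role of `k` here: it bounds how many retrieved chunks are passed to the LLM.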
>>> from autollm import AutoQueryEngine
>>> query_engine = AutoQueryEngine.from_defaults(
... documents=documents,
... llm_model="gpt-3.5-turbo",
... llm_max_tokens=256,
... llm_temperature=0.1,
... system_prompt='...',
... query_wrapper_prompt='...',
... enable_cost_calculator=True,
... embed_model="huggingface/BAAI/bge-large-zh",
... chunk_size=512,
... chunk_overlap=64,
... context_window=4096,
... similarity_top_k=3,
... response_mode="compact",
... structured_answer_filtering=False,
... vector_store_type="LanceDBVectorStore",
... lancedb_uri="./lancedb",
... lancedb_table_name="vectors",
... exist_ok=True,
... overwrite_existing=False,
... )

>>> response = query_engine.query("Who is SafeVideo AI?")
>>> print(response.response)
A startup that provides self-hosted AI APIs for companies!

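The chunk_size and chunk_overlap settings above control how documents are split before embedding. As a rough sketch, assuming chunks are plain fixed-size token windows, each new chunk starts chunk_size - chunk_overlap tokens after the previous one, so with the values above the stride is 512 - 64 = 448 tokens. The helper `split_with_overlap` is hypothetical; LlamaIndex's real splitters also respect sentence boundaries and count tokens with a tokenizer, so actual chunks will differ.

```python
def split_with_overlap(tokens, chunk_size=512, chunk_overlap=64):
    """Split a token list into windows of chunk_size sharing chunk_overlap tokens.

    Hypothetical illustration only; not autollm's or LlamaIndex's splitter.
    """
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be in [0, chunk_size)")
    stride = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(tokens), stride):
        chunks.append(tokens[start:start + chunk_size])
        # Stop once a window reaches the end so the tail is not emitted twice.
        if start + chunk_size >= len(tokens):
            break
    return chunks

words = "one two three four five six seven eight nine ten".split()
for chunk in split_with_overlap(words, chunk_size=4, chunk_overlap=1):
    print(chunk)
```

Each printed window repeats the last word of the previous one, which is what lets a sentence cut at a chunk boundary still appear intact in at least one chunk.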
>>> import uvicorn
>>> from autollm import AutoFastAPI
>>> app = AutoFastAPI.from_query_engine(query_engine)
>>> uvicorn.run(app, host="0.0.0.0", port=8000)
INFO: Started server process [12345]
INFO: Waiting for application startup.
INFO: Application startup complete.
INFO: Uvicorn running on http://0.0.0.0:8000

>>> import uvicorn
>>> from autollm import AutoFastAPI
>>> app = AutoFastAPI.from_query_engine(
... query_engine,
... api_title='...',
... api_description='...',
... api_version='...',
... api_term_of_service='...',
... )
>>> uvicorn.run(app, host="0.0.0.0", port=8000)
INFO: Started server process [12345]
INFO: Waiting for application startup.
INFO: Application startup complete.
INFO: Uvicorn running on http://0.0.0.0:8000

huggingface - inference endpoint example:

>>> import os
>>> from autollm import AutoQueryEngine
>>> os.environ["HUGGINGFACE_API_KEY"] = "huggingface_api_key"
>>> llm_model = "huggingface/WizardLM/WizardCoder-Python-34B-V1.0"
>>> llm_api_base = "https://my-endpoint.huggingface.cloud"
>>> AutoQueryEngine.from_defaults(
... documents='...',
... llm_model=llm_model,
... llm_api_base=llm_api_base,
... )

ollama example:

>>> from autollm import AutoQueryEngine
>>> llm_model = "ollama/llama2"
>>> llm_api_base = "http://localhost:11434"
>>> AutoQueryEngine.from_defaults(
... documents='...',
... llm_model=llm_model,
... llm_api_base=llm_api_base,
... )

microsoft azure - openai example:

>>> import os
>>> from autollm import AutoQueryEngine
>>> os.environ["AZURE_API_KEY"] = ""
>>> os.environ["AZURE_API_BASE"] = ""
>>> os.environ["AZURE_API_VERSION"] = ""
>>> llm_model = "azure/<your_deployment_name>"
>>> AutoQueryEngine.from_defaults(
... documents='...',
... llm_model=llm_model,
... )
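The provider examples read credentials from environment variables, and an empty placeholder such as the Azure values above is easy to overlook. A small pre-flight check can report unset or empty variables before the query engine is built. The helper `missing_env_vars` below is hypothetical and not part of autollm; the variable names are the ones used in the Azure example.

```python
import os

def missing_env_vars(names, environ=None):
    """Return the names that are unset or empty in `environ` (defaults to os.environ)."""
    env = os.environ if environ is None else environ
    return [name for name in names if not env.get(name)]

AZURE_VARS = ["AZURE_API_KEY", "AZURE_API_BASE", "AZURE_API_VERSION"]

missing = missing_env_vars(AZURE_VARS)
if missing:
    print("Set these before building the query engine:", ", ".join(missing))
```

Passing an explicit dict as `environ` makes the check easy to test without touching the real process environment.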

google - vertexai example:

>>> import os
>>> from autollm import AutoQueryEngine
>>> os.environ["VERTEXAI_PROJECT"] = "hardy-device-38811" # Your Project ID
>>> os.environ["VERTEXAI_LOCATION"] = "us-central1" # Your Location
>>> llm_model = "text-bison@001"
>>> AutoQueryEngine.from_defaults(
... documents='...',
... llm_model=llm_model,
... )

aws bedrock - claude v2 example:

>>> import os
>>> from autollm import AutoQueryEngine
>>> os.environ["AWS_ACCESS_KEY_ID"] = ""
>>> os.environ["AWS_SECRET_ACCESS_KEY"] = ""
>>> os.environ["AWS_REGION_NAME"] = ""
>>> llm_model = "anthropic.claude-v2"
>>> AutoQueryEngine.from_defaults(
... documents='...',
... llm_model=llm_model,
... )
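The llm_model strings in these examples appear to follow LiteLLM's naming convention, where many providers are selected with a provider/model prefix (huggingface/..., ollama/..., azure/...), while names such as text-bison@001 and anthropic.claude-v2 carry no prefix. The hypothetical helper below only illustrates the string format: it splits at the first slash, so a Hugging Face model id keeps its own slash. LiteLLM's real routing also recognizes many unprefixed model names, so this is not how provider selection actually works.

```python
def split_provider(llm_model):
    """Split a 'provider/model' string at the first slash.

    Returns (provider, model); provider is None when there is no prefix.
    Hypothetical illustration of the naming format, not LiteLLM's routing.
    """
    provider, sep, model = llm_model.partition("/")
    if not sep:
        return None, llm_model
    return provider, model

for name in [
    "huggingface/WizardLM/WizardCoder-Python-34B-V1.0",
    "ollama/llama2",
    "azure/<your_deployment_name>",
    "text-bison@001",
]:
    print(split_provider(name))
```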

🌟 Pro tip: autollm defaults to LanceDB as the vector store: it's setup-free, serverless, and 100x more cost-effective!

qdrant example:

>>> from autollm import AutoQueryEngine
>>> import qdrant_client
>>> vector_store_type = "QdrantVectorStore"
>>> client = qdrant_client.QdrantClient(
... url="http://<host>:<port>",
... api_key="<qdrant-api-key>"
... )
>>> collection_name = "quickstart"
>>> AutoQueryEngine.from_defaults(
... documents='...',
... vector_store_type=vector_store_type,
... client=client,
... collection_name=collection_name,
... )

>>> from autollm import AutoServiceContext
>>> service_context = AutoServiceContext.from_defaults(enable_cost_calculator=True)

# Example verbose output after query
Embedding Token Usage: 7
LLM Prompt Token Usage: 1482
LLM Completion Token Usage: 47
LLM Total Token Cost: $0.002317
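The reported cost is the token counts multiplied by per-token prices. As a worked check, assuming gpt-3.5-turbo's late-2023 list prices of $0.0015 per 1K prompt tokens and $0.002 per 1K completion tokens (prices change, so treat both numbers as assumptions), the prompt and completion tokens alone reproduce the $0.002317 total: 1482 × 0.0015 / 1000 + 47 × 0.002 / 1000 = 0.002223 + 0.000094. The function name `llm_cost` is hypothetical.

```python
# Assumed USD prices per 1K tokens (gpt-3.5-turbo, late 2023); check current pricing.
PROMPT_PRICE_PER_1K = 0.0015
COMPLETION_PRICE_PER_1K = 0.002

def llm_cost(prompt_tokens, completion_tokens,
             prompt_price=PROMPT_PRICE_PER_1K,
             completion_price=COMPLETION_PRICE_PER_1K):
    """Cost in USD of one LLM call, given token counts and per-1K-token prices."""
    return (prompt_tokens * prompt_price + completion_tokens * completion_price) / 1000

cost = llm_cost(1482, 47)
print(f"LLM Total Token Cost: ${cost:.6f}")  # prints: LLM Total Token Cost: $0.002317
```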

>>> from autollm import AutoFastAPI
>>> app = AutoFastAPI.from_config(config_path, env_path)

Here, config_path and env_path should be replaced by the paths to your configuration and environment files.

After creating your FastAPI app, run the following command in your terminal to get it up and running (this assumes the app object is named app and lives in main.py):

uvicorn main:app
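The environment file passed as env_path typically holds secrets such as provider API keys. Assuming it uses the common dotenv KEY=VALUE format, the sketch below shows what such a file carries and how it maps to environment variables. The parser `parse_env_file` is a hypothetical minimal helper, not what autollm uses internally; it skips comments and blank lines and strips one pair of matching quotes, but ignores export prefixes, escapes, and multi-line values.

```python
def parse_env_file(text):
    """Parse dotenv-style KEY=VALUE lines into a dict (minimal, hypothetical)."""
    env = {}
    for raw in text.splitlines():
        line = raw.strip()
        # Skip blank lines, comments, and lines without an '='.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        key, value = key.strip(), value.strip()
        # Strip one pair of matching surrounding quotes.
        if len(value) >= 2 and value[0] == value[-1] and value[0] in "\"'":
            value = value[1:-1]
        env[key] = value
    return env

example = """
# credentials for the LLM provider (placeholder values)
OPENAI_API_KEY="<your-key>"
AZURE_API_VERSION=<your-api-version>
"""
print(parse_env_file(example))
```

To make the values visible to client libraries, the parsed dict could be merged with os.environ.update(parse_env_file(text)).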

switching from LlamaIndex? We've got you covered.

>>> from llama_index import StorageContext, ServiceContext, VectorStoreIndex
>>> from llama_index.vector_stores import LanceDBVectorStore
>>> from autollm import AutoQueryEngine
>>> vector_store = LanceDBVectorStore(uri="./.lancedb")
>>> storage_context = StorageContext.from_defaults(vector_store=vector_store)
>>> service_context = ServiceContext.from_defaults()
>>> index = VectorStoreIndex.from_documents(
... documents=documents,
... storage_context=storage_context,
... service_context=service_context,
... )
>>> query_engine = AutoQueryEngine.from_instances(index)

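Q: Can I use this for commercial projects?

A: Yes. AutoLLM is licensed under the GNU Affero General Public License (AGPL 3.0), which allows commercial use as long as you meet its conditions, for example making the source code of a modified version available to users who interact with it over a network. Contact us for more information.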

our roadmap outlines upcoming features and integrations to make autollm the most extensible and powerful base package for large language model applications.

- 1-line Gradio app creation and deployment
- Budget-based email notification
- Automated LLM evaluation
- Add more quickstart apps on pdf-chat, documentation-chat, academic-paper-analysis, patent-analysis and more!

autollm is available under the GNU Affero General Public License (AGPL 3.0).

for more information, support, or questions, please contact:

love autollm? star the repo or contribute and help us make it even better! see our contributing guidelines for more information.


Ship RAG-based LLM web APIs in seconds.
